AD-MIR: Bridging the Gap from Perception to Persuasion in Advertising Video Understanding via Structured Reasoning

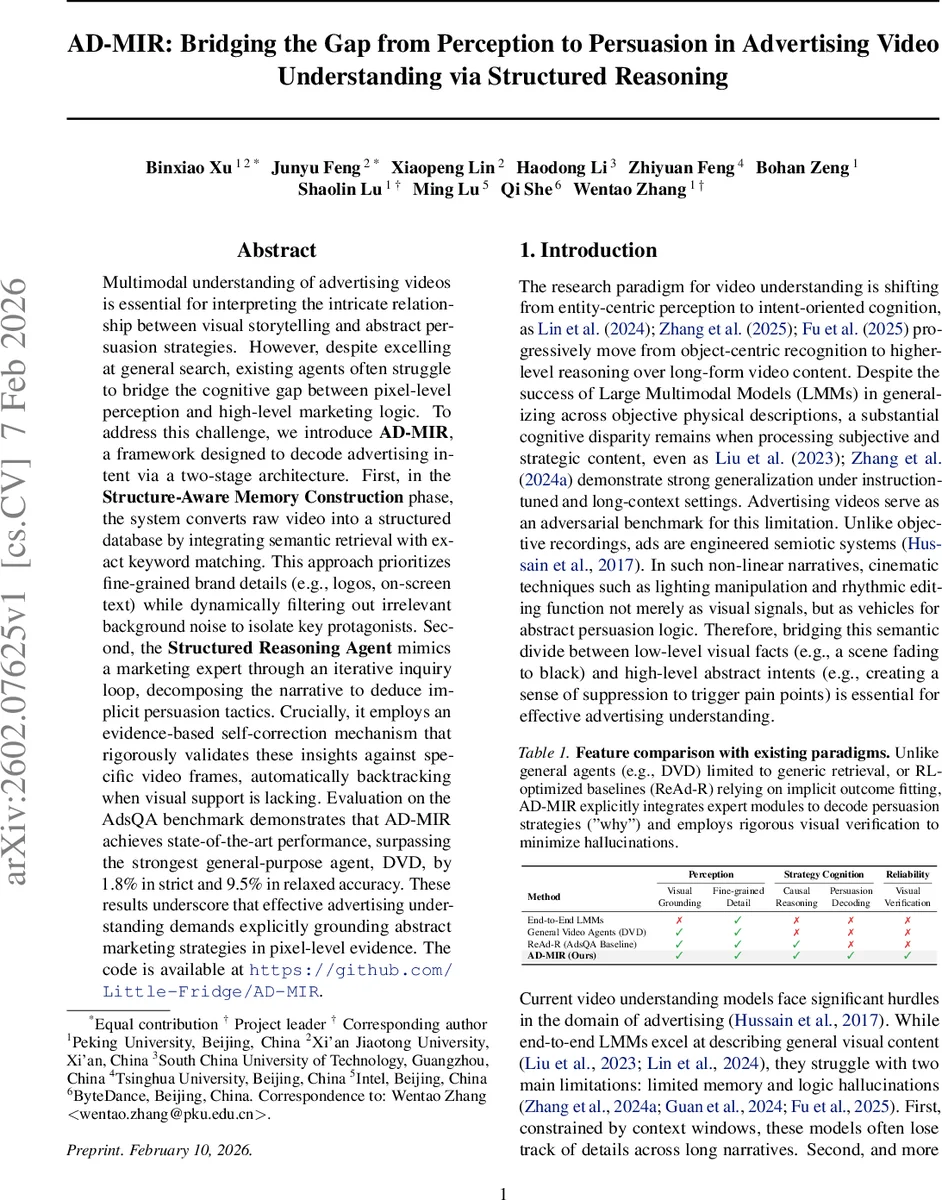

Multimodal understanding of advertising videos is essential for interpreting the intricate relationship between visual storytelling and abstract persuasion strategies. However, despite excelling at general search, existing agents often struggle to bridge the cognitive gap between pixel-level perception and high-level marketing logic. To address this challenge, we introduce AD-MIR, a framework designed to decode advertising intent via a two-stage architecture. First, in the Structure-Aware Memory Construction phase, the system converts raw video into a structured database by integrating semantic retrieval with exact keyword matching. This approach prioritizes fine-grained brand details (e.g., logos, on-screen text) while dynamically filtering out irrelevant background noise to isolate key protagonists. Second, the Structured Reasoning Agent mimics a marketing expert through an iterative inquiry loop, decomposing the narrative to deduce implicit persuasion tactics. Crucially, it employs an evidence-based self-correction mechanism that rigorously validates these insights against specific video frames, automatically backtracking when visual support is lacking. Evaluation on the AdsQA benchmark demonstrates that AD-MIR achieves state-of-the-art performance, surpassing the strongest general-purpose agent, DVD, by 1.8% in strict and 9.5% in relaxed accuracy. These results underscore that effective advertising understanding demands explicitly grounding abstract marketing strategies in pixel-level evidence. The code is available at https://github.com/Little-Fridge/AD-MIR.

💡 Research Summary

The paper introduces AD‑MIR, a novel multimodal reasoning framework specifically designed for understanding advertising videos, which are characterized by complex visual storytelling and abstract persuasion tactics. Existing large multimodal models (LMMs) and general‑purpose video agents excel at describing concrete visual content but struggle to bridge the gap between low‑level perception (what is shown) and high‑level intent (why it is shown). AD‑MIR addresses this by employing a two‑stage architecture.

In the first stage, Structure‑Aware Memory Construction, raw video streams are segmented into temporal clips. For each clip, a vision‑language model generates a detailed visual narrative while Whisper extracts timestamped audio transcripts. To retrieve relevant clips for a given query, the authors propose a hybrid scoring function that linearly combines dense semantic similarity (cosine similarity of text embeddings) with exact keyword matching, weighted by a hyperparameter β. This hybrid approach preserves the ability of dense vectors to capture abstract concepts while ensuring precise localization of brand names, slogans, prices, and other marketing cues that are often missed by pure dense retrieval. The resulting multimodal metadata—including OCR results, object detections, and audio cues—is stored in a hierarchical database. Additionally, a static subject registry S_reg is built using GPT‑4o; each entry contains a rich semantic profile of a character (e.g., “middle‑aged doctor with a stethoscope, looking anxious”), not just bounding boxes.
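The hybrid scoring idea described above can be illustrated with a minimal sketch. The summary only states that dense similarity and exact keyword matching are combined linearly with weight β, so the convex form `beta * dense + (1 - beta) * keyword`, the token-overlap definition of the keyword score, and all names here (`hybrid_score`, `retrieve`, the toy clips and two-dimensional embeddings) are assumptions for illustration, not the authors' implementation.

```python
import math
import re


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def keyword_score(query, text):
    """Assumed keyword term: fraction of distinct query tokens found verbatim in the text."""
    query_tokens = set(re.findall(r"\w+", query.lower()))
    text_tokens = set(re.findall(r"\w+", text.lower()))
    if not query_tokens:
        return 0.0
    return len(query_tokens & text_tokens) / len(query_tokens)


def hybrid_score(query, query_vec, clip, beta=0.5):
    """Assumed linear combination: beta weights the dense term, (1 - beta) the keyword term."""
    dense = cosine(query_vec, clip["embedding"])
    sparse = keyword_score(query, clip["text"])
    return beta * dense + (1.0 - beta) * sparse


def retrieve(query, query_vec, clips, beta=0.5, top_k=3):
    """Return the top_k clips ranked by hybrid score, best first."""
    ranked = sorted(
        clips,
        key=lambda clip: hybrid_score(query, query_vec, clip, beta),
        reverse=True,
    )
    return ranked[:top_k]


# Toy data: hypothetical clip descriptions with made-up 2-D embeddings.
clips = [
    {"id": "clip_0", "text": "A runner ties her shoes at sunrise", "embedding": [0.9, 0.1]},
    {"id": "clip_1", "text": "Close-up of the Acme logo and the price tag", "embedding": [0.6, 0.8]},
]
query = "Acme price"
query_vec = [1.0, 0.0]  # stand-in for a real text embedding of the query

dense_only = retrieve(query, query_vec, clips, beta=1.0, top_k=1)
keyword_only = retrieve(query, query_vec, clips, beta=0.0, top_k=1)
```

In this toy setup the dense term alone ranks `clip_0` first, while the keyword term alone ranks `clip_1` first because it contains both query tokens. That is the kind of brand-specific cue the summary says pure dense retrieval tends to miss.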

The second stage, Structured Reasoning Agent, follows a ReAct‑style “Think‑Act‑Observe” loop. Upon receiving a natural‑language query Q, the controller first constructs a context‑anchored query A_anchor by concatenating Q with a global narrative summary obtained via a global browsing tool. The “Act” step dynamically selects between perception tools (frame inspection, OCR, object detection) for factual questions and a Communication Expert tool for strategic questions that require marketing psychology, rhetorical analysis, or emotional arc detection. Each tool returns concrete evidence such as frame timestamps, OCR text, or inferred persuasive strategies. In the “Observe” step, the agent validates high‑level hypotheses against this visual evidence. If a hypothesis lacks support, the system automatically backtracks, revises its reasoning, and re‑queries the appropriate tools. This self‑correction mechanism is formalized as a policy π(aₜ|Hₜ₋₁, oₜ; Θ) within a Partially Observable Markov Decision Process (POMDP), implemented via prompt‑based in‑context learning rather than gradient updates, allowing the agent to maintain long‑term context across lengthy ads.
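The Think-Act-Observe loop with evidence-based backtracking might be sketched as follows. This is a simplified illustration under stated assumptions: the real agent is described as a prompted LLM policy, whereas here the router is a hand-written keyword rule, the tools are stubs, and every name (`Observation`, `AgentState`, `route`, `run_agent`, `stub_expert`, `stub_perception`) is hypothetical. The order in which Q and the summary are concatenated is also an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Observation:
    claim: str            # fact or hypothesis returned by a tool
    frames: List[float]   # timestamps (seconds) that visually support the claim


@dataclass
class AgentState:
    history: List[str] = field(default_factory=list)   # stand-in for the interaction history H
    rejected: List[str] = field(default_factory=list)  # hypotheses dropped for lack of evidence


Tool = Callable[[str, List[str]], Observation]


def route(query: str) -> str:
    """Toy stand-in for the LLM policy: strategic cues go to the expert tool."""
    strategic_cues = ("why", "persuade", "strategy", "appeal")
    is_strategic = any(cue in query.lower() for cue in strategic_cues)
    return "communication_expert" if is_strategic else "perception"


def run_agent(query: str, summary: str, tools: Dict[str, Tool],
              max_steps: int = 4) -> Tuple[Optional[str], AgentState]:
    """Loop until a claim is backed by at least one frame, or steps run out."""
    anchored = f"{query}\n[Context] {summary}"  # context-anchored query (assumed format)
    state = AgentState()
    for _ in range(max_steps):
        tool_name = route(query)                           # Think: choose a tool
        obs = tools[tool_name](anchored, state.rejected)   # Act: call it
        state.history.append(f"{tool_name} -> {obs.claim}")
        if obs.frames:                                     # Observe: claim is grounded
            return obs.claim, state
        state.rejected.append(obs.claim)                   # Backtrack: discard and retry
    return None, state


def stub_expert(anchored_query: str, rejected: List[str]) -> Observation:
    """Stub: proposes hypotheses in a fixed order, skipping rejected ones."""
    candidates = [
        Observation("fear appeal", frames=[]),
        Observation("celebrity endorsement", frames=[12.5, 14.0]),
    ]
    for candidate in candidates:
        if candidate.claim not in rejected:
            return candidate
    return Observation("no hypothesis left", frames=[])


def stub_perception(anchored_query: str, rejected: List[str]) -> Observation:
    """Stub: pretends a logo was detected at 3.0 s."""
    return Observation("logo visible", frames=[3.0])


TOOLS = {"communication_expert": stub_expert, "perception": stub_perception}

answer, state = run_agent(
    "Why does the ad feature a famous athlete?",
    "An athlete trains, then drinks the product.",
    TOOLS,
)
fact, fact_state = run_agent("What brand is shown?", "An athlete trains.", TOOLS)
```

In this sketch the first hypothesis has no supporting frames, so it is recorded in `state.rejected` and the loop re-queries the expert, which then returns a grounded alternative. The factual question is routed to the perception stub and resolves in one step.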

Experiments are conducted on the AdsQA benchmark, a question‑answer dataset for advertising videos. AD‑MIR achieves 71.2% strict accuracy and 84.5% relaxed accuracy, surpassing the strongest baseline, the Deep Video Discovery (DVD) agent, by 1.8 and 9.5 percentage points, respectively. The gains are especially pronounced on persuasion‑oriented queries, where AD‑MIR reduces hallucinations by roughly 68% compared to DVD. Ablation studies show that removing the hybrid retrieval component lowers performance by 3–5 percentage points, and disabling the evidence‑based self‑correction loop leads to a 12‑percentage‑point decline on strategic questions.

The authors highlight three key contributions: (1) a dual‑track memory construction that fuses semantic embeddings with exact keyword matching, (2) a query‑driven tool selection mechanism that routes factual and strategic inquiries to appropriate modules, and (3) an evidence‑grounded reasoning loop that enforces pixel‑level verification of abstract marketing hypotheses. Limitations include reliance on a predefined toolbox (making adaptation to novel ad formats such as AR or interactive media non‑trivial) and the need for further efficiency studies on real‑time streaming scenarios.

In summary, AD‑MIR demonstrates that integrating structured multimodal indexing with domain‑specific reasoning and rigorous visual verification can effectively close the perception‑to‑intent gap in advertising video understanding, opening avenues for automated ad analysis, creative feedback, and marketing intelligence applications.
