Escaping Spectral Bias without Backpropagation: Fast Implicit Neural Representations with Extreme Learning Machines

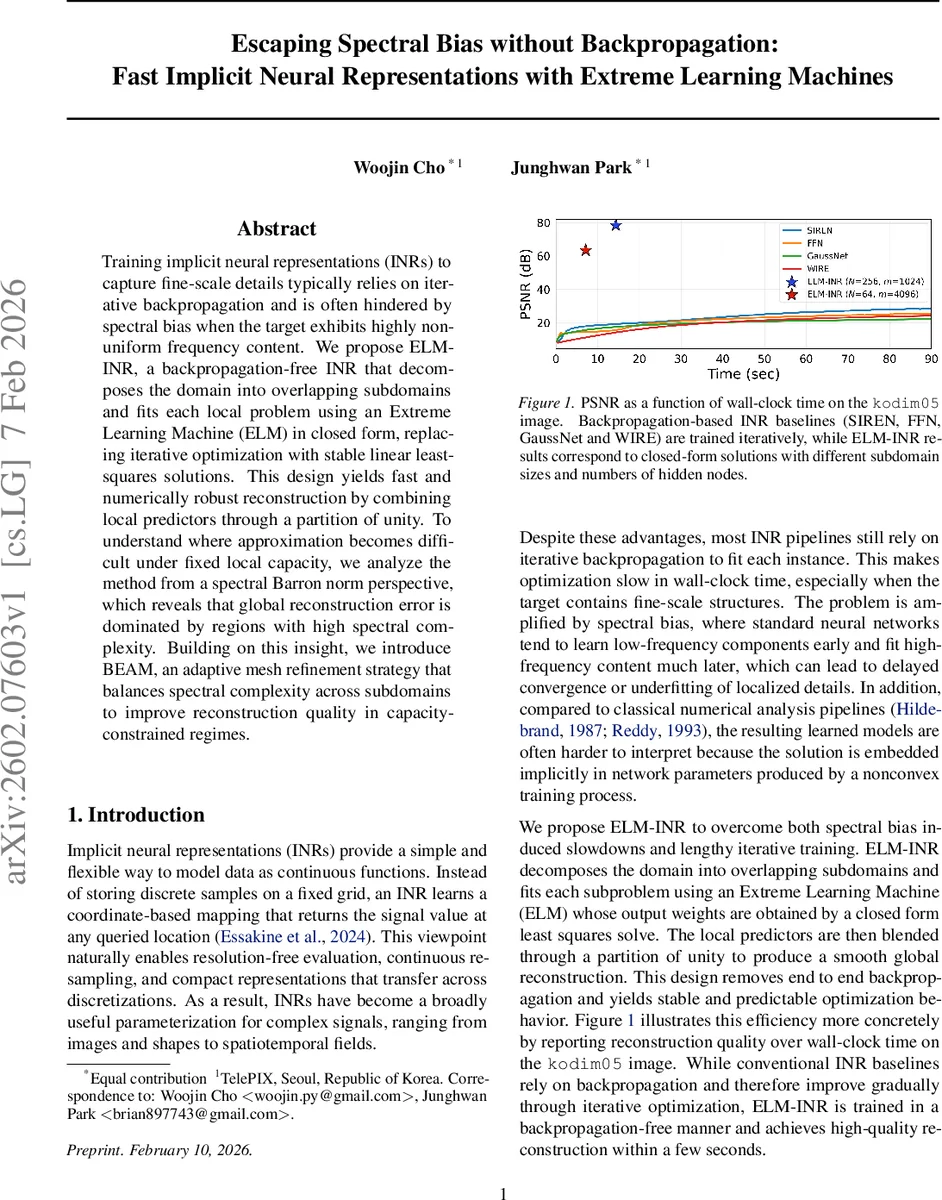

Training implicit neural representations (INRs) to capture fine-scale details typically relies on iterative backpropagation and is often hindered by spectral bias when the target exhibits highly non-uniform frequency content. We propose ELM-INR, a backpropagation-free INR that decomposes the domain into overlapping subdomains and fits each local problem using an Extreme Learning Machine (ELM) in closed form, replacing iterative optimization with stable linear least-squares solutions. This design yields fast and numerically robust reconstruction by combining local predictors through a partition of unity. To understand where approximation becomes difficult under fixed local capacity, we analyze the method from a spectral Barron norm perspective, which reveals that global reconstruction error is dominated by regions with high spectral complexity. Building on this insight, we introduce BEAM, an adaptive mesh refinement strategy that balances spectral complexity across subdomains to improve reconstruction quality in capacity-constrained regimes.

💡 Research Summary

The paper tackles two persistent challenges in implicit neural representations (INRs): spectral bias, which causes neural networks to learn low‑frequency components before high‑frequency details, and the heavy computational burden of iterative back‑propagation. The authors introduce ELM‑INR, a back‑propagation‑free framework that decomposes the input domain into overlapping subdomains and fits each subproblem with an Extreme Learning Machine (ELM). In an ELM, hidden weights and biases are randomly initialized and then frozen; only the output weights are learned, and because the mapping from hidden activations to the output is linear, the optimal weights can be obtained in closed form by solving a least‑squares problem (α* = (HᵀH)⁻¹Hᵀy). This eliminates gradient descent, yields predictable runtimes, and avoids local minima.

To assemble a globally smooth function, the authors employ a partition‑of‑unity (PoU) scheme: smooth window functions ϕ_i(x) are defined for each subdomain Ω_i such that Σ_i ϕ_i(x) = 1 everywhere. The global approximation is then ˆf(x) = Σ_i ϕ_i(x) · ˆf_i(x). Because each local model is solved independently, the overall training cost scales linearly with the number of subdomains and quadratically with the hidden width m, which is far cheaper than the cubic‑or‑higher cost of full‑network back‑propagation.

The theoretical contribution centers on Barron space analysis. The authors define a spectral Barron norm ‖f‖{BS} = ∫‖ξ‖₁|F(ξ)| dξ, where F is the Fourier transform of f. Functions with finite ‖f‖{BS} belong to a class that can be approximated by shallow networks with an error bound O(‖f‖{BS}/√m). This bound implies that, when a fixed width m is used across all subdomains, the global error is dominated by the subdomain with the largest local Barron norm β_i = ‖f|{Ω_i}‖_{BS}. In other words, spectral complexity imbalance is the bottleneck.

Motivated by this insight, the authors propose BEAM (Barron‑Enhanced Adaptive Mesh), an adaptive domain‑decomposition algorithm that equalizes the spectral Barron norm across subdomains. Starting from a fine atomic grid (size s × s), BEAM computes a discrete proxy for β_i using a weighted sum of DFT magnitudes (∑_k‖k‖₁|F_i(k)|). Cells whose β_i falls below a user‑defined threshold τ are merged with neighboring cells as long as the merged region’s β stays ≤ τ. The process repeats until no further merges are possible, yielding a mesh where each cell satisfies the same spectral capacity constraint. This balanced partition reduces the worst‑case local error, especially in regimes where the hidden width m is limited by memory, power, or latency constraints.

Experimental validation spans a diverse set of modalities: standard 2‑D images (e.g., kodim05), multispectral satellite imagery, fluid‑dynamics simulations (Navier–Stokes), climate reanalysis data (ERA5), and medical MRI volumes. Across all benchmarks, ELM‑INR reaches higher PSNR values than state‑of‑the‑art back‑propagation‑based INRs (SIREN, FFN, GaussNet, WIRE) while requiring only a few seconds of wall‑clock time. When BEAM is applied, even with a modest hidden width (m = 256), the method consistently gains 1.5–2 dB PSNR over a uniform mesh, demonstrating that spectral balancing is effective in capacity‑constrained settings. The authors also report that the closed‑form linear solve is numerically stable; adding Tikhonov regularization to (HᵀH)⁻¹ keeps condition numbers low and makes the approach robust to noisy samples.

In summary, the paper makes three key contributions:

- A back‑propagation‑free INR pipeline (ELM‑INR) that leverages random‑feature ELMs and PoU blending for fast, stable, and interpretable function reconstruction.

- A rigorous Barron‑space analysis that links local spectral complexity to global approximation error, providing a theoretical explanation for the observed spectral bias in shallow networks.

- An adaptive mesh refinement strategy (BEAM) that equalizes spectral difficulty across subdomains, enabling high‑quality reconstructions even when the model capacity is tightly limited.

The combination of closed‑form learning, solid theoretical grounding, and practical adaptive meshing positions this work as a significant step toward efficient, high‑fidelity implicit representations suitable for real‑time or resource‑constrained applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment