"Meet My Sidekick!": Effects of Separate Identities and Control of a Single Robot in HRI

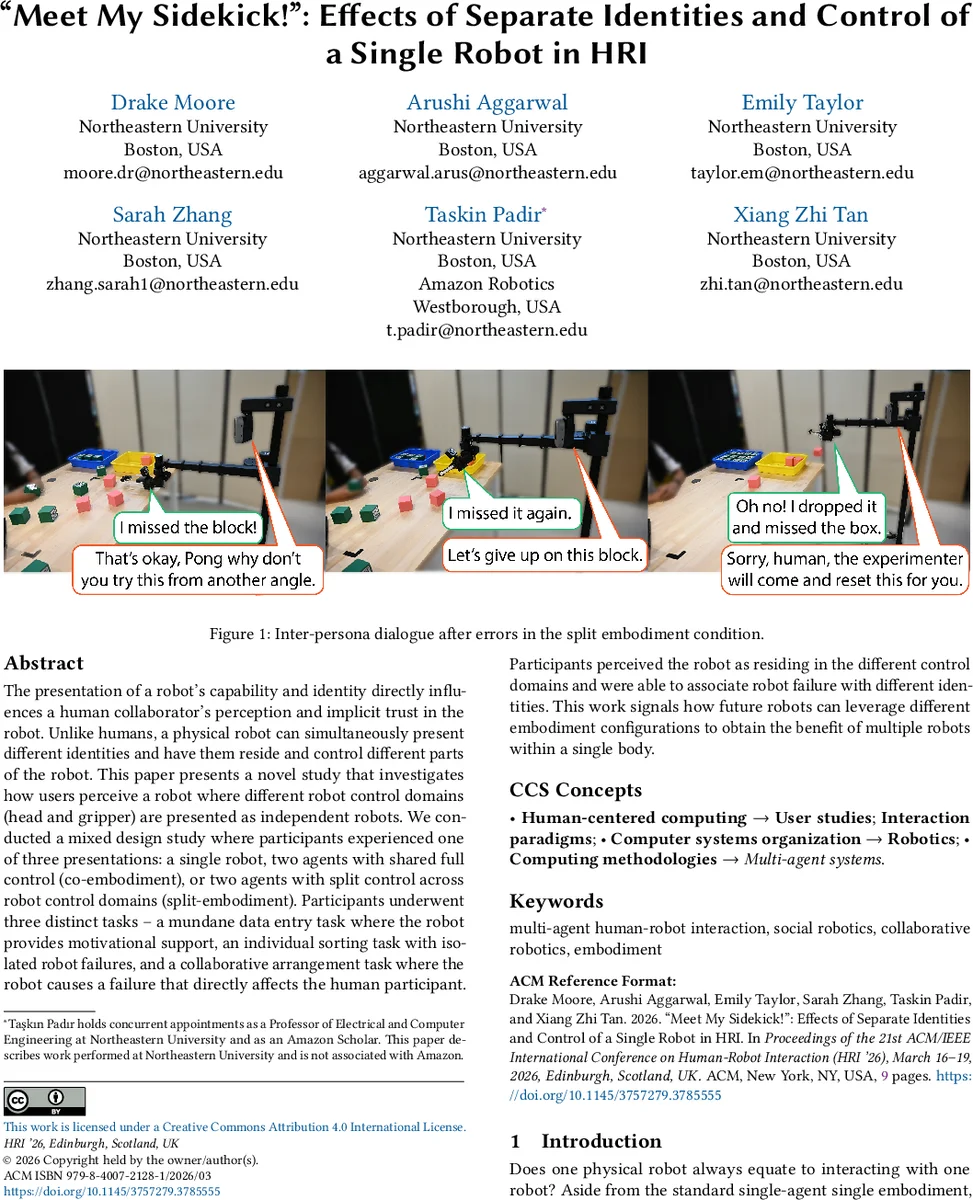

The presentation of a robot’s capability and identity directly influences a human collaborator’s perception of, and implicit trust in, the robot. Unlike humans, a physical robot can simultaneously present different identities and have them reside in and control different parts of the robot. This paper presents a novel study that investigates how users perceive a robot where different robot control domains (head and gripper) are presented as independent robots. We conducted a mixed design study where participants experienced one of three presentations: a single robot, two agents with shared full control (co-embodiment), or two agents with split control across robot control domains (split-embodiment). Participants completed three distinct tasks: a mundane data entry task where the robot provides motivational support, an individual sorting task with isolated robot failures, and a collaborative arrangement task where the robot causes a failure that directly affects the human participant. Participants perceived the robot as residing in the different control domains and were able to associate robot failure with different identities. This work signals how future robots can leverage different embodiment configurations to obtain the benefit of multiple robots within a single body.

💡 Research Summary

The paper investigates how presenting a single robot with multiple, independently controlled identities influences human perception, trust, and error attribution in human‑robot interaction (HRI). The authors introduce a “split‑embodiment” condition in which the robot’s head/base subsystem and its arm/gripper subsystem are each controlled by a distinct persona, while a “co‑embodiment” condition features two personas sharing full control of the robot, and a baseline “single‑agent” condition presents a single unified persona.

Using the Hello Robot Stretch 3 platform, the study implements three tasks of increasing interaction complexity: (1) a motivational support task with minimal physical interaction, (2) an independent sorting task where the robot makes isolated manipulation errors that do not affect the participant’s success, and (3) a collaborative arranging task where the robot’s failure directly disrupts the participant’s work. Planned failures include repeated grasp attempts, mis‑placement of blocks, and a large “sweep‑away” error that knocks all blocks off the table. In each failure scenario, the robot either self‑apologizes (single‑agent) or receives reassurance from the “main” persona (co‑ and split‑embodiment).
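The failure-response rule described above can be sketched as a small lookup. This is a minimal illustration under stated assumptions, not the authors' implementation: the condition labels, the persona names, and the `failure_response` helper are all hypothetical, and the sketch assumes the failing persona in the two-persona conditions is the non-main one.

```python
# Hypothetical sketch of the post-failure dialogue rule from the summary:
# a single agent apologizes for itself, while in the two-persona conditions
# the "main" persona reassures the persona that failed.

TWO_PERSONA_CONDITIONS = {"co-embodiment", "split-embodiment"}


def failure_response(condition, failing_persona, main_persona=None):
    """Return who speaks after a planned failure and what kind of speech act it is."""
    if condition == "single-agent":
        # Only one identity exists, so it apologizes for its own error.
        return {"speaker": failing_persona, "act": "self-apology", "addressee": "participant"}
    if condition in TWO_PERSONA_CONDITIONS:
        if main_persona is None:
            raise ValueError("two-persona conditions need a main persona")
        # The main persona reassures the persona responsible for the failure.
        return {"speaker": main_persona, "act": "reassurance", "addressee": failing_persona}
    raise ValueError(f"unknown condition: {condition}")


if __name__ == "__main__":
    print(failure_response("single-agent", "robot"))
    print(failure_response("split-embodiment", "hand", main_persona="head"))
```

Keeping the rule as a pure function of the condition makes it easy to check that the same planned failure produces a different social response in each embodiment condition.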

Key methodological details: control signals are distributed via ROS 2; visual servoing uses a fine‑tuned YOLO detector for block identification; speech synthesis employs Coqui’s VITS TTS model, with the second persona’s voice differentiated only by a higher pitch to avoid confounding voice type with identity. In split‑embodiment, the “head” persona controls the head camera and base and speaks through a head‑mounted speaker, while the “hand” persona controls the lift, arm, and wrist and speaks through a wrist‑mounted speaker, creating spatially distinct audio cues.
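To make the three control configurations concrete, the following sketch encodes which persona may command which part of the robot. It is an illustration only: the joint-group strings, persona labels, and helper functions are hypothetical names chosen here, and the actual system distributes control over ROS 2 rather than through a Python dictionary. Speaker placement is given only for split-embodiment because the summary does not specify it for the other conditions.

```python
# Hypothetical encoding of the control domains described in the summary.
HEAD_DOMAIN = frozenset({"head_camera", "base"})
HAND_DOMAIN = frozenset({"lift", "arm", "wrist"})
FULL_BODY = HEAD_DOMAIN | HAND_DOMAIN

# Which persona owns which joint groups in each embodiment condition.
CONTROL_MAP = {
    "single": {"robot": FULL_BODY},                          # one unified persona
    "co": {"persona_a": FULL_BODY, "persona_b": FULL_BODY},  # shared full control
    "split": {"head": HEAD_DOMAIN, "hand": HAND_DOMAIN},     # disjoint domains
}

# Spatially distinct audio output, stated in the summary for split-embodiment only.
SPLIT_SPEAKERS = {"head": "head_speaker", "hand": "wrist_speaker"}


def may_command(condition, persona, joint_group):
    """True if the persona is allowed to command the joint group in this condition."""
    return joint_group in CONTROL_MAP[condition].get(persona, frozenset())


def owners(condition, joint_group):
    """All personas that control a joint group, sorted by name."""
    return sorted(p for p, domain in CONTROL_MAP[condition].items() if joint_group in domain)


if __name__ == "__main__":
    print(may_command("split", "hand", "arm"))   # hand persona owns the arm
    print(may_command("split", "head", "arm"))   # head persona does not
    print(owners("co", "base"))                  # both personas share the base
```

In this representation the difference between co- and split-embodiment reduces to whether the personas' domains overlap completely or not at all.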

The mixed‑design experiment (3 embodiment conditions × 3 tasks, with each participant experiencing one embodiment condition and completing all three tasks) measured three primary outcomes: (a) identity perception, i.e., whether participants treat the subsystems as separate robots; (b) trust and capability ratings before and after failures; and (c) error attribution, i.e., whether blame is assigned only to the responsible persona. Results show that participants reliably perceived the split embodiment as two distinct agents, correctly linked failures to the corresponding persona, and maintained trust in the unaffected subsystem. Thus, error attribution can be compartmentalized, preventing a single failure from degrading overall system trust.
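The structure of such a mixed design can be sketched as follows, assuming embodiment is the between-participants factor and task is the within-participants factor. The round-robin assignment and the `assign` helper are assumptions made for illustration; the summary does not describe how participants were allocated to conditions.

```python
from itertools import cycle

# Between-participants factor: each participant sees exactly one embodiment.
CONDITIONS = ("single", "co-embodiment", "split-embodiment")
# Within-participants factor: every participant completes all three tasks,
# listed here in the order of increasing interaction complexity.
TASKS = ("motivational support", "independent sorting", "collaborative arranging")


def assign(participant_ids):
    """Assign conditions round-robin (an assumption) and attach the full task list."""
    schedule = {}
    for pid, condition in zip(participant_ids, cycle(CONDITIONS)):
        schedule[pid] = {"condition": condition, "tasks": list(TASKS)}
    return schedule


if __name__ == "__main__":
    for pid, plan in assign(["p1", "p2", "p3", "p4"]).items():
        print(pid, plan["condition"], plan["tasks"])
```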

The findings suggest that split‑embodiment offers the social benefits of multi‑robot setups (multiple personalities, richer interaction) while requiring only a single hardware platform, reducing cost and integration complexity. Moreover, the ability to isolate trust domains within one robot could be valuable for real‑world applications such as home assistants, where a manipulation failure (hand) need not diminish the user’s confidence in the conversational (head) capabilities.

The paper contributes a novel experimental paradigm, demonstrates practical implementation with minimal hardware modifications (a cheap USB speaker and pitch‑shifted TTS), and opens avenues for future work on more granular subsystem divisions (e.g., vision, audition, haptics) and longitudinal studies of trust dynamics in multi‑persona robots.
