SIGMA: Selective-Interleaved Generation with Multi-Attribute Tokens

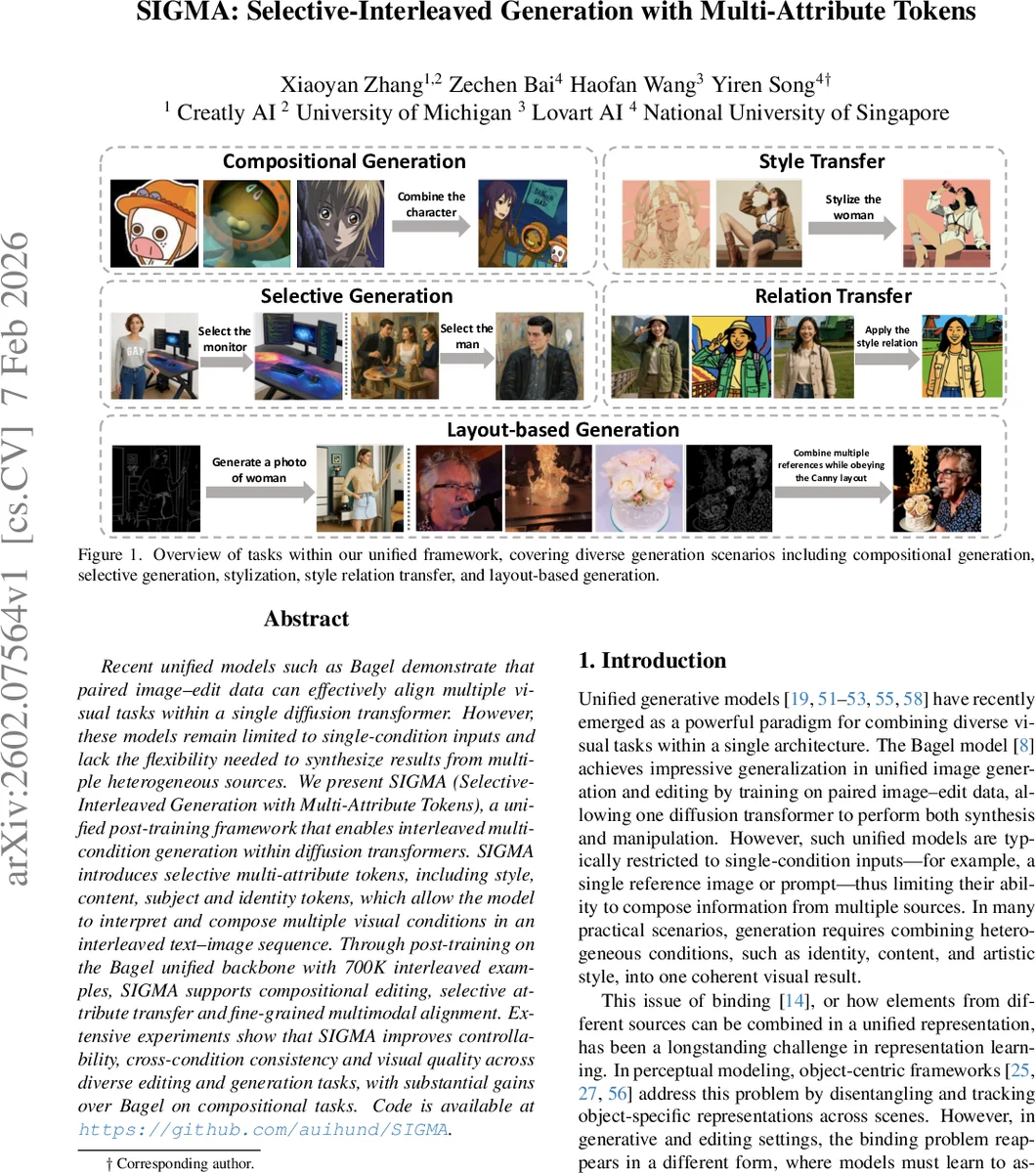

Recent unified models such as Bagel demonstrate that paired image-edit data can effectively align multiple visual tasks within a single diffusion transformer. However, these models remain limited to single-condition inputs and lack the flexibility needed to synthesize results from multiple heterogeneous sources. We present SIGMA (Selective-Interleaved Generation with Multi-Attribute Tokens), a unified post-training framework that enables interleaved multi-condition generation within diffusion transformers. SIGMA introduces selective multi-attribute tokens, including style, content, subject, and identity tokens, which allow the model to interpret and compose multiple visual conditions in an interleaved text-image sequence. Through post-training on the Bagel unified backbone with 700K interleaved examples, SIGMA supports compositional editing, selective attribute transfer, and fine-grained multimodal alignment. Extensive experiments show that SIGMA improves controllability, cross-condition consistency, and visual quality across diverse editing and generation tasks, with substantial gains over Bagel on compositional tasks.

💡 Research Summary

SIGMA (Selective‑Interleaved Generation with Multi‑Attribute Tokens) addresses a fundamental limitation of recent unified diffusion‑transformer models such as Bagel: the inability to handle multiple heterogeneous conditioning signals simultaneously. While Bagel excels at learning a single image‑edit pair and can perform both synthesis and manipulation, it is constrained to a single condition (one reference image or one textual prompt). In many practical scenarios—e.g., combining a portrait, a style reference, and a layout map—models must bind information from several sources into a coherent visual output, a problem often referred to as the “binding problem” in representation learning.

The paper introduces two core technical contributions. First, Selective Multi‑Attribute Tokens are defined for each conditioning image. A fixed vocabulary T includes tokens such as