MUFASA: A Multi-Layer Framework for Slot Attention

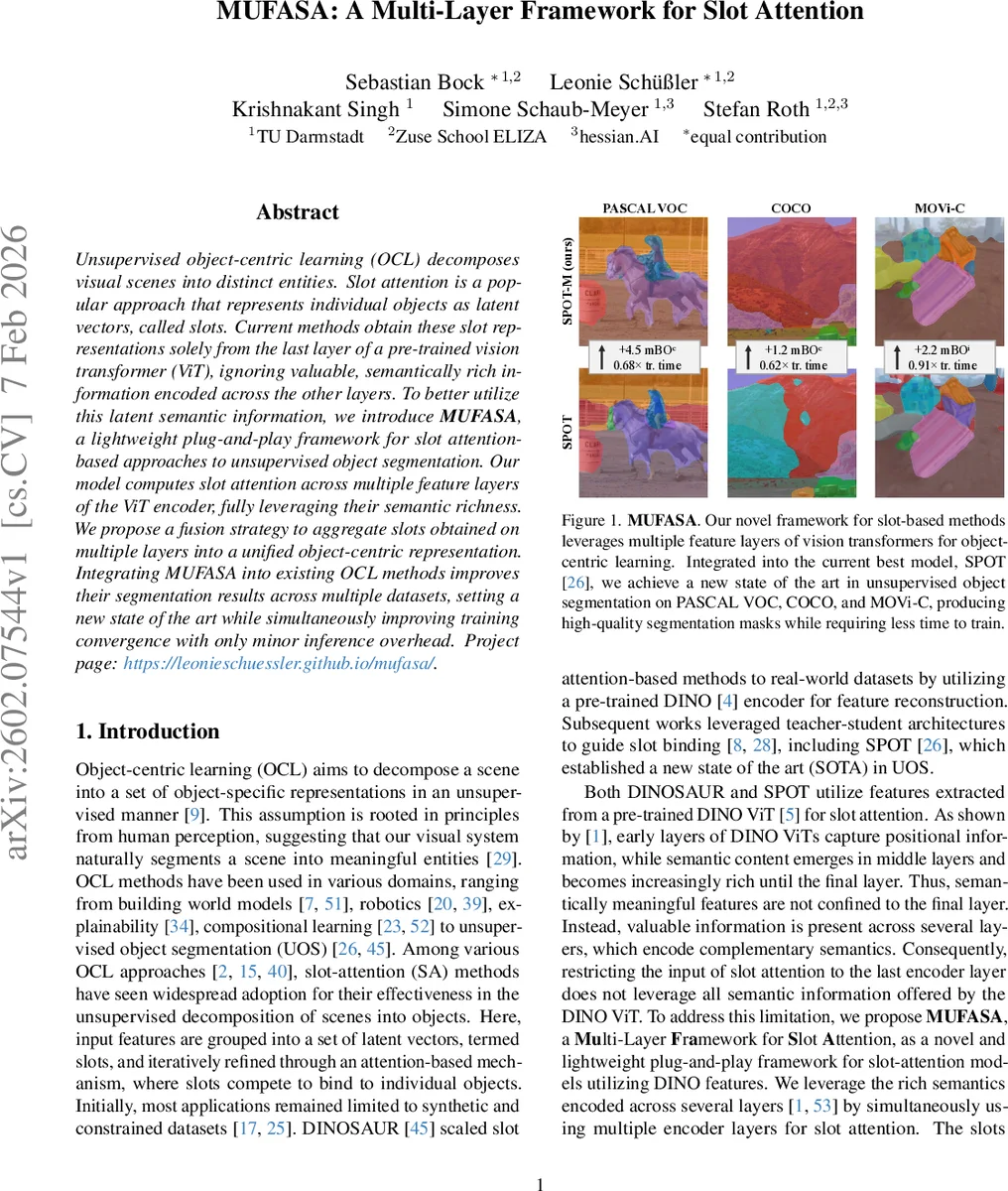

Unsupervised object-centric learning (OCL) decomposes visual scenes into distinct entities. Slot attention is a popular approach that represents individual objects as latent vectors, called slots. Current methods obtain these slot representations solely from the last layer of a pre-trained vision transformer (ViT), ignoring valuable, semantically rich information encoded across the other layers. To better utilize this latent semantic information, we introduce MUFASA, a lightweight plug-and-play framework for slot attention-based approaches to unsupervised object segmentation. Our model computes slot attention across multiple feature layers of the ViT encoder, fully leveraging their semantic richness. We propose a fusion strategy to aggregate slots obtained on multiple layers into a unified object-centric representation. Integrating MUFASA into existing OCL methods improves their segmentation results across multiple datasets, setting a new state of the art while simultaneously improving training convergence with only minor inference overhead.

💡 Research Summary

The paper addresses a fundamental limitation in current unsupervised object‑centric learning (OCL) pipelines that rely on slot attention: they only consume the final‑layer features of a pretrained vision transformer (ViT), typically a DINO‑ViT. Prior work has shown that ViT layers encode a hierarchy of information—early layers capture positional cues, intermediate layers encode increasingly rich semantics, and the deepest layer aggregates the most abstract concepts. Ignoring this hierarchy discards valuable complementary signals that could improve object segmentation.

To exploit the full spectrum of ViT representations, the authors propose MUFASA (Multi‑Layer Framework for Slot Attention), a lightweight, plug‑and‑play module that can be inserted into any slot‑attention based model using a DINO encoder. MUFASA selects a set of M encoder layers (e.g., layers 9‑12) and runs an independent slot‑attention block on each layer’s token embeddings, producing K slots per layer and a corresponding slot‑attention mask. Because slots from different layers must refer to the same physical objects, the method aligns them using Hungarian matching on the binary masks, reordering the slots so that slot k in layer m corresponds to the same object across all layers.

After alignment, the M sets of slots are fused into a single unified slot set (S_fused) via a novel “M‑Fusion” operation: each pair of adjacent layer slots is linearly projected and summed, while their masks are combined with learned weights to form a fused attention mask (A_Slot_fused). The fused slots are then fed to the original autoregressive transformer decoder, which reconstructs the deepest‑layer features and yields a decoder‑attention mask (A_Dec) that serves as the final segmentation prediction. Training uses only the standard reconstruction loss; no extra objectives or regularizers are required.

The authors integrate MUFASA into two state‑of‑the‑art OCL systems: DINOSAUR and SPOT, creating DINOSAUR‑M and SPOT‑M. Experiments on Pascal‑VOC, COCO, and the synthetic MOVi‑C benchmark demonstrate consistent improvements: mean IoU (mIoU) gains of 2–4 percentage points over the baselines, and a reduction in training time by 10–30 %. Qualitative visualizations show that early layers contribute coarse object outlines, while deeper layers refine boundaries and separate adjacent objects; the fusion step effectively merges these complementary cues, correcting over‑segmentation and missing‑detail errors observed in single‑layer models.

Ablation studies explore the impact of (i) the number and choice of layers, (ii) the number of slots K, and (iii) the fusion weighting scheme. Results indicate that using four consecutive deep layers (9‑12) offers the best trade‑off between performance and computational overhead, and that independent slot‑attention parameters per layer are crucial for capturing layer‑specific semantics. The method remains lightweight: the additional parameters are limited to the extra slot‑attention blocks and the fusion linear layers, incurring negligible inference overhead.

In summary, MUFASA demonstrates that multi‑layer ViT features can be directly harnessed by slot‑attention mechanisms to produce richer, more accurate object‑centric representations. Its plug‑and‑play nature makes it applicable to a wide range of existing slot‑based models and potentially to other pretrained encoders (e.g., CLIP, MAE). By bridging the gap between hierarchical ViT representations and object‑centric learning, MUFASA sets a new state‑of‑the‑art in unsupervised object segmentation while also accelerating training convergence.

Comments & Academic Discussion

Loading comments...

Leave a Comment