Differentiate-and-Inject: Enhancing VLAs via Functional Differentiation Induced by In-Parameter Structural Reasoning

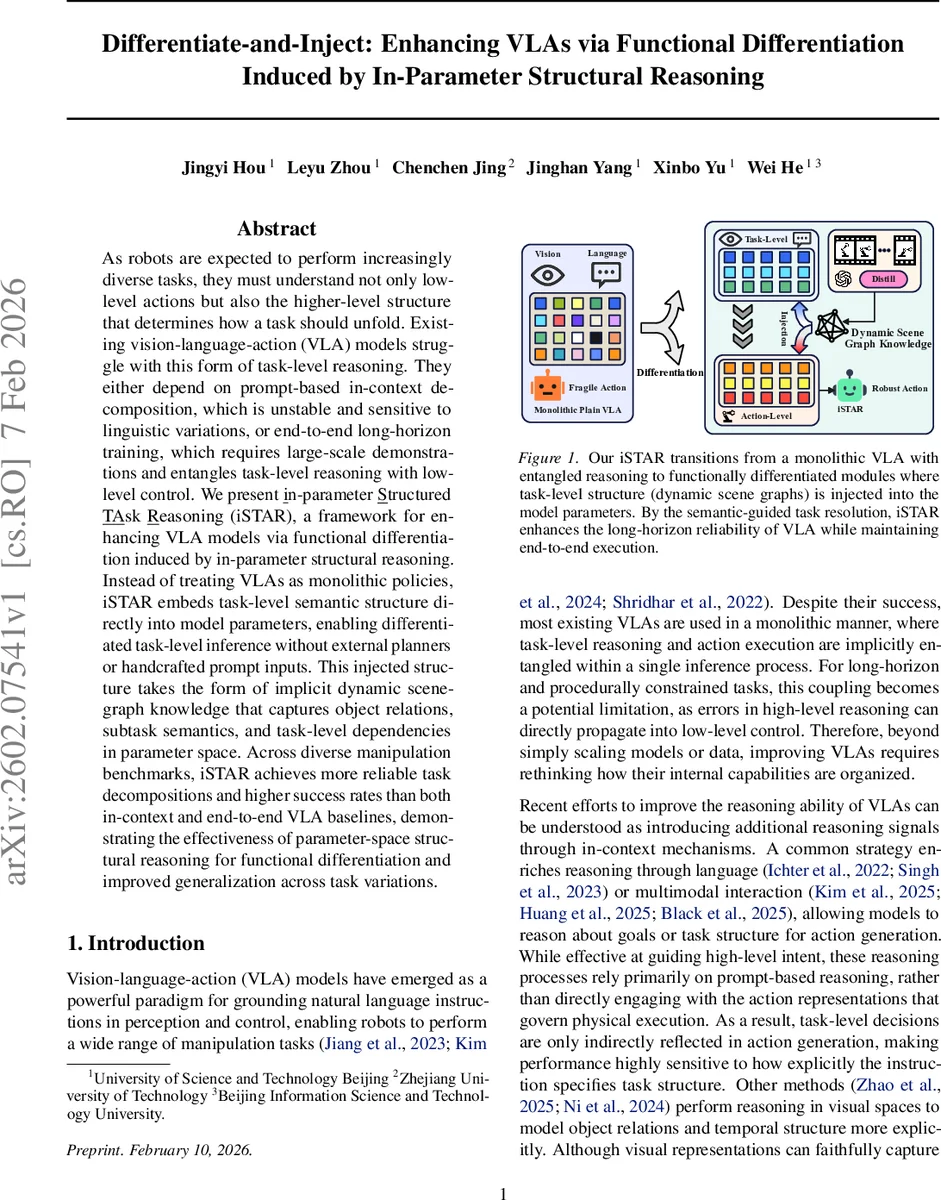

As robots are expected to perform increasingly diverse tasks, they must understand not only low-level actions but also the higher-level structure that determines how a task should unfold. Existing vision-language-action (VLA) models struggle with this form of task-level reasoning. They either depend on prompt-based in-context decomposition, which is unstable and sensitive to linguistic variations, or end-to-end long-horizon training, which requires large-scale demonstrations and entangles task-level reasoning with low-level control. We present in-parameter structured task reasoning (iSTAR), a framework for enhancing VLA models via functional differentiation induced by in-parameter structural reasoning. Instead of treating VLAs as monolithic policies, iSTAR embeds task-level semantic structure directly into model parameters, enabling differentiated task-level inference without external planners or handcrafted prompt inputs. This injected structure takes the form of implicit dynamic scene-graph knowledge that captures object relations, subtask semantics, and task-level dependencies in parameter space. Across diverse manipulation benchmarks, iSTAR achieves more reliable task decompositions and higher success rates than both in-context and end-to-end VLA baselines, demonstrating the effectiveness of parameter-space structural reasoning for functional differentiation and improved generalization across task variations.

💡 Research Summary

The paper addresses a fundamental limitation of current vision‑language‑action (VLA) systems: they treat the entire perception‑language‑control pipeline as a monolithic policy, which entangles high‑level task reasoning with low‑level motor execution. Existing remedies either rely on in‑context prompting, which is fragile to linguistic variations, or on end‑to‑end long‑horizon training that demands massive demonstration data and still mixes reasoning with control.

To overcome these issues, the authors propose in‑parameter Structured Task Reasoning (iSTAR), a framework that injects task‑level semantic structure directly into the parameters of a VLA model, thereby achieving functional differentiation without external planners or handcrafted prompts. iSTAR duplicates the pre‑trained VLA backbone into two functional instances. The first instance, called the pre‑action VLA module, processes the natural‑language instruction together with the current visual observation to extract object‑centric and action‑centric concept embeddings at each timestep. These embeddings form a set V of semantic concepts.

A dynamic implicit concept graph is then built over V. Each node is first filtered by an attribute gate that suppresses dimensions irrelevant to the current subtask. A recurrent unit supplies a dynamic positional encoding that captures temporal phase information, which is further modulated by an order gate. The gated representations are fused and passed through multi‑head attention (optionally enriched with edge embeddings) to perform latent message passing, yielding a structure‑aware node set V_fused.

Training of this graph is guided by a structured loss L_struct. The loss aligns gated node vectors with the embedding of the current subtask description (p_t) using a softmax‑based similarity term, encouraging the graph to highlight the correct objects and actions. A second term penalizes low entropy in the order gate, preventing premature commitment to a fixed temporal ordering and allowing exploration of multiple plausible subtask sequences during learning.

The fused node representations are then projected into a subtask prompt space via a subtask prompt projector. This projector translates the graph‑aware semantic vectors into language embeddings that serve as explicit semantic commitments for the downstream action generator. Consequently, the second VLA instance (the action‑generating module) receives a concise subprompt and only needs to solve a short‑horizon decision problem, dramatically reducing the burden of long‑range reasoning at execution time.

Because most VLA datasets lack explicit subtask prompts, iSTAR leverages large vision‑language models (VLMs) to distill step‑wise textual descriptions from video demonstrations. These distilled descriptions act as supervision for the prompt projector, enabling the model to learn compact subtask embeddings without manual annotation. For multimodal benchmarks like VIMA‑Bench, object masks and spatial references are used to reconstruct multimodal prompts compatible with token‑based VLA architectures.

The authors provide a theoretical analysis showing that a structured policy (graph → prompt → action) decomposes the performance gap relative to an end‑to‑end policy into three error terms: action decoding error (ϵ_a), graph‑to‑prompt realization error (ϵ_r), and graph prediction error (ϵ_G). By isolating task‑level reasoning in the graph, iSTAR reduces ϵ_a (since action decoding becomes a one‑step supervised problem) and mitigates ϵ_r and ϵ_G through graph learning and VLM distillation.

Empirically, iSTAR is evaluated on several manipulation suites, including VIMA‑Bench, ALFRED‑Manip, and other long‑horizon tasks. Across all benchmarks, iSTAR consistently outperforms prompt‑based baselines and standard end‑to‑end VLA models in terms of success rate, plan accuracy, and sample efficiency. Notably, tasks that require complex object relations and sequential dependencies benefit most from the explicit dynamic scene‑graph injected into the model parameters, demonstrating superior compositional generalization.

In summary, iSTAR introduces a principled mechanism for functional differentiation of VLA models by embedding dynamic, task‑level structural knowledge directly into the parameter space. This approach eliminates the need for external planners or fragile prompt engineering while delivering more reliable, data‑efficient, and generalizable long‑horizon robot manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment