LLM-Guided Diagnostic Evidence Alignment for Medical Vision-Language Pretraining under Limited Pairing

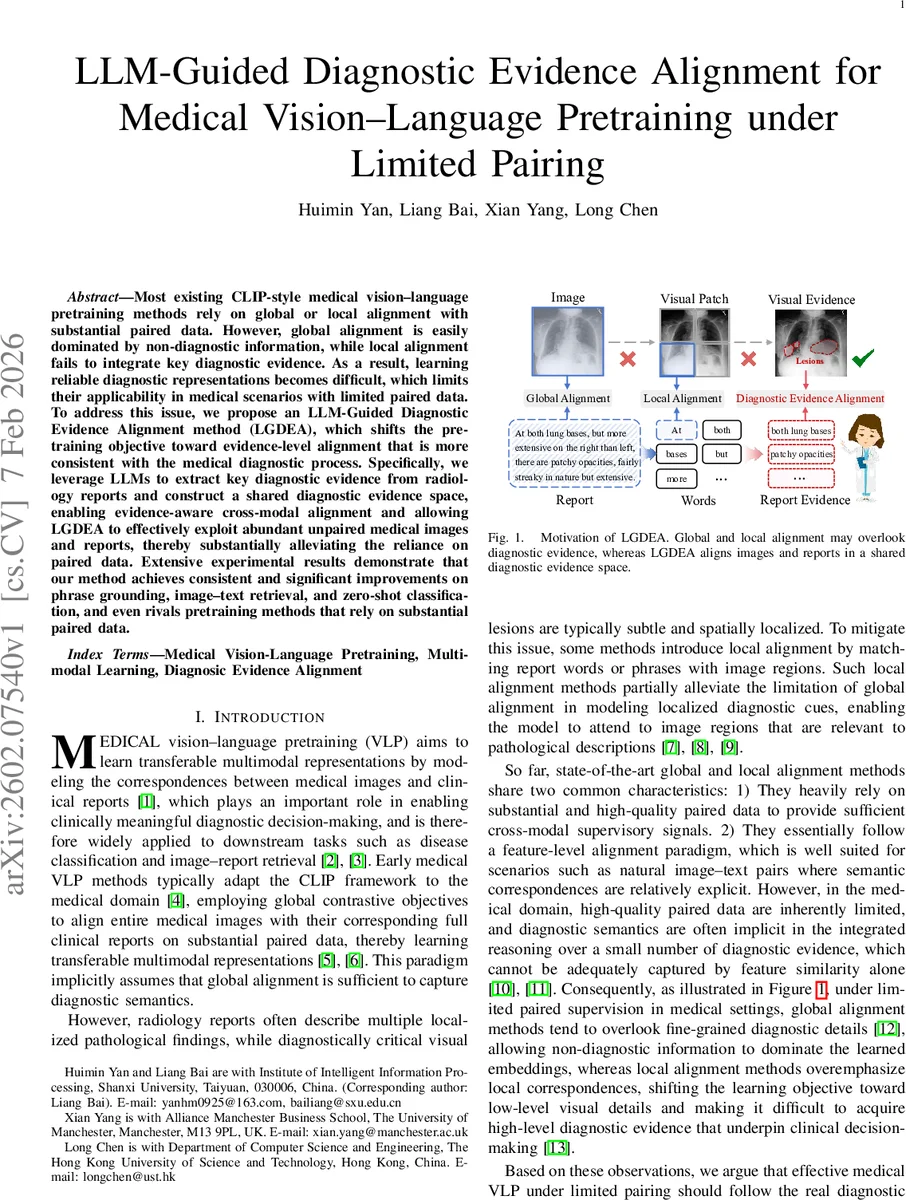

Most existing CLIP-style medical vision–language pretraining methods rely on global or local alignment with substantial paired data. However, global alignment is easily dominated by non-diagnostic information, while local alignment fails to integrate key diagnostic evidence. As a result, learning reliable diagnostic representations becomes difficult, which limits their applicability in medical scenarios with limited paired data. To address this issue, we propose an LLM-Guided Diagnostic Evidence Alignment method (LGDEA), which shifts the pretraining objective toward evidence-level alignment that is more consistent with the medical diagnostic process. Specifically, we leverage LLMs to extract key diagnostic evidence from radiology reports and construct a shared diagnostic evidence space, enabling evidence-aware cross-modal alignment and allowing LGDEA to effectively exploit abundant unpaired medical images and reports, thereby substantially alleviating the reliance on paired data. Extensive experimental results demonstrate that our method achieves consistent and significant improvements on phrase grounding, image–text retrieval, and zero-shot classification, and even rivals pretraining methods that rely on substantial paired data.

💡 Research Summary

The paper addresses a fundamental limitation of current medical vision‑language pre‑training (VLP) methods, which rely either on global contrastive alignment of whole images with whole reports or on local word‑to‑region matching. Global alignment is easily dominated by non‑diagnostic background information, while local alignment focuses on fine‑grained visual details but fails to capture the higher‑level diagnostic evidence that clinicians actually use. Both approaches also require a large amount of high‑quality paired image‑report data, which is scarce in the medical domain.

To overcome these challenges, the authors propose LLM‑Guided Diagnostic Evidence Alignment (LGDEA), a framework that shifts the pre‑training objective from feature‑level alignment to diagnostic‑evidence‑level alignment. The method consists of three main components:

-

LLM‑driven evidence extraction – A large language model (LLM) processes each radiology report and extracts concise, clinically meaningful evidence phrases (e.g., “patchy opacities at both lung bases”). These phrases are encoded by a text encoder into embeddings (z_n).

-

Shared diagnostic evidence space – A set of learnable prototype vectors ({\mu_k}_{k=1}^K) is introduced. Each evidence embedding is softly assigned to the prototypes via a softmax over dot products, producing a distribution (p(k|z_n)). A reconstruction loss forces the original embedding to be recoverable from the weighted prototype sum, encouraging all (paired and unpaired) reports to contribute semantic supervision in the same latent space.

-

Evidence‑aware visual learning – For each image, a set of learnable lesion queries attends to patch‑level visual features, yielding lesion embeddings (v_\ell). These are projected into the prototype space, giving a prototype distribution (Q_I(\ell,\cdot)) for each lesion.

- When an image is paired with a report, the prototype assignments of the report’s extracted evidence are averaged to form a teacher distribution (\bar{Q}_R). A KL‑divergence loss aligns (\bar{Q}_R) with the average lesion distribution (\bar{Q}_I), effectively distilling diagnostic evidence into the visual modality without explicit lesion annotations.

- For unpaired images, visual similarity is exploited: lesions that are nearest neighbours in the embedding space are encouraged to have similar prototype distributions via a similarity‑weighted KL loss. This propagates diagnostic semantics from paired to unpaired images.

-

Higher‑order cross‑modal alignment – The framework builds a soft evidence‑relation matrix (P_{ij}) that estimates how likely image (i) and report (j) share the same diagnostic evidence. For paired samples, (P) is simply the binary pairing matrix; for the largely unpaired setting, (P) is inferred by propagating the sparse seed relations over intra‑modal evidence graphs (image‑image and report‑report). The final cross‑modal contrastive loss uses (P_{ij}) as soft positives, allowing the model to learn from abundant unpaired data while still being anchored by the limited paired supervision.

Experimental validation is performed on large public chest X‑ray datasets (e.g., MIMIC‑CXR, OpenI). The authors simulate limited pairing by retaining only a small fraction (≤10 %) of true image‑report pairs. Across three downstream tasks—phrase grounding (localizing textual evidence in images), image‑text retrieval, and zero‑shot disease classification—LGDEA consistently outperforms strong baselines that use only global alignment (PubMedCLIP, MedCLIP) or local alignment (GLoRIA, MedKLIP, AFLoc). Notably, LGDEA even surpasses some methods trained with substantially more paired data, demonstrating its ability to leverage unpaired images and reports effectively. Qualitative visualizations show that the model’s attention maps align with the clinically extracted evidence, indicating improved interpretability.

Key contributions highlighted by the authors are:

- Introducing an LLM‑driven pipeline to automatically extract diagnostic evidence from free‑text reports, thereby creating a high‑quality semantic supervision signal without manual annotation.

- Designing a prototype‑based shared evidence space that bridges the textual and visual modalities at the level of diagnostic concepts.

- Proposing evidence‑aware visual learning and similarity‑based consistency losses that enable unpaired images to participate in training.

- Developing a graph‑based propagation mechanism to infer higher‑order cross‑modal relations, turning sparse paired data into a dense supervisory signal for contrastive learning.

In summary, LGDEA presents a novel, evidence‑centric paradigm for medical VLP that dramatically reduces dependence on costly paired datasets while delivering superior performance and better clinical relevance. The work opens avenues for scaling multimodal medical AI in real‑world settings where annotated pairs are limited but large collections of unlabeled images and reports are readily available.

Comments & Academic Discussion

Loading comments...

Leave a Comment