Evaluating Object-Centric Models beyond Object Discovery

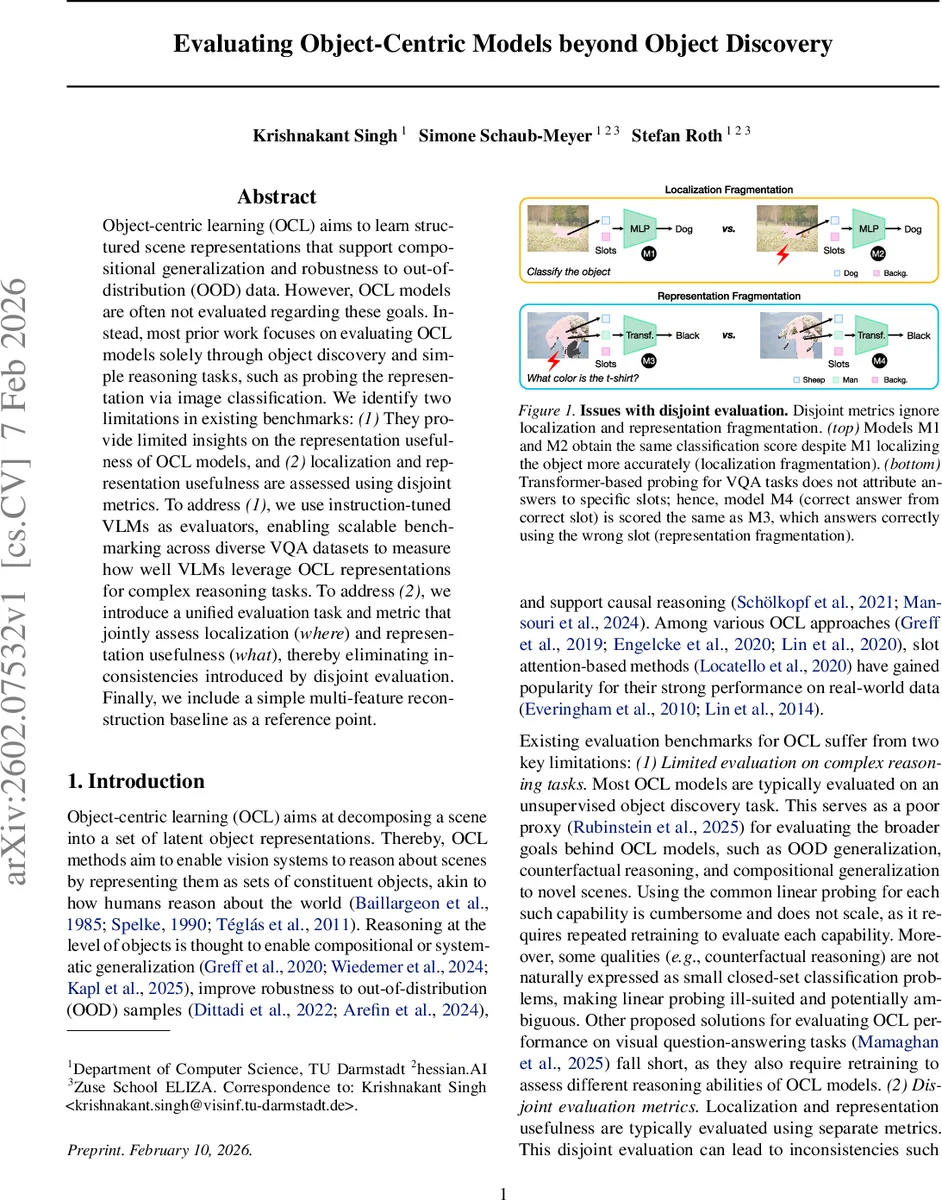

Object-centric learning (OCL) aims to learn structured scene representations that support compositional generalization and robustness to out-of-distribution (OOD) data. However, OCL models are often not evaluated regarding these goals. Instead, most prior work focuses on evaluating OCL models solely through object discovery and simple reasoning tasks, such as probing the representation via image classification. We identify two limitations in existing benchmarks: (1) They provide limited insights on the representation usefulness of OCL models, and (2) localization and representation usefulness are assessed using disjoint metrics. To address (1), we use instruction-tuned VLMs as evaluators, enabling scalable benchmarking across diverse VQA datasets to measure how well VLMs leverage OCL representations for complex reasoning tasks. To address (2), we introduce a unified evaluation task and metric that jointly assess localization (where) and representation usefulness (what), thereby eliminating inconsistencies introduced by disjoint evaluation. Finally, we include a simple multi-feature reconstruction baseline as a reference point.

💡 Research Summary

The paper critically examines the current state of evaluation for object‑centric learning (OCL) models and identifies two fundamental shortcomings. First, most benchmarks focus on unsupervised object discovery or simple linear probing for image classification, which provide only a weak proxy for the broader goals of OCL—compositional generalization, out‑of‑distribution robustness, counterfactual reasoning, and causal inference. These tasks often require diverse, open‑ended outputs that cannot be captured by closed‑set classification probes, and the need to retrain a probe for each new benchmark incurs a high amortized cost. Second, existing evaluation protocols treat localization (where an object is) and representation usefulness (what is known about the object) as separate metrics. This disjoint assessment allows models to achieve high scores despite “localization fragmentation” (accurate semantics but poor spatial grounding) or “representation fragmentation” (a single object’s features spread across multiple slots).

To address these gaps, the authors propose a two‑pronged evaluation framework. The first component replaces traditional vision encoders in instruction‑tuned vision‑language models (VLMs) with OCL encoders (e.g., slot‑attention networks). By training only a lightweight connector (a two‑layer MLP) and fine‑tuning the language model on multimodal instruction data (following the LLaVA paradigm), the resulting VLM can be used as a zero‑shot evaluator across a wide range of visual question‑answering (VQA) datasets. This setup measures how readily downstream multimodal systems can exploit the latent object representations without any task‑specific retraining, providing a practical “utility” metric for OCL models.

The second component introduces Attribution‑aware Grounded Accuracy (AwGA), a unified metric that simultaneously captures both “what” and “where”. Traditional Grounded Accuracy (G‑Acc) multiplies answer correctness by the IoU between predicted and ground‑truth masks, penalizing spatial errors but ignoring which slots contributed to the answer. AwGA first computes a gradient‑based attribution score for each slot with respect to the loss of the predicted answer, selects the top‑K slots where K equals the number of objects required by the question, and then computes the mean IoU between the union of those slots’ masks and the ground‑truth mask. By tying attribution to spatial overlap, AwGA penalizes both localization fragmentation and representation fragmentation in a single scalar.

The authors benchmark several state‑of‑the‑art OCL models—including Slot‑Diffusion, StableLSD, DINOSAUR, FT‑DINOSAUR, and SPOT—using both the VLM‑based zero‑shot VQA protocol and the AwGA metric. Results show that (i) VLM‑based evaluation yields consistent model rankings across different large language model backbones and connector architectures, while dramatically reducing evaluation cost; (ii) AwGA provides a more discriminative ranking than G‑Acc, revealing fragmentation failures that are invisible to separate metrics; and (iii) a simple multi‑feature reconstruction baseline, which predicts multiple self‑supervised features rather than raw pixels, consistently improves both VQA utility and AwGA scores, suggesting that reconstruction targets strongly influence downstream usefulness.

The paper’s contributions are fourfold: (1) a scalable, zero‑shot VLM evaluation framework for OCL that directly measures downstream utility on diverse reasoning tasks; (2) the AwGA metric that jointly assesses localization and representation quality, closing the gap left by disjoint metrics; (3) empirical evidence that the proposed framework yields stable rankings across LLM variants and connector designs, supporting reproducibility; and (4) a baseline demonstrating that even modest architectural changes (multi‑feature reconstruction) can substantially boost OCL performance under the new evaluation regime.

Overall, this work shifts the evaluation of object‑centric models from isolated object discovery toward a holistic assessment of how well the learned slots serve real multimodal reasoning systems. By providing both a practical zero‑shot benchmark and a unified quality metric, the authors lay the groundwork for future OCL research to be judged on its true end‑to‑end utility rather than on proxy tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment