Surveillance Facial Image Quality Assessment: A Multi-dimensional Dataset and Lightweight Model

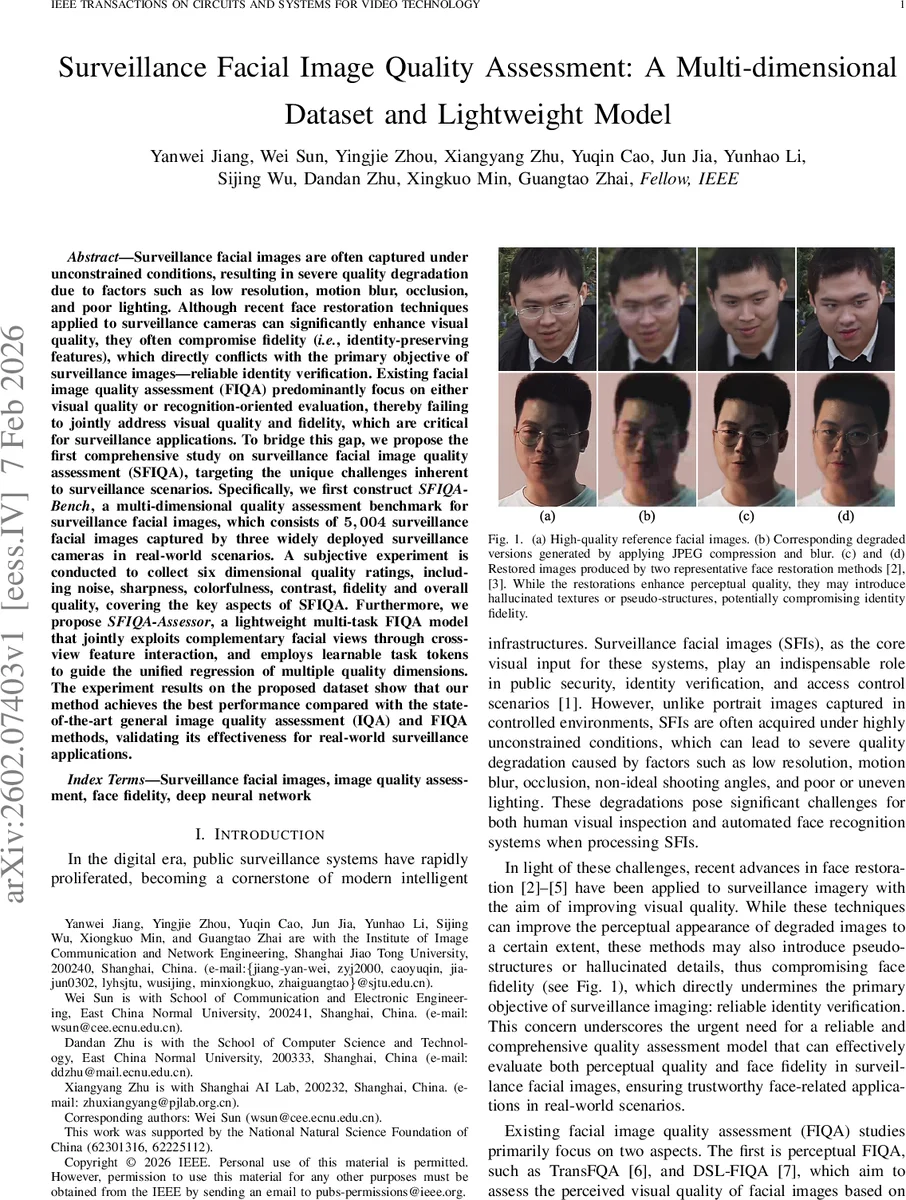

Surveillance facial images are often captured under unconstrained conditions, resulting in severe quality degradation due to factors such as low resolution, motion blur, occlusion, and poor lighting. Although recent face restoration techniques applied to surveillance cameras can significantly enhance visual quality, they often compromise fidelity (i.e., identity-preserving features), which directly conflicts with the primary objective of surveillance images – reliable identity verification. Existing facial image quality assessment (FIQA) predominantly focus on either visual quality or recognition-oriented evaluation, thereby failing to jointly address visual quality and fidelity, which are critical for surveillance applications. To bridge this gap, we propose the first comprehensive study on surveillance facial image quality assessment (SFIQA), targeting the unique challenges inherent to surveillance scenarios. Specifically, we first construct SFIQA-Bench, a multi-dimensional quality assessment benchmark for surveillance facial images, which consists of 5,004 surveillance facial images captured by three widely deployed surveillance cameras in real-world scenarios. A subjective experiment is conducted to collect six dimensional quality ratings, including noise, sharpness, colorfulness, contrast, fidelity and overall quality, covering the key aspects of SFIQA. Furthermore, we propose SFIQA-Assessor, a lightweight multi-task FIQA model that jointly exploits complementary facial views through cross-view feature interaction, and employs learnable task tokens to guide the unified regression of multiple quality dimensions. The experiment results on the proposed dataset show that our method achieves the best performance compared with the state-of-the-art general image quality assessment (IQA) and FIQA methods, validating its effectiveness for real-world surveillance applications.

💡 Research Summary

This paper addresses a critical gap in the evaluation of surveillance facial images by introducing both a novel benchmark and a lightweight multi‑task model that jointly assess visual quality and identity fidelity—two aspects that are essential for real‑world security applications but have been treated separately in prior work.

Benchmark (SFIQA‑Bench)

The authors collected 5,004 facial images from three widely deployed surveillance cameras covering indoor person monitoring, outdoor pedestrian monitoring, and intelligent transportation system (ITS) vehicle‑occupant monitoring. Each camera is equipped with an automatic face‑enhancement module that activates under poor lighting or motion conditions, providing a realistic mix of raw, enhanced, and restored images. A large‑scale subjective study involved 100 participants; every image received at least 25 independent ratings across six dimensions: noise, sharpness, colorfulness, contrast, fidelity, and overall quality. The fidelity dimension is particularly novel—it captures the degree to which restoration or enhancement introduces unnatural, hallucinated facial details that could jeopardize identity verification. The resulting multi‑dimensional labels constitute the first human‑ground‑truth dataset that simultaneously reflects perceptual degradation and identity preservation for surveillance‑type faces.

Model (SFIQA‑Assessor)

The proposed assessor is designed for real‑time deployment. It ingests three complementary views of each face: (1) the full image (including background), (2) a tightly cropped face region, and (3) an eyes‑and‑mouth region that emphasizes the most discriminative facial components. Each view passes through a multi‑scale convolutional encoder that extracts rich feature maps. A lightweight cross‑view attention module then fuses these representations, allowing the network to capture inter‑view dependencies without incurring the heavy cost of full‑image Transformers.

The fused representation feeds into a task‑aware decoder. First, a set of learnable task tokens (one per quality dimension) undergoes self‑attention to model relationships among the six tasks (e.g., how contrast influences perceived fidelity). Next, each token acts as a query in a cross‑attention layer that extracts task‑specific features from the unified representation. Finally, six separate regression heads map these task‑specific embeddings to continuous quality scores. The entire architecture contains fewer than 2 M parameters and requires modest FLOPs, enabling inference at >30 FPS on a single GPU—well within the constraints of live surveillance pipelines.

Experimental Findings

When evaluated on SFIQA‑Bench, SFIQA‑Assessor consistently outperforms state‑of‑the‑art general image quality assessment (IQA) methods (e.g., NIQE, BRISQUE, DBCNN) and recent facial‑specific FIQA approaches (TransFQA, DSL‑FIQA, CLIB‑FIQA). Across all six dimensions, the model achieves higher Spearman’s rank correlation coefficient (SRCC) and Pearson’s linear correlation coefficient (PLCC) by 0.05–0.12 points, with notably lower root‑mean‑square error (RMSE). The fidelity dimension, where recognition‑oriented FIQA typically overestimates quality after restoration, shows a strong 0.93 correlation with human judgments, demonstrating the model’s ability to detect hallucinated artifacts. Ablation studies confirm that each component—multi‑view input, cross‑view attention, and task‑token‑driven decoding—contributes measurably to performance.

Implications and Future Work

The study establishes a practical pipeline for assessing both perceptual and identity‑preserving quality of surveillance faces, a prerequisite for trustworthy downstream tasks such as automated recognition, alert generation, and forensic analysis. By providing a human‑annotated, multi‑dimensional benchmark, the authors also lay groundwork for future research on quality‑aware face restoration, adaptive enhancement strategies, and robustness testing of recognition systems under realistic degradation. Limitations include the relatively narrow camera diversity (three models) and the focus on still images; extending the dataset to more sensor types, extreme lighting, and video sequences would further strengthen the community’s ability to develop robust, real‑time security solutions.

In summary, the paper delivers a comprehensive, well‑validated solution that bridges the gap between visual quality enhancement and identity fidelity in surveillance facial imaging, offering both a valuable dataset and an efficient model ready for deployment in real‑world security infrastructures.

Comments & Academic Discussion

Loading comments...

Leave a Comment