Controllable Value Alignment in Large Language Models through Neuron-Level Editing

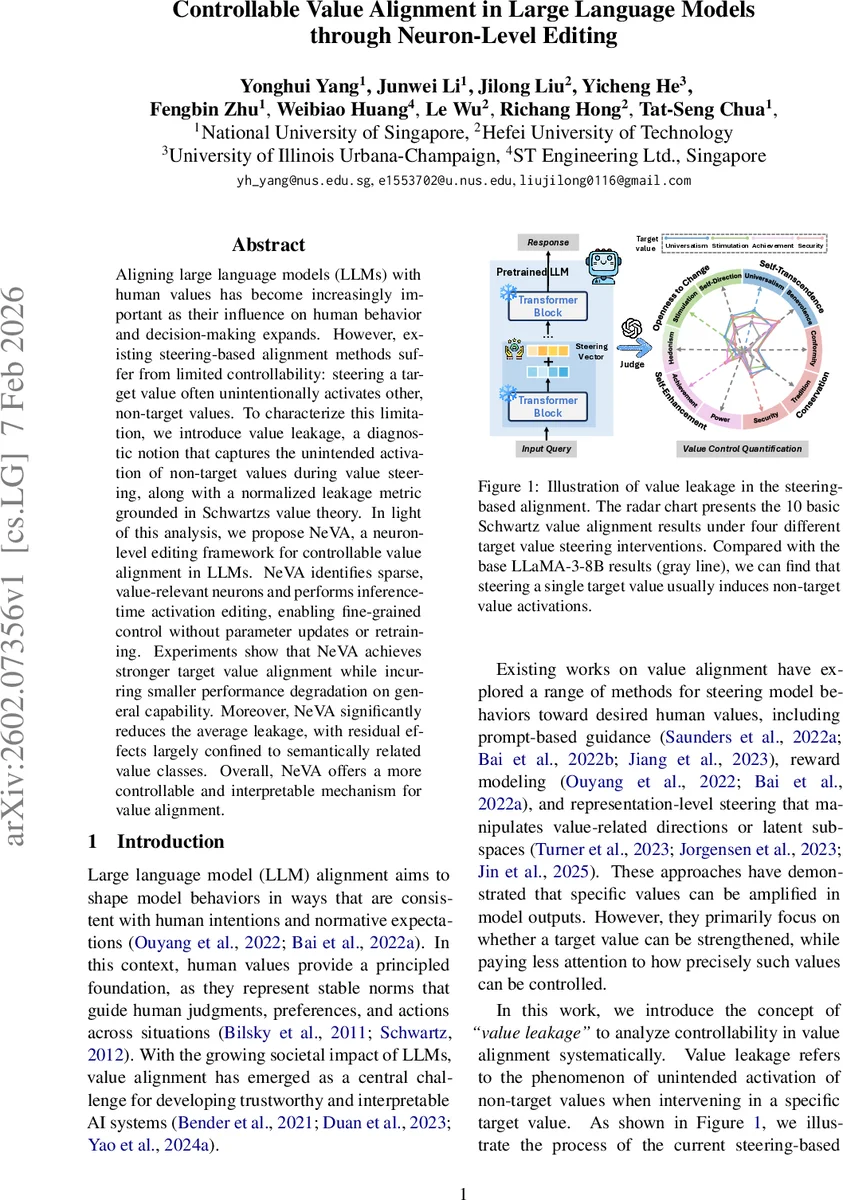

Aligning large language models (LLMs) with human values has become increasingly important as their influence on human behavior and decision-making expands. However, existing steering-based alignment methods suffer from limited controllability: steering a target value often unintentionally activates other, non-target values. To characterize this limitation, we introduce value leakage, a diagnostic notion that captures the unintended activation of non-target values during value steering, along with a normalized leakage metric grounded in Schwartz’s value theory. In light of this analysis, we propose NeVA, a neuron-level editing framework for controllable value alignment in LLMs. NeVA identifies sparse, value-relevant neurons and performs inference-time activation editing, enabling fine-grained control without parameter updates or retraining. Experiments show that NeVA achieves stronger target value alignment while incurring smaller performance degradation on general capability. Moreover, NeVA significantly reduces the average leakage, with residual effects largely confined to semantically related value classes. Overall, NeVA offers a more controllable and interpretable mechanism for value alignment.

💡 Research Summary

The paper tackles the problem of aligning large language models (LLMs) with human values while avoiding unintended activation of non‑target values—a phenomenon the authors term “value leakage.” Building on Schwartz’s ten basic values, the authors first formalize value leakage: for a steering intervention aimed at value vᵢ, any increase in the Control Success Ratio (CSR) of a different value vⱼ (ΔSᵢ→ⱼ > 0) is counted as leakage. They introduce two quantitative metrics: (1) Normalized Leakage Ratio (NLR), which scales the absolute leakage by the maximal achievable gain for the leaked value, and (2) Normalized Group Leakage Ratio (NGLR), which aggregates leakage across Schwartz’s higher‑order value groups and normalizes each row to reveal whether leakage stays within the same value family or spreads across families.

Existing alignment techniques—prompt engineering, reward‑model steering, and representation‑level steering (e.g., ConVA)—are shown to amplify the target value but also cause substantial leakage into other values, indicating that value dimensions are entangled in dense hidden representations.

To address this, the authors propose NeVA (Neuron‑level Value Alignment), a three‑stage framework:

-

Value‑specific probing – Linear probes are trained on publicly available Schwartz‑value datasets to obtain a high‑confidence direction Wᵥ in the residual stream for each value. Only probes with >95 % validation accuracy are retained, ensuring reliable measurement of value‑sensitive directions.

-

Neuron identification – For each feed‑forward neuron (layer l, index k) the cosine similarity sₗₖ = cos(vₗₖ, Wᵥ) between the neuron’s value vector and the probe direction is computed. The top‑K neurons with the largest absolute similarity are split into “value‑aligned” (positive similarity) and “value‑opposed” (negative similarity) sets; all others are treated as value‑agnostic and excluded from intervention. This yields a sparse, interpretable decomposition of the value signal at the neuron level.

-

Context‑aware neuron editing – During inference, each selected neuron’s activation mₗₖ is modified as

mₗₖ,edit = mₗₖ ·

Comments & Academic Discussion

Loading comments...

Leave a Comment