AtomMOF: All-Atom Flow Matching for MOF-Adsorbate Structure Prediction

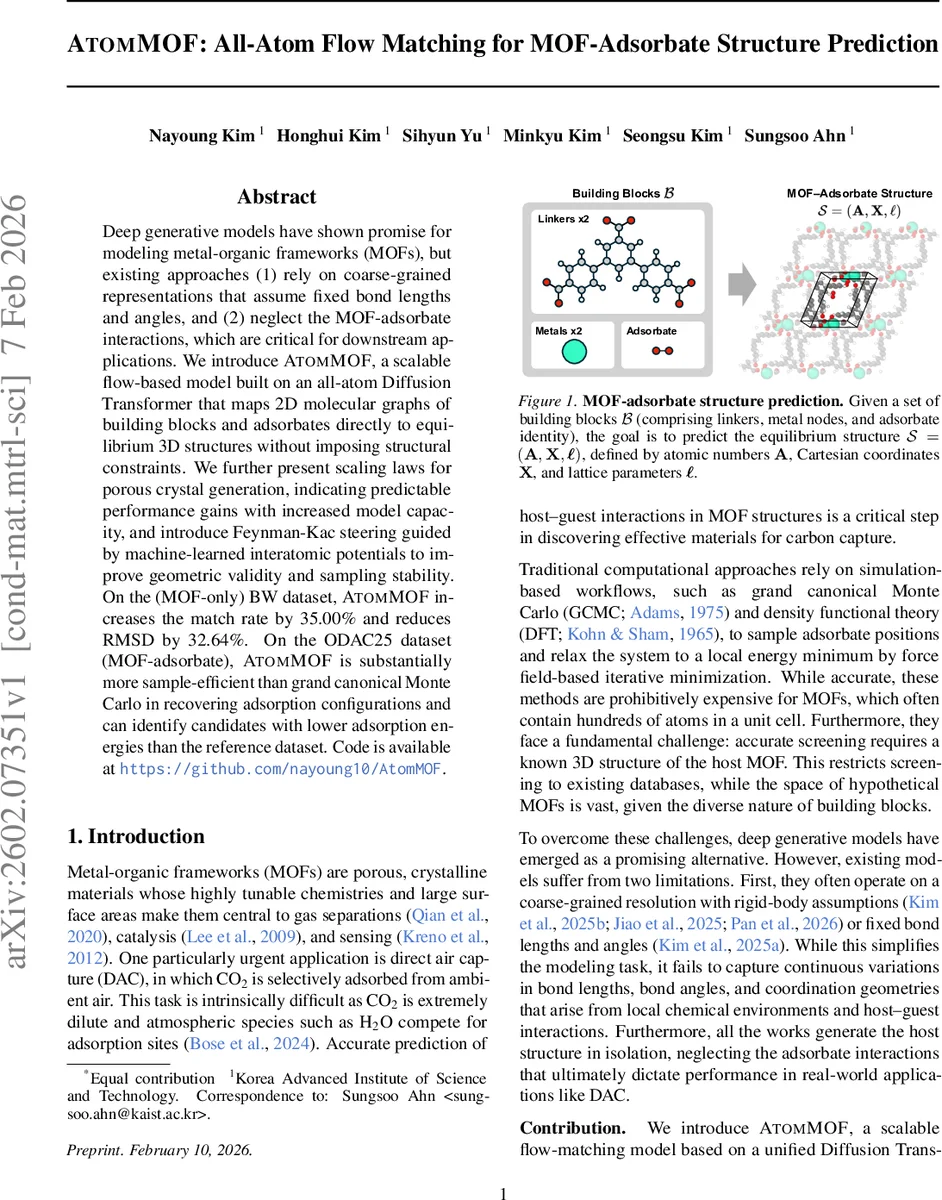

Deep generative models have shown promise for modeling metal-organic frameworks (MOFs), but existing approaches (1) rely on coarse-grained representations that assume fixed bond lengths and angles, and (2) neglect the MOF-adsorbate interactions, which are critical for downstream applications. We introduce AtomMOF, a scalable flow-based model built on an all-atom Diffusion Transformer that maps 2D molecular graphs of building blocks and adsorbates directly to equilibrium 3D structures without imposing structural constraints. We further present scaling laws for porous crystal generation, indicating predictable performance gains with increased model capacity, and introduce Feynman-Kac steering guided by machine-learned interatomic potentials to improve geometric validity and sampling stability. On the (MOF-only) BW dataset, AtomMOF increases the match rate by 35.00% and reduces RMSD by 32.64%. On the ODAC25 dataset (MOF-adsorbate), AtomMOF is substantially more sample-efficient than grand canonical Monte Carlo in recovering adsorption configurations and can identify candidates with lower adsorption energies than the reference dataset. Code is available at https://github.com/nayoung10/AtomMOF.

💡 Research Summary

AtomMOF introduces a novel all‑atom generative framework for predicting the three‑dimensional crystal structures of metal‑organic frameworks (MOFs) together with their adsorbates. Existing deep generative approaches for MOFs typically rely on coarse‑grained, rigid‑body representations that fix bond lengths, angles, or even entire building‑block geometries, and they generate the host framework in isolation, ignoring host‑guest interactions that are crucial for applications such as direct air capture. AtomMOF removes these constraints by directly mapping the 2‑D molecular graphs of linkers, metal nodes, and optional adsorbates to equilibrium atomic coordinates and lattice parameters without imposing any predefined geometric priors.

The core of the method is Variational Flow Matching (VFM), a recent flow‑based generative technique that trains with an L1 reconstruction loss rather than a KL‑divergence. The authors treat atomic positions X and lattice parameters ℓ (edge lengths d and angles ϕ) as a single continuous variable z = (X, ℓ). A linear interpolation path zₜ = (1‑t)z₀ + t z₁ connects a simple Gaussian prior z₀ to the data point z₁. During training, a neural network µθₜ predicts the clean data (X̂₁, ℓ̂₁) from a noisy intermediate state (Xₜ, ℓₜ) conditioned on the building‑block graphs B. The variational posterior is modeled as a Laplace distribution, which reduces the objective to an L1 error on coordinates and lattice parameters, weighted by λcoord and λlattice. This formulation yields stable training and fast convergence.

The denoising network µθₜ is built on a Diffusion Transformer (DiT) backbone and consists of three stages: an encoder that constructs single‑atom features Q and pairwise features P from atom types, formal charges, RDKit‑derived local coordinates, inter‑atomic distances, bond types, and block‑membership masks; a deep trunk of DiT blocks that propagates global interactions, where pairwise bias Z is enriched with linear projections of the single‑atom latent S; and a decoder with two parallel heads that output the predicted coordinates (N × 3) and lattice vector parameters (6‑dimensional). The encoder injects the noisy global coordinates and lattice values into Q, while P serves as an additive bias to the attention logits, allowing the model to respect periodicity and block connectivity.

To ensure that generated structures are physically plausible, the authors augment the base generative model with Feynman‑Kac steering guided by a machine‑learned interatomic potential (MLIP). They define a tilted target distribution p_target(X, ℓ | B) ∝ pθ(X, ℓ | B) · exp(‑λ E(S)), where E(S) is the energy predicted by the MLIP and λ controls the steering strength. By converting the deterministic ODE defined by the learned velocity field v(Xₜ, t) into a stochastic differential equation, they obtain a score function s(Xₜ, t) = t v(Xₜ, t) − Xₜ / (1‑t). This enables particle resampling that preferentially explores low‑energy regions, dramatically improving geometric validity (e.g., eliminating atom‑overlap violations) and sampling stability (reducing ODE integration error).

The experimental evaluation covers two benchmarks. First, on the MOF‑only BW dataset (which contains only nine unique metal node templates), three model sizes are trained: AtomMOF‑S (38 M parameters), AtomMOF‑M (129 M), and AtomMOF‑L (491 M). Using a standard 8:1:1 train/val/test split, the authors generate up to five samples per query and report the best‑matching candidate according to pymatgen’s StructureMatcher. AtomMOF‑L achieves a 35 % increase in match rate and a 32.6 % reduction in RMSD compared with the strongest prior baseline (MOFFlow‑2). Adding Feynman‑Kac steering further raises structural validity by 10.86 % and stability by 84.19 %.

Second, on the ODAC25 dataset, which includes CO₂, H₂O, and other small molecules adsorbed in MOFs, AtomMOF is compared against grand canonical Monte Carlo (GCMC) simulations. AtomMOF requires far fewer samples to recover adsorption configurations of comparable quality, leading to a substantial reduction in wall‑clock time. Moreover, when the MLIP‑relaxed AtomMOF samples are subsequently minimized, the resulting adsorption energies are lower than the lowest‑energy configurations present in the reference dataset, demonstrating that the model can discover novel, energetically favorable host‑guest arrangements.

A noteworthy contribution is the empirical scaling law for porous crystal generation. By training models of increasing size and data volume, the authors observe that match rate improves roughly linearly with log‑model‑size, while RMSD follows a log‑linear decay. This suggests that continued scaling—both in parameters and training data—will yield predictable performance gains, mirroring trends seen in language and vision models.

In summary, AtomMOF delivers four key innovations: (1) an all‑atom, unconstrained generative model that directly predicts both coordinates and lattice parameters; (2) a variational flow‑matching training objective that is simple, stable, and computationally efficient; (3) a physics‑aware sampling enhancement via Feynman‑Kac steering with a learned interatomic potential; and (4) a demonstrated scaling behavior that points to future improvements with larger models. By jointly modeling MOF hosts and adsorbates, AtomMOF bridges a critical gap between crystal structure prediction and adsorption property estimation, offering a fast, accurate alternative to expensive DFT‑based relaxation and GCMC sampling pipelines. The publicly released code and datasets further enable the community to build upon this foundation for accelerated materials discovery in gas separation, catalysis, and beyond.

Comments & Academic Discussion

Loading comments...

Leave a Comment