Optimizing Few-Step Generation with Adaptive Matching Distillation

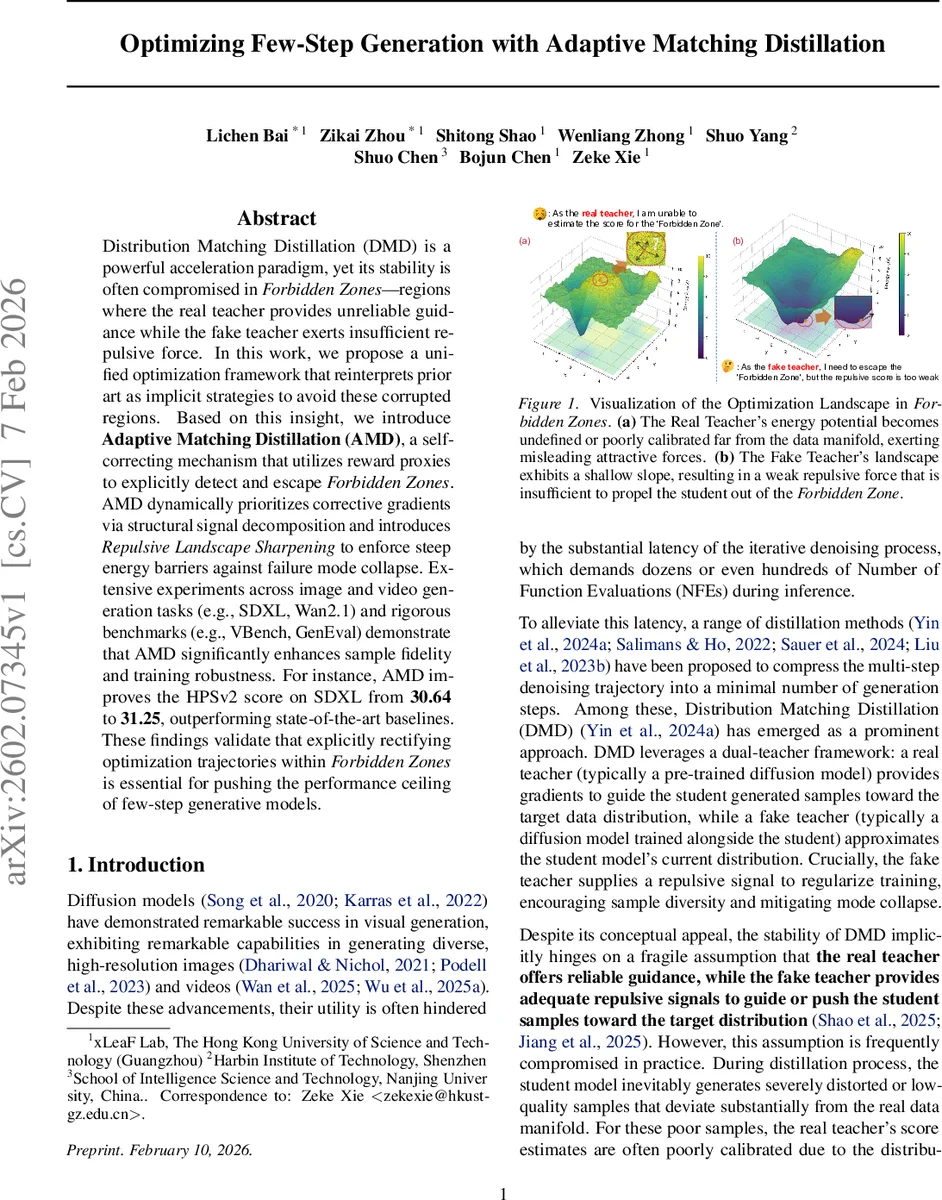

Distribution Matching Distillation (DMD) is a powerful acceleration paradigm, yet its stability is often compromised in Forbidden Zone, regions where the real teacher provides unreliable guidance while the fake teacher exerts insufficient repulsive force. In this work, we propose a unified optimization framework that reinterprets prior art as implicit strategies to avoid these corrupted regions. Based on this insight, we introduce Adaptive Matching Distillation (AMD), a self-correcting mechanism that utilizes reward proxies to explicitly detect and escape Forbidden Zones. AMD dynamically prioritizes corrective gradients via structural signal decomposition and introduces Repulsive Landscape Sharpening to enforce steep energy barriers against failure mode collapse. Extensive experiments across image and video generation tasks (e.g., SDXL, Wan2.1) and rigorous benchmarks (e.g., VBench, GenEval) demonstrate that AMD significantly enhances sample fidelity and training robustness. For instance, AMD improves the HPSv2 score on SDXL from 30.64 to 31.25, outperforming state-of-the-art baselines. These findings validate that explicitly rectifying optimization trajectories within Forbidden Zones is essential for pushing the performance ceiling of few-step generative models.

💡 Research Summary

The paper addresses a critical instability in Distribution Matching Distillation (DMD), a popular technique for compressing multi‑step diffusion models into a few inference steps. The authors identify “Forbidden Zones” as regions where the real teacher’s score becomes unreliable (high energy beyond its empirical support) while the fake teacher’s repulsive force vanishes, causing the student model to become trapped in low‑quality samples. Existing DMD variants (DMD2, D‑DMD, MagicDist, etc.) implicitly avoid these zones but lack explicit detection or dynamic correction mechanisms.

To solve this, the authors propose Adaptive Matching Distillation (AMD), a self‑correcting framework that (1) uses a pre‑trained reward model to flag samples with low reward as entering a Forbidden Zone, (2) trains the fake teacher asymmetrically so that it exerts stronger, targeted repulsion on those flagged samples, and (3) prioritizes the repulsive component over the attractive component via a structural decomposition of the gradient field. The weighting factors α (repulsion) and β (attraction) are continuously adjusted based on a reward‑aware advantage signal, ensuring that inside a Forbidden Zone the repulsive force dominates while outside the normal pull‑push balance is restored.

A further contribution is Repulsive Landscape Sharpening (RLS), which augments the fake teacher’s energy function with a gradient‑norm regularizer, creating steep energy barriers around high‑energy regions. This eliminates the “gradient vacuum” that otherwise leaves the student without useful direction.

Mathematically, the standard DMD loss L_DMD = –E

Comments & Academic Discussion

Loading comments...

Leave a Comment