Diabetic Retinopathy Lesion Segmentation through Attention Mechanisms

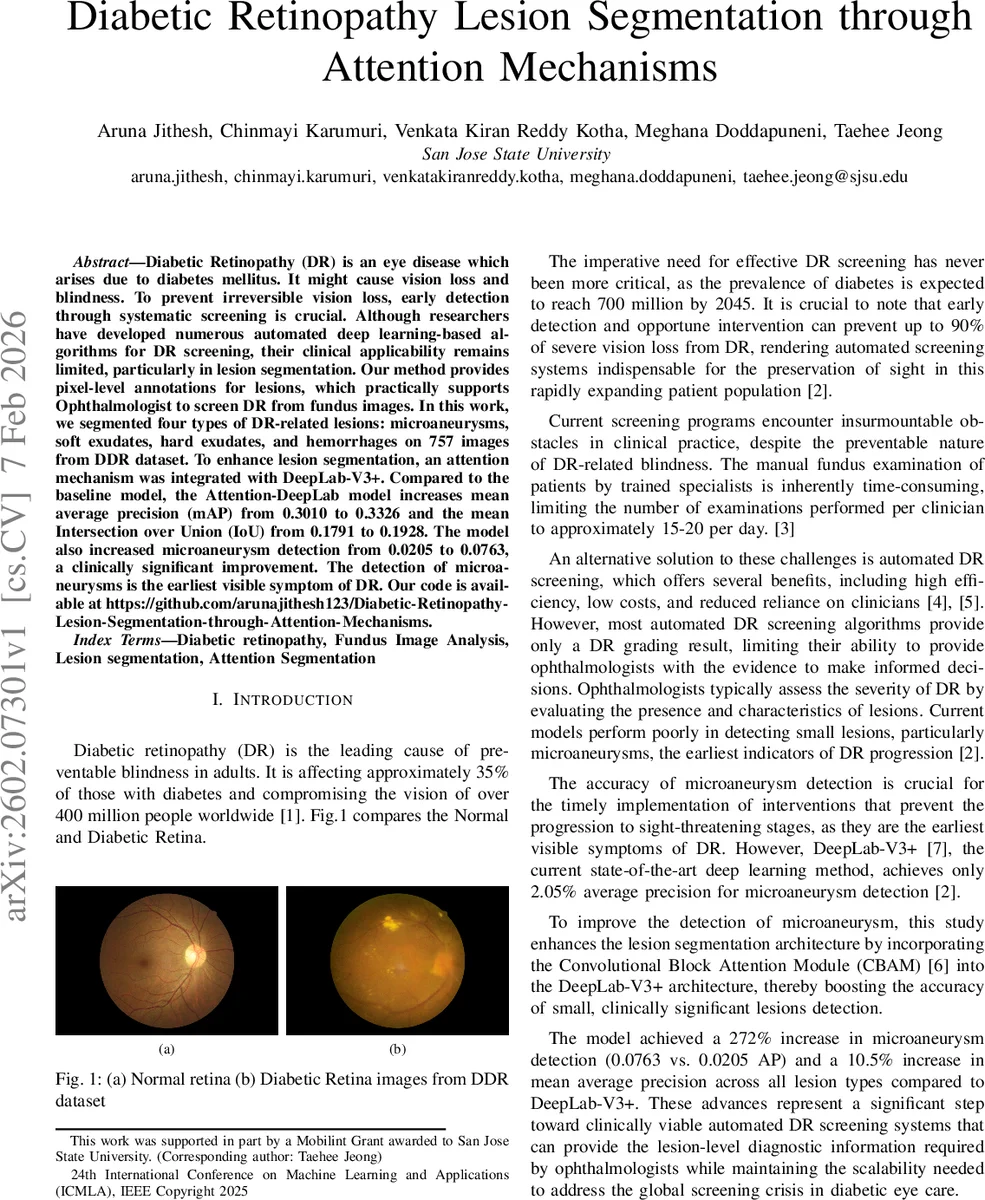

Diabetic Retinopathy (DR) is an eye disease that arises as a complication of diabetes mellitus and can cause vision loss and blindness. To prevent irreversible vision loss, early detection through systematic screening is crucial. Although researchers have developed numerous automated deep learning-based algorithms for DR screening, their clinical applicability remains limited, particularly in lesion segmentation. Our method provides pixel-level lesion annotations that can help ophthalmologists screen for DR in fundus images. In this work, we segmented four types of DR-related lesions (microaneurysms, soft exudates, hard exudates, and hemorrhages) on 757 images from the DDR dataset. To enhance lesion segmentation, an attention mechanism was integrated with DeepLab-V3+. Compared to the baseline model, the Attention-DeepLab model increases mean average precision (mAP) from 0.3010 to 0.3326 and mean Intersection over Union (IoU) from 0.1791 to 0.1928. The model also increased microaneurysm detection AP from 0.0205 to 0.0763. This is a clinically significant improvement because microaneurysms are the earliest visible sign of DR.

💡 Research Summary

Diabetic retinopathy (DR) is a leading cause of preventable blindness, and early detection of its earliest signs—particularly micro‑aneurysms (MAs)—is crucial for timely intervention. While many deep‑learning approaches exist for DR grading, few provide pixel‑level lesion segmentation, limiting their clinical utility. This paper addresses that gap by augmenting the state‑of‑the‑art semantic segmentation network DeepLab‑V3+ with a Convolutional Block Attention Module (CBAM), creating an “Attention‑DeepLab” architecture specifically tuned for the detection of small retinal lesions.

The authors used the DDR dataset, selecting a subset of 757 fundus images that contain pixel‑wise annotations for four lesion types: micro‑aneurysms, soft exudates, hard exudates, and hemorrhages. The data were split into 384 training, 150 validation, and 226 test images. The authors used a comprehensive augmentation pipeline to mitigate the extreme class imbalance (lesions occupy a tiny fraction of pixels) and to improve robustness. It includes random flips, rotations, scaling, hue‑saturation‑value shifts, brightness/contrast adjustments, gamma correction, Gaussian noise and blur. It also includes elastic, grid, and optical distortions, which are intended to simulate realistic acquisition variability while preserving the morphology of tiny lesions. All images were resized to 512 × 512 and normalized using ImageNet statistics.
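The resizing and normalization steps can be sketched in NumPy as below. This is a minimal illustration under stated assumptions, not the authors' pipeline:

- From the augmentation list, it shows only flips and 90° rotations, applied identically to the image and its lesion masks so the labels stay aligned.
- It uses nearest‑neighbour resizing for simplicity, because the interpolation method is not stated.
- It assumes a hypothetical (H, W, 4) mask layout with one channel per lesion type.

The mean and standard deviation are the standard ImageNet RGB statistics used with torchvision pretrained models.

```python
import numpy as np

# Standard ImageNet RGB statistics (as used with torchvision pretrained models).
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def resize_nearest(arr, size=512):
    """Nearest-neighbour resize of an (H, W, ...) array to (size, size, ...)."""
    h, w = arr.shape[:2]
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    return arr[rows][:, cols]


def flip_rotate(image, mask, rng):
    """Apply the same random flips and 90-degree rotation to image and mask."""
    if rng.random() < 0.5:
        image, mask = image[:, ::-1], mask[:, ::-1]  # horizontal flip
    if rng.random() < 0.5:
        image, mask = image[::-1], mask[::-1]        # vertical flip
    k = int(rng.integers(0, 4))                      # 0 to 3 quarter turns
    return np.rot90(image, k), np.rot90(mask, k)


def preprocess(image, mask, rng=None, size=512):
    """Resize, optionally augment, then normalize an RGB uint8 image.

    image: (H, W, 3) uint8; mask: (H, W, 4) binary, one channel per lesion
    type (hypothetical layout). Returns a float32 image and the aligned mask.
    """
    image, mask = resize_nearest(image, size), resize_nearest(mask, size)
    if rng is not None:
        image, mask = flip_rotate(image, mask, rng)
    x = image.astype(np.float32) / 255.0
    x = (x - IMAGENET_MEAN) / IMAGENET_STD
    return np.ascontiguousarray(x), np.ascontiguousarray(mask)
```

Photometric augmentations (HSV shifts, brightness/contrast, gamma, noise, blur) would touch only the image. Geometric ones (scaling, elastic, grid, optical distortion) must warp the masks with the same parameters.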

The Attention‑DeepLab model inserts two CBAM blocks into the DeepLab‑V3+ architecture. The first CBAM follows the Atrous Spatial Pyramid Pooling (ASPP) module and operates on high‑level semantic features (256 channels, 16 × 16 resolution). It first refines “what” information via channel‑wise attention and then “where” via spatial attention. The second CBAM is placed in the decoder after a 1 × 1 convolution that reduces the low‑level features from 256 to 48 channels (128 × 128 resolution), and it focuses on fine‑grained spatial details and boundary precision. This dual‑attention strategy lets the network enhance the semantic discrimination of lesions while preserving accurate boundary localization. Both are essential for detecting micro‑aneurysms that may span only a few pixels.
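A forward pass of a CBAM block can be sketched in NumPy to show the channel‑then‑spatial ordering and the tensor shapes at the two insertion points described above. The sketch rests on these assumptions:

- Weights are random and untrained.
- The reduction ratio of 16 and the 7 × 7 spatial kernel are the defaults from the original CBAM paper, since the summary does not state the values used here.
- Biases and batch normalization are omitted.
- Features use a (C, H, W) layout.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class CBAM:
    """NumPy forward pass of CBAM: channel attention, then spatial attention."""

    def __init__(self, channels, reduction=16, kernel_size=7, seed=0):
        rng = np.random.default_rng(seed)
        hidden = max(channels // reduction, 1)
        # Shared two-layer MLP for channel attention (random, untrained, no biases).
        self.w1 = rng.normal(0.0, 0.1, (hidden, channels))
        self.w2 = rng.normal(0.0, 0.1, (channels, hidden))
        # Conv kernel over the 2 pooled maps (mean, max) for spatial attention.
        self.kernel = rng.normal(0.0, 0.1, (2, kernel_size, kernel_size))
        self.pad = kernel_size // 2

    def _mlp(self, v):
        return self.w2 @ np.maximum(self.w1 @ v, 0.0)  # FC -> ReLU -> FC

    def channel_attention(self, x):
        # x: (C, H, W). Pool over space and score each channel ("what"): (C, 1, 1).
        avg, mx = x.mean(axis=(1, 2)), x.max(axis=(1, 2))
        return sigmoid(self._mlp(avg) + self._mlp(mx))[:, None, None]

    def spatial_attention(self, x):
        # Pool over channels, then a same-padded k x k conv ("where"): (1, H, W).
        pooled = np.stack([x.mean(axis=0), x.max(axis=0)])  # (2, H, W)
        p = self.pad
        padded = np.pad(pooled, ((0, 0), (p, p), (p, p)))
        h, w = x.shape[1:]
        out = np.zeros((h, w))
        k = self.kernel.shape[1]
        for i in range(k):
            for j in range(k):
                out += np.einsum("c,chw->hw", self.kernel[:, i, j],
                                 padded[:, i:i + h, j:j + w])
        return sigmoid(out)[None]

    def __call__(self, x):
        x = x * self.channel_attention(x)      # refine channels first
        return x * self.spatial_attention(x)   # then refine locations


# Shapes from the text: after ASPP and in the decoder.
aspp_cbam = CBAM(channels=256)     # applied to 256 x 16 x 16 features
decoder_cbam = CBAM(channels=48)   # applied to 48 x 128 x 128 features
```

Both attention maps lie in (0, 1), so CBAM only rescales features. It never changes their shape, which is why it can be dropped into the encoder head and decoder without altering the rest of DeepLab‑V3+. In a real model each block would be a trainable PyTorch module.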

Training was performed on an NVIDIA A100 GPU using PyTorch, the Adam optimizer (β₁ = 0.9, β₂ = 0.999), an initial learning rate of 1 × 10⁻⁴ with ReduceLROnPlateau scheduling, batch size = 4, and early stopping after 15 epochs. The loss function is a weighted sum of four components: binary cross‑entropy, focal loss (α = 0.25, γ = 2.0), Dice loss, and a boundary‑aware loss that multiplies BCE by an exponential term of the ground‑truth gradient (θ = 1.5). Weights were set to w_dice = 1.0, w_bce = 0.5, w_focal = 1.0, w_boundary = 0.5, based on empirical validation.
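The four‑term loss can be sketched in NumPy for a single lesion channel, where `p` holds the predicted probabilities and `y` the binary ground truth. The weights, α, γ, and θ come from the text. Everything else is an assumption:

- The exact boundary term is not specified. Here the per‑pixel BCE is multiplied by exp(θ · |∇y|), with |∇y| computed by `np.gradient`.
- Dice uses a smoothing constant of 1.
- A loss over all four lesion types would presumably average this over the channels.

```python
import numpy as np

EPS = 1e-7  # clipping to keep log() finite


def bce_map(p, y):
    """Per-pixel binary cross-entropy."""
    p = np.clip(p, EPS, 1 - EPS)
    return -(y * np.log(p) + (1 - y) * np.log(1 - p))


def focal_loss(p, y, alpha=0.25, gamma=2.0):
    """Focal loss: down-weights easy pixels via (1 - p_t) ** gamma."""
    p = np.clip(p, EPS, 1 - EPS)
    p_t = np.where(y == 1, p, 1 - p)
    alpha_t = np.where(y == 1, alpha, 1 - alpha)
    return float(np.mean(-alpha_t * (1 - p_t) ** gamma * np.log(p_t)))


def dice_loss(p, y, smooth=1.0):
    """Soft Dice loss; smoothing avoids 0/0 on lesion-free images."""
    inter = np.sum(p * y)
    return float(1 - (2 * inter + smooth) / (np.sum(p) + np.sum(y) + smooth))


def boundary_loss(p, y, theta=1.5):
    """Hypothetical boundary term: BCE weighted by exp(theta * |grad y|)."""
    gy, gx = np.gradient(y.astype(float))   # nonzero only near lesion edges
    weight = np.exp(theta * np.hypot(gx, gy))
    return float(np.mean(weight * bce_map(p, y)))


def combined_loss(p, y, w_dice=1.0, w_bce=0.5, w_focal=1.0, w_boundary=0.5):
    """L = w_dice*Dice + w_bce*BCE + w_focal*Focal + w_boundary*Boundary."""
    return (w_dice * dice_loss(p, y)
            + w_bce * float(np.mean(bce_map(p, y)))
            + w_focal * focal_loss(p, y)
            + w_boundary * boundary_loss(p, y))
```

Each term contributes something different:

- Dice is insensitive to the huge background area, which matters when lesions cover a tiny fraction of pixels.
- Focal loss suppresses the many easy background pixels.
- The boundary weight, which is at least 1 everywhere, adds extra penalty exactly where lesion contours lie.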

Evaluation metrics included Intersection over Union (IoU), average precision (AP) across IoU thresholds from 0.5 to 0.95, and mean average precision (mAP) across lesion classes. On the test set, the baseline DeepLab‑V3+ achieved mAP = 0.3010 and mean IoU = 0.1791, while a standard U‑Net obtained mAP = 0.2391 and mean IoU = 0.1757. The proposed Attention‑DeepLab improved overall performance to mAP = 0.3326 (+10.5 %) and mean IoU = 0.1928 (+7.6 %). The most striking gain was for micro‑aneurysms, whose AP rose from 0.0205 to 0.0763, a 272 % increase. The authors present this as moving micro‑aneurysm detection from near‑random toward a level that could meaningfully support clinical decision‑making. Hemorrhage detection also showed notable improvement. Visual examples show that the model produces cleaner, more accurate lesion contours, with color‑coded masks distinguishing each lesion type.
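The IoU metric and the relative gains quoted above can be checked with a short sketch. Treating two empty masks as a perfect match (IoU = 1) is a common convention assumed here, not something the summary specifies. AP across IoU thresholds is not reproduced because its exact protocol is not described.

```python
import numpy as np


def iou(pred, gt):
    """IoU of two binary masks; two empty masks count as a perfect match."""
    pred, gt = pred.astype(bool), gt.astype(bool)
    union = np.logical_or(pred, gt).sum()
    if union == 0:
        return 1.0
    return float(np.logical_and(pred, gt).sum() / union)


def relative_gain(baseline, proposed):
    """Relative improvement of `proposed` over `baseline`, in percent."""
    return 100.0 * (proposed - baseline) / baseline


print(round(relative_gain(0.3010, 0.3326), 1))  # mAP
print(round(relative_gain(0.1791, 0.1928), 1))  # mean IoU
print(round(relative_gain(0.0205, 0.0763)))     # micro-aneurysm AP
```

On the reported numbers this gives about 10.5 % for mAP, 7.6 % for mean IoU, and 272 % for micro‑aneurysm AP. The last figure is a ratio of roughly 3.7 between the new and baseline AP.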

The authors discuss several limitations. The annotated subset is relatively small, which may restrict generalization to broader populations and to other imaging devices. The batch size was limited by GPU memory, potentially affecting training stability. Moreover, the study does not include external validation on independent DR datasets, leaving open the question of cross‑dataset robustness. Future work could explore larger multi‑center datasets, incorporate multi‑scale feature pyramids, or replace the binary cross‑entropy component with more sophisticated uncertainty‑aware losses. Real‑time inference optimization would also be necessary for deployment in screening programs.

In summary, this paper demonstrates that integrating lightweight attention modules into a powerful segmentation backbone can substantially boost the detection of clinically critical, tiny retinal lesions. The Attention‑DeepLab model raises micro‑aneurysm AP roughly 3.7‑fold (from 0.0205 to 0.0763) and delivers modest overall gains in mAP and mean IoU. It thereby moves automated DR screening closer to a tool that not only grades disease severity but also provides lesion‑level evidence that ophthalmologists can trust and act upon.
