Progressive Searching for Retrieval in RAG

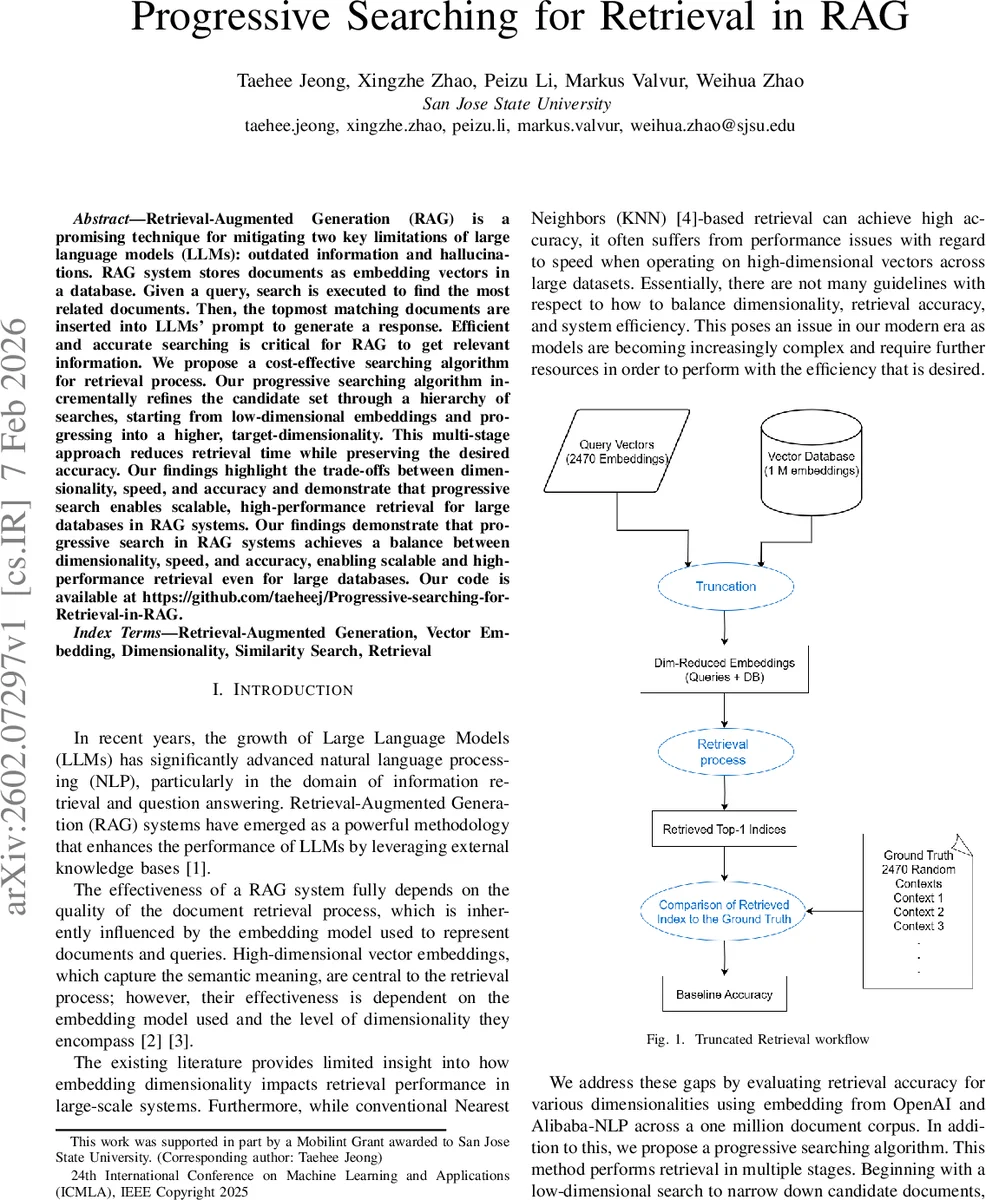

Retrieval Augmented Generation (RAG) is a promising technique for mitigating two key limitations of large language models (LLMs): outdated information and hallucinations. RAG system stores documents as embedding vectors in a database. Given a query, search is executed to find the most related documents. Then, the topmost matching documents are inserted into LLMs’ prompt to generate a response. Efficient and accurate searching is critical for RAG to get relevant information. We propose a cost-effective searching algorithm for retrieval process. Our progressive searching algorithm incrementally refines the candidate set through a hierarchy of searches, starting from low-dimensional embeddings and progressing into a higher, target-dimensionality. This multi-stage approach reduces retrieval time while preserving the desired accuracy. Our findings demonstrate that progressive search in RAG systems achieves a balance between dimensionality, speed, and accuracy, enabling scalable and high-performance retrieval even for large databases.

💡 Research Summary

The paper addresses a central bottleneck in Retrieval‑Augmented Generation (RAG) systems: the cost of searching a large vector database to retrieve the most relevant documents for a language model’s prompt. While high‑dimensional embeddings (3‑4 k dimensions) capture rich semantic information, exhaustive nearest‑neighbor search over a million documents becomes prohibitively slow for real‑time applications. To mitigate this, the authors propose a “Progressive Searching” algorithm that gradually refines the candidate set through a hierarchy of increasingly higher‑dimensional searches.

The method works as follows. First, the entire document collection is reduced to a low dimensionality (e.g., 64 or 128) by simple truncation of the embedding vectors. A K‑Nearest‑Neighbor (K‑NN) search is performed on this low‑dimensional space, returning a relatively large set of candidate documents (parameter K). In a loop, the dimensionality is doubled (128 → 256 → 512 …) and the candidate set is re‑searched at the new dimension. At each step the value of K is halved (with a lower bound of 1) to keep the candidate pool shrinking. The process stops when the target (maximum) dimension—typically the original embedding size of 3072 for OpenAI’s model or 3584 for Alibaba‑NLP’s model—is reached. A final 1‑NN search on the remaining candidates yields the document that will be fed to the LLM.

The authors evaluate the approach on a realistic RAG scenario. They use the dbpedia‑openai‑1M‑1536‑angular dataset (1 M English documents) and generate 2 470 query‑document pairs via ChatGPT‑4o. Two embedding models are employed: OpenAI’s text‑embedding‑3‑large (3072 D) and Alibaba‑NLP’s gte‑Qwen2‑7B‑instruct (3584 D). For baseline comparison they use a single‑stage “truncated” search (exact 1‑NN on the whole collection at a fixed reduced dimension) and, for completeness, HNSW‑based graph search. Dimensionality reduction is performed by simple truncation rather than PCA because truncation offers comparable accuracy with far lower computational overhead.

Key findings:

- Accuracy improves monotonically with dimensionality, reaching ~95 % Top‑1 accuracy at the full embedding size for both models.

- The progressive method achieves comparable or slightly higher accuracy than the baseline while dramatically reducing median query time. For example, with the Alibaba‑NLP model, a configuration of start‑dim = 128, max‑dim = 3584, and initial K = 64 yields 95.02 % accuracy in 12.2 seconds, versus 99.4 seconds for the single‑stage 3584‑D search—a speed‑up of roughly eightfold.

- At intermediate dimensions (e.g., 2048), the progressive approach is about five times faster while maintaining >94.8 % accuracy.

- The runtime profile shows that the initial low‑dimensional scan dominates total latency; subsequent higher‑dimensional passes are cheap because the candidate pool has already been pruned.

The paper also discusses limitations. Experiments were conducted on Google Colab’s CPU environment, so network latency and lack of GPU acceleration may affect absolute timings. The method relies on manually chosen hyper‑parameters (starting dimension, initial K, step size), and the authors acknowledge the need for automated tuning or meta‑learning. Moreover, only linear truncation was explored; non‑linear dimensionality reduction (e.g., autoencoders) could preserve more semantic information at low dimensions and potentially further improve the trade‑off.

In conclusion, progressive searching offers a practical, low‑overhead solution to the scalability problem of vector‑based retrieval in RAG pipelines. By exploiting a cascade of low‑to‑high dimensional searches, it preserves the semantic fidelity of high‑dimensional embeddings while delivering query latencies compatible with real‑time LLM‑driven applications such as chatbots, question‑answering systems, and large‑scale knowledge‑augmented generation. The approach is simple to implement, requires no specialized indexing structures beyond basic K‑NN, and can be readily integrated into existing RAG frameworks.

Comments & Academic Discussion

Loading comments...

Leave a Comment