Mapping the Design Space of User Experience for Computer Use Agents

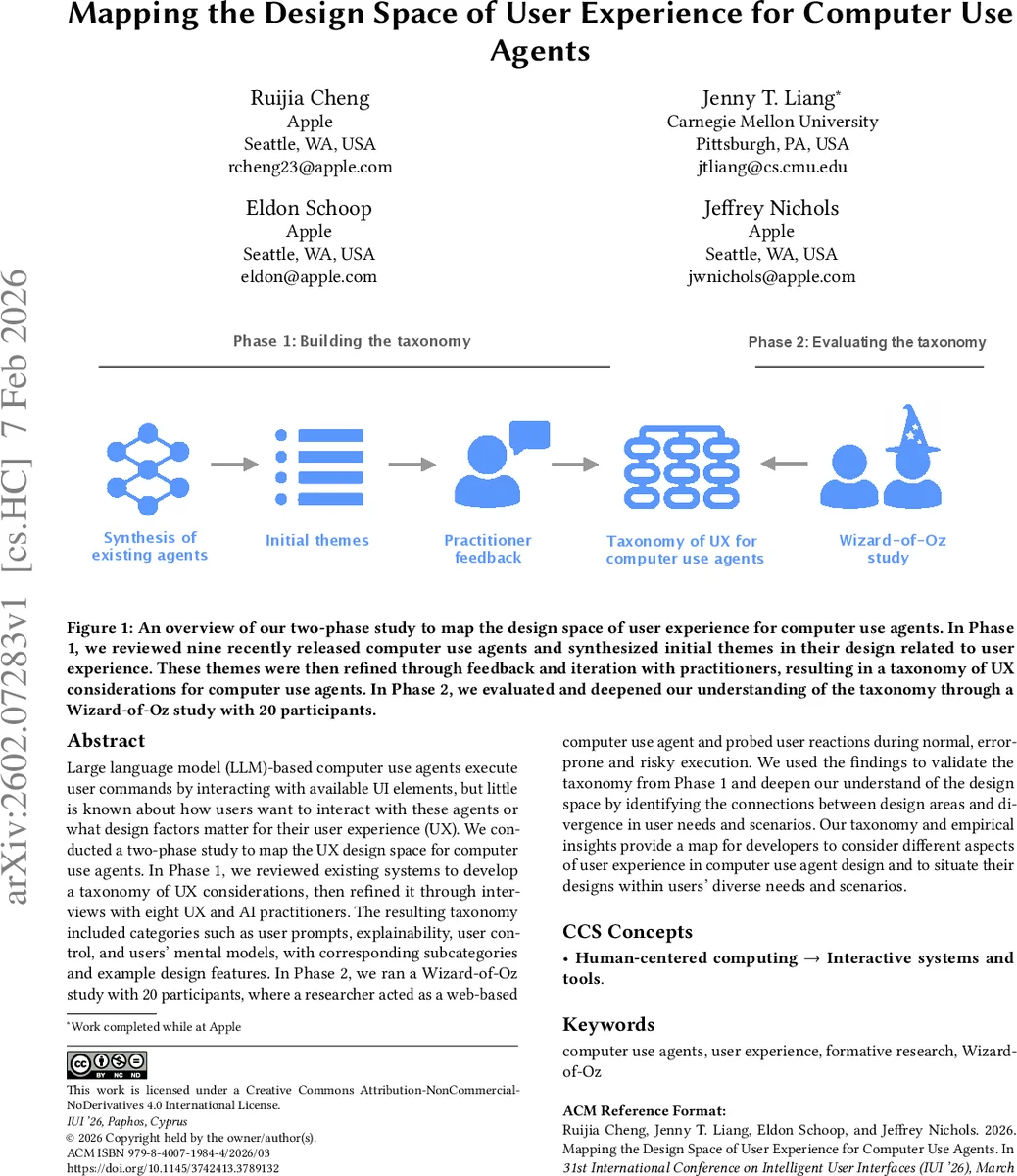

Large language model (LLM)-based computer use agents execute user commands by interacting with available UI elements, but little is known about how users want to interact with these agents or what design factors matter for their user experience (UX). We conducted a two-phase study to map the UX design space for computer use agents. In Phase 1, we reviewed existing systems to develop a taxonomy of UX considerations, then refined it through interviews with eight UX and AI practitioners. The resulting taxonomy included categories such as user prompts, explainability, user control, and users’ mental models, with corresponding subcategories and example design features. In Phase 2, we ran a Wizard-of-Oz study with 20 participants, where a researcher acted as a web-based computer use agent and probed user reactions during normal, error-prone, and risky execution. We used the findings to validate the taxonomy from Phase 1 and to deepen our understanding of the design space by identifying the connections between design areas and divergence in user needs and scenarios. Our taxonomy and empirical insights provide a map for developers to consider different aspects of user experience in computer use agent design and to situate their designs within users’ diverse needs and scenarios.

💡 Research Summary

This paper addresses the largely unexplored user‑experience (UX) design space of large‑language‑model (LLM)‑driven computer‑use agents—software that fulfills user commands by directly interacting with graphical user interfaces. The authors conduct a two‑phase formative study to (1) build a comprehensive taxonomy of UX considerations and (2) validate and enrich that taxonomy through real‑world user interactions.

In Phase 1 the researchers surveyed nine state‑of‑the‑art agents released in 2024‑2025 across desktop, browser, and mobile platforms. For each system they examined demo videos, installed the agents when possible, and took field notes on how the agents solicited input, displayed progress, handled errors, and communicated intent. From this analysis they derived an initial taxonomy comprising five high‑level categories: User Query, Explainability of Agent Activities, User Control, End‑of‑Task Indicators, and Task Preview, each with several sub‑categories and concrete design features (e.g., multimodal prompts, step‑by‑step visual highlights, confirmation dialogs).

To refine the taxonomy, the authors interviewed eight UX and AI practitioners from a large technology company. Participants evaluated the relevance of each category, identified missing dimensions, and suggested refinements. The interviews added a new major category, Mental Model, which concerns how users understand the agent’s internal pipeline (perception, exploration, planning, execution). They also expanded “User Control” from simple pause/cancel actions into a multi-level control spectrum (automatic, semi-automatic, manual) that can be adjusted dynamically according to task risk. The final taxonomy consists of four top-level categories (User Query, Explainability, User Control, Mental Model), each with 3-5 sub-categories, plus a set of illustrative design features drawn from existing agents or proposed as speculative extensions.
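To make the risk-dependent control spectrum concrete, here is a minimal Python sketch. It assumes, purely for illustration, that task risk is summarized as a single score between 0 and 1. The names `ControlMode` and `select_mode` and the threshold values are hypothetical and do not come from the paper, which does not prescribe a risk metric.

```python
from enum import Enum


class ControlMode(Enum):
    """Control levels named in the refined User Control category."""
    AUTOMATIC = "automatic"            # agent acts without asking
    SEMI_AUTOMATIC = "semi-automatic"  # agent pauses for approval at risky steps
    MANUAL = "manual"                  # user approves every step


# Hypothetical ordering from least to most user involvement.
_ORDER = [ControlMode.AUTOMATIC, ControlMode.SEMI_AUTOMATIC, ControlMode.MANUAL]


def select_mode(risk: float, user_preference: ControlMode,
                low: float = 0.3, high: float = 0.7) -> ControlMode:
    """Map a task-risk score in [0, 1] to a control mode.

    `low` and `high` are illustrative thresholds (assumptions, not study
    values). The user's preference is a floor: risk can raise the level of
    user involvement but never lower it below what the user chose.
    """
    if not 0.0 <= risk <= 1.0:
        raise ValueError("risk must be in [0, 1]")
    if risk >= high:
        by_risk = ControlMode.MANUAL
    elif risk >= low:
        by_risk = ControlMode.SEMI_AUTOMATIC
    else:
        by_risk = ControlMode.AUTOMATIC
    # Pick whichever mode demands more user involvement.
    return max(by_risk, user_preference, key=_ORDER.index)
```

For example, a high-risk task (score 0.9) yields `ControlMode.MANUAL` even if the user prefers automatic mode, while a low-risk task keeps whatever level the user selected. Treating the preference as a floor reflects the idea that risk should add oversight, not remove oversight the user asked for; a real system might instead let users lower the level explicitly.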

Phase 2 was a Wizard-of-Oz experiment in which 20 participants interacted with a web-based computer-use agent simulated by a researcher. Participants issued natural-language commands, and the researcher, acting as the “agent,” performed the actions in a browser and paused periodically to probe participants’ reactions. The study covered three scenarios: normal execution, error-prone execution, and risky execution (e.g., deleting personal data). Findings showed:

- Task Preview & End‑of‑Task Signals – Users strongly preferred to see a preview of the planned actions and an explicit completion indicator, which helped them gauge progress and intervene if needed.

- Explainability in Error Situations – When the agent made a mistake, participants demanded a clear, step‑by‑step explanation of what went wrong and concrete recovery options (retry, revert, edit plan). Transparency directly affected trust.

- Control Granularity for Risky Tasks – For high‑risk operations users wanted the ability to approve each step, view which UI elements would be touched, and understand data access implications before the agent proceeded.

- Mental Model Support – Visualizations of the agent’s reasoning pipeline (e.g., flowcharts of perception → planning → execution) enabled participants to predict behavior, spot inconsistencies, and feel more in control.
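The preview, approval, error-recovery, and completion behaviors participants asked for can be combined in a single execution loop. The Python sketch below is illustrative only: `Step`, `RunResult`, `run_plan`, and the `execute`/`approve` callbacks are hypothetical names, and the study itself used a human wizard rather than code.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    description: str     # human-readable intent shown to the user
    target_element: str  # UI element the step will touch
    risky: bool = False  # e.g. deleting data or submitting a payment


@dataclass
class RunResult:
    completed: bool
    log: List[str]
    recovery_options: List[str]


# Recovery choices participants asked for after an error.
RECOVERY_OPTIONS = ["retry", "revert", "edit plan"]


def run_plan(steps: List[Step],
             execute: Callable[[Step], bool],
             approve: Callable[[Step], bool]) -> RunResult:
    """Execute a plan with a preview, approval gates on risky steps,
    error explanations, and an explicit completion signal.

    `execute` performs one step and returns success; `approve` asks the
    user to confirm a risky step. Both are hypothetical host callbacks.
    """
    # Task preview: list every planned action and the element it touches.
    log = ["PREVIEW: " + "; ".join(
        f"{s.description} ({s.target_element})" for s in steps)]
    for i, step in enumerate(steps, start=1):
        if step.risky and not approve(step):
            log.append(f"STOPPED at step {i}: user declined '{step.description}'")
            return RunResult(False, log, ["edit plan"])
        if not execute(step):
            # Say which step failed and where, then offer recovery choices.
            log.append(f"FAILED at step {i}: '{step.description}' "
                       f"on {step.target_element}")
            return RunResult(False, log, list(RECOVERY_OPTIONS))
        log.append(f"DONE step {i}: {step.description}")
    # End-of-task indicator.
    log.append("TASK COMPLETE")
    return RunResult(True, log, [])
```

In a real agent, `approve` would likely render a confirmation dialog naming the step and its target element, matching participants' request to see which UI elements would be touched before a high-risk action proceeds.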

These empirical insights map neatly onto the taxonomy: “User Query” must accommodate multimodal, conversational input; “Explainability” requires both real‑time visual cues and post‑hoc rationales; “User Control” needs tiered intervention mechanisms; and “Mental Model” benefits from explicit pipeline visualizations and history browsing.

The authors synthesize several design recommendations:

- (a) Support flexible input modalities and let users choose between single-shot and turn-based interaction.

- (b) Provide step-wise visual highlights, logs, and intent explanations to maintain transparency.

- (c) Implement a dynamic control framework that can shift between autonomous, semi-autonomous, and manual modes based on task risk and user preference.

- (d) Expose the agent’s internal reasoning through visualizations and let users edit or replay past plans.

- (e) Always include clear task-completion signals and optional task previews.
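Recommendation (d) implies that plans persist after execution so users can revisit them. A minimal Python sketch of such a history, assuming plans are stored as ordered lists of step descriptions (the class `PlanHistory` and its methods are hypothetical), keeps every edited version so earlier plans remain available:

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PlanHistory:
    """Hypothetical versioned store of past plans, keyed by task name."""
    versions: Dict[str, List[List[str]]] = field(default_factory=dict)

    def record(self, task: str, steps: List[str]) -> None:
        # Copy the list so later changes by the caller do not alter history.
        self.versions.setdefault(task, []).append(list(steps))

    def latest(self, task: str) -> List[str]:
        return self.versions[task][-1]

    def edit(self, task: str, index: int, new_step: str) -> List[str]:
        """Replace one step and store the result as a new version."""
        edited = list(self.latest(task))
        edited[index] = new_step
        self.record(task, edited)
        return edited

    def replay_from(self, task: str, index: int) -> List[str]:
        """Steps of the latest version to re-run, starting at `index` (0-based)."""
        return self.latest(task)[index:]
```

Keeping old versions instead of overwriting them means an edit never destroys the plan the user originally approved, and replaying from a given step avoids repeating actions that already succeeded.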

By deriving the taxonomy from existing systems and practitioner interviews and then validating it with end users, the paper offers a practical roadmap for designers of future computer-use agents. The work fills a gap in HCI research on agents that operate user interfaces and provides empirical grounding for trust calibration, safety mechanisms, and regulatory considerations for LLM-powered autonomous agents.
