AOrchestra: Automating Sub-Agent Creation for Agentic Orchestration

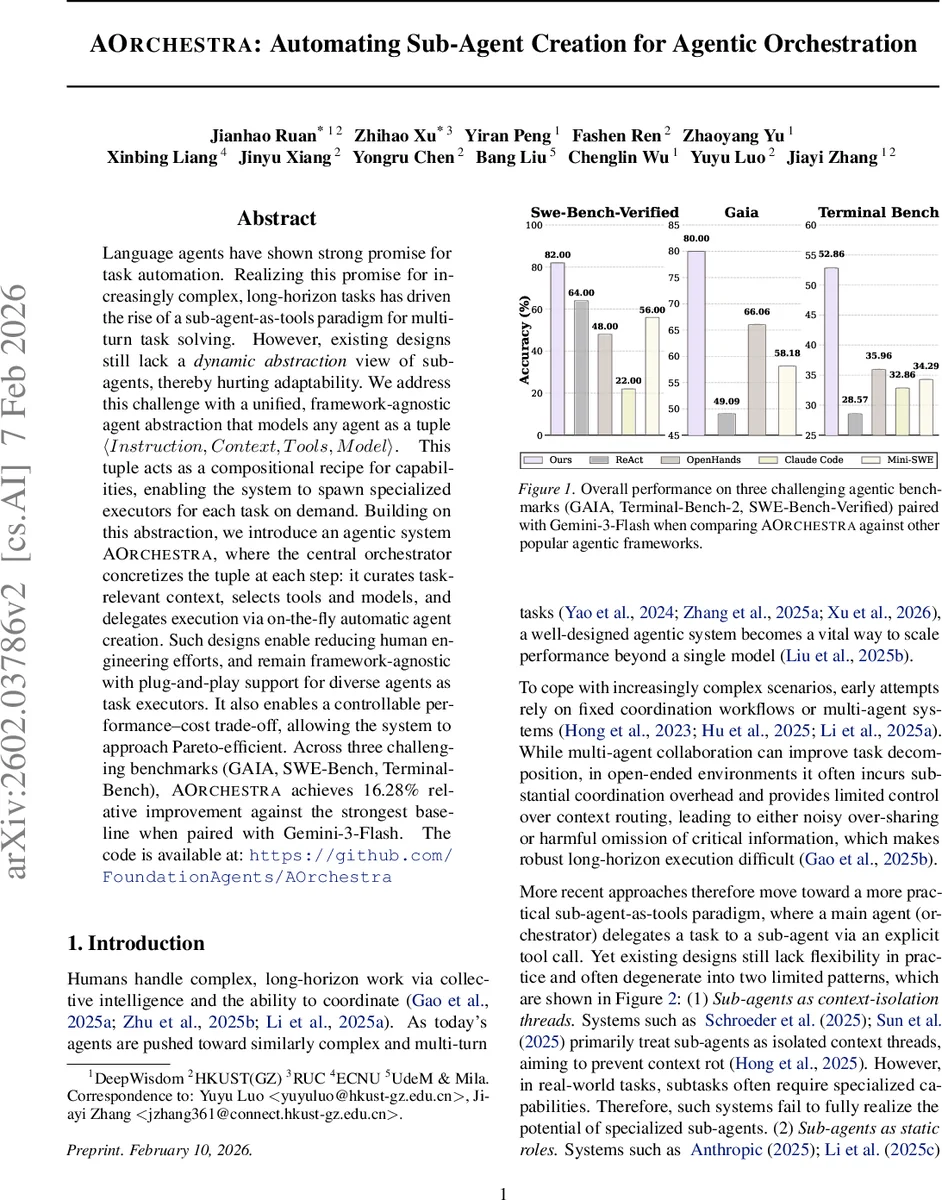

Language agents have shown strong promise for task automation. Realizing this promise for increasingly complex, long-horizon tasks has driven the rise of a sub-agent-as-tools paradigm for multi-turn task solving. However, existing designs still lack a dynamic abstraction of sub-agents, which hurts adaptability. We address this challenge with a unified, framework-agnostic agent abstraction that models any agent as a tuple ⟨Instruction, Context, Tools, Model⟩. This tuple acts as a compositional recipe for capabilities, enabling the system to spawn specialized executors for each task on demand. Building on this abstraction, we introduce AOrchestra, an agentic system in which the central orchestrator concretizes the tuple at each step: it curates task-relevant context, selects tools and models, and delegates execution via on-the-fly automatic agent creation. This design reduces human engineering effort and remains framework-agnostic, with plug-and-play support for diverse agents as task executors. It also enables a controllable performance-cost trade-off, allowing the system to approach Pareto efficiency. Across three challenging benchmarks (GAIA, SWE-Bench, Terminal-Bench), AOrchestra achieves a 16.28% relative improvement over the strongest baseline when paired with Gemini-3-Flash. The code is available at: https://github.com/FoundationAgents/AOrchestra

💡 Research Summary

The paper introduces AOrchestra, a novel agentic framework that treats sub‑agents as dynamically created, specialized executors rather than static, pre‑defined roles. The authors first identify two prevailing patterns in existing sub‑agent‑as‑tools systems: (1) sub‑agents as isolated context threads, which prevent context rot but lack task‑specific capabilities, and (2) sub‑agents as static roles, which provide specialization but are inflexible and require heavy human engineering. To overcome these limitations, AOrchestra abstracts any agent, including the main orchestrator and its sub‑agents, into a unified four‑tuple ⟨Instruction, Context, Tools, Model⟩. Instruction encodes the current objective and success criteria; Context supplies only the information most relevant to the sub‑task; Tools defines the permissible action space; Model selects the underlying LLM best suited to the task’s difficulty and tool set.
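The four‑tuple can be pictured as a small immutable record. The sketch below is only an illustration under stated assumptions: the class name `AgentSpec`, its field types, the `render_prompt` helper, and the model and tool names are all hypothetical and are not taken from the AOrchestra codebase.

```python
from __future__ import annotations

from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical container for <Instruction, Context, Tools, Model>."""

    instruction: str           # current objective and success criteria
    context: tuple[str, ...]   # only the snippets relevant to this sub-task
    tools: tuple[str, ...]     # names of the tools the agent may call
    model: str                 # identifier of the underlying LLM

    def render_prompt(self) -> str:
        """Flatten instruction, context, and tools into one prompt string."""
        lines = [f"Objective: {self.instruction}"]
        lines += [f"Context: {item}" for item in self.context]
        lines.append("Allowed tools: " + ", ".join(self.tools))
        return "\n".join(lines)


# Placeholder values; none of these names come from the paper.
base = AgentSpec(
    instruction="Find the failing test and report its name.",
    context=("Repository uses pytest.",),
    tools=("shell",),
    model="small-model",
)

# A different sub-task can reuse the recipe with other tools and another model.
harder = replace(base, tools=("shell", "editor"), model="large-model")

print(base.render_prompt())
```

Keeping the record frozen means each sub‑agent receives its own fixed recipe, and a variant for a harder sub‑task is built by copying rather than mutating a shared object.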

The orchestrator never takes direct environment actions. Its action space consists solely of Delegate(Φ) and Finish(y). When Delegate is invoked with a concrete tuple Φ, a new sub‑agent instance is instantiated on‑the‑fly, equipped with the specified model and tool set, and conditioned on the provided instruction and context. The sub‑agent executes the sub‑task, returning a structured observation that includes a concise result summary, any generated artifacts, and error logs if applicable. Finish terminates the interaction with the final answer. This clear separation of orchestration from execution enables (i) precise capability allocation per sub‑task, (ii) clean working contexts that mitigate context rot, and (iii) plug‑and‑play compatibility with any model or tool suite, making the system framework‑agnostic.
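The two‑action loop described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: `AgentSpec`, `Observation`, `orchestrate`, the step budget, and the toy policy and executor are all hypothetical stand‑ins, and a real policy would be an LLM call rather than a fixed rule.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Union


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical four-tuple handed to a freshly created sub-agent."""
    instruction: str
    context: tuple[str, ...]
    tools: tuple[str, ...]
    model: str


@dataclass(frozen=True)
class Delegate:
    spec: AgentSpec


@dataclass(frozen=True)
class Finish:
    answer: str


@dataclass(frozen=True)
class Observation:
    """Structured result returned by a sub-agent (field names are assumed)."""
    summary: str
    artifacts: tuple[str, ...] = ()
    error_log: str = ""


Action = Union[Delegate, Finish]
Policy = Callable[[str, list[Observation]], Action]
Executor = Callable[[AgentSpec], Observation]


def orchestrate(task: str, policy: Policy, run_sub_agent: Executor,
                max_steps: int = 8) -> str:
    """Alternate between choosing an action and running a sub-agent."""
    history: list[Observation] = []
    for _ in range(max_steps):
        action = policy(task, history)
        if isinstance(action, Finish):
            return action.answer
        # Delegate: instantiate a sub-agent from the tuple and record its result.
        history.append(run_sub_agent(action.spec))
    raise RuntimeError("step budget exhausted without a Finish action")


def toy_policy(task: str, history: list[Observation]) -> Action:
    # Stand-in policy: delegate once, then finish with the returned summary.
    if not history:
        spec = AgentSpec(f"Solve: {task}", (), ("calculator",), "small-model")
        return Delegate(spec)
    return Finish(history[-1].summary)


def toy_executor(spec: AgentSpec) -> Observation:
    # Stand-in for a real sub-agent run; it only echoes what it was given.
    return Observation(summary=f"done[{spec.model}]: {spec.instruction}")


answer = orchestrate("add 2 and 3", toy_policy, toy_executor)
print(answer)
```

Because the orchestrator only ever sees `Observation` records, swapping the executor for a different agent framework would not require changes to the loop itself, which is the sense in which the design is framework‑agnostic.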

AOrchestra can be used in a training‑free mode, where the orchestrator follows a heuristic policy, as well as in a learnable mode. The authors demonstrate two learning strategies: supervised fine‑tuning of the sub‑task decomposition and tuple synthesis, which yields an 11.51 % increase in GAIA pass@1, and cost‑aware routing via in‑context learning, which reduces average execution cost by 18.5 % while improving GAIA pass@1 by an additional 3.03 %. These results show that the orchestration policy can be optimized for both accuracy and efficiency.
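To make the performance‑cost trade‑off concrete, the sketch below uses a simple threshold rule: choose the cheapest model whose estimated success rate meets a target. This rule is a swapped‑in heuristic for illustration only; it is not the in‑context‑learning router evaluated in the paper, and the model names, costs, and success estimates are invented placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call: float   # arbitrary cost units (placeholder values)
    est_success: float     # assumed success-rate estimate in [0, 1]


def route(options: list[ModelOption], min_success: float) -> ModelOption:
    """Return the cheapest option meeting the target, else the strongest one."""
    eligible = [m for m in options if m.est_success >= min_success]
    if not eligible:
        # No model meets the target, so fall back to the most capable one.
        return max(options, key=lambda m: m.est_success)
    return min(eligible, key=lambda m: m.cost_per_call)


options = [
    ModelOption("small", cost_per_call=1.0, est_success=0.7),
    ModelOption("large", cost_per_call=5.0, est_success=0.9),
]

easy_choice = route(options, min_success=0.6)   # both qualify, cheaper wins
hard_choice = route(options, min_success=0.8)   # only the larger one qualifies
print(easy_choice.name, hard_choice.name)
```

Raising or lowering `min_success` moves the choice along the cost axis, which is one simple way to expose a controllable trade‑off to the orchestrator.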

Empirical evaluation spans three challenging benchmarks: GAIA (digital‑world tasks), SWE‑Bench‑Verified (coding tasks), and Terminal‑Bench 2.0 (bash environment). When paired with Gemini‑3‑Flash, AOrchestra consistently outperforms strong baselines such as ReAct, OpenHands, Claude Code, and other sub‑agent orchestration frameworks, achieving a 16.28 % relative improvement over the strongest baseline. The system also demonstrates favorable Pareto‑efficient trade‑offs between performance and cost.

In summary, AOrchestra advances the sub‑agent‑as‑tools paradigm by introducing a dynamic, tuple‑based abstraction that enables on‑demand specialization, context‑aware execution, and model‑tool flexibility. It reduces human engineering effort, improves robustness on long‑horizon tasks, and offers a clear path for further research into cost‑optimal orchestration, broader domain adaptation, and human‑agent collaborative workflows.
