ReasonEdit: Editing Vision-Language Models using Human Reasoning

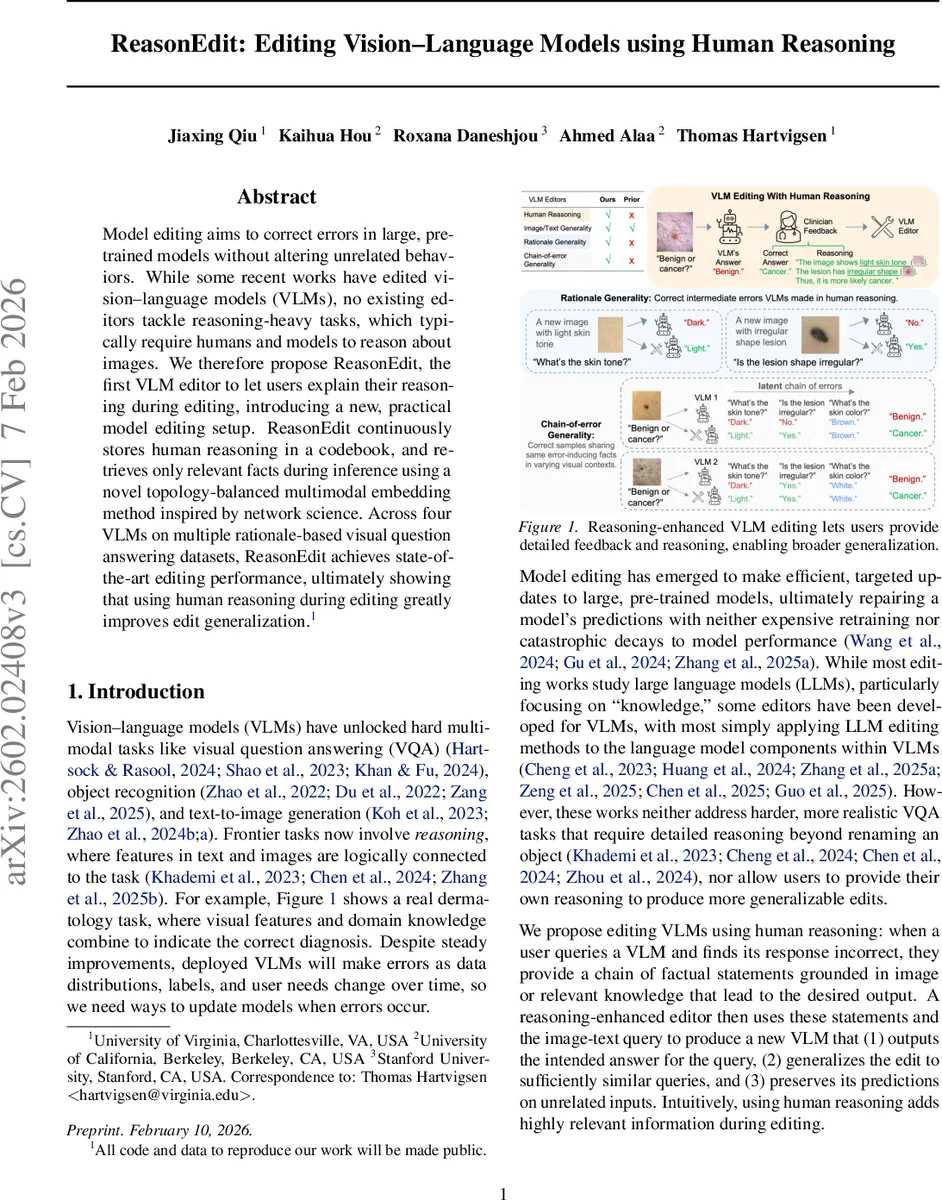

Model editing aims to correct errors in large, pretrained models without altering unrelated behaviors. While some recent works have edited vision-language models (VLMs), no existing editors tackle reasoning-heavy tasks, which typically require humans and models to reason about images. We therefore propose ReasonEdit, the first VLM editor to let users explain their reasoning during editing, introducing a new, practical model editing setup. ReasonEdit continuously stores human reasoning in a codebook, and retrieves only relevant facts during inference using a novel topology-balanced multimodal embedding method inspired by network science. Across four VLMs on multiple rationale-based visual question answering datasets, ReasonEdit achieves state-of-the-art editing performance, ultimately showing that using human reasoning during editing greatly improves edit generalization.

💡 Research Summary

ReasonEdit introduces a novel, retrieval‑based editing framework for vision‑language models (VLMs) that leverages human‑provided reasoning to correct model errors without updating model weights. Traditional VLM editors either adapt large‑language‑model (LLM) editing techniques to the language component of VLMs or focus on simple label‑correction tasks, leaving reasoning‑heavy visual question answering (VQA) untouched. ReasonEdit fills this gap by allowing users, when encountering an incorrect VLM response, to supply a chain of factual statements (the “reasoning”) together with visual evidence (image patches) that support each statement.

Each edit is formalized as a tuple (image i, question t, correct answer y⁺, reasoning r⁺), where r⁺ = {s₁,…,s_N} are step‑by‑step facts. During editing, ReasonEdit creates a discrete codebook C of key‑value pairs. Keys are multimodal embeddings E(i, t) of image‑question pairs, while values are natural‑language sentences. Two kinds of entries are added: (1) an answer entry with a templated sentence “The answer to question t about the image is y⁺.” (2) for each fact s_j, ReasonEdit pairs it with one or more image patches i_pj that visually instantiate the fact; the key becomes E(i_pj, s_j) and the value is the fact sentence s_j itself. If users do not provide patches, the system automatically proposes candidates by prompting the VLM with “Does the image show s_j?” and selecting patches with high likelihood and appropriate spatial granularity.

At inference time, a new query (i_new, t_new) is embedded, and a K‑nearest‑neighbors search is performed in the codebook’s embedding space. If the query is close enough to existing keys (distance below a percentile‑based threshold), the retrieved facts are concatenated and prepended to the original prompt, effectively providing the VLM with the missing reasoning context. Because no model parameters are altered, the original VLM’s performance on unrelated inputs remains intact, and the method scales to sequential, real‑time editing.

A critical contribution is the topology‑balanced multimodal embedding (E^dual) used for keys. The authors treat the set of embeddings as a weighted similarity graph and evaluate candidate embeddings using five network‑science metrics: vision modularity, language modularity, bimodal modularity, vision bias, and language bias. Modularity measures how well the graph aligns with partitions that group nodes by shared image or text identity; balanced embeddings avoid clustering solely by visual or textual similarity, which would impair retrieval. By selecting the embedding that maximizes a composite of these metrics, ReasonEdit achieves more accurate neighbor relationships, leading to better generalization of edits to semantically similar image‑question pairs.

To keep the codebook size manageable during continuous editing, a key‑merging procedure is introduced. For each new key, a local neighborhood radius is estimated; if the new key’s neighborhood substantially overlaps with an existing entry, the keys are averaged and their values concatenated. Otherwise, the key is added independently. This prevents uncontrolled growth while preserving the diversity of factual knowledge.

Experiments span four state‑of‑the‑art VLMs (including CLIP‑based architectures) and two rationale‑rich VQA datasets: VCR and VQA‑R. Evaluation follows standard model‑editing metrics: (i) edit success – the edited model returns the correct answer for the edited query; (ii) generalization – the model correctly answers unseen queries that share visual or textual similarity or rely on the same underlying facts; (iii) preservation – overall performance on a held‑out test set remains unchanged. ReasonEdit consistently outperforms prior VLM editors such as GRACE, IKE, and BalanceEdit across all three metrics. Notably, ReasonEdit demonstrates a new form of generalization: edits propagate to samples where the same erroneous reasoning pattern appears in different visual contexts, confirming that human‑provided reasoning captures deeper causal structures than surface labels.

Computationally, ReasonEdit incurs only the cost of embedding queries and performing a K‑NN lookup, making it suitable for real‑time, user‑in‑the‑loop scenarios. The authors also report low memory overhead thanks to the merging strategy, and ablation studies confirm that both the visual‑evidence patchification and the topology‑balanced embedding are essential for peak performance.

In summary, ReasonEdit presents a practical, scalable solution for editing vision‑language models on reasoning‑intensive tasks. By converting human feedback into a structured codebook of multimodal facts and retrieving them with a graph‑theoretically balanced embedding, it achieves high edit accuracy, strong generalization to related queries, and zero degradation of unrelated capabilities—all without any weight updates. This work opens a path toward interactive, continual maintenance of large VLMs in real‑world deployments, where users can directly teach models by explaining why an answer should be different.

Comments & Academic Discussion

Loading comments...

Leave a Comment