RealPDEBench: A Benchmark for Complex Physical Systems with Real-World Data

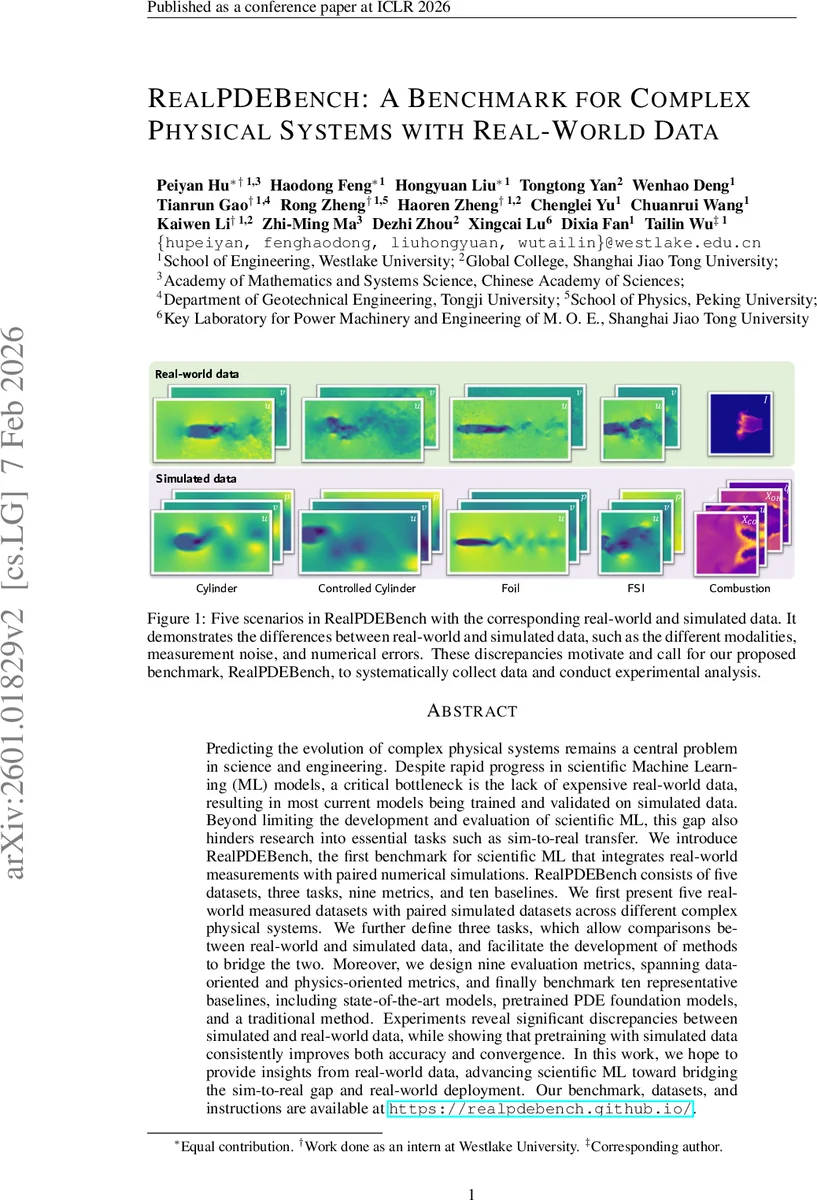

Predicting the evolution of complex physical systems remains a central problem in science and engineering. Despite rapid progress in scientific Machine Learning (ML) models, a critical bottleneck is the lack of expensive real-world data, resulting in most current models being trained and validated on simulated data. Beyond limiting the development and evaluation of scientific ML, this gap also hinders research into essential tasks such as sim-to-real transfer. We introduce RealPDEBench, the first benchmark for scientific ML that integrates real-world measurements with paired numerical simulations. RealPDEBench consists of five datasets, three tasks, eight metrics, and ten baselines. We first present five real-world measured datasets with paired simulated datasets across different complex physical systems. We further define three tasks, which allow comparisons between real-world and simulated data, and facilitate the development of methods to bridge the two. Moreover, we design eight evaluation metrics, spanning data-oriented and physics-oriented metrics, and finally benchmark ten representative baselines, including state-of-the-art models, pretrained PDE foundation models, and a traditional method. Experiments reveal significant discrepancies between simulated and real-world data, while showing that pretraining with simulated data consistently improves both accuracy and convergence. In this work, we hope to provide insights from real-world data, advancing scientific ML toward bridging the sim-to-real gap and real-world deployment. Our benchmark, datasets, and instructions are available at https://realpdebench.github.io/.

💡 Research Summary

RealPDEBench introduces the first scientific‑ML benchmark that couples large‑scale real‑world measurements with paired numerical simulations across five complex physical scenarios: cylinder wake flow, controlled cylinder flow, fluid‑structure interaction, three‑dimensional foil aerodynamics, and turbulent swirl‑stabilized combustion. The authors collected more than 700 trajectories, each exceeding 2,000 time steps, using high‑resolution experimental setups (water‑tunnel PIV, high‑speed cameras, laser illumination, and combustion rigs) and generated matching simulation data with state‑of‑the‑art CFD/LES solvers. For every operating condition (Reynolds number, control frequency, mass ratio, etc.) a pair of real and simulated datasets is provided, enabling direct comparison and transfer‑learning studies.

Three training paradigms are defined: (1) Real‑World Training – models are trained solely on experimental data; (2) Simulated Training – models are trained only on synthetic data; (3) Sim‑Pretrain + Real‑Finetune – models are first pretrained on the full simulated corpus and then fine‑tuned on a limited set of real measurements. This design mirrors practical situations where abundant simulated data exist but real data are scarce and noisy.

Evaluation uses nine metrics split into data‑oriented (MSE, MAE, PSNR, SSIM) and physics‑oriented (energy conservation error, mass conservation error, spectral discrepancy, physics‑law violation rate). An additional “pretrain gain” metric quantifies the benefit of simulation pretraining.

Ten baselines are benchmarked: nine recent neural operator architectures (DeepONet, Fourier Neural Operator, Wavelet Neural Operator, Graph Neural Operator, etc.) and a traditional POD‑RBF surrogate. A PDE‑Foundation model pretrained on a massive simulation corpus is also included to test foundation‑model transfer. All models share a common hyper‑parameter search and training schedule for fair comparison.

Results show a pronounced gap between simulated and real data: models trained on simulations achieve low MSE on synthetic test sets but suffer large physics‑oriented errors when evaluated on real data, reflecting numerical discretization errors and unmodeled experimental noise. Pure real‑world training yields higher MSE and slower convergence, especially in turbulent regimes where measurement noise dominates. Crucially, the Sim‑Pretrain + Real‑Finetune pipeline consistently improves performance: MSE reductions of 15‑30 % and notable drops in physics‑oriented errors across all scenarios. The PDE‑Foundation model benefits the most, leveraging its broad physics knowledge to adapt quickly with few real samples.

The paper highlights two key insights. First, the disparity between simulation and reality is not merely statistical; it manifests as violations of fundamental conservation laws, underscoring the need for noise modeling and physics‑aware loss functions during training. Second, simulation‑based pretraining is an effective strategy to bridge the sim‑to‑real gap, offering both accuracy gains and faster convergence.

In discussion, the authors note limitations such as the focus on 2‑D/3‑D grid data and a fixed set of physical fields, suggesting future extensions toward higher‑dimensional, multi‑scale, and multi‑physics datasets, as well as streaming real‑time measurements. They also propose integrating domain adaptation techniques and uncertainty quantification to further enhance robustness.

Overall, RealPDEBench provides a comprehensive, modular framework that enables systematic evaluation of scientific‑ML models on real‑world physics, establishes baseline performance across diverse tasks, and demonstrates the practical value of simulation‑pretraining for real‑world deployment. The benchmark is publicly released at https://realpdebench.github.io/, inviting the community to develop next‑generation models that can truly operate on complex physical systems outside of idealized simulations.

Comments & Academic Discussion

Loading comments...

Leave a Comment