Storycaster: An AI System for Immersive Room-Based Storytelling

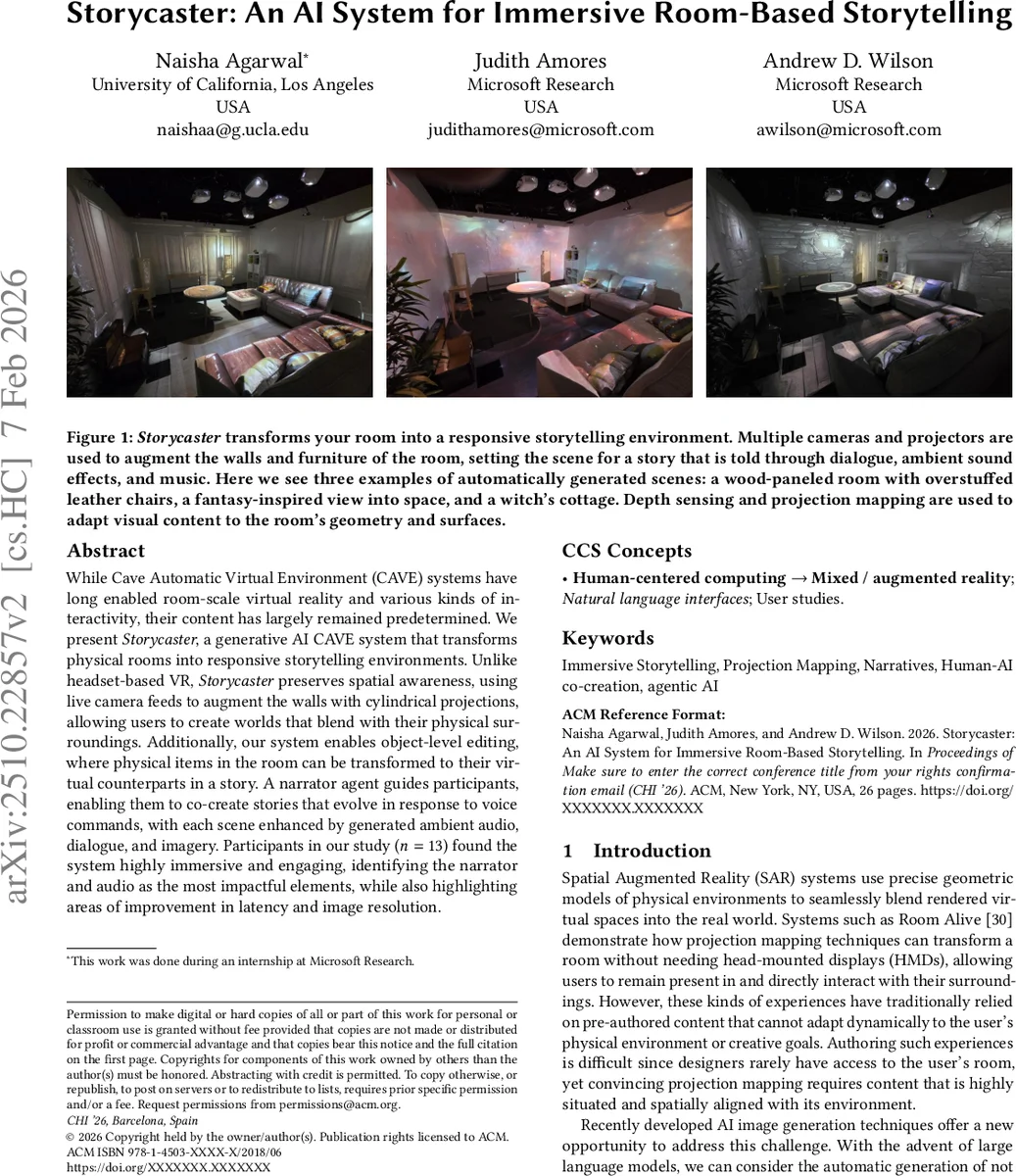

While Cave Automatic Virtual Environment (CAVE) systems have long enabled room-scale virtual reality and various kinds of interactivity, their content has largely remained predetermined. We present \textit{Storycaster}, a generative AI CAVE system that transforms physical rooms into responsive storytelling environments. Unlike headset-based VR, \textit{Storycaster} preserves spatial awareness, using live camera feeds to augment the walls with cylindrical projections, allowing users to create worlds that blend with their physical surroundings. Additionally, our system enables object-level editing, where physical items in the room can be transformed to their virtual counterparts in a story. A narrator agent guides participants, enabling them to co-create stories that evolve in response to voice commands, with each scene enhanced by generated ambient audio, dialogue, and imagery. Participants in our study ($n=13$) found the system highly immersive and engaging, with narrator and audio most impactful, while also highlighting areas for improvement in latency and image resolution.

💡 Research Summary

Storycaster introduces a novel AI‑driven immersive storytelling platform that leverages a Cave Automatic Virtual Environment (CAVE) to transform an ordinary physical room into a dynamic narrative stage without the need for head‑mounted displays. The system integrates large language models (LLM), text‑to‑image diffusion models, and spatial audio generators to produce coherent visual, auditory, and narrative content in response to natural‑language voice commands.

The architecture consists of three tightly coupled modules. First, a real‑time image generation pipeline captures 360° cylindrical views of the room using RGB‑D cameras. These depth‑aware images are fed to an SDXL‑based diffusion model enhanced with Depth‑Control Net and a custom cylindrical LoRA, which synthesizes high‑quality panoramic textures that match the user’s textual prompt (e.g., “a fantasy forest under a starry sky”). The resulting panoramas are projected onto walls and furniture via calibrated projectors, preserving geometric alignment and minimizing distortion.

Second, the Narrator Agent serves as the conversational backbone. Speech captured by an array of microphones is transcribed by Azure Speech‑to‑Text, then processed by a GPT‑4.1‑powered prompt engine. The agent generates plot outlines, character dialogue, scene‑transition instructions, and object‑editing commands. It also invokes Stability Audio 1.0 to create matching ambient soundscapes, which are rendered through a Dolby Atmos system to provide spatialized audio that moves with the narrative.

Third, object‑level editing enables granular transformation of physical items. Grounded‑SAM segments objects within the cylindrical image; an SD 1.5 in‑painting model then overlays virtual textures (e.g., turning a coffee mug into a magical crystal). These overlays are re‑projected onto the actual objects, allowing them to become story props or plot devices.

A user study with 13 participants (each session lasting 20–25 minutes) evaluated immersion, enjoyment, and usability via pre‑ and post‑questionnaires and semi‑structured interviews. Participants reported high immersion, highlighting the AI narrator’s guidance and the ambient audio as the most compelling elements. However, they noted latency of 2–3 seconds in visual/audio generation and limited projection resolution (1080p) as drawbacks. Object‑editing sometimes suffered from segmentation errors under variable lighting, indicating a need for more robust scene understanding.

The authors discuss several avenues for improvement: hardware acceleration to reduce latency, higher‑resolution projection, lightweight multimodal models for on‑device inference, and more sophisticated prompt templates to guide the LLM. They also envision extensions such as multi‑user collaborative storytelling, domain‑specific adaptations for education or therapy, and richer interaction modalities (e.g., gesture‑based control).

In summary, Storycaster demonstrates that generative AI can be tightly coupled with spatial‑augmented reality to deliver real‑time, co‑creative storytelling experiences that keep users physically present in their environment. By eliminating headsets and enabling voice‑first interaction, the system opens new possibilities for accessible, immersive narrative experiences across entertainment, learning, and wellbeing contexts.

Comments & Academic Discussion

Loading comments...

Leave a Comment