Guideline Forest: Retrieval-Augmented Reasoning with Branching Experience-Induced Guidelines

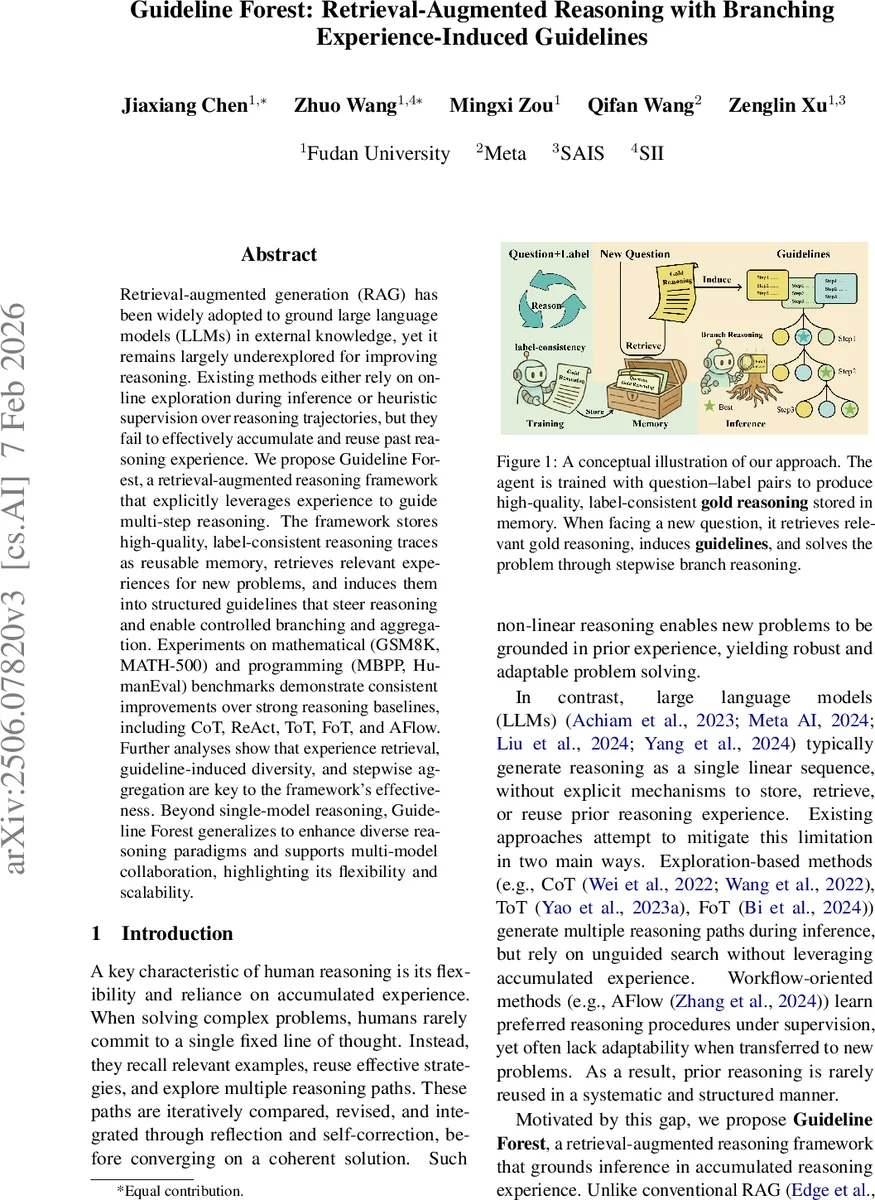

Retrieval-augmented generation (RAG) has been widely adopted to ground large language models (LLMs) in external knowledge, yet it remains largely underexplored for improving reasoning. Existing methods either rely on online exploration during inference or heuristic supervision over reasoning trajectories, but they fail to effectively accumulate and reuse past reasoning experience. We propose Guideline Forest, a retrieval-augmented reasoning framework that explicitly leverages experience to guide multi-step reasoning. The framework stores high-quality, label-consistent reasoning traces as reusable memory, retrieves relevant experiences for new problems, and induces them into structured guidelines that steer reasoning and enable controlled branching and aggregation. Experiments on mathematical (GSM8K, MATH-500) and programming (MBPP, HumanEval) benchmarks demonstrate consistent improvements over strong reasoning baselines, including CoT, ReAct, ToT, FoT, and AFlow. Further analyses show that experience retrieval, guideline-induced diversity, and stepwise aggregation are key to the framework’s effectiveness. Beyond single-model reasoning, Guideline Forest generalizes to enhance diverse reasoning paradigms and supports multi-model collaboration, highlighting its flexibility and scalability.

💡 Research Summary

Guideline Forest introduces a novel retrieval‑augmented reasoning framework that treats verified reasoning traces as reusable “experience” rather than external knowledge. During training, the system builds a memory of high‑quality, label‑consistent gold reasoning trajectories. For each supervised (question, answer) pair, an initial chain‑of‑thought (CoT) generation is performed; if the answer is incorrect, a label‑guided generation step conditions on the ground‑truth label to produce a coherent, correct reasoning trace. These verified traces are stored together with their embeddings, forming a continuously growing repository. Optional structured exploration (e.g., Tree‑of‑Thought) is used to increase diversity, and the repository is updated in a closed‑loop fashion, enabling the model to learn from its own successes.

At inference time, the model retrieves K semantically similar examples from the memory using embedding similarity. From each retrieved gold trace, a high‑level abstract plan—called a guideline—is induced. A guideline captures the generic reasoning structure (e.g., problem formulation → equation setup → computation → verification) without containing problem‑specific details. The set of guidelines guides a multi‑branch reasoning process: at each reasoning step t, every guideline proposes a candidate step, which may be refined through lightweight self‑correction. The candidates from all branches are then aggregated into a single step using confidence‑based scoring or voting. This aggregated step becomes part of the shared context for the next iteration, ensuring that branching improves robustness without fragmenting the overall reasoning chain.

Guideline Forest also supports collaborative inference across multiple LLMs. Different models can be assigned distinct guidelines, generate step‑wise candidates in parallel, and then combine their outputs via weighted voting. This mitigates individual model bias and leverages complementary strengths, especially useful for tasks like code generation where models may excel in different aspects (e.g., API usage vs algorithmic reasoning).

The authors evaluate the approach on four benchmarks covering mathematical problem solving (GSM8K, MATH‑500) and code generation (MBPP, HumanEval). Using GPT‑4o‑mini as the primary reasoning engine, Guideline Forest achieves 93.5% solve rate on GSM8K, 69.2% on MATH‑500, 81.6% pass@1 on MBPP, and 95.4% pass@1 on HumanEval. While it falls slightly short of AFlow on MBPP (81.6% vs 83.4%), it outperforms all other baselines, including CoT, ReAct, ToT, FoT, and Self‑Consistency variants. Scaling experiments show that larger models (GPT‑4o, Qwen2.5‑32B‑Instruct) benefit even more from the framework, with GPT‑4o reaching 71.4% on MATH‑500 and 96.2% on HumanEval.

A token‑efficiency analysis reveals that Guideline Forest consumes about 12.6 k tokens per problem, which is higher than ToT (7.2 k) and ReAct (5.4 k) but substantially lower than the MCTS‑based AFlow (≈21.8 k). This demonstrates that the method balances the exploration benefits of multi‑branch reasoning with practical inference costs.

Ablation studies confirm three core contributors to performance: (1) the size and quality of the retrieved experience pool, (2) the diversity introduced by multiple guideline‑driven branches, and (3) the step‑wise aggregation mechanism that selects the most promising continuation at each stage. Removing any of these components leads to noticeable drops in accuracy, underscoring their synergistic effect.

The paper’s contributions are threefold: (i) extending retrieval‑augmented generation from external knowledge to reasoning experience, (ii) proposing a label‑guided generation pipeline that yields high‑quality, label‑consistent gold traces for memory, and (iii) designing a structured multi‑branch reasoning and aggregation framework that naturally supports multi‑model collaboration.

Limitations include the linear growth of the memory repository, which may pose scalability challenges for very large corpora, and the reliance on prompt‑based guideline induction, which could be replaced by more systematic graph‑based abstractions in future work. Moreover, while the method shows strong results on math and code tasks, its applicability to domains requiring richer contextual understanding (e.g., scientific literature synthesis, legal reasoning) remains an open question.

In summary, Guideline Forest offers a compelling paradigm for making LLM reasoning more experience‑driven, efficient, and collaborative. By grounding inference in reusable, abstracted guidelines derived from verified past reasoning, it achieves consistent performance gains across diverse tasks and model scales, pointing toward a new direction for retrieval‑augmented reasoning research.

Comments & Academic Discussion

Loading comments...

Leave a Comment