Efficient Policy Optimization in Robust Constrained MDPs with Iteration Complexity Guarantees

Constrained decision-making is essential for designing safe policies in real-world control systems, yet simulated environments often fail to capture real-world adversities. We consider the problem of learning a policy that will maximize the cumulativ…

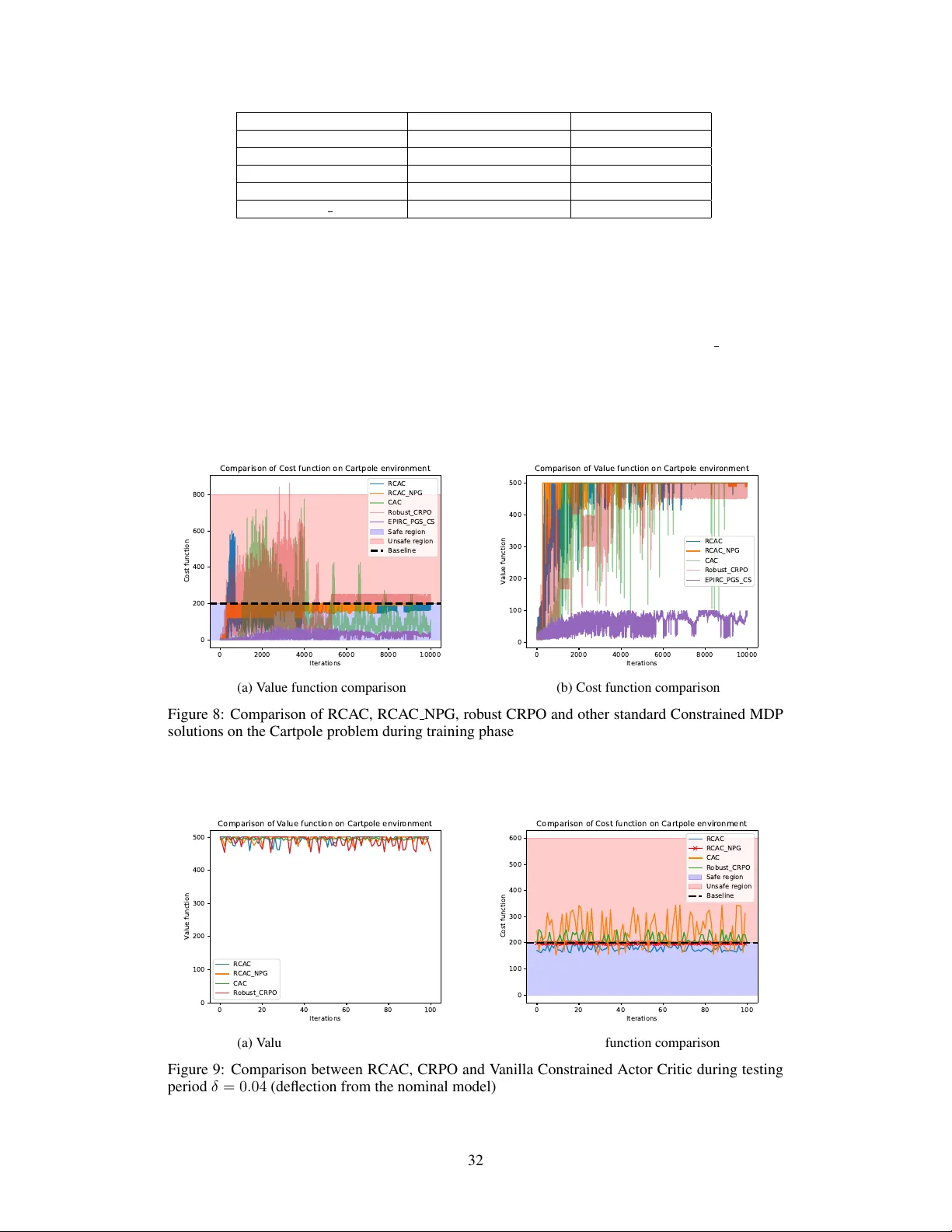

Authors: Sourav Ganguly, Kishan Panaganti, Arnob Ghosh