AgentDrug: Utilizing Large Language Models in An Agentic Workflow for Zero-Shot Molecular Editing

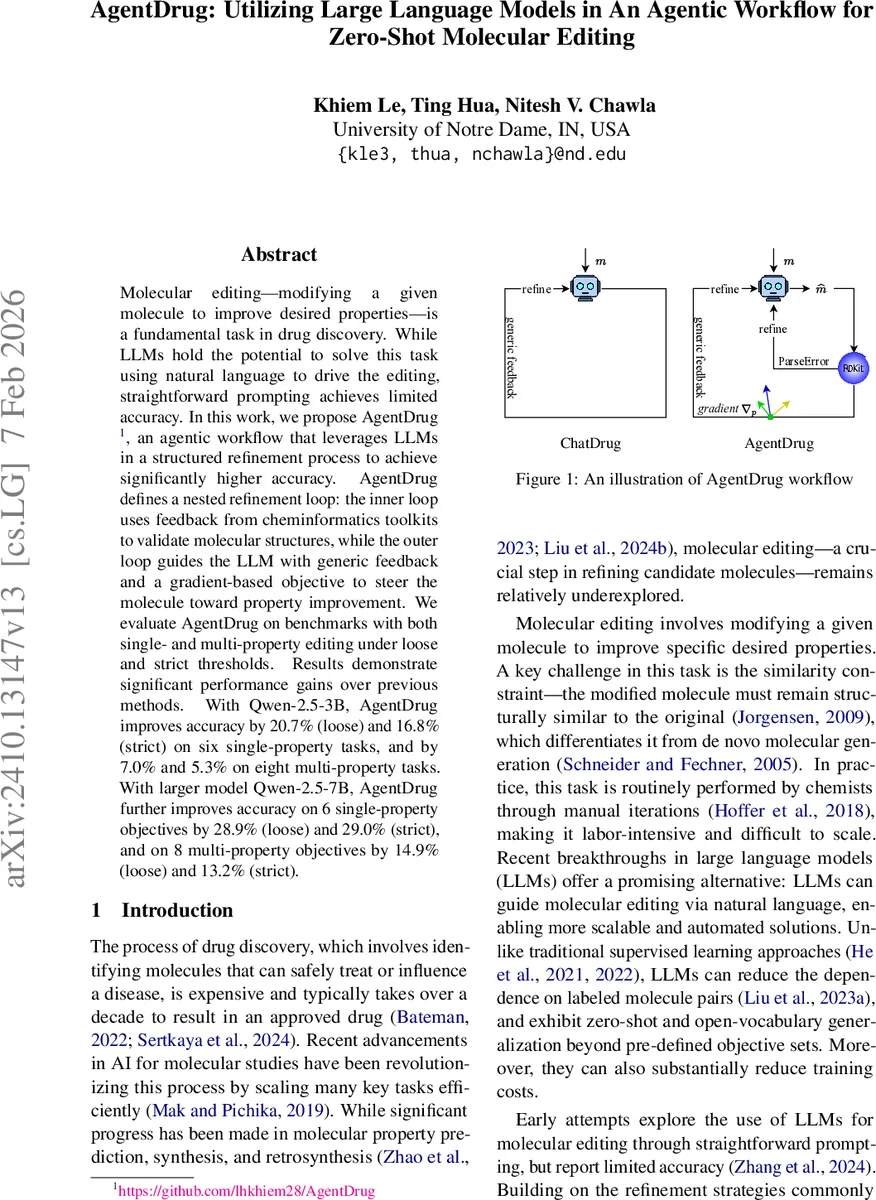

Molecular editing-modifying a given molecule to improve desired properties-is a fundamental task in drug discovery. While LLMs hold the potential to solve this task using natural language to drive the editing, straightforward prompting achieves limited accuracy. In this work, we propose AgentDrug, an agentic workflow that leverages LLMs in a structured refinement process to achieve significantly higher accuracy. AgentDrug defines a nested refinement loop: the inner loop uses feedback from cheminformatics toolkits to validate molecular structures, while the outer loop guides the LLM with generic feedback and a gradient-based objective to steer the molecule toward property improvement. We evaluate AgentDrug on benchmarks with both single- and multi-property editing under loose and strict thresholds. Results demonstrate significant performance gains over previous methods. With Qwen-2.5-3B, AgentDrug improves accuracy by 20.7% (loose) and 16.8% (strict) on six single-property tasks, and by 7.0% and 5.3% on eight multi-property tasks. With larger model Qwen-2.5-7B, AgentDrug further improves accuracy on 6 single-property objectives by 28.9% (loose) and 29.0% (strict), and on 8 multi-property objectives by 14.9% (loose) and 13.2% (strict).

💡 Research Summary

AgentDrug introduces a novel agentic workflow that leverages large language models (LLMs) for zero‑shot molecular editing, addressing the shortcomings of earlier LLM‑based approaches. Traditional methods rely on straightforward prompting to generate SMILES strings, providing only generic feedback such as “the edited molecule does not meet the objective.” This leads to limited accuracy and frequent “molecule hallucination,” where the generated structures are chemically invalid.

AgentDrug solves these problems through a nested refinement loop consisting of an inner validity loop and an outer optimization loop.

The inner loop uses RDKit to parse the LLM‑generated SMILES. If a ParseError occurs, the error is classified into one of six categories (syntax, parentheses, duplicate bond, valence, aromaticity, unclosed ring) and fed back to the LLM. The model iteratively corrects the SMILES until a valid molecule is produced, dramatically reducing the rate of invalid outputs.

Once validity is achieved, the outer loop supplies two complementary sources of guidance. First, a gradient vector ∇p is computed for each target property p_i based on the difference between the current value and the desired threshold d_i, encoding both direction (increase or decrease) and magnitude of required change. This explicit gradient acts as a quantitative steering signal, akin to gradient ascent, enabling the LLM to focus its edits on the most impactful modifications. Second, a similarity‑based retrieval step selects a reference molecule m_e from a pre‑constructed database (e.g., ZINC) that is both structurally similar to the edited molecule (high Tanimoto similarity) and already satisfies the editing objective. This retrieved example is supplied as an in‑context demonstration, allowing the LLM to draw on existing chemical knowledge rather than generating from scratch.

The authors evaluate AgentDrug on 500 ZINC molecules, targeting three physicochemical properties—LogP, TPSA, and QED—under both single‑property and multi‑property editing scenarios. Two difficulty levels are defined: “loose” thresholds (modest property changes) and “strict” thresholds (substantial changes). Experiments are conducted with Qwen‑2.5‑3B and the larger Qwen‑2.5‑7B models, and performance is compared against the prior ChatDrug framework and the REINVENT baseline.

Results show that AgentDrug consistently outperforms baselines. With Qwen‑2.5‑3B, accuracy improves by 20.7 % (loose) and 16.8 % (strict) on six single‑property tasks, and by 7.0 % and 5.3 % on eight multi‑property tasks. Scaling to Qwen‑2.5‑7B yields even larger gains: 28.9 % (loose) and 29.0 % (strict) on single‑property tasks, and 14.9 % (loose) and 13.2 % (strict) on multi‑property tasks. Importantly, the validity rate remains above 90 % across all settings, confirming the effectiveness of the inner loop in eliminating hallucinated molecules.

Key contributions of the paper are: (1) a systematic error‑correction mechanism that integrates cheminformatics tools directly into the LLM feedback cycle; (2) the introduction of property‑gradient signals that provide actionable, quantitative guidance for molecular optimization; and (3) the use of similarity‑based in‑context examples to ground the LLM’s generative process in real chemical space. Together, these innovations transform the LLM from a purely linguistic model into a chemistry‑aware agent capable of iterative, constraint‑aware molecular design.

Future directions suggested include extending the framework to larger LLMs, handling more complex multi‑objective trade‑offs, and incorporating synthetic feasibility or cost considerations into the gradient signal, thereby moving closer to practical, end‑to‑end AI‑driven drug discovery pipelines.

Comments & Academic Discussion

Loading comments...

Leave a Comment