RuleFlow : Generating Reusable Program Optimizations with LLMs

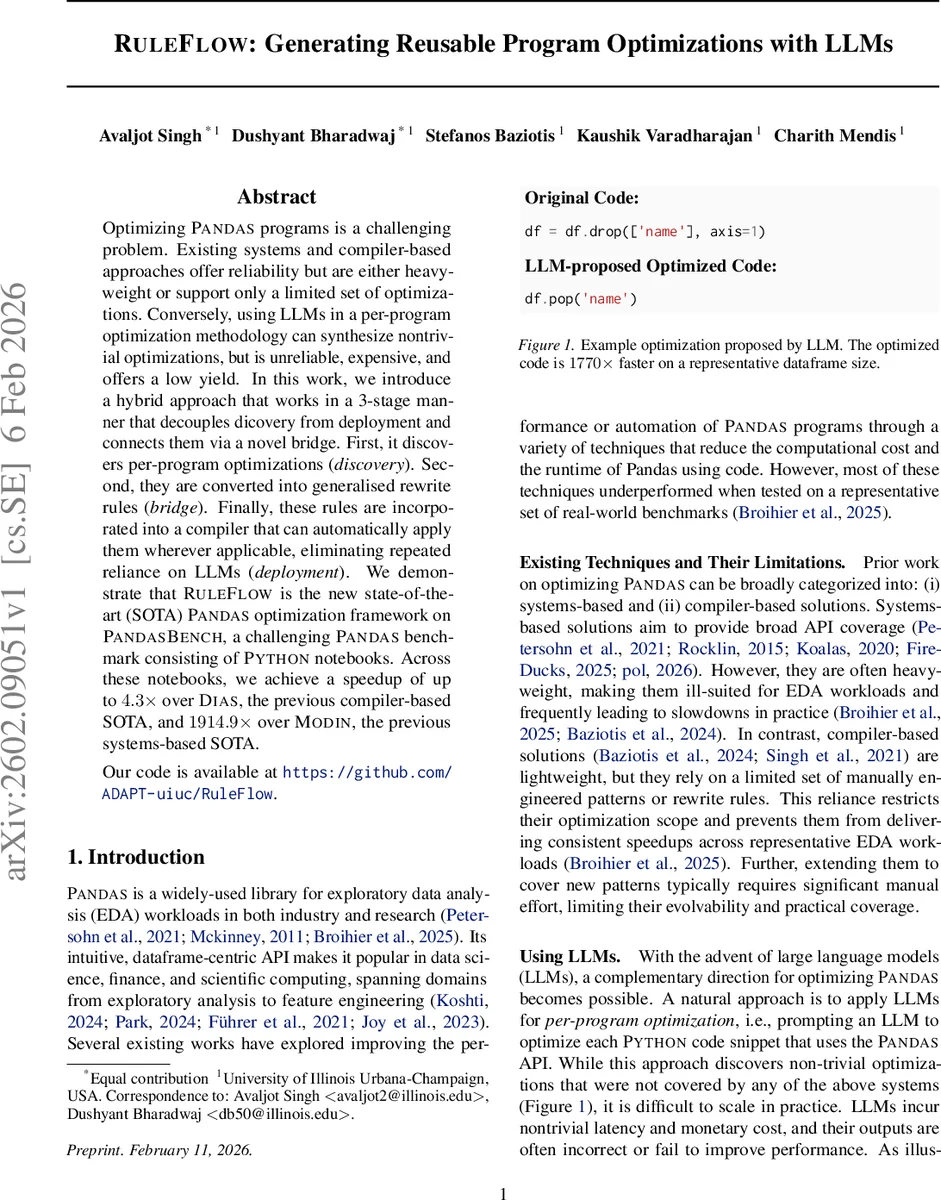

Optimizing Pandas programs is a challenging problem. Existing systems and compiler-based approaches offer reliability but are either heavyweight or support only a limited set of optimizations. Conversely, using LLMs in a per-program optimization methodology can synthesize nontrivial optimizations, but is unreliable, expensive, and offers a low yield. In this work, we introduce a hybrid approach that works in a 3-stage manner that decouples discovery from deployment and connects them via a novel bridge. First, it discovers per-program optimizations (discovery). Second, they are converted into generalised rewrite rules (bridge). Finally, these rules are incorporated into a compiler that can automatically apply them wherever applicable, eliminating repeated reliance on LLMs (deployment). We demonstrate that RuleFlow is the new state-of-the-art (SOTA) Pandas optimization framework on PandasBench, a challenging Pandas benchmark consisting of Python notebooks. Across these notebooks, we achieve a speedup of up to 4.3x over Dias, the previous compiler-based SOTA, and 1914.9x over Modin, the previous systems-based SOTA. Our code is available at https://github.com/ADAPT-uiuc/RuleFlow.

💡 Research Summary

The paper introduces RuleFlow, a three‑stage hybrid system that leverages large language models (LLMs) to discover performance‑enhancing transformations for Pandas code, converts those transformations into reusable rewrite rules, and then applies the rules at compile time without further LLM involvement. The authors motivate the work by pointing out the limitations of existing approaches: systems‑based solutions (e.g., Modin, cuDF) are heavyweight and often under‑perform on exploratory data analysis workloads, while compiler‑based solutions (e.g., DIAS, SCIRPy) rely on a small, manually crafted rule set that cannot cover the breadth of real‑world notebooks. Direct per‑program LLM optimization, although capable of generating novel optimizations, suffers from low yield (only 5.7 % of generated suggestions are both correct and faster) and high latency/cost.

RuleFlow separates the high‑variance discovery phase from the deterministic deployment phase. In the Discovery stage (SNIPPET‑GEN), an LLM is prompted for each code cell extracted from a large corpus (the PandasBench benchmark of 102 notebooks). Multiple candidate rewrites are sampled, then automatically filtered by two constraints: (1) semantic equivalence, verified by executing both original and candidate on a suite of random dataframes, and (2) measurable runtime improvement, measured against absolute and relative thresholds. This process yields 2,639 semantically correct candidates, of which 235 provide genuine speedups.

The Bridge stage (RULE‑GEN) lifts each validated pair into a generalized rewrite rule expressed in a domain‑specific language (DSL) originally used by DIAS. The DSL captures abstract AST variables (e.g., @{Name:v}) and runtime preconditions (e.g., isinstance(v, pandas.DataFrame) and c in v.columns). By abstracting concrete syntax, a single rule can match many syntactically similar code fragments, dramatically increasing reuse. The authors implement an LLM‑assisted agent that extracts the LHS/RHS patterns and synthesizes the necessary preconditions.

In the Deployment stage (CODE‑GEN), a lightweight static matcher and rewriter apply the accumulated rule set to unseen notebooks. No LLM calls are required, eliminating inference latency and monetary cost. Empirically, the rule set covers 87.13 % of test notebooks, with individual notebooks matching up to 13 distinct rules and the most popular rule applying to 72 notebooks. Performance gains are substantial: compared to DIAS, RuleFlow achieves an average 4.3× speedup (up to 199× on single cells); compared to Modin, it achieves an average 1914.9× speedup (up to 1704× on single cells). The authors also report that the rule generation pipeline is scalable: as more notebooks are processed, the rule base grows, further increasing hit rates without additional LLM expense.

The paper provides detailed engineering insights, including prompt design, multi‑sample generation (k = 5), automated testing harnesses, and the integration of the DSL with Python’s AST. It also discusses limitations such as reliance on the quality of the LLM’s suggestions and the need for robust equivalence testing, which may miss subtle bugs in edge cases.

Finally, the authors argue that the “discover‑bridge‑deploy” paradigm is broadly applicable beyond Pandas, potentially benefiting SQL query optimization, Spark DataFrames, or GPU kernel generation. By confining LLM usage to an offline, exploratory phase and handling deployment with deterministic compiler techniques, RuleFlow achieves both the creativity of LLMs and the reliability of traditional optimizers, setting a new state‑of‑the‑art for data‑frame‑centric performance engineering.

Comments & Academic Discussion

Loading comments...

Leave a Comment