ADCanvas: Accessible and Conversational Audio Description Authoring for Blind and Low Vision Creators

Audio Description (AD) provides essential access to visual media for blind and low vision (BLV) audiences. Yet current AD production tools remain largely inaccessible to BLV video creators, who possess valuable expertise but face barriers due to visually-driven interfaces. We present ADCanvas, a multimodal authoring system that supports non-visual control over audio description (AD) creation. ADCanvas combines conversational interaction with keyboard-based playback control and a plain-text, screen reader-accessible editor to support end-to-end AD authoring and visual question answering (VQA). Combining screen-reader-friendly controls with a multimodal LLM agent, ADCanvas supports live VQA, script generation, and AD modification. Through a user study with 12 BLV video creators, we find that users adopt the conversational agent as an informational aide and drafting assistant, while maintaining agency through verification and editing. For example, participants saw themselves as curators who received information from the model and filtered it down for their audience. Our findings offer design implications for accessible media tools, including precise editing controls, accessibility support for creative ideation, and configurable rules for human-AI collaboration.

💡 Research Summary

Audio Description (AD) is a vital accessibility modality that enables blind and low‑vision (BLV) audiences to understand visual media through spoken narration. Despite its importance, existing AD authoring tools—digital audio workstations, video editors, and timeline‑based interfaces—are fundamentally visual, making them inaccessible to BLV creators. Consequently, BLV professionals often rely on sighted collaborators for visual information, which introduces logistical overhead, reduces creative autonomy, and can lead to mismatches between the creator’s intent and the final description.

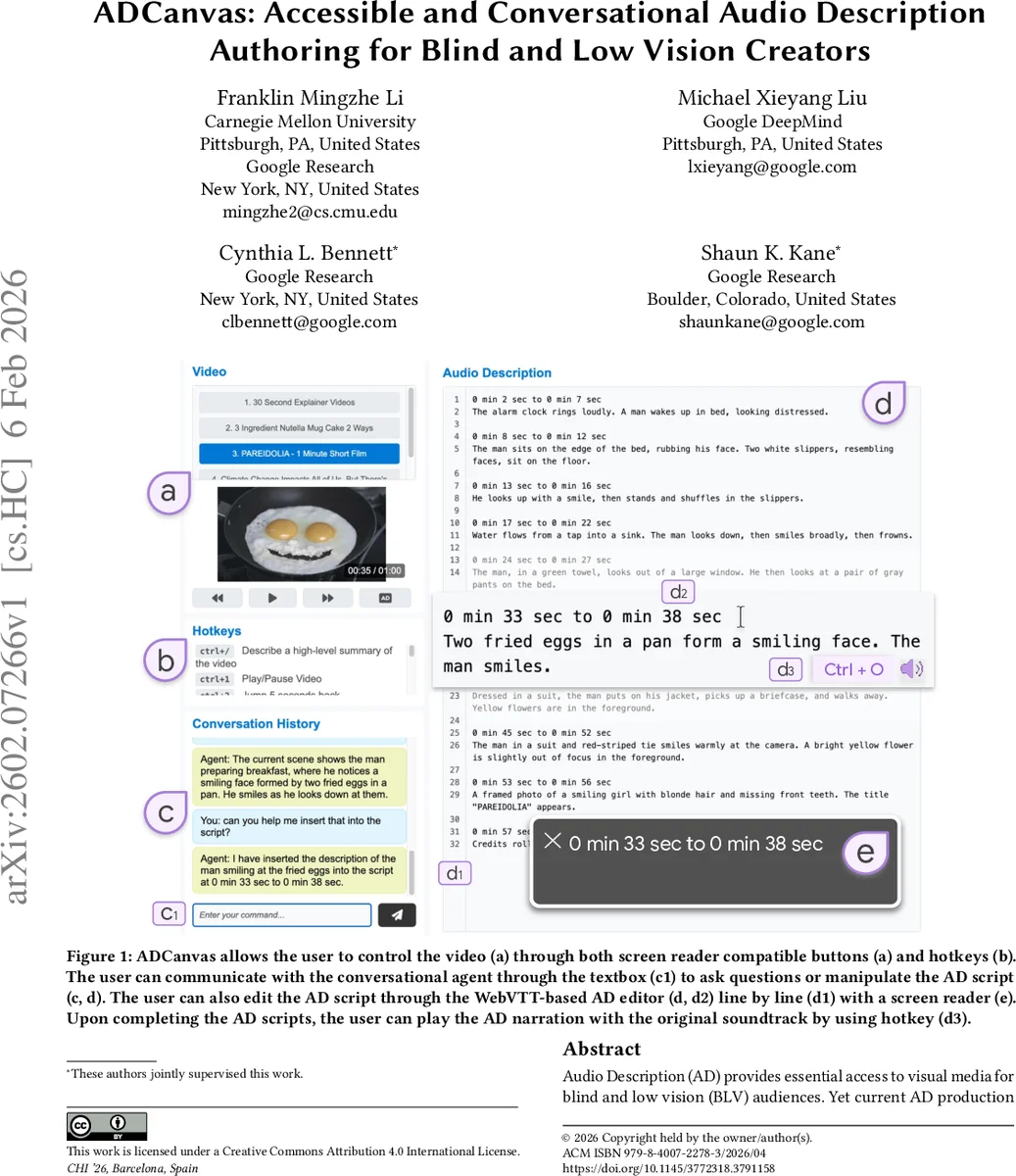

The paper introduces ADCanvas, a multimodal, screen‑reader‑compatible authoring system that empowers BLV creators to produce AD scripts without visual interaction. ADCanvas combines three core components: (1) a plain‑text WebVTT editor that can be navigated and edited entirely via keyboard and screen‑reader feedback; (2) a set of keyboard‑driven video playback controls (play/pause, frame‑step, timestamp jumps) with ARIA‑labeled buttons and hotkeys (e.g., Ctrl + O to open a file); and (3) a conversational agent powered by a state‑of‑the‑art multimodal large language model (MLLM). The agent can answer visual questions (VQA), generate scene summaries, and draft AD lines based on the video frames it processes. Users interact with the agent through a text box, asking for information (“Summarize the main character”) or issuing stylistic instructions (“Make the description more concise”). The model’s outputs can be directly inserted into the script, edited, or refined through iterative feedback, allowing creators to shape the AI’s behavior to match professional guidelines (present‑tense, objective tone, focus on actions over settings).

The system was evaluated with twelve BLV participants—both professional AD practitioners and independent video creators—in a qualitative study where each participant authored AD for short video clips using ADCanvas. The study addressed three research questions: (RQ1) how collaboration with a multimodal agent influences BLV creators’ practices; (RQ2) what interaction design challenges arise in non‑visual, conversational workflows for complex creative tasks; and (RQ3) how creators perceive human‑AI collaborative authoring. Findings reveal that participants treated the conversational agent as an informational aide and drafting assistant, while maintaining a supervisory “curator” role. They leveraged the agent for rapid scene understanding, initial script drafts, and targeted visual details, then verified and edited the output to ensure accuracy and stylistic consistency. The keyboard‑driven playback and screen‑reader‑friendly editor provided the precise timing control traditionally only available in visual timelines, enabling users to align descriptions with dialogue and sound effects accurately. Participants expressed high trust in the AI’s ability to surface visual content but emphasized the need for verification, reflecting a balanced trust‑verification dynamic.

Design implications emerged: (1) Precise Editing Controls – fine‑grained hotkeys and clear screen‑reader cues are essential for timing‑sensitive media work; (2) Creative Ideation Support – integrated VQA and automatic scene summarization help BLV creators generate ideas without sighted assistance; (3) Configurable Human‑AI Collaboration – allowing users to set style rules, adjust model temperature, and provide real‑time feedback fosters agency and reduces over‑reliance on the AI; (4) Conversation Flow Management – long, unstructured dialogues can cause workflow interruptions, suggesting the need for conversation summarization, turn‑taking cues, and possibly multimodal visual previews for sighted collaborators.

Limitations include the current lack of advanced DAW features such as waveform‑based gap detection, audio mixing, and fine‑grained automation curves, which remain outside ADCanvas’s scope and would still require integration with professional tools for high‑end production. Additionally, the accuracy of the underlying MLLM for detailed visual nuance is bounded by the model’s training data and may produce occasional hallucinations, reinforcing the necessity of human verification.

In conclusion, ADCanvas demonstrates that a thoughtfully designed multimodal conversational interface, combined with accessible keyboard navigation, can substantially lower the barrier for BLV creators to author high‑quality audio descriptions independently. The system re‑imagines the AD authoring workflow from a visual‑centric paradigm to a non‑visual, dialog‑driven process, preserving creative autonomy while leveraging AI assistance. Future work should explore improving model fidelity, automating conversation management, and integrating ADCanvas as a backend engine for community‑driven platforms like YouDescribe, thereby extending its impact to broader AD production ecosystems.

Comments & Academic Discussion

Loading comments...

Leave a Comment