aerial-autonomy-stack -- a Faster-than-real-time, Autopilot-agnostic, ROS2 Framework to Simulate and Deploy Perception-based Drones

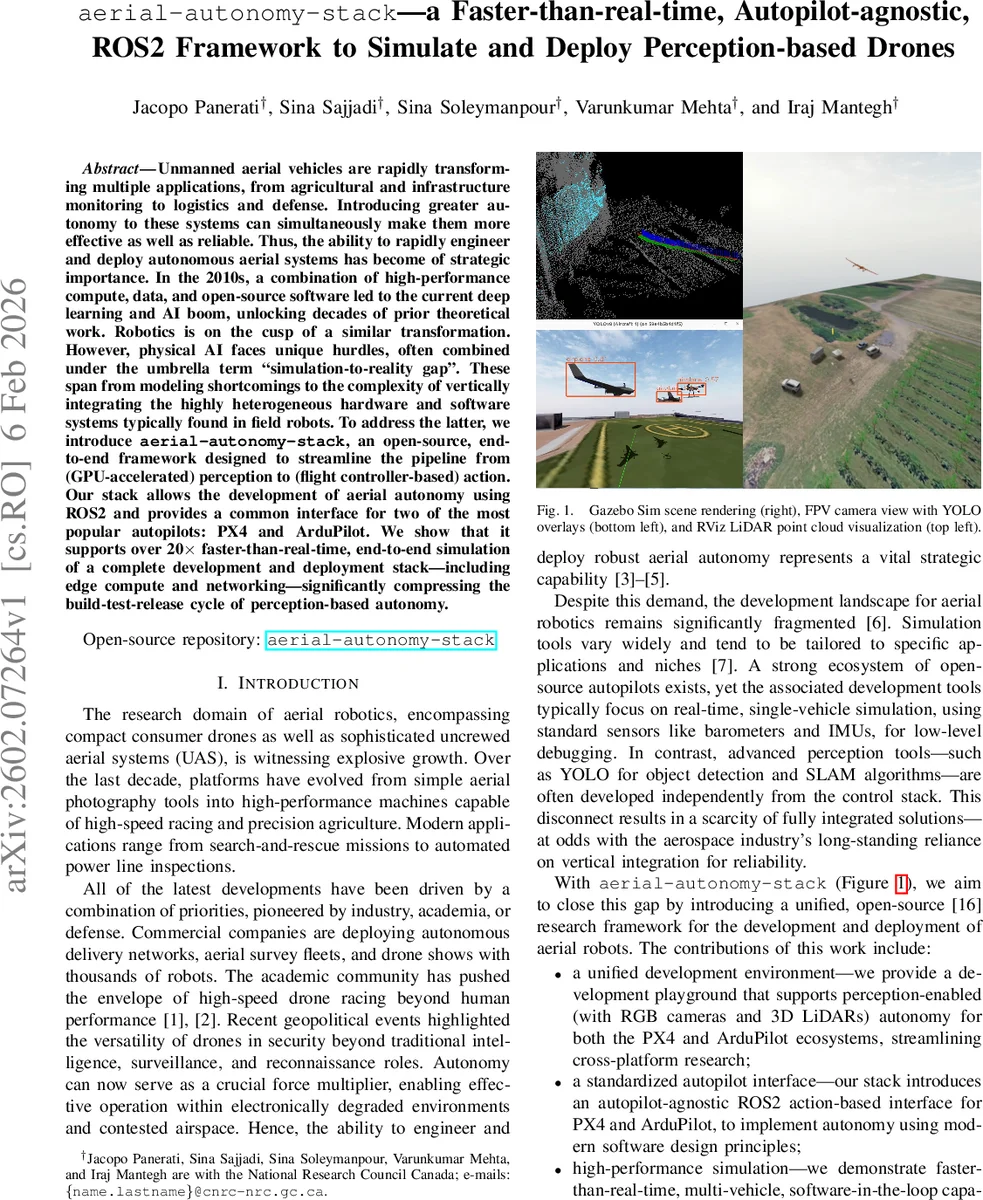

Unmanned aerial vehicles are rapidly transforming multiple applications, from agricultural and infrastructure monitoring to logistics and defense. Introducing greater autonomy to these systems can make them both more effective and more reliable. Thus, the ability to rapidly engineer and deploy autonomous aerial systems has become strategically important. In the 2010s, a combination of high-performance compute, data, and open-source software led to the current deep learning and AI boom, unlocking decades of prior theoretical work. Robotics is on the cusp of a similar transformation. However, physical AI faces unique hurdles, often combined under the umbrella term “simulation-to-reality gap”. These span from modeling shortcomings to the complexity of vertically integrating the highly heterogeneous hardware and software systems typically found in field robots. To address the latter, we introduce aerial-autonomy-stack, an open-source, end-to-end framework designed to streamline the pipeline from (GPU-accelerated) perception to (flight controller-based) action. Our stack allows the development of aerial autonomy using ROS2 and provides a common interface for two of the most popular autopilots: PX4 and ArduPilot. We show that it supports over 20x faster-than-real-time, end-to-end simulation of a complete development and deployment stack – including edge compute and networking – significantly compressing the build-test-release cycle of perception-based autonomy.

💡 Research Summary

The paper introduces aerial-autonomy-stack, an open-source, ROS 2-based framework that unifies perception, control, and simulation for autonomous drones while remaining autopilot-agnostic. By supporting both PX4 and ArduPilot through a common ROS 2 action interface, the stack removes the need for separate codebases when switching between these two widely used autopilots. The core middleware consists of ROS 2 Humble for high-level node orchestration, Micro-XRCE-DDS for low-latency bridging between PX4's uORB messages and ROS 2, and MAVLink-router for routing MAVLink telemetry.
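The idea of a common interface can be illustrated with a minimal Python sketch. This is not the stack's actual API: the class and function names (`AutopilotBackend`, `Px4Backend`, `ArduPilotBackend`, `run_mission`) and the returned dictionaries are hypothetical, and the choice of transport per autopilot is an assumption made for illustration. Only the takeoff command ID (`MAV_CMD_NAV_TAKEOFF`, 22) and the ArduPilot `GUIDED` mode name come from the autopilots' public documentation. The point is that mission code is written once against an abstract interface, while autopilot-specific translation stays inside one class per autopilot.

```python
from abc import ABC, abstractmethod

MAV_CMD_NAV_TAKEOFF = 22  # MAVLink common command ID for takeoff


class AutopilotBackend(ABC):
    """Hypothetical autopilot-agnostic interface (illustration only)."""

    @abstractmethod
    def takeoff(self, altitude_m: float) -> dict:
        """Return a description of the request that would be sent."""


class Px4Backend(AutopilotBackend):
    # Assumption: PX4 is reached through the uORB-to-ROS 2 bridge.
    def takeoff(self, altitude_m: float) -> dict:
        return {
            "transport": "uxrce-dds",
            "command": MAV_CMD_NAV_TAKEOFF,
            "altitude_m": altitude_m,
        }


class ArduPilotBackend(AutopilotBackend):
    # Assumption: ArduPilot is commanded over MAVLink in GUIDED mode.
    def takeoff(self, altitude_m: float) -> dict:
        return {
            "transport": "mavlink",
            "mode": "GUIDED",
            "command": MAV_CMD_NAV_TAKEOFF,
            "altitude_m": altitude_m,
        }


def run_mission(backend: AutopilotBackend, altitude_m: float = 10.0) -> dict:
    """Mission logic that never branches on the autopilot type."""
    return backend.takeoff(altitude_m)
```

Swapping `Px4Backend()` for `ArduPilotBackend()` in the call to `run_mission` changes the request that is produced but not the mission code, which is the property the summary attributes to the stack's ROS 2 action interface.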

Gazebo Sim (Harmonic) is selected as the simulation engine because it offers headless operation, ODE physics, and Ogre2 rendering that can be GPU‑accelerated. The authors exploit Gazebo’s “faster‑than‑real‑time stepping” to run software‑in‑the‑loop (SITL) and hardware‑in‑the‑loop (HITL) containers simultaneously, achieving more than 20× speed‑up compared with real‑time flight. Docker containers encapsulate three logical sub‑networks: simulation‑image (virtual world, sensor plugins, GStreamer bridge), air‑image (edge compute on Jetson, YOLOv8 nano inference via ONNX Runtime, KISS‑ICP LiDAR odometry), and ground‑image (QGroundControl, MAVLink router, Zenoh bridge). Zenoh enables peer‑to‑peer data replication across unreliable wireless links, preserving isolated ROS 2 namespaces while allowing selective state sharing among multiple drones.
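Faster-than-real-time stepping is easiest to see in a toy lock-step loop. The sketch below is an assumption-laden illustration, not Gazebo's implementation: the function names and the constant per-step compute cost are hypothetical. It shows that the simulated clock advances by a fixed step regardless of how long the step took to compute, so the real-time factor (RTF) is simply simulated time divided by wall-clock time.

```python
def real_time_factor(sim_elapsed_s: float, wall_elapsed_s: float) -> float:
    """Ratio of simulated time to wall-clock time (>1 is faster than real time)."""
    if wall_elapsed_s <= 0:
        raise ValueError("wall_elapsed_s must be positive")
    return sim_elapsed_s / wall_elapsed_s


def run_lockstep(n_steps: int, step_size_s: float, wall_cost_per_step_s: float) -> float:
    """Advance a toy simulation clock in fixed steps and return the RTF.

    wall_cost_per_step_s is a hypothetical, constant compute cost per step;
    a real simulator would measure it, e.g. with time.perf_counter().
    """
    sim_time_s = 0.0
    wall_time_s = 0.0
    for _ in range(n_steps):
        sim_time_s += step_size_s            # simulated clock: fixed increment
        wall_time_s += wall_cost_per_step_s  # wall clock: cost of computing the step
    return real_time_factor(sim_time_s, wall_time_s)
```

With illustrative numbers, `run_lockstep(1000, 0.004, 0.0002)` gives an RTF of about 20: each 4 ms simulated step costs 0.2 ms of compute. Every component that consumes simulated time (autopilot, perception, control) has to keep pace with that clock for the end-to-end figure to hold.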

Perception is built around Ultralytics YOLOv8 nano, exported to ONNX for portability across CUDA (desktop) and TensorRT (Jetson) back‑ends. The model processes RGB streams from simulated or real cameras without code changes, thanks to a custom Gazebo‑GStreamer bridge that mimics CSI camera pipelines. LiDAR odometry uses KISS‑ICP, providing high‑frequency ego‑motion estimates with minimal computational overhead. All perception outputs are published as ROS 2 topics, enabling downstream control nodes to remain perception‑agnostic.
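One way the same exported model can target different back-ends is by ordering ONNX Runtime execution providers at start-up. The helper below is a sketch under stated assumptions: `pick_providers` and its `prefer_tensorrt` flag are hypothetical names, and the paper's own selection logic may differ. The provider strings are the identifiers ONNX Runtime uses for its TensorRT, CUDA, and CPU providers.

```python
from __future__ import annotations


def pick_providers(available: list[str], prefer_tensorrt: bool) -> list[str]:
    """Order ONNX Runtime execution providers by preference.

    Only providers reported as available are kept; CPU stays last as a fallback.
    """
    preferred = []
    if prefer_tensorrt:
        preferred.append("TensorrtExecutionProvider")
    preferred += ["CUDAExecutionProvider", "CPUExecutionProvider"]
    return [p for p in preferred if p in available]


# With onnxruntime installed, the result would typically be used like this
# ("model.onnx" is a placeholder path, not a file from the paper):
#   import onnxruntime as ort
#   providers = pick_providers(ort.get_available_providers(), prefer_tensorrt=True)
#   session = ort.InferenceSession("model.onnx", providers=providers)
```

Keeping the choice in one function means the rest of the perception node does not need to know whether it runs on a desktop GPU or on a Jetson.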

The authors provide a library of vehicle SDF models (e.g., Holybro X500v2, 3DR Iris, standard VTOL, ALTI Transition) and world files (plain, empty, city, mountain) to cover a wide range of applications. The stack’s containerized architecture supports multi‑agent simulations, cross‑compilation for x86 and ARM64 targets, and distributed HITL tests that incorporate actual Jetson modules and RF links.

Performance evaluation demonstrates that a scenario with four multicopters and one VTOL can be simulated end‑to‑end—including sensor generation, YOLO inference, ICP odometry, and off‑board control—at more than twenty times real‑time speed. The same perception binaries run unchanged on a Jetson Orin, confirming simulation‑to‑real consistency. Comparative analysis shows that existing frameworks (Aerostack2, MRS UAV System, Agilicious, etc.) either lack integrated perception, are tied to a single autopilot, or do not provide a Docker‑based reproducible pipeline.
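A short back-of-the-envelope calculation shows what a 20× real-time factor implies. The functions below are a hypothetical sketch; the mission length, the 30 Hz camera rate, and the assumption of one camera per vehicle are illustrative values, not figures from the paper.

```python
def wall_clock_s(sim_duration_s: float, rtf: float) -> float:
    """Wall-clock seconds needed to simulate sim_duration_s at a given RTF."""
    return sim_duration_s / rtf


def required_inference_fps(camera_hz: float, n_cameras: int, rtf: float) -> float:
    """Frames per wall-clock second the detector must sustain to keep up."""
    return camera_hz * n_cameras * rtf


# Illustrative values only (assumptions, not results from the paper).
mission_wall_s = wall_clock_s(sim_duration_s=600.0, rtf=20.0)
detector_fps = required_inference_fps(camera_hz=30.0, n_cameras=5, rtf=20.0)
```

Under these assumptions a 10-minute simulated mission finishes in 30 s of wall-clock time, while the detector would have to process 3000 frames per wall-clock second if every vehicle streamed at 30 Hz. This is why end-to-end speed-up depends on perception throughput as much as on physics stepping.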

In summary, aerial-autonomy-stack narrows the “sim2real” gap by simplifying system integration rather than by improving physical fidelity. Its faster-than-real-time simulation, autopilot-agnostic ROS 2 interface, and container-first design shorten development cycles, enable large-scale data generation for learning-based methods, and provide a foundation for future research and commercial deployment of perception-driven autonomous drones.
