The Double-Edged Sword of Data-Driven Super-Resolution: Adversarial Super-Resolution Models

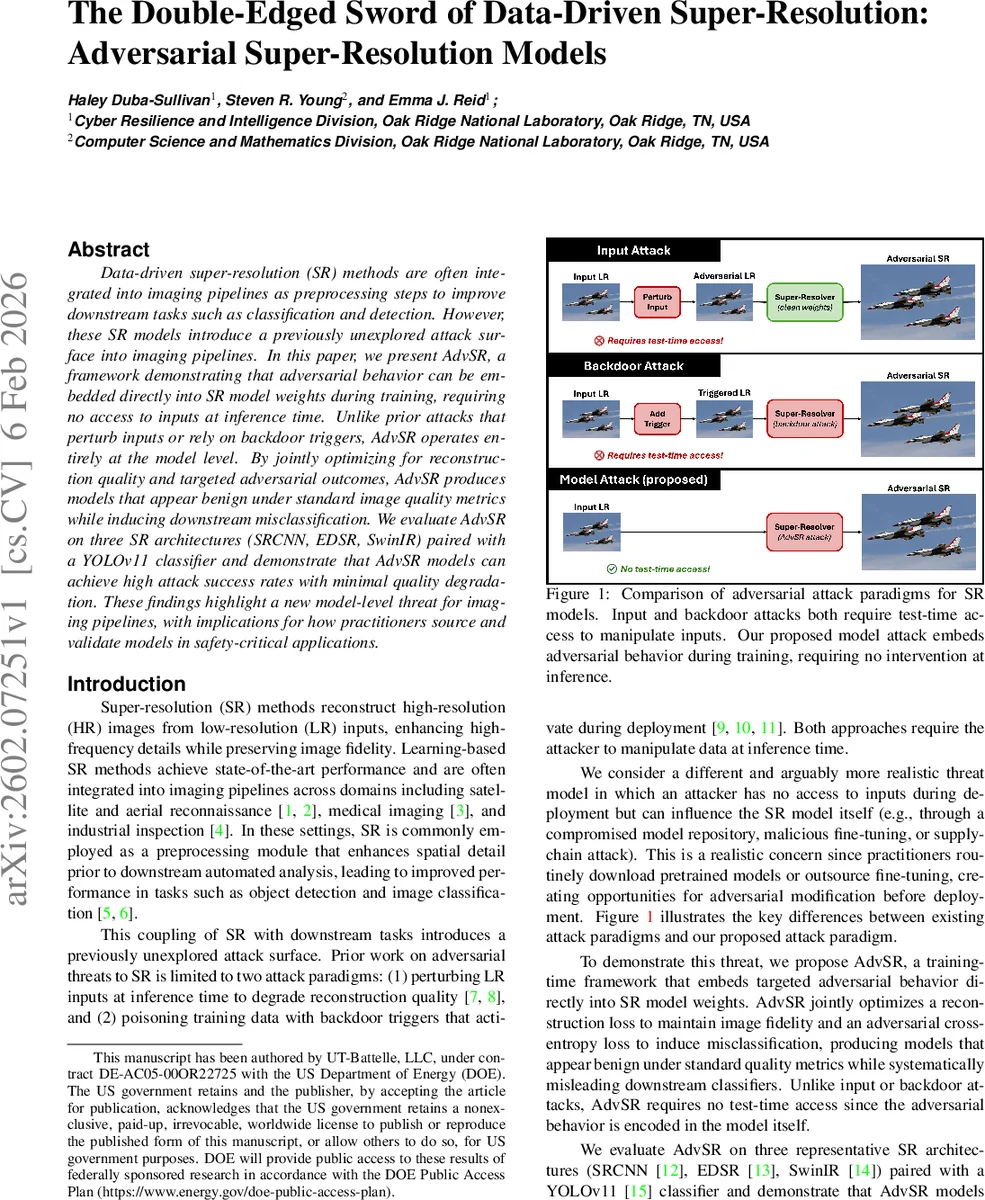

Data-driven super-resolution (SR) methods are often integrated into imaging pipelines as preprocessing steps to improve downstream tasks such as classification and detection. However, these SR models introduce a previously unexplored attack surface into imaging pipelines. In this paper, we present AdvSR, a framework demonstrating that adversarial behavior can be embedded directly into SR model weights during training, requiring no access to inputs at inference time. Unlike prior attacks that perturb inputs or rely on backdoor triggers, AdvSR operates entirely at the model level. By jointly optimizing for reconstruction quality and targeted adversarial outcomes, AdvSR produces models that appear benign under standard image quality metrics while inducing downstream misclassification. We evaluate AdvSR on three SR architectures (SRCNN, EDSR, SwinIR) paired with a YOLOv11 classifier and demonstrate that AdvSR models can achieve high attack success rates with minimal quality degradation. These findings highlight a new model-level threat for imaging pipelines, with implications for how practitioners source and validate models in safety-critical applications.

💡 Research Summary

The paper introduces AdvSR, a novel model‑level adversarial attack framework that embeds malicious behavior directly into the weights of data‑driven super‑resolution (SR) networks during training. Unlike prior attacks that perturb low‑resolution inputs at inference time or insert backdoor triggers, AdvSR requires no test‑time access to inputs; the SR model itself becomes the attack vector. The authors formulate a joint loss consisting of (1) a conventional SR reconstruction loss (L1 plus a perceptual loss based on VGG features) to preserve high‑fidelity image reconstruction, and (2) an adversarial cross‑entropy loss that relabels source‑class images as a chosen target class for the downstream classifier. A balancing hyper‑parameter λ (re‑parameterized as a more interpretable ratio r) controls the trade‑off between image quality and attack potency.

Experiments are conducted on three representative SR architectures—CNN‑based SRCNN, residual CNN EDSR, and transformer‑based SwinIR—trained on a curated ImageNet subset containing 20 vehicle‑related classes. Low‑resolution inputs are generated by Gaussian blur and 2× downsampling. The downstream task is object classification using YOLOv11, fine‑tuned on either a 5‑class subset (YOLO‑5) or the full 20‑class set (YOLO‑20). For each SR model, a “clean” version (trained only with the reconstruction loss) and an “AdvSR” version (trained with the joint loss) are compared.

Results show that AdvSR can achieve very high targeted attack success rates (Targeted‑ASR) while preserving standard image quality metrics. On YOLO‑5, SRCNN, EDSR, and SwinIR attain Targeted‑ASR of 82 %, 68 %, and 80 % respectively, with only minor drops in PSNR/SSIM and negligible increase in LPIPS. Non‑source accuracy (NSA) remains above 97 %, indicating that the attack selectively misclassifies only the chosen source class. When the classifier is expanded to 20 classes (YOLO‑20), Targeted‑ASR drops (0–26 %) and Untargeted‑ASR rises (40–60 %), reflecting a shift from precise targeted misclassification to broader obfuscation as the downstream model becomes more complex. Correspondingly, image quality degrades more noticeably (PSNR reductions of 3–6 dB, SSIM drops of 0.1–0.4). Qualitative examples reveal subtle edge artifacts in AdvSR outputs that are sufficient to fool the classifier while keeping the overall scene visually plausible.

The study highlights a previously unexamined supply‑chain threat: SR models obtained from external repositories or fine‑tuned by third parties could be maliciously altered to act as covert adversarial modules. Since the attack does not rely on input manipulation, conventional defenses such as input sanitization or adversarial detection at inference are ineffective. The authors discuss limitations, including dependence on the downstream model’s architecture, the need for careful hyper‑parameter tuning per SR architecture, and evaluation limited to a single classification backbone.

Future work is suggested in several directions: extending the attack to other downstream tasks (object detection, segmentation), developing robust model‑integrity verification (hashes, digital signatures) and runtime defenses, and exploring more efficient adversarial loss formulations that further reduce quality loss. Overall, AdvSR demonstrates that embedding adversarial objectives into SR networks is a feasible and potent attack strategy, raising important security considerations for any imaging pipeline that incorporates learned super‑resolution as a preprocessing step.

Comments & Academic Discussion

Loading comments...

Leave a Comment