Learning Nonlinear Systems In-Context: From Synthetic Data to Real-World Motor Control

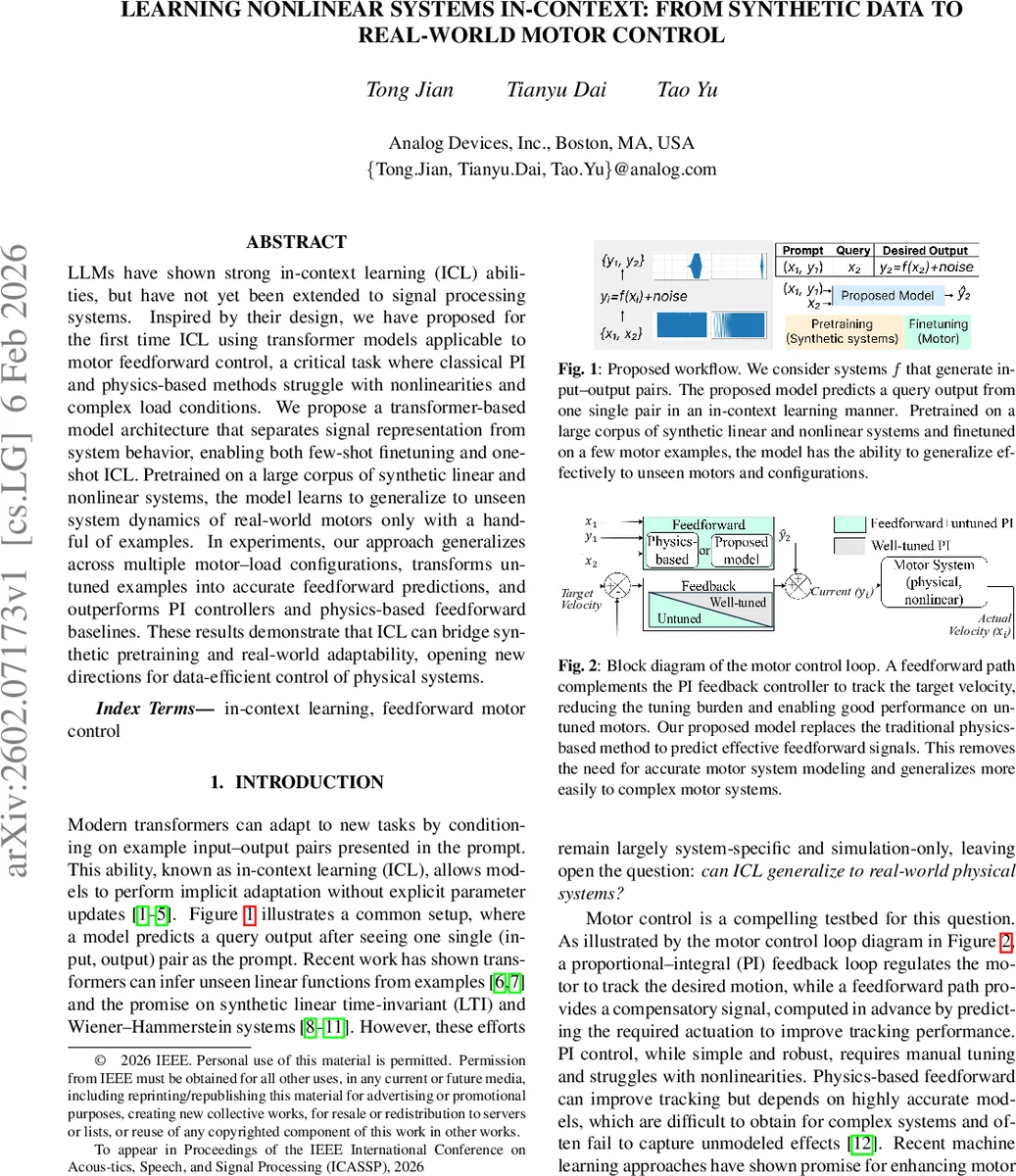

LLMs have shown strong in-context learning (ICL) abilities, but ICL has not yet been extended to signal processing systems. Inspired by their design, we propose, for the first time, ICL with transformer models for motor feedforward control, a critical task where classical PI and physics-based methods struggle with nonlinearities and complex load conditions. Our transformer-based architecture separates signal representation from system behavior, enabling both few-shot finetuning and one-shot ICL. Pretrained on a large corpus of synthetic linear and nonlinear systems, the model generalizes to the unseen dynamics of real-world motors with only a handful of examples. In experiments, our approach generalizes across multiple motor load configurations, transforms untuned examples into accurate feedforward predictions, and outperforms PI controllers and physics-based feedforward baselines. These results demonstrate that ICL can bridge synthetic pretraining and real-world adaptability, opening new directions for data-efficient control of physical systems.

💡 Research Summary

The paper introduces a novel approach that brings in‑context learning (ICL), a capability of large language models, to the domain of motor feed‑forward control. The authors design a transformer‑based architecture that separates signal representation from system behavior, enabling both few‑shot fine‑tuning and true one‑shot ICL. The method proceeds in two stages.

Stage 1 – Signal Representation.

A lightweight encoder‑decoder derived from Encodec converts raw input and output time‑series (velocity, torque current) into token sequences. The encoder downsamples by a factor of 320 and quantizes into eight codebooks of 128‑dimensional embeddings. Training uses a simple mean‑squared‑error reconstruction loss, achieving a mean RMSE of 0.0201 on held‑out synthetic signals, confirming that the tokens retain the essential waveform information while being length‑agnostic.
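The tokenization step can be illustrated with a minimal sketch. It assumes the tokenizer keeps EnCodec's residual vector quantization, in which each codebook quantizes the residual left by the previous one; the summary only states the downsampling factor (320), the number of codebooks (8), and the embedding size (128). The codebook size of 256, the random codebooks, and the function name `residual_vector_quantize` are hypothetical choices for illustration.

```python
import numpy as np

def residual_vector_quantize(latents, codebooks):
    """Quantize latent frames with a stack of codebooks (residual VQ).

    latents:   (num_frames, dim) encoder outputs
    codebooks: (num_codebooks, codebook_size, dim)
    Returns integer tokens (num_frames, num_codebooks) and the quantized latents.
    """
    residual = latents.copy()
    quantized = np.zeros_like(latents)
    tokens = np.empty((latents.shape[0], codebooks.shape[0]), dtype=np.int64)
    for level, codebook in enumerate(codebooks):
        # Squared Euclidean distance from every residual frame to every code vector
        dists = (
            (residual ** 2).sum(axis=1)[:, None]
            - 2.0 * residual @ codebook.T
            + (codebook ** 2).sum(axis=1)[None, :]
        )
        idx = dists.argmin(axis=1)
        tokens[:, level] = idx
        quantized += codebook[idx]
        residual -= codebook[idx]  # the next codebook sees what is left over
    return tokens, quantized

rng = np.random.default_rng(0)
num_samples = 32_000             # hypothetical signal length
hop = 320                        # downsampling factor from the summary
num_frames = num_samples // hop  # 100 latent frames
latents = rng.normal(size=(num_frames, 128))  # stand-in for encoder outputs
codebooks = rng.normal(size=(8, 256, 128))    # codebook size 256 is an assumption
tokens, quantized = residual_vector_quantize(latents, codebooks)
```

Under these assumptions, a 32,000-sample signal becomes 100 frames of 8 tokens each, so the token count scales with signal length rather than being fixed.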

Stage 2 – System‑Behavior Learning.

Using the frozen token sequences, a second transformer learns a system embedding z from a single input‑output pair. The embedding block processes the paired tokens together with a learnable “system token” through self‑attention layers with relative positional encodings. A contrastive loss pulls embeddings of the same underlying system together and pushes different systems apart, producing a latent space where linear‑time‑invariant (LTI) and nonlinear‑time‑invariant (NTI) dynamics form distinct clusters. The prediction block then concatenates the learned z with tokens of a new query input x₂ and generates the corresponding output tokens ŷ₂. A reconstruction loss on ŷ₂ completes the joint objective. On unseen synthetic systems, the model attains a mean RMSE of 0.0469 with only one example as prompt.
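The summary does not give the exact form of the contrastive loss. One common choice with the described behavior is a supervised, InfoNCE-style loss over cosine similarities, sketched below. The temperature, the embedding size, and the function name `contrastive_loss` are assumptions, and the toy embeddings are random stand-ins for the learned z.

```python
import numpy as np

def contrastive_loss(embeddings, system_ids, temperature=0.1):
    """Supervised contrastive loss: same-system embeddings are positives."""
    z = embeddings / np.linalg.norm(embeddings, axis=1, keepdims=True)
    sim = z @ z.T / temperature
    n = len(system_ids)
    self_mask = np.eye(n, dtype=bool)
    sim = np.where(self_mask, -np.inf, sim)  # never compare a sample with itself
    # Log-softmax over all other samples in the batch
    sim_max = sim.max(axis=1, keepdims=True)
    log_prob = sim - sim_max - np.log(np.exp(sim - sim_max).sum(axis=1, keepdims=True))
    positives = (system_ids[:, None] == system_ids[None, :]) & ~self_mask
    pos_counts = positives.sum(axis=1)
    has_pos = pos_counts > 0
    pos_log_prob = np.where(positives, log_prob, 0.0).sum(axis=1)
    # Average over anchors that have at least one positive
    return float(-(pos_log_prob[has_pos] / pos_counts[has_pos]).mean())

rng = np.random.default_rng(0)
centers = rng.normal(size=(4, 16))  # 4 hypothetical systems
embeddings = np.repeat(centers, 3, axis=0) + 0.05 * rng.normal(size=(12, 16))
true_ids = np.repeat(np.arange(4), 3)  # labels that match the clusters
wrong_ids = np.tile(np.arange(4), 3)   # labels that cut across the clusters

loss_matched = contrastive_loss(embeddings, true_ids)
loss_mismatched = contrastive_loss(embeddings, wrong_ids)
```

In this toy setup the loss is lower when the labels agree with the clusters, which is the pressure that would pull embeddings of the same system together during training.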

Synthetic Pre‑training Corpus.

The authors generate 60 k input‑output pairs from 20 k synthetic systems. LTI systems are created with SciPy filter design (orders 1‑4, low‑pass, high‑pass, band‑pass, band‑stop) with random cut‑off frequencies and gains. NTI systems augment random LTI models with dead‑zones, saturation limits, and static friction to emulate realistic nonlinearities. The dataset is split 18 k/2 k for training/testing, and no explicit system equations are ever exposed to the model.
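A rough sketch of how such a corpus could be generated follows. It is not the authors' generator: the Butterworth family, the sampling rate, the parameter ranges, and the placement of the nonlinearities (dead-zone on the input, saturation on the output) are all assumptions, and static friction is omitted for brevity. The helper names `random_lti` and `simulate` are hypothetical.

```python
import numpy as np
from scipy import signal

def random_lti(rng, fs=1000.0):
    """Draw a random filter of order 1-4 as second-order sections, plus a gain."""
    order = int(rng.integers(1, 5))
    btype = str(rng.choice(["lowpass", "highpass", "bandpass", "bandstop"]))
    nyquist = fs / 2
    if btype in ("bandpass", "bandstop"):
        low = rng.uniform(0.01, 0.4) * nyquist
        high = rng.uniform(1.5, 2.0) * low  # stays below Nyquist
        wn = [low, high]
    else:
        wn = rng.uniform(0.01, 0.8) * nyquist
    # Butterworth is an assumption; the summary only says "SciPy filter design"
    sos = signal.butter(order, wn, btype=btype, fs=fs, output="sos")
    gain = rng.uniform(0.5, 2.0)
    return sos, gain

def simulate(sos, gain, x, dead_zone=0.0, saturation=np.inf):
    """Apply an input dead-zone, the LTI filter, then an output saturation."""
    u = np.where(np.abs(x) <= dead_zone, 0.0, x - np.sign(x) * dead_zone)
    y = gain * signal.sosfilt(sos, u)
    return np.clip(y, -saturation, saturation)

rng = np.random.default_rng(0)
fs = 1000.0
t = np.arange(0, 2.0, 1 / fs)
x = np.sin(2 * np.pi * 5.0 * t)

sos, gain = random_lti(rng, fs)
y_lti = simulate(sos, gain, x)                                 # linear system
y_nti = simulate(sos, gain, x, dead_zone=0.1, saturation=0.5)  # nonlinear variant
```

Calling `simulate` with several different inputs for the same `sos` and `gain` would yield multiple input-output pairs per system, consistent with the roughly three pairs per system implied by the counts above.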

Real‑World Motor Experiments.

A hardware testbed uses Analog Devices’ TMC9660 controller driving both stepper and brushless DC (BLDC) motors under various inertial loads. The control goal is to predict the torque current i_q required to follow a target velocity ω. For fine‑tuning, two kinds of data are collected: (1) “untuned” pairs obtained from a plain PI controller (representing what a user would have without any prior calibration) and (2) “well‑tuned” pairs from a PI controller augmented with a physics‑based feed‑forward term (used as the target output during training). Only a single layer of the system‑behavior model is fine‑tuned for 20 epochs; the signal encoder remains frozen.
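To make the difference between the two data sources concrete, the sketch below simulates a plain PI velocity loop and the same loop with a physics-based feed-forward term on a hypothetical first-order motor model, J·dω/dt = Kt·i_q − B·ω. The model, all parameter values, and the function name `track` are illustrative assumptions; they do not describe the TMC9660 testbed.

```python
import numpy as np

def track(ref, dt, use_feedforward, J=1e-3, B=1e-3, Kt=0.1, kp=0.05, ki=0.5):
    """Return the velocity-tracking RMSE of a PI loop on a first-order motor model."""
    omega = ref[0]
    integral = 0.0
    errors = np.zeros(len(ref) - 1)
    for k in range(len(ref) - 1):
        err = ref[k] - omega
        integral += err * dt
        i_q = kp * err + ki * integral  # PI torque-current command
        if use_feedforward:
            accel = (ref[k + 1] - ref[k]) / dt
            # Current the (assumed) model says is needed to follow the reference
            i_q += (J * accel + B * ref[k]) / Kt
        omega += dt * (Kt * i_q - B * omega) / J  # forward-Euler plant update
        errors[k] = ref[k + 1] - omega
    return float(np.sqrt(np.mean(errors ** 2)))

dt = 1e-3
t = np.arange(0, 2.0, dt)
ref = 10.0 * np.clip(t, 0.0, 1.0)  # ramp to 10 rad/s, then hold

rmse_pi = track(ref, dt, use_feedforward=False)
rmse_ff = track(ref, dt, use_feedforward=True)
```

Here the feed-forward model matches the simulated plant exactly, so the tracking error almost vanishes. On real hardware the physics model is only approximate, which is the gap the learned feed-forward predictor is meant to close.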

The authors discover that chirp signals (0.001–10 Hz) make the most informative prompts because they excite a wide frequency range. All reported results therefore use chirp prompts with ramp or step queries.
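A chirp prompt over the stated band, together with simple ramp and step queries, can be generated with `scipy.signal.chirp`. The sampling rate, the duration, the logarithmic sweep, and the query shapes below are assumptions; the summary specifies only the 0.001–10 Hz range.

```python
import numpy as np
from scipy.signal import chirp

fs = 1000.0      # assumed sampling rate in Hz
duration = 10.0  # assumed prompt length in seconds
t = np.arange(0, duration, 1 / fs)

# Sweep from 0.001 Hz to 10 Hz; a logarithmic sweep is an assumption
prompt = chirp(t, f0=0.001, t1=duration, f1=10.0, method="logarithmic")

# Hypothetical query signals of the two kinds named in the summary
ramp_query = np.clip(t / 2.0, 0.0, 1.0)  # ramps from 0 to 1 over 2 s, then holds
step_query = (t >= 1.0).astype(float)    # unit step at t = 1 s
```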

Three evaluation scenarios are presented:

- Unseen load on known motors. The model, fine‑tuned on two motors across five load conditions, is tested on a new load within the same range. It achieves RMSEs of 0.48–0.70, outperforming a well‑tuned PI (1.36–1.74) and a physics‑based feed‑forward (0.70–0.76).

- Unseen motor. The same fine‑tuned model is applied to a completely different stepper motor. Despite having seen only two motors during fine‑tuning, the model still predicts accurate feed‑forward currents, demonstrating strong cross‑motor generalization.

- Two‑inertia system. A motor drives a linear slide; the system exhibits coupled dynamics that are hard to capture with analytical models. After fine‑tuning on just two load conditions (zero and heavy), the model accurately predicts the response for an intermediate load, achieving RMSE 1.13 versus 1.91 for the PI baseline and 0.46 for the physics‑based feed‑forward.
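All three scenarios are scored by root-mean-square error between predicted and reference signals. A minimal sketch of that metric follows; the function name `rmse` and the sample values are hypothetical, and the summary does not state the units or any normalization behind the reported numbers.

```python
import numpy as np

def rmse(predicted, reference):
    """Root-mean-square error between two equally long signals."""
    predicted = np.asarray(predicted, dtype=float)
    reference = np.asarray(reference, dtype=float)
    return float(np.sqrt(np.mean((predicted - reference) ** 2)))

# Toy values, not data from the paper
example_error = rmse([1.0, 2.0, 3.0], [1.0, 2.0, 5.0])  # sqrt(4 / 3)
```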

Ablation Studies.

The authors compare (a) the proposed two‑stage pre‑training against end‑to‑end joint training, finding the former reduces RMSE from 1.20 to 1.13 on ramp queries, and (b) pre‑training on both LTI + NTI versus LTI‑only data. Adding NTI improves performance modestly (e.g., RMSE 1.13 vs 1.17 on ramps, 1.79 vs 2.16 on steps) but even the LTI‑only model outperforms traditional baselines, indicating that exact synthetic matching is not essential.

Conclusions and Impact.

The work demonstrates that a transformer can learn a compact, transferable representation of system dynamics from raw time‑series alone, and that a single example from a new physical system can serve as an effective prompt for accurate feed‑forward control. This bridges the gap between massive synthetic pre‑training and data‑efficient real‑world adaptation, opening a path toward plug‑and‑play control solutions for a wide range of nonlinear electromechanical devices. Future extensions could target robotic manipulators, power‑electronics converters, or aerospace actuators where rapid deployment with minimal calibration data is critical.
