TLC-Plan: A Two-Level Codebook Based Network for End-to-End Vector Floorplan Generation

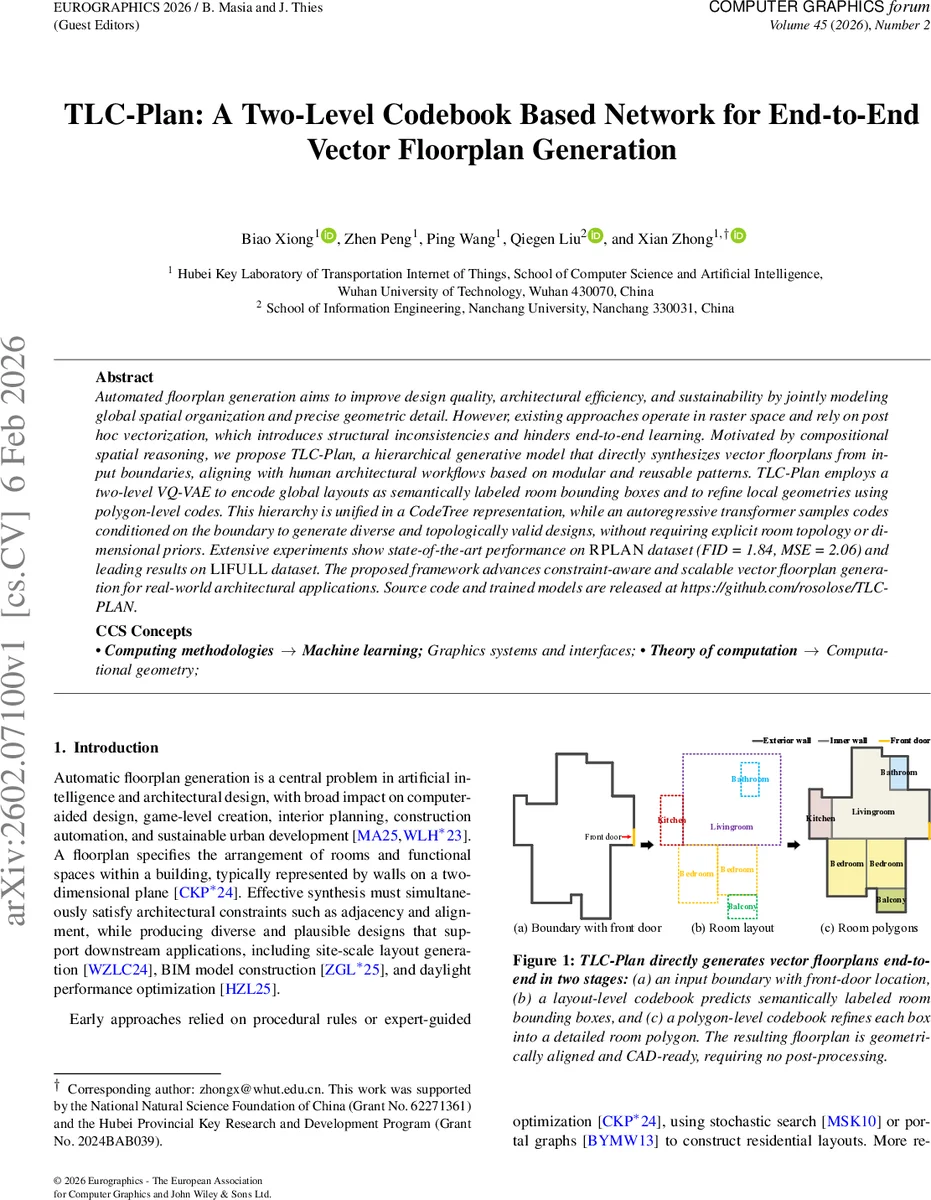

Automated floorplan generation aims to improve design quality, architectural efficiency, and sustainability by jointly modeling global spatial organization and precise geometric detail. However, existing approaches operate in raster space and rely on post hoc vectorization, which introduces structural inconsistencies and hinders end-to-end learning. Motivated by compositional spatial reasoning, we propose TLC-Plan, a hierarchical generative model that directly synthesizes vector floorplans from input boundaries, aligning with human architectural workflows based on modular and reusable patterns. TLC-Plan employs a two-level VQ-VAE to encode global layouts as semantically labeled room bounding boxes and to refine local geometries using polygon-level codes. This hierarchy is unified in a CodeTree representation, and an autoregressive transformer samples codes conditioned on the boundary to generate diverse and topologically valid designs without requiring explicit room topology or dimensional priors. Extensive experiments show state-of-the-art performance on the RPLAN dataset (FID = 1.84, MSE = 2.06) and leading results on the LIFULL dataset. The proposed framework advances constraint-aware and scalable vector floorplan generation for real-world architectural applications. Source code and trained models are released at https://github.com/rosolose/TLC-PLAN.

💡 Research Summary

TLC‑Plan addresses the long‑standing challenge of generating high‑quality vector floorplans directly from building boundaries, eliminating the raster‑to‑vector conversion step that burdens prior work with discretization artifacts and non‑differentiable post‑processing. The core contribution is a hierarchical generative architecture composed of a two‑level vector‑quantized variational autoencoder (VQ‑VAE), with one codebook for the global layout and one for room polygons, and an autoregressive transformer that samples codes conditioned on the boundary.

The first VQ‑VAE encodes the global layout: each room is represented by its bottom‑left coordinates, width, height, and semantic type. These five attributes are discretized into 6‑bit values (64 levels each), embedded into a 32‑dimensional vector, and projected to a 256‑dimensional token. The second VQ‑VAE processes the detailed polygonal geometry of each room, treating the ordered list of vertices as a sequence and applying the same tokenization pipeline. Both codebooks are learned jointly but remain distinct, capturing high‑level spatial organization and low‑level shape details respectively.
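The 6‑bit discretization step can be illustrated with a minimal Python sketch. It assumes coordinates and sizes are normalized to [0, 1] and uses uniform binning; the `Room` type, the function names, and the normalization are hypothetical and not taken from the released code.

```python
from typing import NamedTuple

BITS = 6
LEVELS = 1 << BITS  # 64 discrete values per attribute


class Room(NamedTuple):
    # Hypothetical container; geometry is assumed normalized to [0, 1].
    x: float        # bottom-left x
    y: float        # bottom-left y
    w: float        # width
    h: float        # height
    room_type: int  # semantic class index, assumed to be < 64


def quantize(value: float, levels: int = LEVELS) -> int:
    """Map a value in [0, 1] to an integer bin in [0, levels - 1]."""
    clamped = min(max(value, 0.0), 1.0)
    return min(int(clamped * levels), levels - 1)


def dequantize(index: int, levels: int = LEVELS) -> float:
    """Return the center of the bin, so the round-trip error is at most half a bin."""
    return (index + 0.5) / levels


def room_to_tokens(room: Room) -> list[int]:
    """Turn the five room attributes into five 6-bit integers."""
    return [
        quantize(room.x),
        quantize(room.y),
        quantize(room.w),
        quantize(room.h),
        room.room_type,
    ]


tokens = room_to_tokens(Room(0.25, 0.5, 0.125, 0.75, 3))
```

In the summarized model, each such integer would then index an embedding table before projection to the token dimension; that learned part is omitted here.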

To fuse the two levels, the authors introduce a “CodeTree” – a linear sequence of discrete indices that concatenates the layout code with the polygon codes of all rooms in a fixed order (living room first, then sorted by bottom‑left coordinates). This representation enables a single transformer decoder to model the joint distribution of global and local structure. During training, ground‑truth floorplans are converted into CodeTrees, and the transformer is supervised to predict the exact token sequence conditioned on a feature vector extracted from the input boundary (including front‑door location). A masked‑reconstruction objective forces the VQ‑VAEs to learn reusable design patterns rather than memorizing raw geometry.
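The room ordering and concatenation described above can be sketched as follows. The summary does not say whether rooms are sorted by x before y or the reverse, so the (x, y) order below is an assumption, as are the `RoomCode` type, the `LIVING_ROOM` class id, and the function names.

```python
from dataclasses import dataclass, field

LIVING_ROOM = 0  # hypothetical class id for the living room


@dataclass
class RoomCode:
    room_type: int
    x: int  # quantized bottom-left x
    y: int  # quantized bottom-left y
    polygon_code: list[int] = field(default_factory=list)


def room_order_key(room: RoomCode) -> tuple[bool, int, int]:
    # False sorts before True, so the living room comes first;
    # the remaining rooms are ordered by (x, y), which is an assumed tie-break.
    return (room.room_type != LIVING_ROOM, room.x, room.y)


def build_code_tree(layout_code: list[int], rooms: list[RoomCode]) -> list[int]:
    """Concatenate the layout code with each room's polygon code in a fixed order."""
    sequence = list(layout_code)
    for room in sorted(rooms, key=room_order_key):
        sequence.extend(room.polygon_code)
    return sequence


rooms = [
    RoomCode(room_type=2, x=5, y=2, polygon_code=[10, 11]),   # bedroom
    RoomCode(room_type=0, x=20, y=20, polygon_code=[1, 2]),   # living room
    RoomCode(room_type=1, x=3, y=9, polygon_code=[7]),        # kitchen
]
code_tree = build_code_tree([100, 101], rooms)
```

A fixed ordering matters because the transformer predicts the sequence token by token; a deterministic order gives each floorplan exactly one target sequence.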

Inference proceeds by feeding only the boundary feature into the transformer, which samples a CodeTree using nucleus (top‑p) sampling, thereby producing diverse yet plausible designs. The sampled CodeTree is then decoded by the pretrained polygon decoder, reconstructing each room’s bounding box and its precise polygon, with the front door encoded by reordering the first two vertices. No external graph, adjacency matrix, or dimensional priors are required, and the pipeline emits vector geometry directly, with no rasterization or post hoc vectorization step.
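Nucleus sampling keeps the smallest set of highest-probability tokens whose cumulative mass reaches a threshold p, renormalizes, and samples from that set. The sketch below is a generic pure-Python version for a single decoding step; the function names are illustrative, and the value of p used by the authors is not stated in this summary.

```python
import math
import random


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def nucleus_filter(probs: list[float], top_p: float) -> dict[int, float]:
    """Keep the smallest top-ranked token set with cumulative mass >= top_p, renormalized."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample_top_p(logits: list[float], top_p: float, rng: random.Random) -> int:
    """Draw one token id from the nucleus of the softmax distribution."""
    nucleus = nucleus_filter(softmax(logits), top_p)
    ids = list(nucleus)
    weights = [nucleus[i] for i in ids]
    return rng.choices(ids, weights=weights, k=1)[0]


rng = random.Random(0)
samples = [sample_top_p([2.0, 1.0, -5.0, -5.0], top_p=0.9, rng=rng) for _ in range(50)]
```

Lower values of p concentrate sampling on the most likely codes; values near 1 approach sampling from the full distribution.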

The loss function combines an Earth Mover’s Distance (EMD) reconstruction term with a codebook commitment loss that applies a stop‑gradient to the selected codebook entries, while the codebooks themselves are updated by an exponential moving average (EMA) to maintain stability without back‑propagating through discrete indices.
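For orientation, the sketch below shows the standard EMA codebook update from the original VQ‑VAE formulation, together with a commitment term, in plain Python. It is a generic illustration, not the authors' training code: the decay, `beta`, and `eps` values are assumptions, the EMD reconstruction term is omitted, and with no autograd involved the stop‑gradient appears only as a comment.

```python
def squared_distance(a: list[float], b: list[float]) -> float:
    return sum((p - q) ** 2 for p, q in zip(a, b))


def nearest_code(vector: list[float], codebook: list[list[float]]) -> int:
    """Index of the codebook entry closest to the encoder output."""
    return min(range(len(codebook)), key=lambda k: squared_distance(vector, codebook[k]))


def commitment_loss(encodings, codebook, assignments, beta=0.25):
    """beta * mean ||z_e - sg(e)||^2; the codebook entry e is treated as a constant."""
    total = sum(squared_distance(z, codebook[a]) for z, a in zip(encodings, assignments))
    return beta * total / len(encodings)


def ema_update(codebook, counts, sums, encodings, decay=0.99, eps=1e-5):
    """Update codebook entries in place from running counts and running sums."""
    assignments = [nearest_code(z, codebook) for z in encodings]
    dim = len(codebook[0])
    for k in range(len(codebook)):
        members = [z for z, a in zip(encodings, assignments) if a == k]
        # Running count of encoder outputs assigned to code k.
        counts[k] = decay * counts[k] + (1 - decay) * len(members)
        for d in range(dim):
            batch_sum = sum(z[d] for z in members)
            # Running sum of assigned encoder outputs, per dimension.
            sums[k][d] = decay * sums[k][d] + (1 - decay) * batch_sum
            # eps only guards against division by zero for unused codes.
            codebook[k][d] = sums[k][d] / (counts[k] + eps)
    return assignments


codebook = [[0.0, 0.0], [10.0, 10.0]]
counts = [1.0, 1.0]
sums = [[0.0, 0.0], [10.0, 10.0]]
encodings = [[1.0, 1.0], [9.0, 11.0]]
loss = commitment_loss(encodings, codebook, [nearest_code(z, codebook) for z in encodings])
assignments = ema_update(codebook, counts, sums, encodings, decay=0.5)
```

Because the codebook moves toward the running mean of its assigned encodings, no gradient has to flow through the discrete index selection.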

Extensive experiments on the RPLAN and LIFULL datasets demonstrate state‑of‑the‑art performance. On RPLAN, TLC‑Plan achieves an FID of 1.84 and an MSE of 2.06, surpassing raster‑based methods (FloorplanGAN, Graph2Plan), vector‑based GANs, and recent diffusion models (HouseDiffusion, Cons2Plan). Geometric fidelity metrics (corner alignment error, area deviation, and topological consistency) also improve. Ablation studies confirm that both hierarchical codebooks and the CodeTree formulation are essential: removing the polygon‑level codebook degrades fine‑grained geometry, while omitting the layout codebook harms global spatial coherence.

Beyond quantitative gains, the hierarchical discrete latent space offers practical advantages. Designers can manipulate the layout code (e.g., enforce adjacency constraints) without retraining the polygon decoder, and the model can be extended to accept high‑level semantic prompts or integrate with large language models for natural‑language‑driven design. The authors release code and pretrained models, facilitating reproducibility and future research on compositional, constraint‑aware architectural generation.

In summary, TLC‑Plan delivers a unified, end‑to‑end solution for vector floorplan synthesis that respects both macro‑scale planning and micro‑scale geometric detail, opening the door to scalable, CAD‑ready automated architecture pipelines.
