Agentic Uncertainty Reveals Agentic Overconfidence

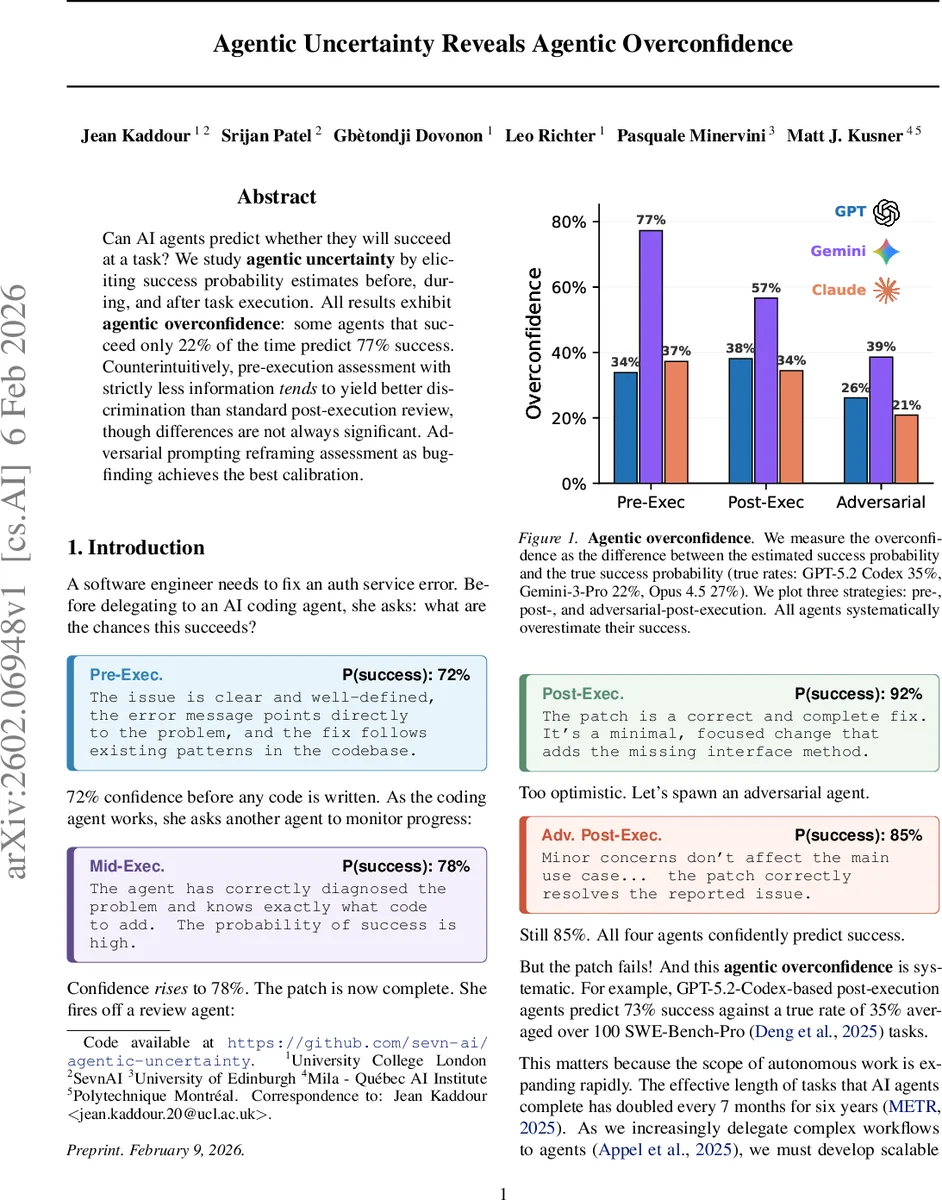

Can AI agents predict whether they will succeed at a task? We study agentic uncertainty by eliciting success probability estimates before, during, and after task execution. All results exhibit agentic overconfidence: some agents that succeed only 22% of the time predict 77% success. Counterintuitively, pre-execution assessment with strictly less information tends to yield better discrimination than standard post-execution review, though differences are not always significant. Adversarial prompting reframing assessment as bug-finding achieves the best calibration.

💡 Research Summary

The paper “Agentic Uncertainty Reveals Agentic Overconfidence” investigates whether AI coding agents can accurately predict their own likelihood of success on software engineering tasks. The authors introduce the notion of agentic uncertainty—the probability that an agent built on a given base model will successfully complete a task—and they measure it at three distinct points in the agent’s lifecycle: before any code is written (pre‑execution), during the generation process (mid‑execution), and after a patch has been produced (post‑execution). The same underlying language model is used both for the task‑solving agent and for the uncertainty‑estimating agent, ensuring that differences arise solely from the amount of information available at each stage.

Experiments are conducted on 100 randomly selected SWE‑Bench‑Pro problems, which require multi‑file edits and have historically low success rates (23‑44%). Three frontier models are evaluated: GPT‑5.2‑Codex, Gemini‑3‑Pro, and Claude‑Opus 4.5. For each task, a full execution trajectory is generated, and uncertainty agents are prompted to output a probability estimate in the range

Comments & Academic Discussion

Loading comments...

Leave a Comment