GaussianPOP: Principled Simplification Framework for Compact 3D Gaussian Splatting via Error Quantification

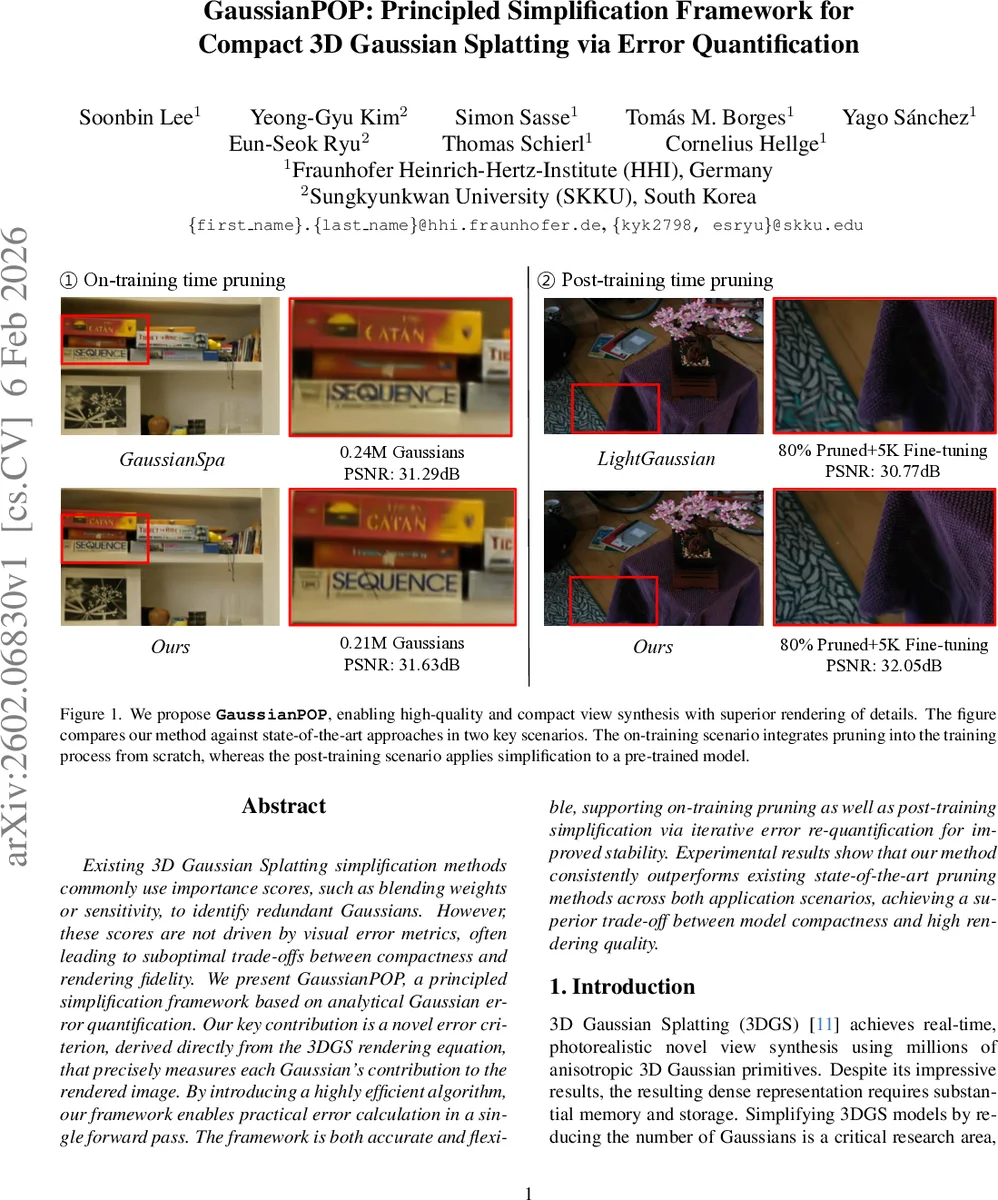

Existing 3D Gaussian Splatting simplification methods commonly use importance scores, such as blending weights or sensitivity, to identify redundant Gaussians. However, these scores are not driven by visual error metrics, often leading to suboptimal trade-offs between compactness and rendering fidelity. We present GaussianPOP, a principled simplification framework based on analytical Gaussian error quantification. Our key contribution is a novel error criterion, derived directly from the 3DGS rendering equation, that precisely measures each Gaussian’s contribution to the rendered image. By introducing a highly efficient algorithm, our framework enables practical error calculation in a single forward pass. The framework is both accurate and flexible, supporting on-training pruning as well as post-training simplification via iterative error re-quantification for improved stability. Experimental results show that our method consistently outperforms existing state-of-the-art pruning methods across both application scenarios, achieving a superior trade-off between model compactness and high rendering quality.

💡 Research Summary

3D Gaussian Splatting (3DGS) has emerged as a powerful technique for real‑time photorealistic view synthesis, representing a scene with millions of anisotropic 3D Gaussians that are blended in a front‑to‑back order. While the visual quality is impressive, the sheer number of Gaussians imposes heavy memory, storage, and rendering costs, motivating research on model simplification. Existing simplification methods rely on surrogate importance scores—opacity, accumulated blending weight, gradient‑based sensitivity, etc.—to decide which Gaussians to prune. These scores are only loosely correlated with the actual visual error, often leading to sub‑optimal trade‑offs between compactness and fidelity.

GaussianPOP addresses this gap by deriving a principled error metric directly from the 3DGS rendering equation. For a given pixel, the rendered color is

C_render = Σ_{k=1}^{N} T_k α_k c_k,

where c_k is the base color, α_k the per‑pixel opacity, and T_k = ∏_{j<k}(1−α_j) the transmittance accumulated from all preceding Gaussians. If Gaussian k is removed, its contribution disappears and the content behind it is no longer attenuated by (1−α_k), yielding a counterfactual color C′_render = Σ_{j<k} T_j α_j c_j + T_k b_{k+1}, where b_{k+1} denotes the normalized color of everything blended behind Gaussian k. The per‑pixel squared error caused by removing k is therefore

ΔSE_k = ‖C_render − C′_render‖² = ‖T_k α_k (c_k − b_{k+1})‖².
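The step from the two colors to the closed form can be written out explicitly. The sketch below assumes that b_{k+1} is the normalized color of everything behind Gaussian k, i.e. b_{k+1} = (1/T_{k+1}) Σ_{j>k} T_j α_j c_j, which is consistent with how the summary later reconstructs it from C_render and P_k; the split of the sum into three parts is an illustration, not notation taken from the paper.

```latex
\begin{aligned}
C_{\text{render}} &= \sum_{j<k} T_j \alpha_j c_j \;+\; T_k \alpha_k c_k \;+\; T_{k+1}\, b_{k+1},
  \qquad T_{k+1} = T_k\,(1-\alpha_k), \\
C'_{\text{render}} &= \sum_{j<k} T_j \alpha_j c_j \;+\; T_k\, b_{k+1}, \\
C_{\text{render}} - C'_{\text{render}}
  &= T_k \alpha_k c_k + T_k (1-\alpha_k)\, b_{k+1} - T_k\, b_{k+1}
   = T_k \alpha_k \,(c_k - b_{k+1}).
\end{aligned}
```

The terms in front of Gaussian k cancel, and the only change behind it is the missing factor (1−α_k), which is why a single difference c_k − b_{k+1} remains.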

Thus the error depends only on the effective contribution T_k α_k and the color difference between the Gaussian and the background that would become visible without it. This expression provides an exact, pixel‑wise quantification of visual impact, unlike heuristic scores.
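As a sanity check, the closed form can be compared numerically against a brute-force re-render with one Gaussian removed. The following is a minimal NumPy sketch for a single pixel, assuming a depth-sorted list of opacities and colors and no separate scene background term; the function names `composite`, `brute_force_delta_se`, and `closed_form_delta_se` are hypothetical and not taken from the paper.

```python
import numpy as np

def composite(alphas, colors):
    """Front-to-back blend: C = sum_k T_k * alpha_k * c_k."""
    alphas = np.asarray(alphas, dtype=np.float64)
    colors = np.asarray(colors, dtype=np.float64)
    # T[k] = prod_{j<k} (1 - alpha_j), with T[0] = 1.
    T = np.cumprod(np.concatenate(([1.0], 1.0 - alphas[:-1])))
    return ((T * alphas)[:, None] * colors).sum(axis=0)

def brute_force_delta_se(alphas, colors, k):
    """Squared error from actually re-rendering the pixel without Gaussian k."""
    alphas = np.asarray(alphas, dtype=np.float64)
    colors = np.asarray(colors, dtype=np.float64)
    full = composite(alphas, colors)
    without = composite(np.delete(alphas, k), np.delete(colors, k, axis=0))
    return float(np.sum((full - without) ** 2))

def closed_form_delta_se(alphas, colors, k):
    """||T_k * alpha_k * (c_k - b_{k+1})||^2 without re-rendering."""
    alphas = np.asarray(alphas, dtype=np.float64)
    colors = np.asarray(colors, dtype=np.float64)
    # T has length N + 1 so that T[k + 1] is defined for the last Gaussian too.
    T = np.concatenate(([1.0], np.cumprod(1.0 - alphas)))
    weights = T[:-1] * alphas
    # Assumed definition: b_{k+1} is the blended color behind k, normalized by T_{k+1}.
    behind = (weights[k + 1:, None] * colors[k + 1:]).sum(axis=0)
    b_next = behind / T[k + 1]
    return float(np.sum((T[k] * alphas[k] * (colors[k] - b_next)) ** 2))
```

With opacities strictly below 1, the two functions should agree up to floating-point rounding for every k, which is what makes the closed form a drop-in replacement for re-rendering.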

Computing ΔSE_k for every Gaussian naïvely would require re‑rendering the scene after each removal, on the order of M·V full renders (M = number of Gaussians, V = number of training views). GaussianPOP circumvents this with a “render‑once, compute‑locally” strategy (Algorithm 1). A single forward pass produces, for each pixel, an ordered list H of all Gaussians that affect it, together with the final pixel color C_render. In a second pass, prefix scans (a running sum and a running product) compute the cumulative color P_k = Σ_{j≤k} T_j α_j c_j and the transmittance T_k = ∏_{j<k}(1−α_j) for every k. In the third pass, each Gaussian independently reconstructs the background color b_{k+1} = (C_render − P_k) / (T_{k+1} + ε) and evaluates ΔSE_k using the closed‑form expression. All three phases are fully parallelizable on the GPU, yielding an O(N) algorithm (N = number of Gaussians) with modest memory overhead.
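The three phases can be illustrated for one pixel in NumPy. This is only a CPU sketch under stated assumptions: the per-pixel list is already depth-sorted, there is no separate scene background term, and the function name `per_pixel_delta_se` and the default `eps` value are hypothetical. It is not the paper's GPU implementation of Algorithm 1.

```python
import numpy as np

def per_pixel_delta_se(alphas, colors, eps=1e-8):
    """Return ΔSE_k for every Gaussian in one pixel's depth-sorted list."""
    alphas = np.asarray(alphas, dtype=np.float64)   # shape (N,)
    colors = np.asarray(colors, dtype=np.float64)   # shape (N, 3)

    # Running product: T[k] = prod_{j<k} (1 - alpha_j), stored with length N + 1.
    T = np.concatenate(([1.0], np.cumprod(1.0 - alphas)))
    weights = T[:-1] * alphas                       # T_k * alpha_k

    # Running sum: P[k] = sum_{j<=k} T_j * alpha_j * c_j; the last row is C_render.
    P = np.cumsum(weights[:, None] * colors, axis=0)
    c_render = P[-1]

    # Local step: every Gaussian reconstructs its own background color
    # b_{k+1} = (C_render - P_k) / (T_{k+1} + eps) and evaluates the closed form.
    b_next = (c_render - P) / (T[1:, None] + eps)
    diff = weights[:, None] * (colors - b_next)
    return np.sum(diff ** 2, axis=1)
```

Every array operation here is either a scan or an elementwise map, which is the property that lets the same computation run in parallel across pixels and Gaussians on a GPU.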

GaussianPOP can be applied in two practical scenarios. (1) On‑training pruning: during the training of a 3DGS model, the error metric is evaluated at predefined intervals; the fraction of Gaussians with the smallest ΔSE is removed, and training continues so that the remaining Gaussians can compensate. (2) Post‑training pruning: the per‑Gaussian error of a pre‑trained model is first quantified across all training views, low‑error Gaussians are pruned, and a short fine‑tuning phase (e.g., 5k iterations) restores quality. The post‑training pipeline can be iterated, recomputing ΔSE after each pruning step for higher accuracy.
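The selection step shared by both scenarios can be sketched as follows. The sketch assumes that a Gaussian's score is the sum of its ΔSE over all pixels and views (the summary only speaks of "cumulative ΔSE", so the exact aggregation is an assumption), and that a fixed fraction is pruned per round. The names `accumulate_scores`, `iterative_prune`, and the `quantify` callback are hypothetical, and fine-tuning between rounds is omitted.

```python
import numpy as np

def accumulate_scores(num_gaussians, pixel_lists):
    """Sum per-pixel ΔSE into one score per Gaussian.

    pixel_lists: iterable of (ids, delta_se) array pairs, one pair per pixel.
    """
    scores = np.zeros(num_gaussians, dtype=np.float64)
    for ids, delta_se in pixel_lists:
        # np.add.at accumulates correctly even if an id repeats within `ids`.
        np.add.at(scores, ids, delta_se)
    return scores

def iterative_prune(quantify, num_gaussians, fraction_per_round, rounds):
    """Repeatedly drop the lowest-error Gaussians, re-scoring after each round.

    quantify(alive_ids) must return one score per surviving Gaussian
    (hypothetical callback standing in for the error quantification pass).
    """
    alive = np.arange(num_gaussians)
    for _ in range(rounds):
        scores = np.asarray(quantify(alive), dtype=np.float64)
        n_prune = int(len(alive) * fraction_per_round)
        order = np.argsort(scores, kind="stable")   # ascending error
        alive = np.sort(alive[order[n_prune:]])     # keep the higher-error ones
        # A real pipeline would fine-tune the surviving Gaussians here.
    return alive
```

Re-scoring inside the loop reflects the idea of iterative re-quantification: once some Gaussians are gone, the transmittance and background seen by the survivors change, so earlier scores are no longer exact.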

Experiments were conducted on several benchmark datasets, including Mip‑NeRF 360 and Tanks & Temples. In the on‑training setting, GaussianPOP reduced a 0.24 M‑Gaussian model (PSNR 31.29 dB) to 0.21 M Gaussians (PSNR 31.63 dB), so the reported PSNR did not drop despite the smaller model. In the post‑training setting, up to 80 % of Gaussians were removed; after 5k fine‑tuning steps the LightGaussian variant achieved PSNR 32.05 dB, outperforming competing methods such as Mini‑Splatting, LightGaussian, MaskGaussian, and Compact3DGS. Table 1 and Table 2 show consistent gains in PSNR and SSIM, along with lower LPIPS, across all datasets. Figure 3 visualizes the distribution of cumulative ΔSE values on a log scale, revealing a long‑tailed distribution in which the majority of Gaussians contribute near‑zero error, confirming the presence of many redundant primitives.

Complexity analysis confirms that GaussianPOP’s error computation scales linearly with the number of Gaussians and requires only O(N) additional memory, making it practical for scenes with millions of primitives. Limitations include the reliance on an L2 color difference, which may not fully capture perceptual artifacts such as structural distortions or high‑frequency texture loss. Extremely thin translucent layers (very low α) can lead to under‑estimation of error because T_k α_k becomes tiny. Future work may integrate perceptual metrics, explore multi‑objective optimization, and extend the framework to dynamic scenes where real‑time re‑quantification is needed.

Overall, GaussianPOP provides a mathematically grounded, highly efficient, and flexible tool for compressing 3DGS models, delivering superior compactness‑quality trade‑offs in both training‑time and post‑training scenarios.
