Hierarchical Activity Recognition and Captioning from Long-Form Audio

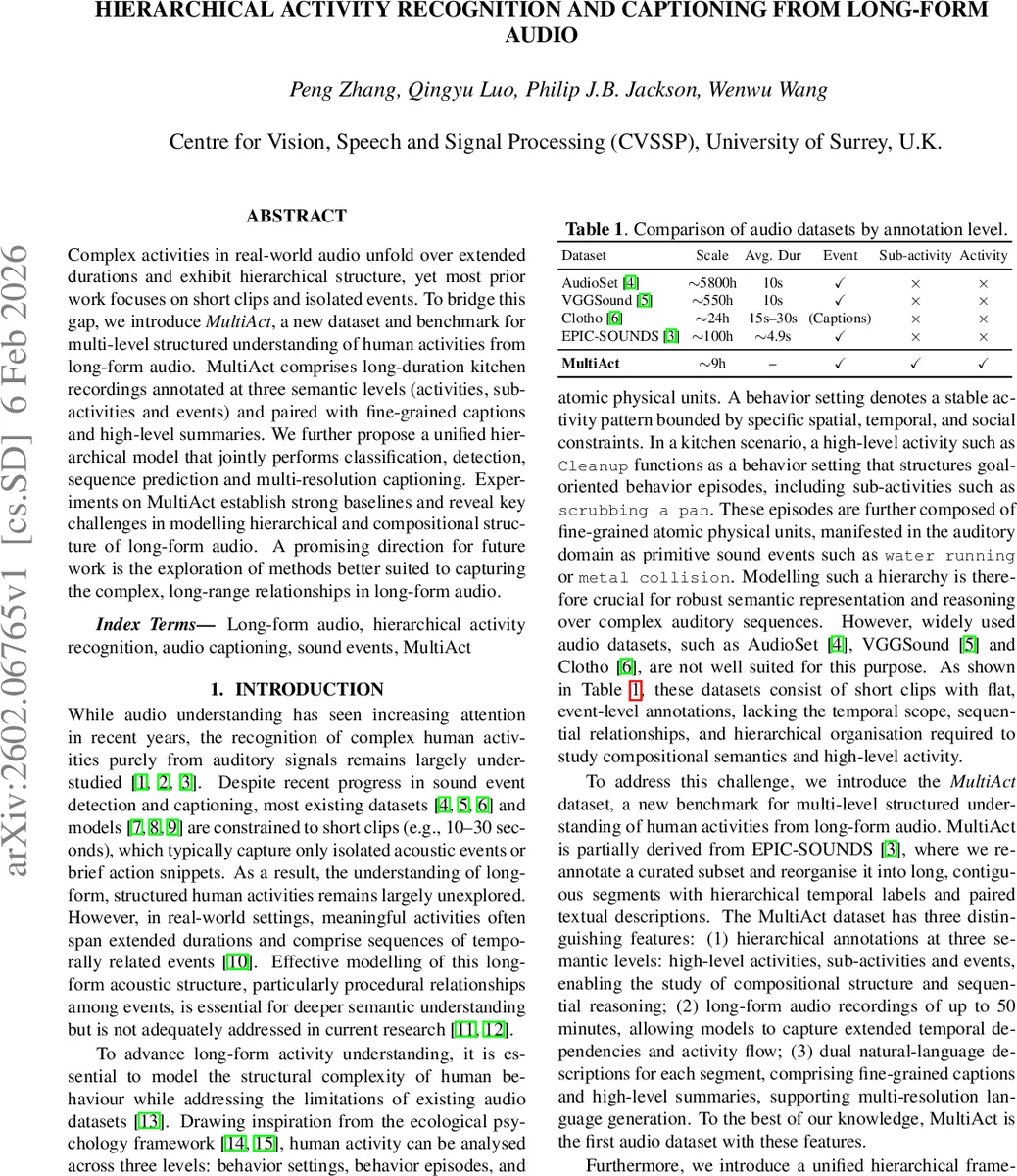

Complex activities in real-world audio unfold over extended durations and exhibit hierarchical structure, yet most prior work focuses on short clips and isolated events. To bridge this gap, we introduce MultiAct, a new dataset and benchmark for multi-level structured understanding of human activities from long-form audio. MultiAct comprises long-duration kitchen recordings annotated at three semantic levels (activities, sub-activities and events) and paired with fine-grained captions and high-level summaries. We further propose a unified hierarchical model that jointly performs classification, detection, sequence prediction and multi-resolution captioning. Experiments on MultiAct establish strong baselines and reveal key challenges in modelling hierarchical and compositional structure of long-form audio. A promising direction for future work is the exploration of methods better suited to capturing the complex, long-range relationships in long-form audio.

💡 Research Summary

The paper addresses a notable gap in audio understanding research: most existing datasets and models focus on short clips (typically 10–30 seconds) and flat event‑level annotations, which are insufficient for capturing the complex, procedural nature of real‑world human activities that unfold over minutes or even hours. To fill this gap, the authors introduce MultiAct, a new benchmark specifically designed for hierarchical activity recognition and captioning from long‑form audio. MultiAct consists of approximately 9 hours of continuous kitchen recordings drawn from EPIC‑SOUNDS, re‑annotated into three semantic levels—high‑level activities (e.g., “Cleanup”), mid‑level sub‑activities (e.g., “WashScrub”, “WipeTidy”), and low‑level sound events (e.g., “water running”, “metal collision”). The dataset contains 51 activity instances across three coarse categories, 472 sub‑activity instances spanning 12 categories, and 7,312 event instances covering 44 refined classes. Each segment is paired with two textual descriptions: fine‑grained, time‑ordered captions aligned to short acoustic events, and high‑level summaries describing the overall activity. This dual‑resolution annotation enables research on both detailed caption generation and abstract summarization.

The annotation pipeline leverages a two‑phase large language model (LLM) workflow. First, GPT‑4o is prompted with the raw audio and task instructions to generate a draft hierarchical annotation. Human annotators then iteratively correct these drafts using a predefined taxonomy and labeling rules, ensuring consistency across the three levels. This hybrid approach balances scalability with high label quality.

Methodologically, the authors propose a unified hierarchical framework that integrates a SlowFast‑style audio feature extractor (Auditory SlowFast, ASF) with three dedicated encoders for events, sub‑activities, and activities. ASF produces synchronized fast (high‑resolution, transient) and slow (long‑range spectral) pathways, yielding frame‑level token representations. The event encoder performs fine‑grained classification and boundary detection; the sub‑activity encoder adds temporal modeling via BiGRU layers and attention mechanisms, also predicting sub‑activity boundaries and sequences; the activity encoder aggregates sub‑activity embeddings to predict high‑level procedural categories. All three encoders share the same ASF tokens but are trained separately for their respective tasks.

For language generation, a BART‑based decoder is employed. Audio features are linearly projected and processed by a transformer audio encoder; optional textual cues (e.g., predicted sub‑activity sequences or activity labels) are encoded by a pretrained BART text encoder. The concatenated audio‑text embeddings feed the BART decoder, which autoregressively generates either fine‑grained captions or high‑level summaries, depending on the task.

The experimental evaluation covers four tasks:

-

Hierarchical Activity Classification – Predicting one label per level for a temporally bounded segment. Baselines include a simple linear classifier on ASF features for events, and BiGRU‑based models with self‑attention (ASF‑Atten) or cross‑attention (ASF‑CrossAtten) for sub‑activities and activities. Results show that cross‑attention yields the best activity‑level Top‑1 accuracy (83.3 % on the evaluation split), while self‑attention excels for sub‑activities (Top‑1 51.9 %).

-

Audio Activity Detection – Detecting temporal segments and assigning class labels. The authors adapt ActionFormer (originally for video) to audio, using ASF features extracted with 2‑second windows and 200 ms stride. Mean Average Precision (mAP) at IoU = 0.1 is 17 % for events and 44 % for sub‑activities, but performance drops sharply at higher IoU thresholds (e.g., 22 % at IoU = 0.5 for sub‑activities), indicating difficulty in precise boundary localization.

-

Audio Activity Sequence Prediction – Predicting the ordered list of sub‑activities within a segment without explicit boundaries. A Conformer encoder trained with the CTC loss is used. Activity Error Rate (AER) improves when the training context includes 2–4 sub‑activities (AER ≈ 66–75 %) but degrades with longer contexts (AER rises to ≈ 88 % for 8‑step context) and only partially recovers when the full sequence is used. This suggests that current models capture local procedural patterns but struggle with long‑range dependencies.

-

Audio Activity Captioning – Generating fine‑grained, time‑ordered captions and high‑level summaries. While detailed quantitative results are not fully reported in the excerpt, the architecture demonstrates the feasibility of multi‑resolution captioning within a single unified model.

Overall, the paper makes three primary contributions: (i) the MultiAct dataset, the first audio benchmark offering hierarchical annotations and dual‑resolution textual descriptions for long‑form recordings; (ii) a unified hierarchical model that jointly handles classification, detection, sequence prediction, and captioning; and (iii) a comprehensive baseline suite that reveals current strengths (reasonable activity‑level classification) and key weaknesses (boundary precision, long‑range sequence modeling). The authors argue that future work should explore architectures better suited for capturing complex, long‑range relationships—such as transformer models with extended context windows, hierarchical graph neural networks, or multimodal pre‑training that jointly learns audio‑text representations.

In summary, MultiAct opens a new research frontier in audio understanding, shifting focus from isolated events to structured, procedural activities that span minutes. The proposed hierarchical framework provides a solid starting point, but the experimental results clearly indicate that more sophisticated temporal modeling and cross‑level reasoning are needed to fully exploit the richness of long‑form audio.

Comments & Academic Discussion

Loading comments...

Leave a Comment