Not All Layers Need Tuning: Selective Layer Restoration Recovers Diversity

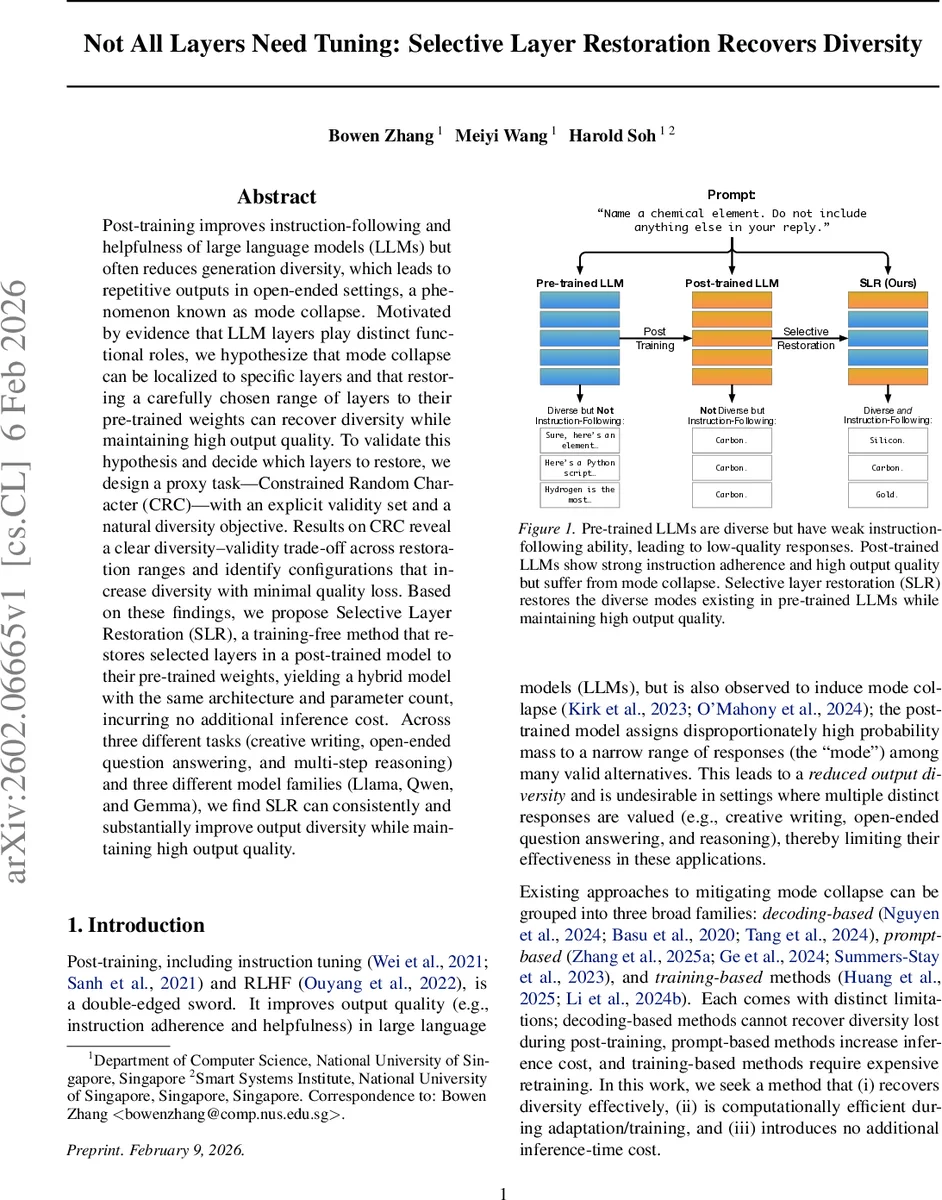

Post-training improves instruction-following and helpfulness of large language models (LLMs) but often reduces generation diversity, which leads to repetitive outputs in open-ended settings, a phenomenon known as mode collapse. Motivated by evidence that LLM layers play distinct functional roles, we hypothesize that mode collapse can be localized to specific layers and that restoring a carefully chosen range of layers to their pre-trained weights can recover diversity while maintaining high output quality. To validate this hypothesis and decide which layers to restore, we design a proxy task – Constrained Random Character(CRC) – with an explicit validity set and a natural diversity objective. Results on CRC reveal a clear diversity-validity trade-off across restoration ranges and identify configurations that increase diversity with minimal quality loss. Based on these findings, we propose Selective Layer Restoration (SLR), a training-free method that restores selected layers in a post-trained model to their pre-trained weights, yielding a hybrid model with the same architecture and parameter count, incurring no additional inference cost. Across three different tasks (creative writing, open-ended question answering, and multi-step reasoning) and three different model families (Llama, Qwen, and Gemma), we find SLR can consistently and substantially improve output diversity while maintaining high output quality.

💡 Research Summary

This paper addresses a critical issue in large language model (LLM) alignment known as “mode collapse,” where post-training processes like instruction tuning and RLHF enhance helpfulness and instruction-following but drastically reduce the diversity of model outputs, leading to repetitive and uncreative responses. The authors propose a novel, training-free intervention called Selective Layer Restoration (SLR) to mitigate this problem. Motivated by evidence that transformer layers serve distinct functional roles and that post-training primarily steers rather than creates knowledge, the core hypothesis is that the detrimental effects of mode collapse are localized to specific layers within the network. Consequently, restoring a carefully chosen contiguous block of layers in a post-trained model back to their original pre-trained weights can recover lost diversity while preserving the enhanced capabilities gained from post-training.

The key challenge is determining which layers to restore. To solve this efficiently, the authors design a simple proxy task named Constrained Random Character (CRC). CRC prompts (e.g., “Generate a random integer between 0 and 5”) have a finite, explicit set of valid single-token outputs. This allows for automatic, unambiguous measurement of quality (the total probability mass on valid tokens, indicating instruction adherence) and diversity (the entropy over the valid tokens, indicating output variation). By evaluating all possible contiguous restoration intervals on CRC, the authors map a clear diversity-quality trade-off landscape for each model. This analysis reveals that specific intervals exist where diversity can be substantially increased with only minimal loss in validity. The optimal interval—selected by maximizing diversity subject to a quality constraint—is then used for the downstream application of SLR.

The proposed SLR method is evaluated extensively across three diverse open-ended tasks (creative writing, open-ended QA, and multi-step reasoning) and three different model families (Llama3.1-8B, Qwen2.5-7B, and Gemma2-9B). Results consistently show that SLR significantly improves output diversity (as measured by metrics like distinct n-gram counts and embedding-based variance) while maintaining high output quality (as judged by GPT-4 or task-specific metrics for helpfulness, correctness, etc.). Furthermore, the paper demonstrates that SLR is complementary to existing decoding-based (e.g., high-temperature sampling) and prompt-based diversity techniques, yielding additional gains when combined.

In summary, this work provides strong evidence for a modular view of post-trained LLMs, where not all layers are equally affected by alignment training. The SLR method offers a practical, cost-effective solution to the mode collapse problem by performing targeted surgery in the model’s weight space. It requires no additional training, introduces no inference overhead, and effectively hybridizes the strengths of both pre-trained (diversity) and post-trained (helpfulness) models. The CRC proxy task serves as a crucial and efficient tool for guiding the restoration process. The findings suggest that desirable model properties can be recovered through precise architectural interventions, opening avenues for future work in model merging and editing.

Comments & Academic Discussion

Loading comments...

Leave a Comment