Do Prompts Guarantee Safety? Mitigating Toxicity from LLM Generations through Subspace Intervention

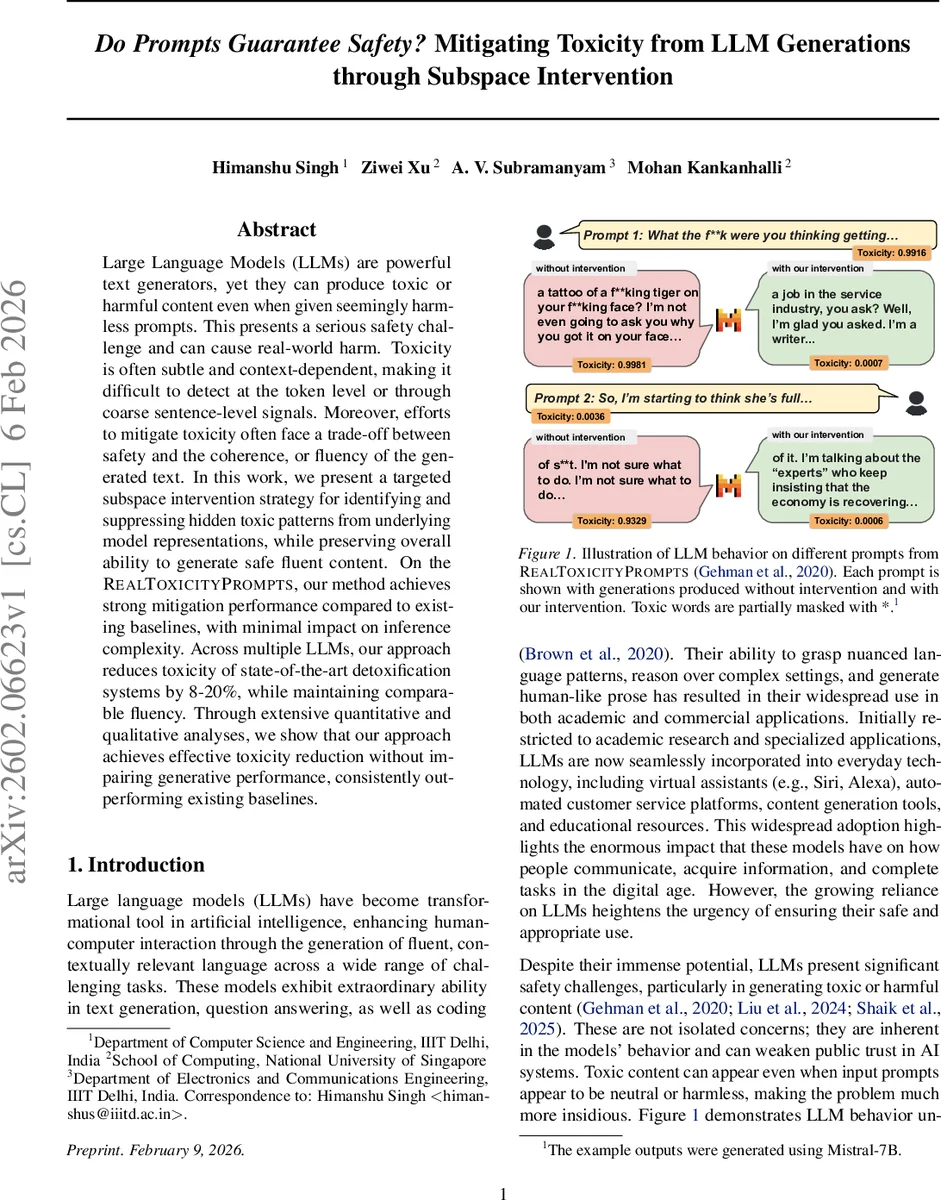

Large Language Models (LLMs) are powerful text generators, yet they can produce toxic or harmful content even when given seemingly harmless prompts. This presents a serious safety challenge and can cause real-world harm. Toxicity is often subtle and context-dependent, making it difficult to detect at the token level or through coarse sentence-level signals. Moreover, efforts to mitigate toxicity often face a trade-off between safety and the coherence, or fluency of the generated text. In this work, we present a targeted subspace intervention strategy for identifying and suppressing hidden toxic patterns from underlying model representations, while preserving overall ability to generate safe fluent content. On the RealToxicityPrompts, our method achieves strong mitigation performance compared to existing baselines, with minimal impact on inference complexity. Across multiple LLMs, our approach reduces toxicity of state-of-the-art detoxification systems by 8-20%, while maintaining comparable fluency. Through extensive quantitative and qualitative analyses, we show that our approach achieves effective toxicity reduction without impairing generative performance, consistently outperforming existing baselines.

💡 Research Summary

The paper tackles the persistent safety problem of large language models (LLMs) that can produce toxic content even when prompted with innocuous inputs. Rather than relying on prompt‑level filters, reinforcement learning from human feedback (RLHF), or output‑level re‑ranking, the authors propose a representation‑level intervention that directly suppresses the latent toxic directions encoded in the model’s hidden states.

The methodology consists of four stages. First, a subset of 2,000 “toxic‑prone” prompts from the RealToxicityPrompts benchmark (toxicity > 0.5) is used to generate continuations with the target LLM. Second, each generated sequence is scored by an off‑the‑shelf toxicity classifier; token‑level toxicity labels are derived via a masking‑based ablation that marks a token as toxic if its removal reduces the overall toxicity score beyond a fixed threshold. Third, for every token identified as toxic, the gradient of the log‑probability of that token with respect to the final‑layer hidden representation is computed. These gradients are ℓ₂‑normalized and stacked into a matrix G. Singular value decomposition (SVD) of G yields the top‑k right singular vectors Vₖ, which span a low‑dimensional subspace Sₜₒₓ that captures the directions most responsible for toxic generation.

During inference, the hidden state h is projected away from Sₜₒₓ: hₚᵣₒⱼ = h − β P h, where P = VₖVₖᵀ is the orthogonal projector and β ∈ (0, 1] controls intervention strength. The projected hidden state is fed through the unchanged language‑model head, so decoding proceeds exactly as in the original model with negligible computational overhead.

The authors provide a theoretical analysis showing that feature‑space alignment (the projection) corresponds to a restricted subset of possible head‑weight edits. Because vocab ≫ hidden‑size, the mapping W₀A (where A = −βP) cannot span the full weight space, guaranteeing a smaller hypothesis class, tighter generalization bounds, and better preservation of pretrained knowledge.

Empirical evaluation is performed on several state‑of‑the‑art LLMs (Mistral‑7B, Llama‑2‑13B, GPT‑NeoX‑20B, etc.). Compared with strong baselines—including DPO, RLHF‑tuned models, and token‑level detoxifiers—the proposed subspace intervention reduces toxicity scores by an additional 8 %–20 % on RealToxicityPrompts while leaving perplexity, MAUVE, and human‑rated fluency essentially unchanged. Ablation studies vary the subspace dimension k (5, 10, 20) and the projection strength β, finding that k ≈ 10 and β ≈ 0.3 achieve the best safety‑quality trade‑off. Layer‑wise analysis reveals that the top three transformer layers contain the most influential toxic directions.

Limitations are acknowledged: the gradient‑derived subspace depends on the training data and toxicity classifier, requiring re‑computation for new domains or languages; hyper‑parameters β and k must be tuned per deployment scenario; and the current experiments focus on English. Future work is suggested on meta‑learning to automate subspace discovery, extending to multilingual settings, and combining subspace steering with existing alignment techniques for a hybrid safety framework.

In summary, the paper introduces a lightweight, theoretically grounded, and empirically validated technique for mitigating LLM toxicity by intervening in a learned low‑dimensional subspace of hidden representations. This approach achieves substantial safety gains without sacrificing the generative quality that makes LLMs valuable, offering a practical path toward safer AI deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment