R2LED: Equipping Retrieval and Refinement in Lifelong User Modeling with Semantic IDs for CTR Prediction

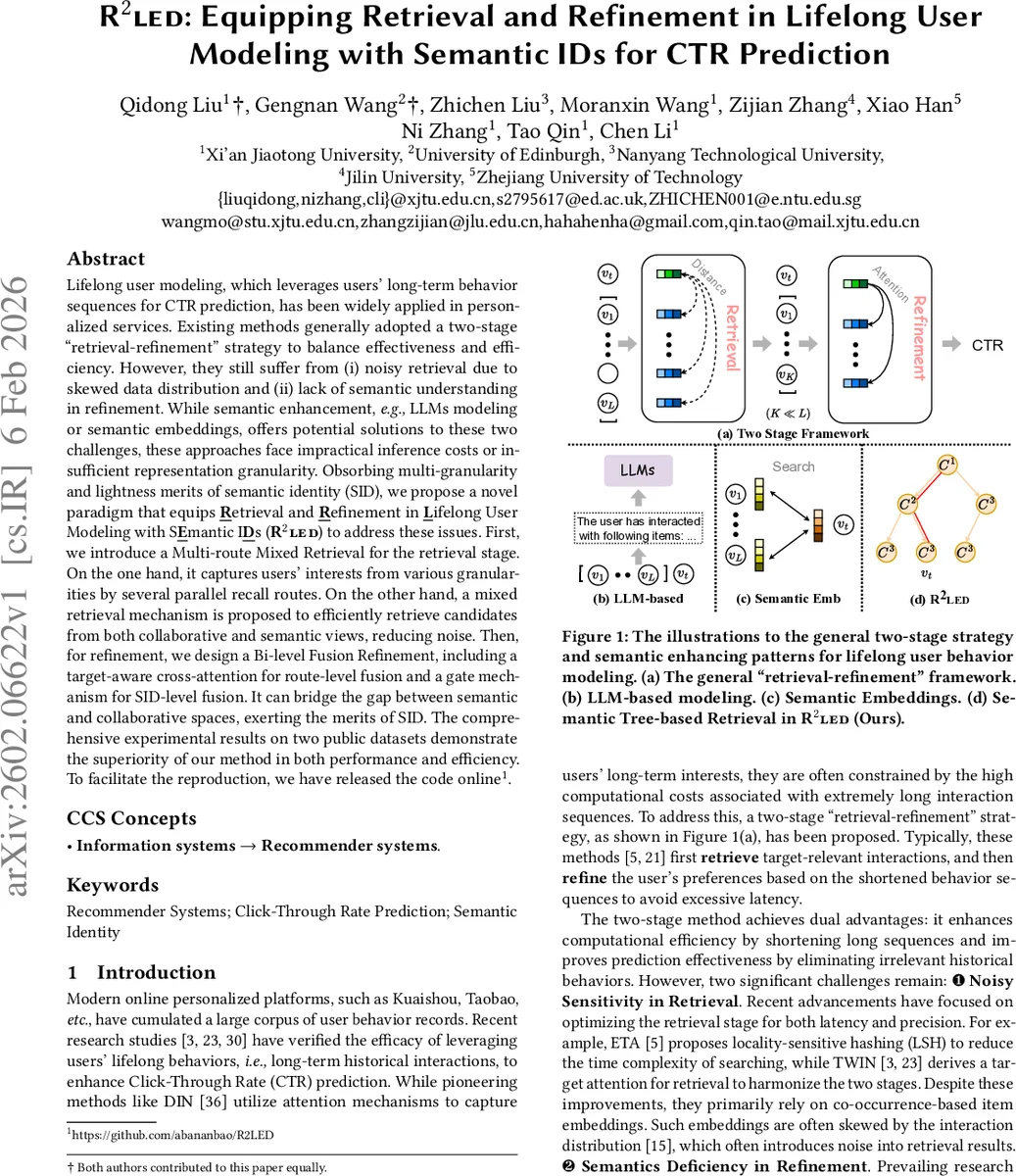

Lifelong user modeling, which leverages users’ long-term behavior sequences for CTR prediction, has been widely applied in personalized services. Existing methods generally adopted a two-stage “retrieval-refinement” strategy to balance effectiveness and efficiency. However, they still suffer from (i) noisy retrieval due to skewed data distribution and (ii) lack of semantic understanding in refinement. While semantic enhancement, e.g., LLMs modeling or semantic embeddings, offers potential solutions to these two challenges, these approaches face impractical inference costs or insufficient representation granularity. Obsorbing multi-granularity and lightness merits of semantic identity (SID), we propose a novel paradigm that equips retrieval and refinement in Lifelong User Modeling with SEmantic IDs (R2LED) to address these issues. First, we introduce a Multi-route Mixed Retrieval for the retrieval stage. On the one hand, it captures users’ interests from various granularities by several parallel recall routes. On the other hand, a mixed retrieval mechanism is proposed to efficiently retrieve candidates from both collaborative and semantic views, reducing noise. Then, for refinement, we design a Bi-level Fusion Refinement, including a target-aware cross-attention for route-level fusion and a gate mechanism for SID-level fusion. It can bridge the gap between semantic and collaborative spaces, exerting the merits of SID. The comprehensive experimental results on two public datasets demonstrate the superiority of our method in both performance and efficiency. To facilitate the reproduction, we have released the code online https://github.com/abananbao/R2LED.

💡 Research Summary

The paper “R2LED: Equipping Retrieval and Refinement in Lifelong User Modeling with Semantic IDs for CTR Prediction” tackles two persistent problems in long‑term user modeling for click‑through‑rate (CTR) prediction: noisy retrieval caused by skewed interaction distributions, and the lack of semantic understanding during the refinement stage. Existing two‑stage pipelines (retrieval → refinement) typically rely on collaborative‑based item embeddings for the retrieval step, which can introduce many irrelevant items when the data is long‑tailed. Meanwhile, attempts to inject semantics either fine‑tune large language models (LLMs) – which are prohibitively expensive at inference time – or use a single dense semantic embedding, which lacks granularity and incurs high latency for large‑scale retrieval.

R2LED proposes to bridge this gap by leveraging Semantic IDs (SIDs), a lightweight, multi‑granular representation obtained by quantizing high‑dimensional semantic embeddings (e.g., text embeddings) into a short sequence of discrete codes across several hierarchical levels (typically three). Each level corresponds to a coarser‑to‑finer semantic cluster, forming a prefix‑tree structure that enables efficient tree‑based matching while preserving rich semantic information.

The framework consists of two major components:

-

Multi‑route Mixed Retrieval (MMR) – a retrieval stage that simultaneously exploits collaborative and semantic signals through three parallel routes:

- Target Route: Uses the target item as a query in both spaces. In the semantic space, the target’s SID tuple is matched against historical SIDs via a strict prefix‑matching score that rewards deeper matches only when all higher‑level prefixes align. In the collaborative space, a locality‑sensitive hashing (LSH) based retrieval (SimHash) ranks items by Hamming distance. The two candidate sets are merged using a confidence‑gated filling mechanism: the variance of normalized Hamming distances serves as a quality indicator; only when the variance exceeds a threshold are the collaborative candidates kept, thereby filtering out noisy neighbors.

- Recent Route: Constructs a recent semantic window from the last W interactions, encodes it as a SID descriptor, and searches the entire long‑term history for items that share semantic codes with this window. This captures short‑term intent while avoiding direct inclusion of potentially noisy recent clicks.

- Global Route: Leverages global SID frequency statistics to retrieve items reflecting the user’s long‑term, coarse‑grained interests.

Each route returns a top‑K subsequence, yielding a multi‑route candidate set (M_{u, t} = {M^{T}{u,t}, M^{R}{u}, M^{G}_{u}}).

-

Bi‑level Fusion Refinement (BFR) – a refinement stage that aligns and fuses the heterogeneous signals:

- Route‑level Fusion: Applies a target‑aware cross‑attention mechanism to each route’s subsequence, producing route‑specific interest vectors (I_{\text{global}}, I_{\text{recent}}, I_{\text{target}}).

- SID‑level Fusion: Introduces a gated fusion across the three SID levels. A learnable gate (scalar or context‑dependent vector) weights each level’s contribution, allowing the model to dynamically balance fine‑grained semantic cues against coarser ones and against traditional ID‑based collaborative features.

- The fused representation is fed into a downstream CTR predictor (e.g., a deep neural network or factorization machine) to output the click probability.

Experimental Evaluation

The authors conduct extensive experiments on two public datasets (an Amazon review dataset and a Taobao click log). Baselines include DIN, ETA, TWIN, and recent LLM‑based approaches. R2LED consistently outperforms all baselines, achieving 2–4 percentage‑point gains in AUC/GAUC while maintaining inference latency under 30 % of that required by high‑dimensional semantic embedding methods. Memory consumption is reduced by roughly 40 % thanks to the compact SID representation. Ablation studies confirm that (i) the SID‑tree retrieval alone reduces noise but is insufficient without collaborative cues; (ii) the confidence gate is crucial for preventing noisy LSH results from degrading performance; and (iii) both route‑level and SID‑level fusions contribute additively to the final gain.

Contributions and Significance

- Introduces a novel two‑stage architecture that seamlessly integrates lightweight semantic IDs into both retrieval and refinement, addressing noise and semantic deficiency simultaneously.

- Designs a multi‑route mixed retrieval mechanism that combines collaborative LSH and hierarchical SID tree matching, with a variance‑based confidence gate to filter low‑quality neighbors.

- Proposes a bi‑level fusion refinement that aligns semantic and collaborative spaces via cross‑attention and gated SID‑level aggregation, effectively handling granularity mismatches.

- Provides open‑source code and reproducible experiments, demonstrating practical applicability for large‑scale online services.

Limitations and Future Work

Current SIDs are derived solely from textual features; extending to multimodal encoders (image, audio) could enrich semantics. The prefix‑tree is static, requiring re‑quantization when new items appear; an online‑updateable SID tokenizer would improve scalability. Finally, exploring joint SID representations for both users and items, or hybridizing SIDs with LLM‑generated contextual embeddings, presents promising directions for further boosting long‑term user modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment