Force Generative Imitation Learning: Bridging Position Trajectory and Force Commands through Control Technique

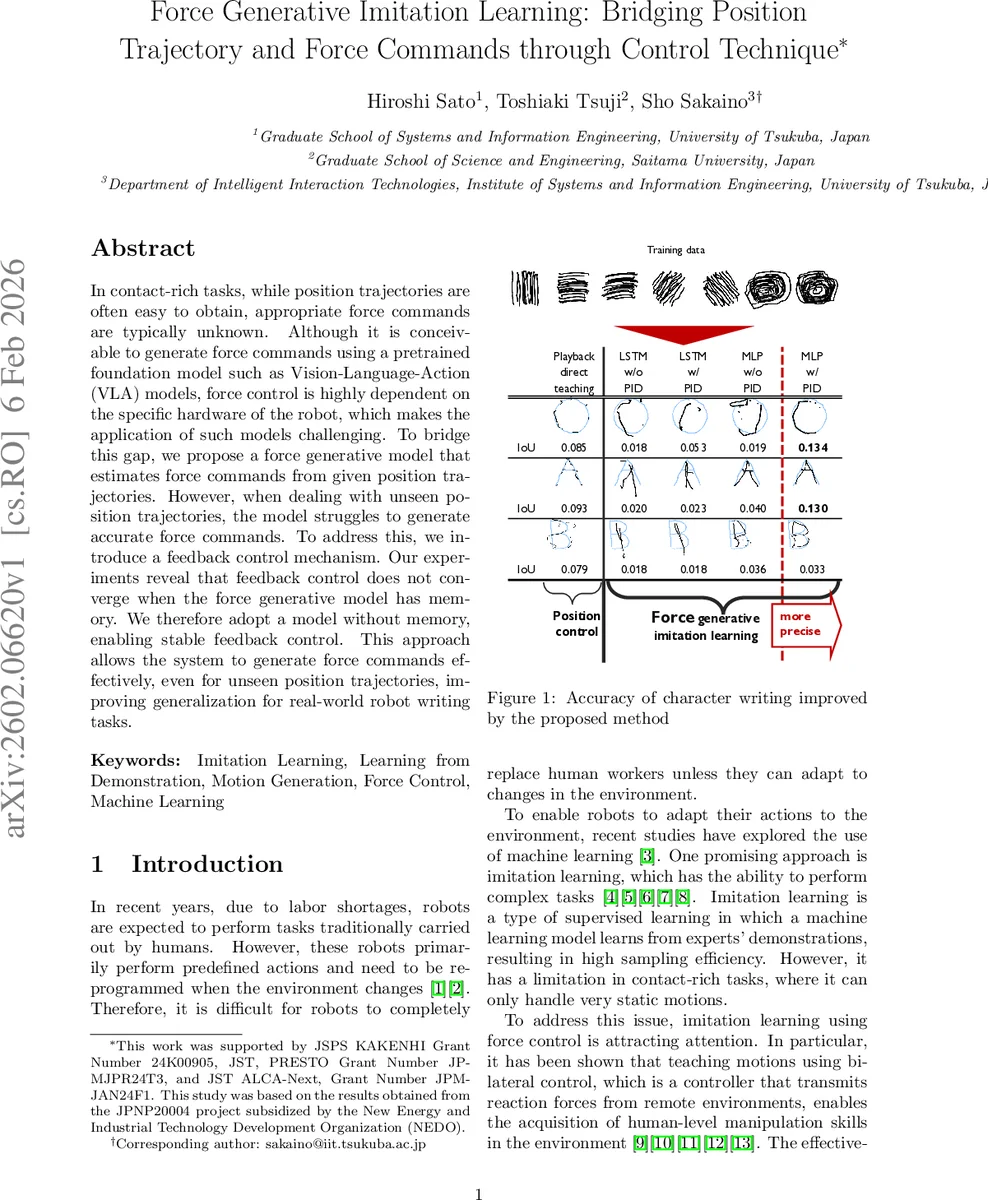

In contact-rich tasks, while position trajectories are often easy to obtain, appropriate force commands are typically unknown. Although it is conceivable to generate force commands using a pretrained foundation model such as Vision-Language-Action (VLA) models, force control is highly dependent on the specific hardware of the robot, which makes the application of such models challenging. To bridge this gap, we propose a force generative model that estimates force commands from given position trajectories. However, when dealing with unseen position trajectories, the model struggles to generate accurate force commands. To address this, we introduce a feedback control mechanism. Our experiments reveal that feedback control does not converge when the force generative model has memory. We therefore adopt a model without memory, enabling stable feedback control. This approach allows the system to generate force commands effectively, even for unseen position trajectories, improving generalization for real-world robot writing tasks.

💡 Research Summary

**

The paper addresses a fundamental challenge in contact‑rich robotic manipulation: while position trajectories are readily obtainable from demonstrations, the corresponding force commands required for compliant interaction are often unavailable. Existing approaches that attempt to generate force commands using pretrained foundation models such as Vision‑Language‑Action (VLA) struggle because force control is highly hardware‑specific, making direct transfer infeasible. To bridge this gap, the authors propose a hierarchical force‑generative imitation learning framework that predicts force commands directly from given position trajectories.

The architecture consists of two distinct layers. The upper layer is a memory‑based network (implemented as an LSTM) that processes the current state and historical information to forecast future joint angles (θ) and angular velocities (˙θ) up to ten steps ahead. These predictions constitute the “upper‑layer trajectory” and are supplied to the lower layer. The lower layer is deliberately designed to be memory‑less, realized as a multilayer perceptron (MLP). It receives the current joint angles, angular velocities, torques, and the ten‑step‑ahead trajectory from the upper layer, and outputs the next‑step state estimate (ŝₖ₊₁) together with the corresponding action (âₖ₊₁), which includes the desired torque command.

A proportional‑integral‑derivative (PID) feedback controller is embedded within the loop to correct deviations between the lower‑layer’s predicted state and the upper‑layer trajectory. Specifically, the error term

uₖ₊₁ = Kₚ(θₖ₊₁ – ŝθₖ₊₁) + K_d(˙θₖ₊₁ – ŝ˙θₖ₊₁) + K_i ∫(θₜ₊₁ – ŝθₜ₊₁) dt

is computed, differentiated, and filtered (cut‑off 0.3 Hz). The resulting correction is added to the future angles and velocities before being fed back into the upper‑layer network. This scheme ensures that the lower‑layer, being memory‑less, does not introduce the instability observed when recurrent networks are placed inside a feedback loop.

The experimental platform is a CRANE‑X7 manipulator (7 DOF plus a custom gripper) operating at a 500 Hz control frequency. Data were collected via bilateral teleoperation (leader‑follower) for a character‑writing task, which provides a clear metric for trajectory fidelity. Five line patterns and two circular patterns were demonstrated at two board heights, yielding 70 trials (56 for training, 14 for validation). During training, joint angles, angular velocities, and torques are available; during autonomous execution, only joint angles are supplied, requiring the model to infer torques from position data alone.

Two network variants were evaluated: (1) an LSTM‑based lower layer (with internal state) and (2) the proposed memory‑less MLP. Both were tested with and without the PID feedback. Performance was measured using Intersection‑over‑Union (IoU) between the executed and reference characters. The MLP + PID configuration achieved the highest IoU (0.134), outperforming the LSTM variants (which suffered from divergence due to internal memory interacting adversely with the feedback loop). These results confirm that separating memory (upper layer) from the controllable, memory‑less component (lower layer) yields a stable, generalizable system.

Key contributions of the work are:

- Hierarchical separation of memory‑based prediction and memory‑less control, enabling independent training of the upper‑layer trajectory predictor and the lower‑layer force generator.

- Demonstration that embedding memory within a feedback control loop can destabilize the system, and that a simple MLP combined with PID feedback ensures convergence.

- Empirical validation on a real robot writing task, showing that the framework can generate appropriate force commands for unseen position trajectories, thereby improving generalization to novel contact‑rich scenarios.

The proposed method can be readily combined with existing trajectory‑generation approaches (e.g., path planners, direct teaching, VLA models) to endow robots with the ability to execute compliant, force‑aware motions without requiring explicit force demonstrations for every new task. This opens avenues for more flexible, data‑efficient deployment of robots in unstructured environments where both position accuracy and force compliance are critical.

Comments & Academic Discussion

Loading comments...

Leave a Comment