ProtoQuant: Quantization of Prototypical Parts For General and Fine-Grained Image Classification

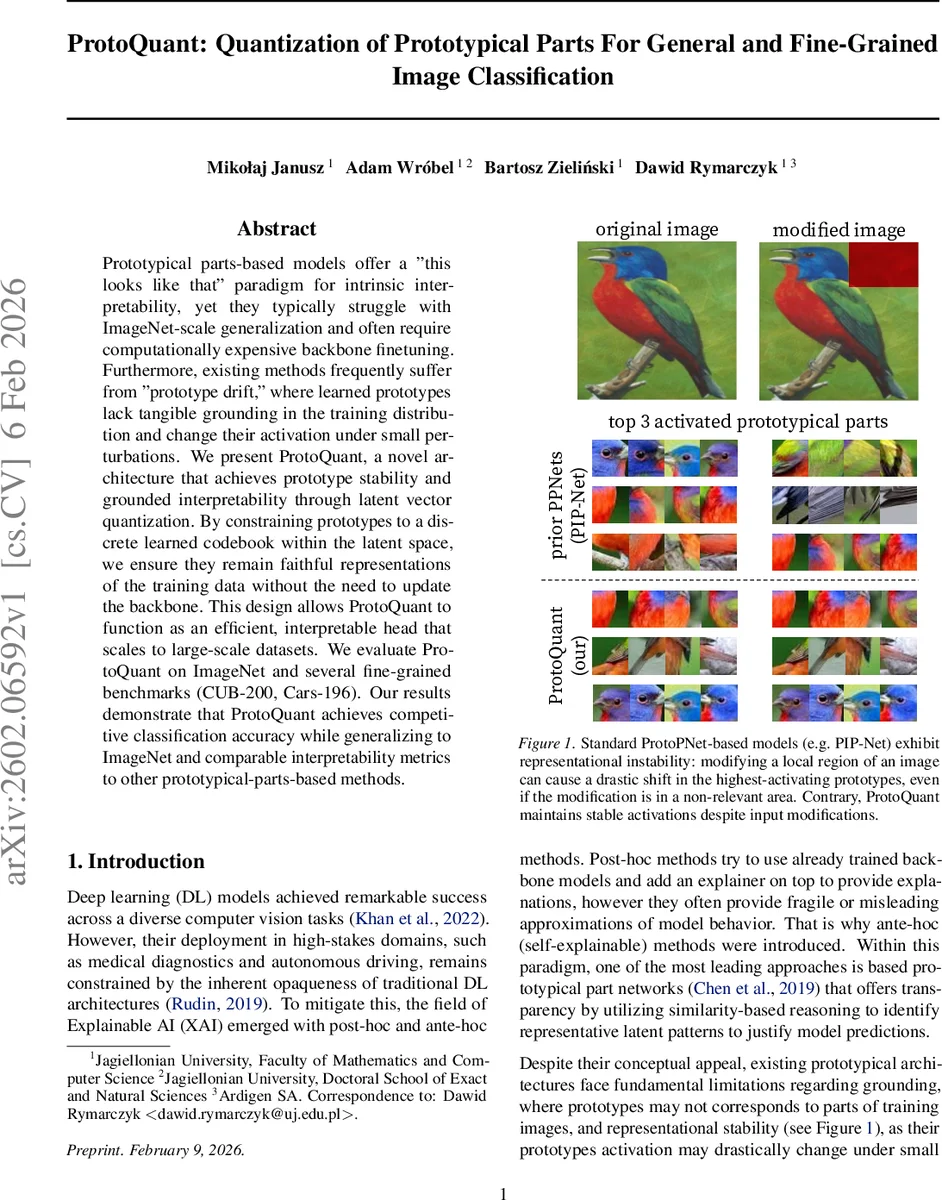

Prototypical parts-based models offer a “this looks like that” paradigm for intrinsic interpretability, yet they typically struggle with ImageNet-scale generalization and often require computationally expensive backbone finetuning. Furthermore, existing methods frequently suffer from “prototype drift,” where learned prototypes lack tangible grounding in the training distribution and change their activation under small perturbations. We present ProtoQuant, a novel architecture that achieves prototype stability and grounded interpretability through latent vector quantization. By constraining prototypes to a discrete learned codebook within the latent space, we ensure they remain faithful representations of the training data without the need to update the backbone. This design allows ProtoQuant to function as an efficient, interpretable head that scales to large-scale datasets. We evaluate ProtoQuant on ImageNet and several fine-grained benchmarks (CUB-200, Cars-196). Our results demonstrate that ProtoQuant achieves competitive classification accuracy while generalizing to ImageNet and comparable interpretability metrics to other prototypical-parts-based methods.

💡 Research Summary

ProtoQuant tackles two long‑standing issues of prototypical part‑based networks: prototype drift (the tendency of learned prototypes to lose grounding in the training distribution) and poor scalability to large‑scale datasets such as ImageNet. The authors adopt a vector‑quantized variational auto‑encoder (VQ‑VAE) style quantization to discretize the latent space of a frozen backbone into a learned codebook of concept vectors. Each spatial feature vector produced by the backbone is assigned to its nearest code (using cosine similarity) and only the codebook entries are updated during an unsupervised Stage 1, while the backbone parameters remain completely fixed. This quantization forces prototypes to be exact representatives of actual training patches, eliminating drift and guaranteeing that small input perturbations will not cause a prototype to switch to an unrelated region.

After the codebook is learned, a second supervised Stage 2 replaces the original classification head with an interpretable head composed of three deterministic functions: (1) Concept Matching computes a sharp soft‑max over cosine similarities between each feature vector and all codes, controlled by a temperature α, yielding per‑pixel concept probability maps p₍ᵢⱼ₎(m). (2) Concept Activation aggregates these maps by spatial max‑pooling, producing a vector s of concept presence scores (the highest activation of each code across the image). (3) Concept‑to‑Class Mapping multiplies s by a non‑negative weight matrix W∈ℝ^{k×M}_+ to obtain class logits ŷ = Ws. The non‑negative constraint gives a direct “this concept contributes positively to this class” interpretation, and the per‑code weights can be visualized for global explanations, while the probability maps provide local, patch‑level explanations.

ProtoQuant is backbone‑agnostic: the authors demonstrate it with CNNs (ResNet‑50, ConvNeXt‑Tiny/Large) and Vision Transformers (DeiT‑Small, ViT‑B). For ViTs, the

Comments & Academic Discussion

Loading comments...

Leave a Comment