DriveWorld-VLA: Unified Latent-Space World Modeling with Vision-Language-Action for Autonomous Driving

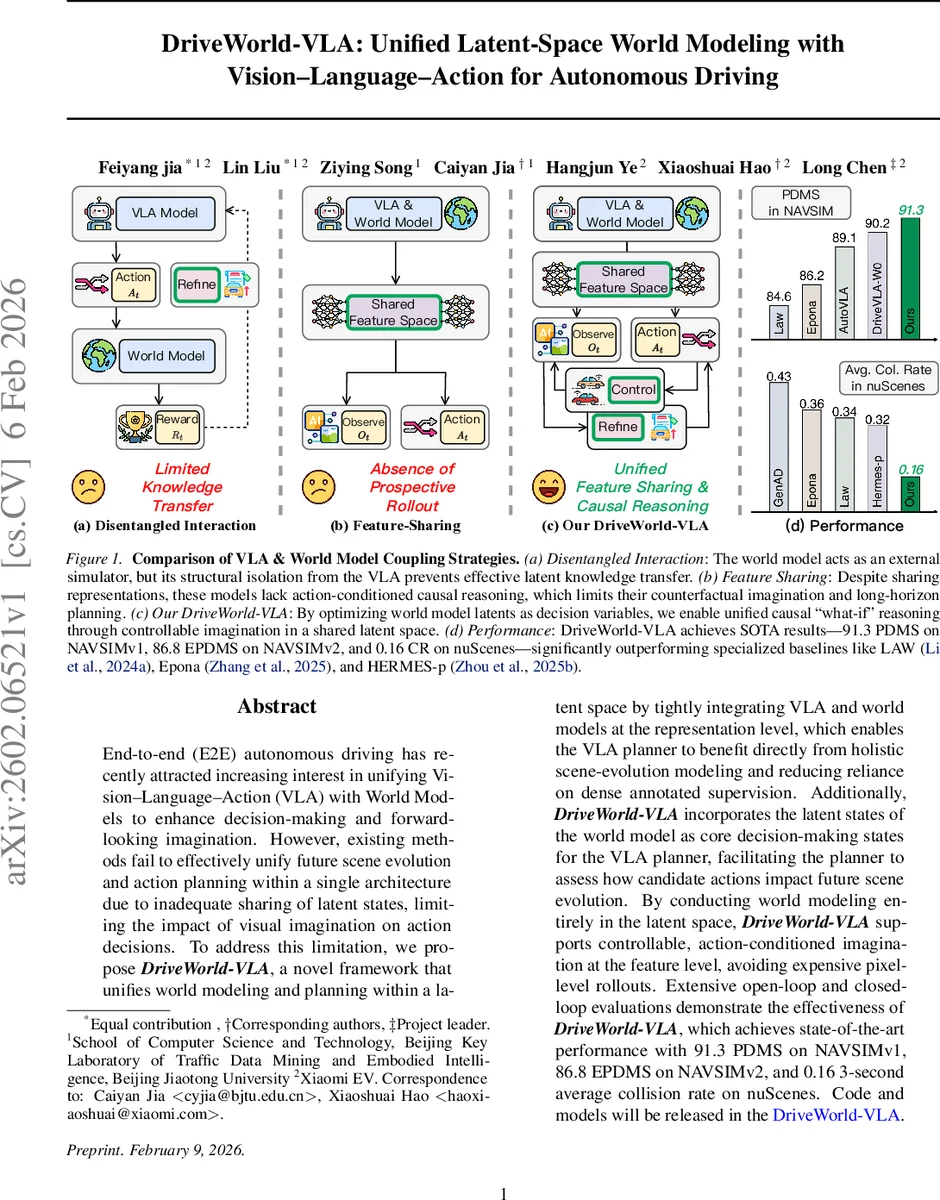

End-to-end (E2E) autonomous driving has recently attracted increasing interest in unifying Vision-Language-Action (VLA) with World Models to enhance decision-making and forward-looking imagination. However, existing methods fail to effectively unify future scene evolution and action planning within a single architecture due to inadequate sharing of latent states, limiting the impact of visual imagination on action decisions. To address this limitation, we propose DriveWorld-VLA, a novel framework that unifies world modeling and planning within a latent space by tightly integrating VLA and world models at the representation level, which enables the VLA planner to benefit directly from holistic scene-evolution modeling and reducing reliance on dense annotated supervision. Additionally, DriveWorld-VLA incorporates the latent states of the world model as core decision-making states for the VLA planner, facilitating the planner to assess how candidate actions impact future scene evolution. By conducting world modeling entirely in the latent space, DriveWorld-VLA supports controllable, action-conditioned imagination at the feature level, avoiding expensive pixel-level rollouts. Extensive open-loop and closed-loop evaluations demonstrate the effectiveness of DriveWorld-VLA, which achieves state-of-the-art performance with 91.3 PDMS on NAVSIMv1, 86.8 EPDMS on NAVSIMv2, and 0.16 3-second average collision rate on nuScenes. Code and models will be released in https://github.com/liulin815/DriveWorld-VLA.git.

💡 Research Summary

DriveWorld‑VLA introduces a unified framework that tightly couples Vision‑Language‑Action (VLA) models with world models (WM) in a shared latent space, enabling action‑conditioned future imagination and proactive planning for autonomous driving. Existing approaches either treat the WM as an external simulator, isolating it from the VLA, or merely share intermediate features without leveraging the WM’s causal reasoning capabilities. Both strategies limit the ability of the system to internalize physical dynamics and to evaluate the long‑term consequences of candidate actions.

The proposed method addresses these gaps through three core innovations. First, Feature‑Level Sharing uses the hidden states of a large language model (InternVL3‑2B) as a common latent representation (H_t). Multi‑modal inputs—multi‑view images, BEV maps, historical actions, and textual instructions—are tokenized and fed into the VLM; the final layer’s hidden states become (H_t), which serves simultaneously as the reasoning substrate for both future BEV prediction and action generation. This shared space forces the VLA to inherit the world model’s learned physics and dynamics.

Second, Action‑Conditioned “What‑If” Reasoning employs a diffusion‑transformer (DiT) based denoiser with two branches. The first branch predicts a coarse future BEV using only historical context, providing dense supervision for representation learning. The second branch is conditioned on ground‑truth future actions and performs flow‑matching diffusion to generate action‑specific future BEV states. By explicitly modeling the conditional distribution (p(B_{t+\Delta}|A_{t+\Delta}, H_t)), the system can simulate how each candidate trajectory would reshape the environment, offering a principled “what‑if” simulation directly inside the latent space.

Third, a Three‑Stage Progressive Training scheme stabilizes joint optimization. Stage 1 jointly trains the VLA and WM on BEV segmentation and imitation‑learning action loss, establishing a robust shared latent space. Stage 2 fine‑tunes the action‑conditioned denoiser using only the flow‑matching loss, turning the latent BEV space into a variational representation that faithfully reflects action effects. Stage 3 closes the loop: predicted actions are fed into the second denoiser branch, Euler‑based sampling produces action‑conditioned future BEV imaginations, and a learned reward network evaluates the consistency between imagined and ground‑truth future BEV. The reward signal is back‑propagated to refine the action decoder, enabling the planner to iteratively improve decisions based on imagined outcomes.

Extensive evaluations on open‑loop (NAVSIMv1/v2) and closed‑loop (nuScenes) benchmarks demonstrate that DriveWorld‑VLA achieves state‑of‑the‑art performance: 91.3 PDMS on NAVSIMv1, 86.8 EPDMS on NAVSIMv2, and a 0.16 % three‑second average collision rate on nuScenes. These results surpass specialized baselines such as LAW, Epona, and HERMES‑p, highlighting the advantage of latent‑space world modeling and action‑conditioned imagination. Moreover, because all future predictions occur in the latent feature domain, the approach avoids costly pixel‑level rollouts, offering significant computational savings.

In summary, DriveWorld‑VLA demonstrates that unifying VLA and world modeling at the representation level yields a powerful, causally aware decision engine for autonomous driving. By making the latent world state a first‑class decision variable, the system can reason about “what‑if” scenarios, refine actions through imagined futures, and achieve higher safety and efficiency without reliance on dense supervision. The authors will release code and pretrained models, paving the way for broader adoption in multimodal robotics and simulation‑based policy learning.

Comments & Academic Discussion

Loading comments...

Leave a Comment