Beyond the Majority: Long-tail Imitation Learning for Robotic Manipulation

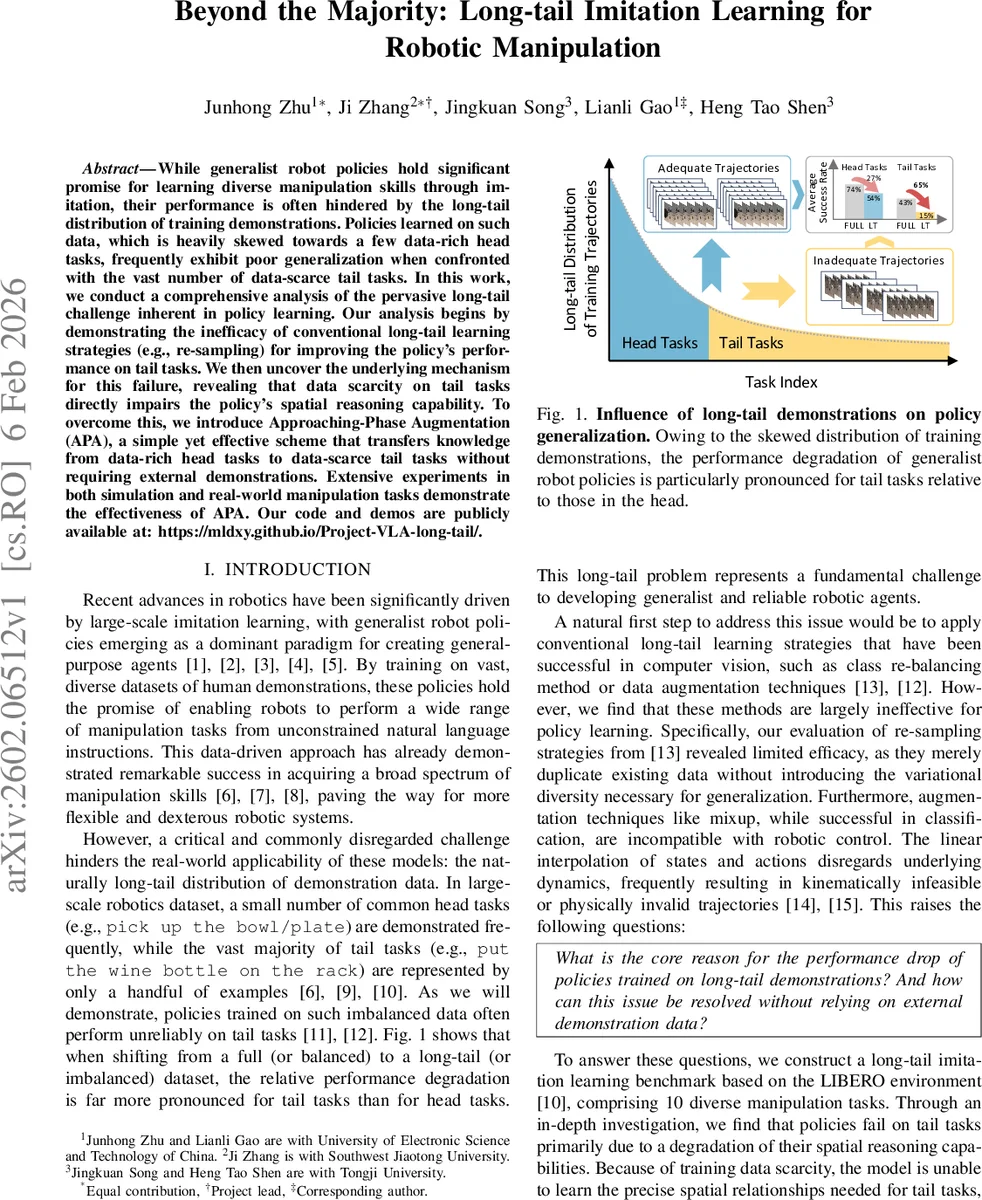

While generalist robot policies hold significant promise for learning diverse manipulation skills through imitation, their performance is often hindered by the long-tail distribution of training demonstrations. Policies learned on such data, which is heavily skewed towards a few data-rich head tasks, frequently exhibit poor generalization when confronted with the vast number of data-scarce tail tasks. In this work, we conduct a comprehensive analysis of the pervasive long-tail challenge inherent in policy learning. Our analysis begins by demonstrating the inefficacy of conventional long-tail learning strategies (e.g., re-sampling) for improving the policy’s performance on tail tasks. We then uncover the underlying mechanism for this failure, revealing that data scarcity on tail tasks directly impairs the policy’s spatial reasoning capability. To overcome this, we introduce Approaching-Phase Augmentation (APA), a simple yet effective scheme that transfers knowledge from data-rich head tasks to data-scarce tail tasks without requiring external demonstrations. Extensive experiments in both simulation and real-world manipulation tasks demonstrate the effectiveness of APA. Our code and demos are publicly available at: https://mldxy.github.io/Project-VLA-long-tail/.

💡 Research Summary

The paper addresses a fundamental challenge in large‑scale robot manipulation: the long‑tail distribution of demonstration data. While a few “head” tasks receive abundant human demonstrations, the majority of “tail” tasks are represented by only a handful of examples. This imbalance severely degrades the performance of generalist policies on tail tasks, a problem that has been largely overlooked in prior work.

Benchmark Construction

Using the LIBERO environment, the authors curate ten representative manipulation tasks and create two datasets: LIBERO‑Core‑FULL (balanced) and LIBERO‑Core‑LT (long‑tailed). Demonstrations are allocated according to a Pareto distribution, resulting in 30 % head tasks (data‑rich) and 70 % tail tasks (data‑scarce). The benchmark provides a controlled platform for studying the impact of data imbalance on language‑conditioned imitation learning.

Failure of Conventional Re‑sampling

The authors fine‑tune a miniVLA model on the long‑tailed dataset while applying several re‑sampling strategies (different values of the balancing hyper‑parameter q). Across all settings, success rates hover around 25 % with negligible improvement, demonstrating that simply oversampling tail trajectories does not help. The reason is that re‑sampling merely duplicates existing trajectories without adding the diversity needed for robust spatial reasoning.

Phase‑wise Failure Analysis

Each task trajectory is split into two phases: (1) the Target Approaching Phase, where the robot moves the end‑effector close to the target object, and (2) the Subsequent Execution Phase, which completes the manipulation. By measuring unconditional failure probability for the approaching phase (pₐₚₚᵣ) and conditional failure probability for the execution phase (pₑₓₑc), the authors compute Relative Risk (RR) between policies trained on full versus long‑tailed data. Tail tasks exhibit an RR of 400 % for the approaching phase and 164 % for the execution phase, indicating that data scarcity primarily harms the robot’s spatial reasoning during the approach.

Approaching‑Phase Augmentation (APA)

Motivated by the above insight, the authors propose APA, a self‑contained data augmentation technique that transfers knowledge from head tasks to tail tasks without collecting new demonstrations. The method extracts the relative pose between robot and object from head‑task trajectories, then re‑applies this pose to the target objects of tail tasks, generating synthetic but physically plausible approaching trajectories. These synthetic phases are concatenated with the original execution phases of tail tasks, producing full demonstrations that retain realistic dynamics.

Experimental Validation

APA is evaluated on both simulation (the ten benchmark tasks) and real‑world robot experiments (six tasks). In simulation, APA raises the average success rate from 26.5 % (baseline) to 38.2 %, with the most pronounced gains on tail tasks where the approaching‑phase failure drops from ~40 % to <12 %. Real‑world tests confirm similar improvements, demonstrating that APA generalizes across domains and hardware. Importantly, APA incurs negligible computational overhead and requires no external data.

Contributions

- A systematic diagnosis showing that long‑tail data harms spatial reasoning, especially during the target‑approach phase.

- The APA method, which leverages abundant head‑task data to synthesize high‑quality approaching trajectories for tail tasks, without any new human demonstrations.

- Extensive empirical evidence across simulation and real robots, establishing the practical effectiveness of APA.

Limitations and Future Work

APA currently assumes that the geometric relationship between robot and object can be transferred across tasks; extreme shape or affordance differences may limit its applicability. The method also focuses on a two‑phase decomposition; extending it to more complex, multi‑step tasks is an open direction. Future research could automate the pose‑mapping process, integrate APA with reinforcement learning, and explore its use in other modalities such as tactile feedback.

Overall, the paper provides a compelling analysis of the long‑tail problem in robot imitation learning and offers a simple yet powerful augmentation strategy that significantly improves the reliability of generalist manipulation policies on data‑scarce tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment