FloorplanVLM: A Vision-Language Model for Floorplan Vectorization

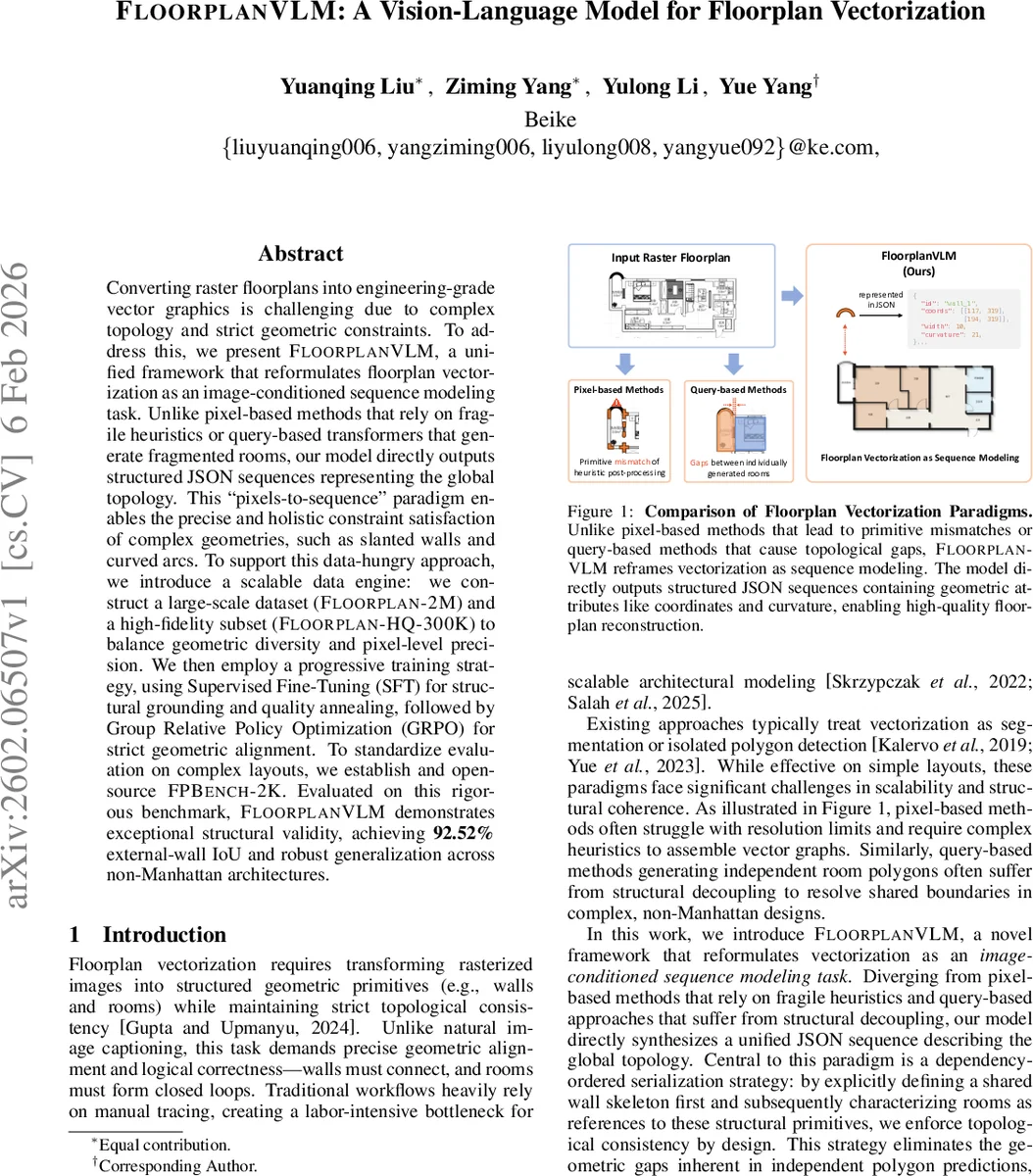

Converting raster floorplans into engineering-grade vector graphics is challenging due to complex topology and strict geometric constraints. To address this, we present FloorplanVLM, a unified framework that reformulates floorplan vectorization as an image-conditioned sequence modeling task. Unlike pixel-based methods that rely on fragile heuristics or query-based transformers that generate fragmented rooms, our model directly outputs structured JSON sequences representing the global topology. This ‘pixels-to-sequence’ paradigm enables the precise and holistic constraint satisfaction of complex geometries, such as slanted walls and curved arcs. To support this data-hungry approach, we introduce a scalable data engine: we construct a large-scale dataset (Floorplan-2M) and a high-fidelity subset (Floorplan-HQ-300K) to balance geometric diversity and pixel-level precision. We then employ a progressive training strategy, using Supervised Fine-Tuning (SFT) for structural grounding and quality annealing, followed by Group Relative Policy Optimization (GRPO) for strict geometric alignment. To standardize evaluation on complex layouts, we establish and open-source FPBench-2K. Evaluated on this rigorous benchmark, FloorplanVLM demonstrates exceptional structural validity, achieving $\textbf{92.52%}$ external-wall IoU and robust generalization across non-Manhattan architectures.

💡 Research Summary

FloorplanVLM tackles the long‑standing problem of converting raster floorplan images into engineering‑grade vector graphics by reframing the task as an image‑conditioned sequence generation problem. Traditional approaches fall into two categories: pixel‑based segmentation/heat‑map methods that output dense raster maps and then rely on fragile heuristics to assemble vector primitives, and query‑based transformer models that predict individual room polygons via fixed queries. Both suffer from structural inconsistencies—pixel‑based pipelines struggle with non‑Manhattan geometries such as slanted walls or curved arcs, while query‑based methods often generate overlapping rooms or gaps at shared walls because they treat rooms as independent entities.

FloorplanVLM adopts a “pixels‑to‑sequence” paradigm using a large Vision‑Language Model (Qwen2.5‑VL‑3B). The model receives a raster floorplan and a textual prompt, encodes both modalities, and autoregressively emits a compact JSON token stream that directly describes the entire floorplan topology. The key innovation is a dependency‑ordered serialization scheme: first a global wall skeleton is emitted, including all geometric attributes (start/end coordinates, thickness, curvature) and nested opening attributes (doors, windows). Rooms are then defined by referencing the identifiers of the walls that bound them, guaranteeing that every room forms a closed loop on the pre‑defined wall graph. This design enforces topological consistency at the token level, eliminating the need for post‑hoc merging or heuristic repairs.

To train such a data‑hungry model, the authors build a two‑tier data engine. Starting from a raw pool of 20 M floorplan screenshots, they apply structure‑aware clustering to obtain a diverse 2 M‑sample dataset (Floorplan‑2M). From this, a high‑fidelity subset of 300 K samples (Floorplan‑HQ‑300K) is curated through a hybrid of human recaptioning and synthetic re‑rendering, ensuring pixel‑perfect alignment between raster images and vector labels. The datasets cover a balanced mix of Manhattan, non‑Manhattan, slanted‑only, arc‑only, and mixed geometries, and present a wide distribution of primitive counts per floorplan.

Training proceeds in three stages. Stages 1 and 2 use Supervised Fine‑Tuning (SFT) to teach the model basic syntax, visual‑textual grounding, and high‑quality generation. Stage 3 introduces Group Relative Policy Optimization (GRPO), a reinforcement‑learning algorithm that directly optimizes a non‑differentiable geometric loss composed of external‑wall Intersection‑over‑Union, closed‑loop violations, and other topological metrics. By treating these geometric criteria as rewards, GRPO aligns the probabilistic token generation of the VLM with deterministic engineering constraints, mitigating the “geometric hallucination” problem common in generative VLMs.

For evaluation, the authors release FPBench‑2K, a benchmark of 2 000 complex floorplans featuring varied architectural styles and challenging non‑Manhattan layouts. On this benchmark FloorplanVLM achieves 92.52 % external‑wall IoU, substantially outperforming state‑of‑the‑art pixel‑based and query‑based baselines. It also demonstrates strong generalization to unseen non‑Manhattan designs, accurately reproducing slanted walls and curved arcs without manual post‑processing.

The paper’s contributions are threefold: (1) an end‑to‑end vision‑language framework that reformulates floorplan vectorization as a structured sequence generation task, guaranteeing topological consistency by design; (2) a scalable data engine and open benchmark that provide the community with large‑scale, high‑precision training data and a standardized testbed for architectural reasoning; (3) a novel reinforcement‑learning based geometric alignment method (GRPO) that bridges the gap between token‑level likelihood maximization and strict geometric fidelity. The approach opens avenues for automated architectural drafting, indoor navigation map generation, and AR/VR content creation, where precise, vector‑based floorplan representations are essential.

Comments & Academic Discussion

Loading comments...

Leave a Comment