MultiGraspNet: A Multitask 3D Vision Model for Multi-gripper Robotic Grasping

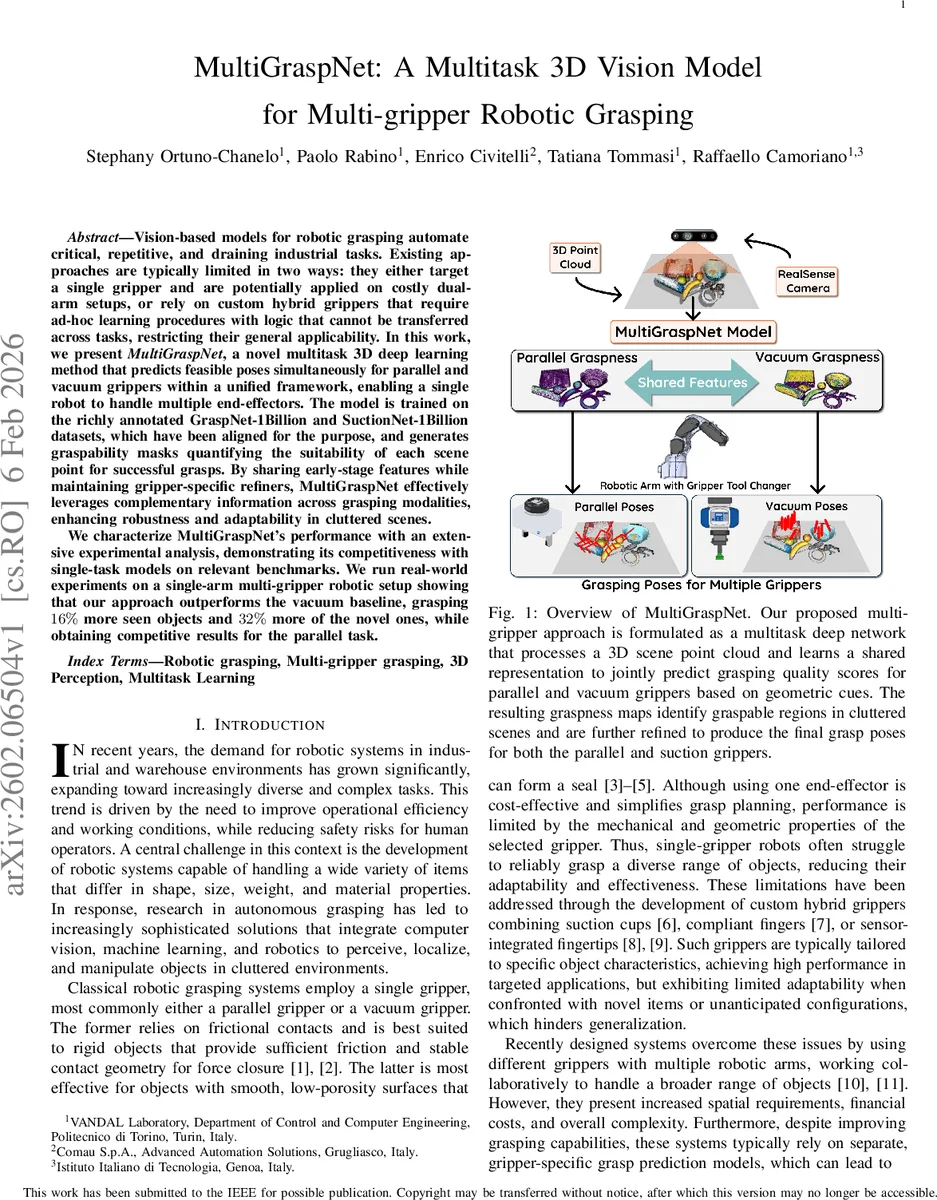

Vision-based models for robotic grasping automate critical, repetitive, and draining industrial tasks. Existing approaches are typically limited in two ways: they either target a single gripper and are potentially applied on costly dual-arm setups, or rely on custom hybrid grippers that require ad-hoc learning procedures with logic that cannot be transferred across tasks, restricting their general applicability. In this work, we present MultiGraspNet, a novel multitask 3D deep learning method that predicts feasible poses simultaneously for parallel and vacuum grippers within a unified framework, enabling a single robot to handle multiple end effectors. The model is trained on the richly annotated GraspNet-1Billion and SuctionNet-1Billion datasets, which have been aligned for the purpose, and generates graspability masks quantifying the suitability of each scene point for successful grasps. By sharing early-stage features while maintaining gripper-specific refiners, MultiGraspNet effectively leverages complementary information across grasping modalities, enhancing robustness and adaptability in cluttered scenes. We characterize MultiGraspNet’s performance with an extensive experimental analysis, demonstrating its competitiveness with single-task models on relevant benchmarks. We run real-world experiments on a single-arm multi-gripper robotic setup showing that our approach outperforms the vacuum baseline, grasping 16% percent more seen objects and 32% more of the novel ones, while obtaining competitive results for the parallel task.

💡 Research Summary

**

The paper introduces MultiGraspNet, a multitask 3D deep‑learning architecture that simultaneously predicts grasp poses for parallel‑jaw and vacuum end‑effectors from a single point‑cloud input. Recognizing that most existing robotic grasping solutions are limited to a single gripper or rely on custom hybrid hardware, the authors aim to enable a single‑arm robot to switch between two fundamentally different grippers without maintaining separate models. To this end, they align the large‑scale real‑world datasets GraspNet‑1Billion (parallel grasps) and SuctionNet‑1Billion (vacuum grasps) and construct a unified “graspness map” that encodes, for every scene point, the probability of a successful grasp for each gripper type.

The network architecture consists of four main components. A 3‑D U‑Net backbone built on the MinkowskiEngine extracts per‑point feature vectors (512‑dimensional) from the input cloud. A MultiGrasp Map Predictor then branches into three 1‑D convolutional heads that output (i) an objectness score, (ii) a parallel‑graspness score, and (iii) a vacuum‑graspness score. Objectness is trained with cross‑entropy loss, vacuum graspness with binary cross‑entropy, and parallel graspness with a weighted binary cross‑entropy that heavily up‑weights positive samples to mitigate class imbalance.

During inference, the objectness and graspness scores are multiplied point‑wise, thresholded, and then subsampled using farthest‑point sampling to obtain a set of seed points for each gripper (Mₚ for parallel, Mᵥ for vacuum). These seeds, together with their backbone features, are fed to gripper‑specific pose refiners. The parallel‑grasp refiner follows the pipeline of ViewNet (to predict approach vectors), cylinder grouping (to collect candidate points), and a Grasp Head that regresses width, depth, in‑plane rotation and a final graspness classification. The vacuum refiner simply uses the seed point’s surface normal and center, applying a lightweight MLP to refine the vacuum score. Losses for the refiner include Smooth L1 for continuous parameters and cross‑entropy for discrete ones.

Quantitative evaluation on the aligned datasets shows that MultiGraspNet matches or exceeds the performance of dedicated single‑task models on both grasp modalities, demonstrating the benefit of shared feature learning. In real‑world experiments with a single‑arm robot equipped with interchangeable parallel and vacuum grippers, the multitask system outperforms a vacuum‑only baseline by grasping 16 % more of the previously seen objects and 32 % more of novel objects, while achieving competitive results for parallel grasps.

The study concludes that multitask learning can effectively transfer knowledge between complementary grasping strategies, reducing hardware complexity and improving robustness in cluttered industrial settings. Future work is suggested to extend the framework to additional gripper types, incorporate dynamic gripper‑switching policies, and explore model compression for real‑time deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment