Prism: Spectral Parameter Sharing for Multi-Agent Reinforcement Learning

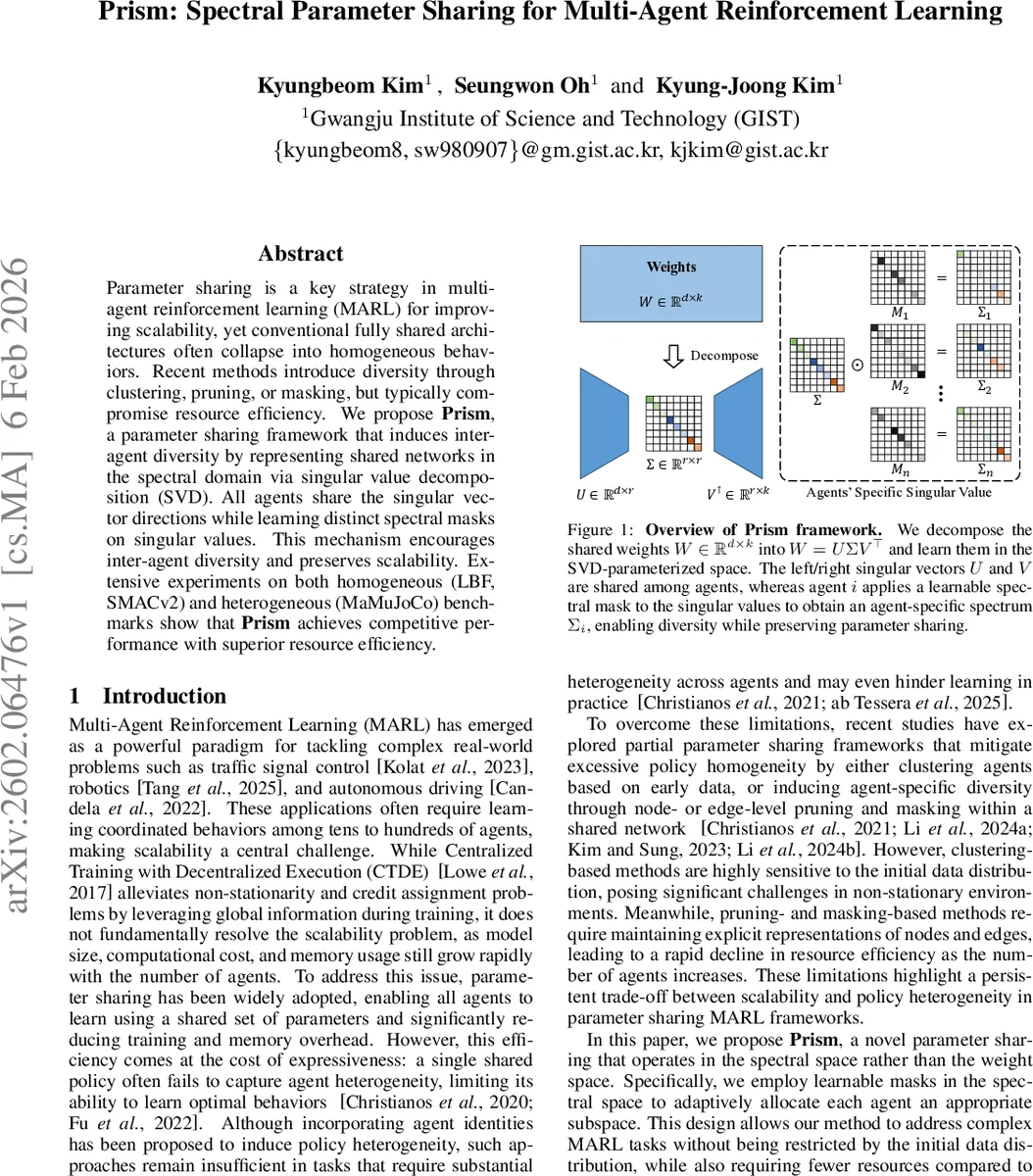

Parameter sharing is a key strategy in multi-agent reinforcement learning (MARL) for improving scalability, yet conventional fully shared architectures often collapse into homogeneous behaviors. Recent methods introduce diversity through clustering, pruning, or masking, but typically compromise resource efficiency. We propose Prism, a parameter sharing framework that induces inter-agent diversity by representing shared networks in the spectral domain via singular value decomposition (SVD). All agents share the singular vector directions while learning distinct spectral masks on singular values. This mechanism encourages inter-agent diversity and preserves scalability. Extensive experiments on both homogeneous (LBF, SMACv2) and heterogeneous (MaMuJoCo) benchmarks show that Prism achieves competitive performance with superior resource efficiency.

💡 Research Summary

The paper addresses a central tension in multi‑agent reinforcement learning (MARL): parameter sharing dramatically reduces memory and computation, yet fully shared policies often collapse into homogeneous behaviors that are sub‑optimal when agents need to specialize. Existing remedies—clustering agents, node‑level pruning, or edge‑level masking—introduce diversity but at the cost of additional storage, sensitivity to early data, or reduced scalability.

Prism takes a different approach: instead of sharing raw weight matrices, it parameterizes the shared network in the spectral domain using singular value decomposition (SVD). A weight matrix (W\in\mathbb{R}^{d\times k}) is expressed as (W = U\Sigma V^\top), where the columns of (U) (left singular vectors) and (V) (right singular vectors) form orthonormal bases shared by all agents, and the diagonal matrix (\Sigma) contains the (r) singular values, with (r \le \min(d, k)). Diversity is introduced by giving each agent (i) a learnable mask (m_i\in\mathbb{R}^r) that modulates the singular values:

\[ W_i = U\,\Sigma\,\mathrm{diag}(m_i)\,V^\top \]
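The spectral masking idea can be illustrated with a minimal NumPy sketch. It assumes the mask acts by elementwise multiplication on the singular values, which the summary does not confirm; the function names `init_shared_factors` and `agent_weight`, the dimensions, and the uniform mask initialization are all hypothetical choices made for illustration, not the paper's implementation.

```python
import numpy as np

def init_shared_factors(d, k, r, seed=0):
    """Hypothetical helper: obtain shared factors from the SVD of a random d x k matrix."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((d, k)) / np.sqrt(k)
    U, sigma, Vt = np.linalg.svd(W, full_matrices=False)
    # Keep the top-r singular directions; U is d x r, Vt is r x k.
    return U[:, :r], sigma[:r], Vt[:r, :]

def agent_weight(U, sigma, Vt, mask):
    """Assumed form: W_i = U diag(mask * sigma) V^T."""
    # Broadcasting scales column j of U by mask[j] * sigma[j].
    return (U * (mask * sigma)) @ Vt

d, k, r, n_agents = 8, 6, 6, 3
U, sigma, Vt = init_shared_factors(d, k, r)

# One mask per agent (assumption: initialized uniformly in [0, 1)).
rng = np.random.default_rng(1)
masks = rng.uniform(0.0, 1.0, size=(n_agents, r))

# Each agent gets its own effective weight matrix from the shared factors.
weights = [agent_weight(U, sigma, Vt, m) for m in masks]
```

Under this sketch, the shared factors cost (dr + r + rk) parameters regardless of the number of agents, and each additional agent adds only the (r) entries of its mask.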
