Adaptive Protein Tokenization

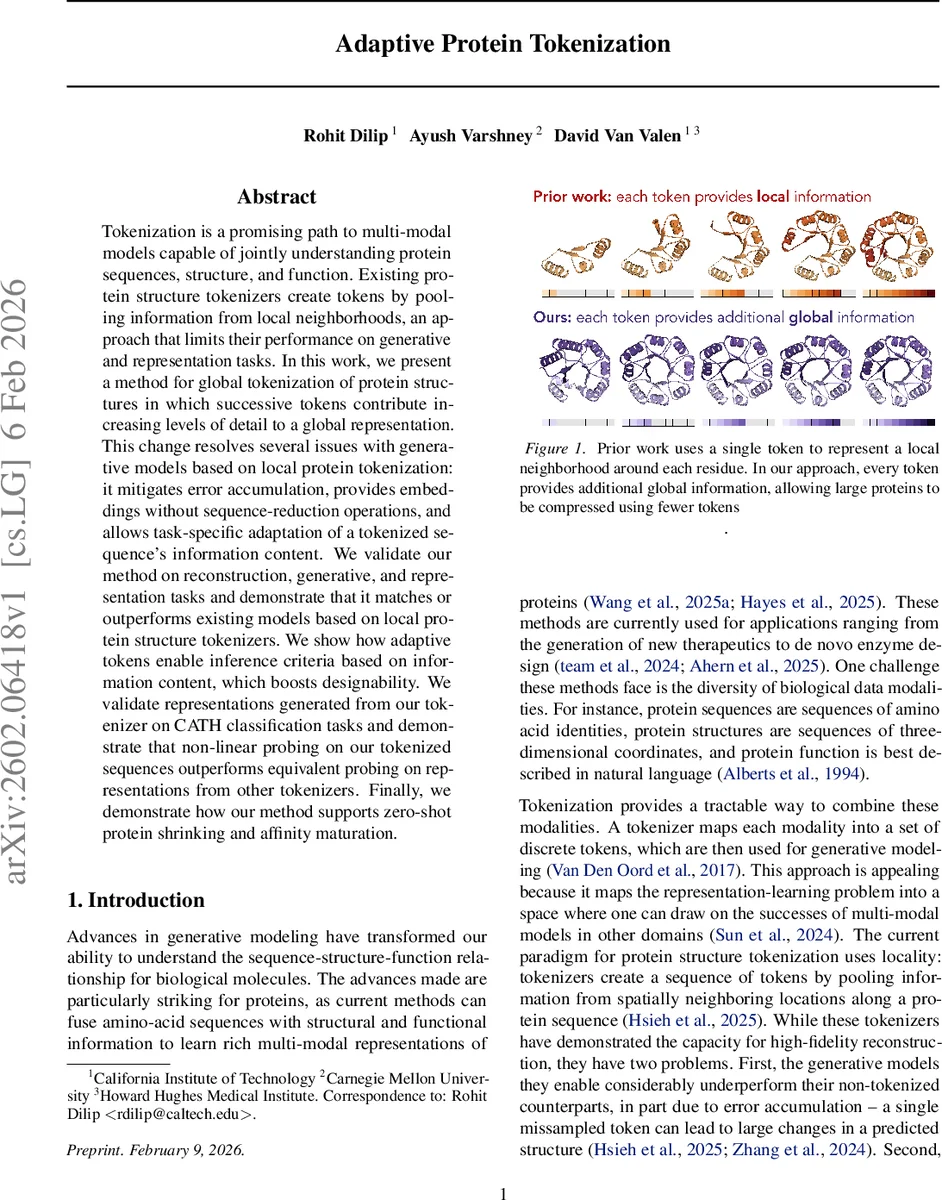

Tokenization is a promising path to multi-modal models capable of jointly understanding protein sequences, structure, and function. Existing protein structure tokenizers create tokens by pooling information from local neighborhoods, an approach that limits their performance on generative and representation tasks. In this work, we present a method for global tokenization of protein structures in which successive tokens contribute increasing levels of detail to a global representation. This change resolves several issues with generative models based on local protein tokenization: it mitigates error accumulation, provides embeddings without sequence-reduction operations, and allows task-specific adaptation of a tokenized sequence’s information content. We validate our method on reconstruction, generative, and representation tasks and demonstrate that it matches or outperforms existing models based on local protein structure tokenizers. We show how adaptive tokens enable inference criteria based on information content, which boosts designability. We validate representations generated from our tokenizer on CATH classification tasks and demonstrate that non-linear probing on our tokenized sequences outperforms equivalent probing on representations from other tokenizers. Finally, we demonstrate how our method supports zero-shot protein shrinking and affinity maturation.

💡 Research Summary

The paper introduces Adaptive Protein Tokenizer (APT), a novel global‑tokenization framework for protein structures that overcomes the limitations of existing local‑neighborhood tokenizers. Traditional tokenizers compress each residue’s spatial neighborhood into a single token, leading to a linear increase in token count with protein length and severe error accumulation during generation. APT instead generates a sequence of tokens that progressively add finer‑grained information to a global representation, analogous to a coarse‑to‑fine decomposition (e.g., Fourier or wavelet transforms).

Methodology

APT is built as a diffusion auto‑encoder with a discrete bottleneck. Raw Cα coordinates are fed into a bidirectional transformer encoder, producing latent vectors c∈ℝ^{L×d}. These vectors are quantized using Finite‑Scalar Quantization (FSQ) into a codebook of ~1,000 tokens. To enforce adaptivity, an upper token limit U is sampled uniformly and nested dropout is applied, encouraging the model to place essential global information in early tokens and high‑frequency details in later tokens. Relative positional encodings and shared adaLN parameters are used throughout the decoder.

The decoder is a diffusion model trained with a flow‑matching loss L_flow = ‖(x‑ε)‑v_θ(x_t,t)‖². The first token (or the first few tokens) also regresses protein length via a cross‑entropy loss L_size, weighted by λ_size≈0.01, achieving length predictions within two residues of the ground truth. The total loss is L = L_flow + λ_size·L_size.

During generation, a GPT‑style autoregressive model predicts token sequences. Because of the nested‑dropout design, any prefix of the token sequence is a valid conditioning signal. The authors introduce three inference‑time strategies for controlling the amount of information passed to the diffusion decoder: (1) fixed‑token cutoff, (2) entropy‑based cutoff (stop when per‑token entropy falls below a threshold), and (3) minimum‑entropy cutoff (select the first local minimum of the entropy curve). This “tail dropout” allows the diffusion decoder to fill in high‑frequency details, mitigating error exposure that would otherwise degrade designability.

A classifier‑free guidance scheme is also refined: the score function is interpolated between unconditional and conditional versions using a parameter α∈

Comments & Academic Discussion

Loading comments...

Leave a Comment