VENOMREC: Cross-Modal Interactive Poisoning for Targeted Promotion in Multimodal LLM Recommender Systems

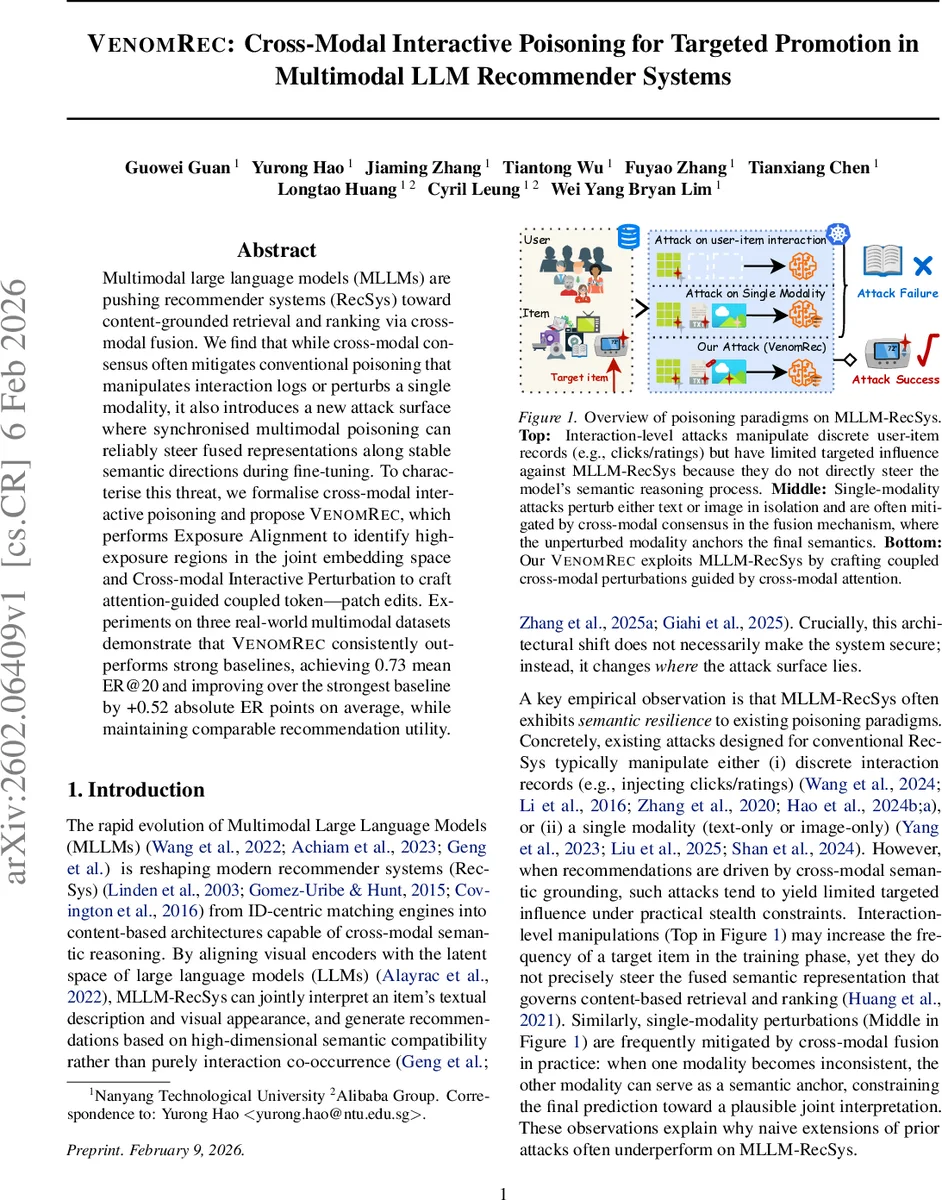

Multimodal large language models (MLLMs) are pushing recommender systems (RecSys) toward content-grounded retrieval and ranking via cross-modal fusion. We find that while cross-modal consensus often mitigates conventional poisoning that manipulates interaction logs or perturbs a single modality, it also introduces a new attack surface where synchronised multimodal poisoning can reliably steer fused representations along stable semantic directions during fine-tuning. To characterise this threat, we formalise cross-modal interactive poisoning and propose VENOMREC, which performs Exposure Alignment to identify high-exposure regions in the joint embedding space and Cross-modal Interactive Perturbation to craft attention-guided coupled token-patch edits. Experiments on three real-world multimodal datasets demonstrate that VENOMREC consistently outperforms strong baselines, achieving 0.73 mean ER@20 and improving over the strongest baseline by +0.52 absolute ER points on average, while maintaining comparable recommendation utility.

💡 Research Summary

The paper “VENOMREC: Cross‑Modal Interactive Poisoning for Targeted Promotion in Multimodal LLM Recommender Systems” identifies a novel security vulnerability that emerges when recommender systems (RecSys) are built on multimodal large language models (MLLMs). Traditional poisoning attacks either tamper with user‑item interaction logs or perturb a single modality (text or image). In MLLM‑based RecSys, cross‑modal attention fuses textual tokens and visual features, which often neutralizes unilateral noise because the unperturbed modality acts as a semantic anchor. The authors argue that this very cross‑modal consensus becomes an attack surface: if an adversary can synchronously manipulate both modalities, the fusion mechanism amplifies the coordinated signal rather than suppressing it, steering the joint embedding of a target item toward a high‑exposure region.

VENOMREC is a two‑stage attack framework designed to exploit this weakness while respecting strict stealth constraints.

- Exposure Alignment (EA) – The attacker first identifies a “hot‑spot” in the joint embedding space that corresponds to naturally high‑exposure items. Using publicly observable popularity lists (e.g., best‑sellers), a set of anchor items is selected, and their proxy embeddings (computed with publicly available backbones such as CLIP for vision and T5 for language) are averaged and ℓ₂‑normalized to obtain a centroid z★. This centroid serves as the destination vector that the target item’s representation should approach.

- Cross‑modal Interactive Perturbation (CIP) – With the destination defined, the attacker leverages the same backbones to compute cross‑modal attention matrices A (size Lₜ × Lᵥ). High‑attention token‑patch pairs indicate the most influential components of the fusion process. CIP then optimizes minimal, coordinated edits on those tokens and patches: textual edits are limited to synonym substitution or slight re‑phrasings that preserve grammar and semantics; visual edits consist of subtle color, texture, or small‑region perturbations that remain imperceptible to humans. The optimization minimizes a loss L_adv = 1 − cos(ϕ(˜t, ˜v), z★) while enforcing ℓ₂/ℓ_∞ bounds to keep perturbations within the stealth manifold B (unimodal naturalness and cross‑modal coherence). The process iterates, re‑computing attention after each update to stay aligned with the model’s current fusion dynamics.

The threat model assumes a grey‑box adversary: the attacker does not have access to the victim’s private parameters or gradients but knows the public backbone architectures and can manipulate a limited fraction ρ of users (U_mal) who contribute user‑generated content (UGC). Poisoned UGC is injected into the training set, and the victim model is fine‑tuned on the combined benign and poisoned data. The objective is to maximize the exposure R(I†; Θ*) of a set of target items I† across benign users, measured as the proportion of users for whom a target appears in the top‑K recommendation list.

Experiments are conducted on three real‑world multimodal datasets (e.g., Amazon product reviews with images, Yelp reviews with photos, and MovieLens movies with posters). Baselines include single‑modality poisoning (text‑only, image‑only), the recent multi‑modal “Shadowcast” attack, and other state‑of‑the‑art poisoning methods. Evaluation metrics are Exposure Rate at 20 (ER@20) for targeted promotion, and standard recommendation quality metrics (NDCG, Recall) to assess stealthiness. VENOMREC consistently outperforms all baselines, achieving an average ER@20 of 0.73 and improving over the strongest baseline by +0.52 absolute points. Importantly, recommendation utility remains essentially unchanged, confirming the attack’s stealth.

Key contributions are: (1) formalizing cross‑modal interactive poisoning for MLLM‑based RecSys, (2) introducing the VENOMREC framework that exploits attention‑guided token‑patch coupling, and (3) providing extensive empirical evidence of its effectiveness and stealth across multiple datasets. The paper also discusses defensive directions: monitoring attention weight distributions, detecting anomalous high‑exposure centroid shifts, and enforcing stricter cross‑modal consistency checks during training.

In summary, VENOMREC demonstrates that the fusion mechanism, once thought to be a defensive barrier, can be weaponized to steer multimodal representations toward malicious objectives. This work calls for a re‑examination of security assumptions in multimodal recommendation pipelines and motivates the development of robust fusion‑level defenses.

Comments & Academic Discussion

Loading comments...

Leave a Comment