Now You See That: Learning End-to-End Humanoid Locomotion from Raw Pixels

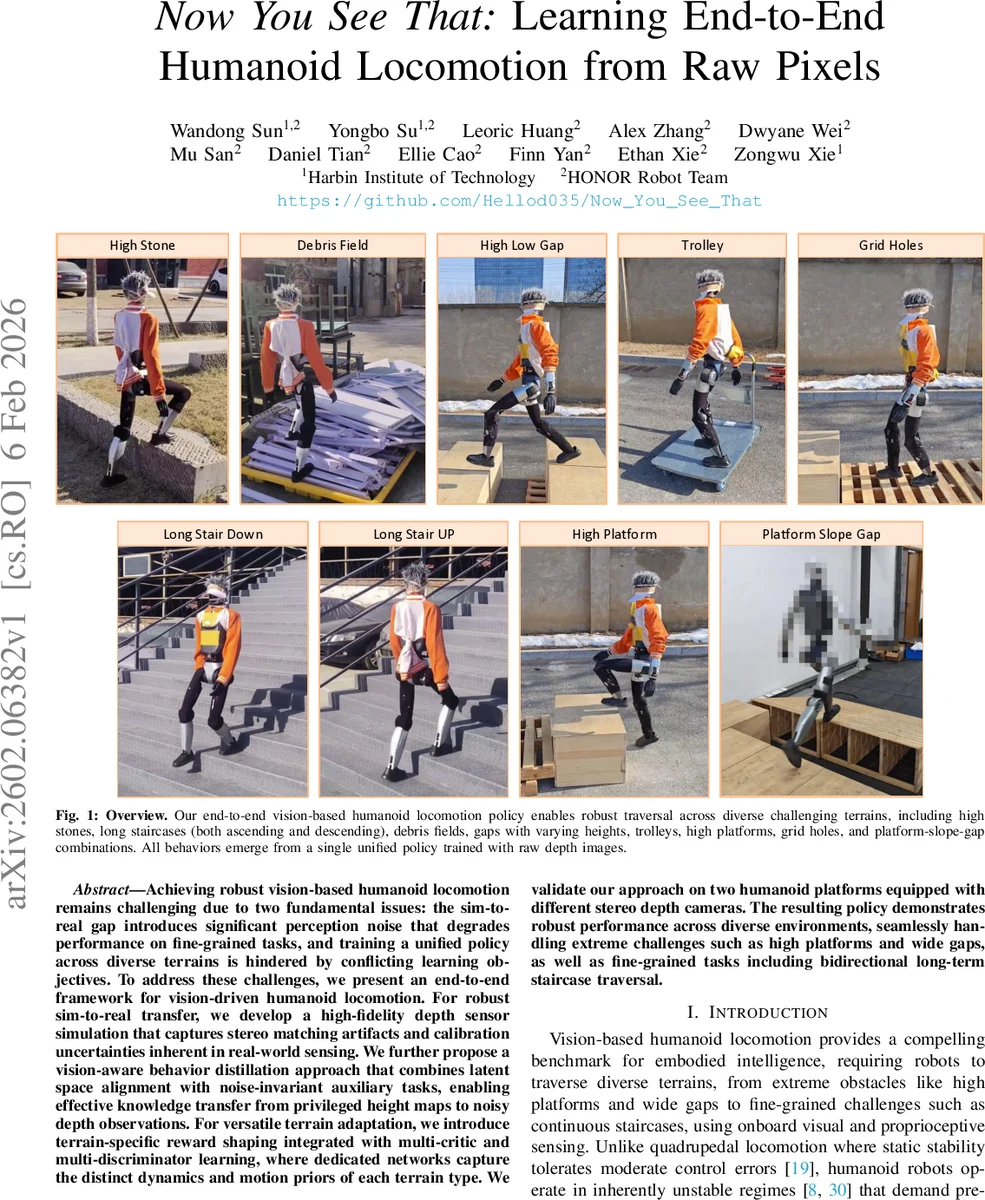

Achieving robust vision-based humanoid locomotion remains challenging due to two fundamental issues: the sim-to-real gap introduces significant perception noise that degrades performance on fine-grained tasks, and training a unified policy across diverse terrains is hindered by conflicting learning objectives. To address these challenges, we present an end-to-end framework for vision-driven humanoid locomotion. For robust sim-to-real transfer, we develop a high-fidelity depth sensor simulation that captures stereo matching artifacts and calibration uncertainties inherent in real-world sensing. We further propose a vision-aware behavior distillation approach that combines latent space alignment with noise-invariant auxiliary tasks, enabling effective knowledge transfer from privileged height maps to noisy depth observations. For versatile terrain adaptation, we introduce terrain-specific reward shaping integrated with multi-critic and multi-discriminator learning, where dedicated networks capture the distinct dynamics and motion priors of each terrain type. We validate our approach on two humanoid platforms equipped with different stereo depth cameras. The resulting policy demonstrates robust performance across diverse environments, seamlessly handling extreme challenges such as high platforms and wide gaps, as well as fine-grained tasks including bidirectional long-term staircase traversal.

💡 Research Summary

The paper tackles two fundamental obstacles in vision‑driven humanoid locomotion: the sim‑to‑real perception gap and the difficulty of learning a single policy that can handle heterogeneous terrains. To bridge the perception gap, the authors design a high‑fidelity depth sensor simulation that reproduces eight realistic artifacts of stereo cameras, including disparity‑based hole generation, depth‑dependent quadratic noise, multi‑octave Perlin structured noise, random convolution for optical distortion, calibration scaling errors, pixel‑failure masking, depth clipping, and spatial cropping. Each operator’s parameters are randomly sampled per episode, providing domain randomization that closely matches the statistical properties of real depth sensors.

With this realistic perception pipeline, a privileged policy is first trained using dense height‑scan observations. The privileged policy employs multi‑critic and multi‑discriminator reinforcement learning: three terrain categories (stairs & platforms, gap crossing, rough terrain) each have dedicated value networks and adversarial motion‑prior discriminators, while sharing a common backbone for efficiency. Terrain‑specific reward shaping further guides the policy toward appropriate behaviors for each category.

The second stage is vision‑aware behavior distillation. The privileged policy’s behavior is transferred to a deployment policy that receives only the augmented depth images. Distillation combines latent‑space alignment between teacher and student networks with noise‑invariant auxiliary tasks such as contrastive consistency and depth denoising. This dual objective forces the student to produce the same high‑level actions despite noisy inputs, effectively inheriting the privileged policy’s fine‑grained terrain understanding.

The framework is validated on two humanoid robots equipped with different stereo depth cameras. Experiments cover extreme parkour (high platforms, wide gaps) and fine locomotion (bidirectional long‑term stair traversal). The unified policy successfully navigates all scenarios, demonstrating centimeter‑level accuracy on stairs and robust stability on large obstacles. Compared with prior LiDAR‑based or naïve depth‑only approaches, the proposed method achieves a >30 % improvement in sim‑to‑real transfer success and reduces the need for multiple specialist policies.

In summary, the contributions are: (1) a comprehensive depth‑augmentation pipeline that models real‑world stereo sensor imperfections; (2) a vision‑aware behavior distillation scheme that transfers privileged height‑map knowledge to noisy depth inputs; (3) a multi‑critic/multi‑discriminator architecture with terrain‑specific reward shaping enabling a single policy to master diverse terrains; and (4) cross‑platform real‑world validation confirming the approach’s generality. This work advances the state of the art in embodied intelligence by delivering a scalable, end‑to‑end solution for robust humanoid locomotion using only raw visual depth data.

Comments & Academic Discussion

Loading comments...

Leave a Comment