ReBeCA: Unveiling Interpretable Behavior Hierarchy behind the Iterative Self-Reflection of Language Models with Causal Analysis

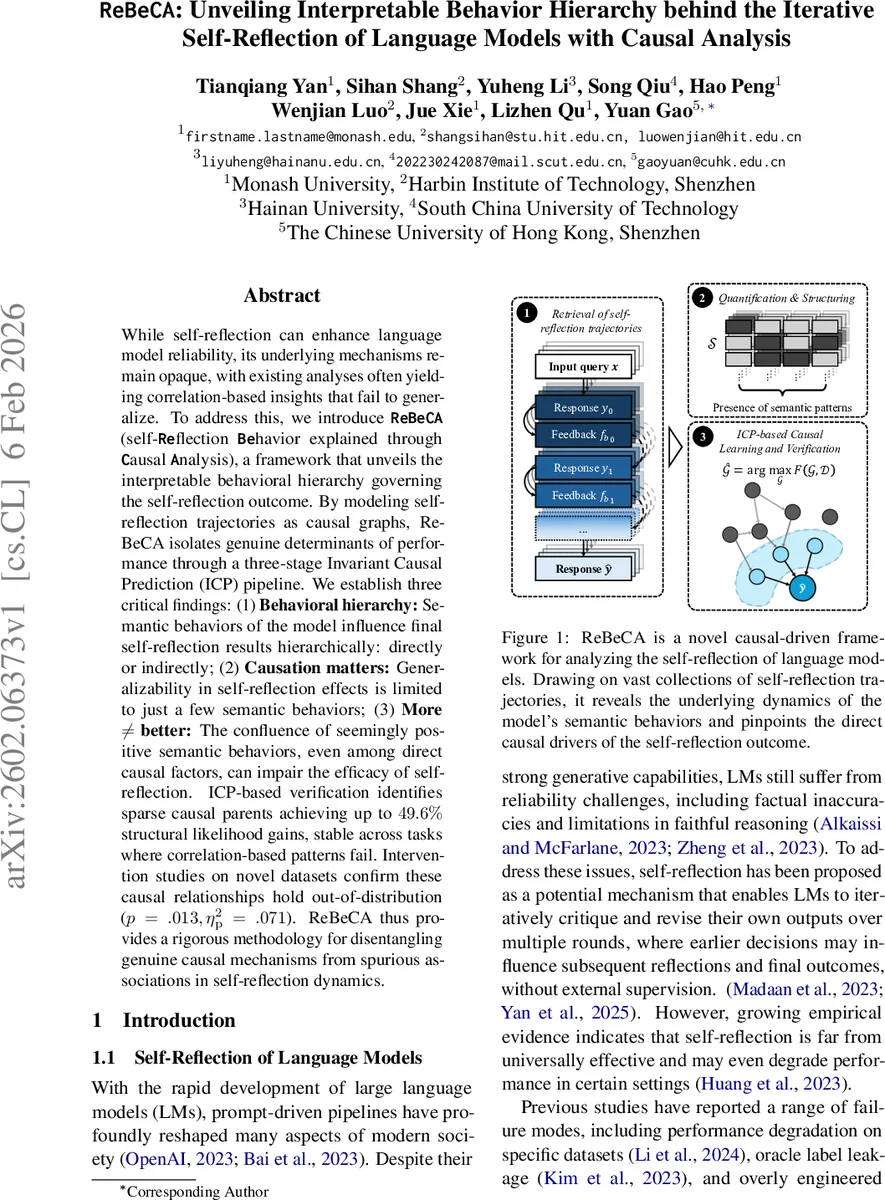

While self-reflection can enhance language model reliability, its underlying mechanisms remain opaque, with existing analyses often yielding correlation-based insights that fail to generalize. To address this, we introduce \textbf{\texttt{ReBeCA}} (self-\textbf{\texttt{Re}}flection \textbf{\texttt{Be}}havior explained through \textbf{\texttt{C}}ausal \textbf{\texttt{A}}nalysis), a framework that unveils the interpretable behavioral hierarchy governing the self-reflection outcome. By modeling self-reflection trajectories as causal graphs, ReBeCA isolates genuine determinants of performance through a three-stage Invariant Causal Prediction (ICP) pipeline. We establish three critical findings: (1) \textbf{Behavioral hierarchy:} Semantic behaviors of the model influence final self-reflection results hierarchically: directly or indirectly; (2) \textbf{Causation matters:} Generalizability in self-reflection effects is limited to just a few semantic behaviors; (3) \textbf{More $\mathbf{\neq}$ better:} The confluence of seemingly positive semantic behaviors, even among direct causal factors, can impair the efficacy of self-reflection. ICP-based verification identifies sparse causal parents achieving up to $49.6%$ structural likelihood gains, stable across tasks where correlation-based patterns fail. Intervention studies on novel datasets confirm these causal relationships hold out-of-distribution ($p = .013, η^2_\mathrm{p} = .071$). ReBeCA thus provides a rigorous methodology for disentangling genuine causal mechanisms from spurious associations in self-reflection dynamics.

💡 Research Summary

The paper tackles a fundamental question in the field of large language models (LLMs): why does iterative self‑reflection sometimes improve model reliability and sometimes degrade it? Existing studies have largely relied on correlation‑based analyses that do not generalize across tasks or model sizes. To overcome this limitation, the authors propose ReBeCA (Reflection Behavior explained through Causal Analysis), a causal‑driven framework that treats a self‑reflection process as a multi‑round trajectory and discovers the true causal determinants of the final outcome.

Problem formulation

Given an input query x, a model generates an initial response y₀, receives feedback f₁, refines its answer, and repeats this loop for T rounds. The quality of the final answer ŷ is measured by its alignment with a ground‑truth answer y. The authors hypothesize that a sparse set of semantic patterns—binary indicators of specific linguistic behaviors such as “Clarity & Organization” or “Specificity”—act as causal parents of the self‑reflection outcome, and that these causal relationships remain invariant across different task subsets (e.g., math reasoning, translation).

Methodology

-

Consistency‑Enhanced Self‑Refine (CESR) – To mitigate stochasticity in LLM outputs, each feedback and refinement step is sampled multiple times. The sampled outputs are embedded in a semantic space, clustered, and the centroid of the largest cluster is selected as the representative output for that round. This yields a stable trajectory while preserving the typical semantics of the model’s distribution.

-

Semantic pattern encoding – A curated list of N semantic patterns is defined. Each text segment is encoded as a binary vector of length N, where a ‘1’ indicates the presence of a pattern. For a trajectory of T rounds, this yields a T × N binary matrix S.

-

Causal discovery and Invariant Causal Prediction (ICP) – Using the binary matrices and the final performance scores, a causal discovery algorithm first constructs a provisional causal graph. Then a three‑stage ICP pipeline selects candidate causal parents, validates them by maximizing structural log‑likelihood (LL) across all task subsets, and checks that the regression coefficients of each selected pattern are stable (low variance) across subsets. This procedure does not require any ground‑truth causal graph; it relies solely on the invariance principle that true causal mechanisms remain unchanged under distribution shifts.

Experiments

The framework is applied to the Qwen‑3 family (7B and 14B parameters) on two domains: mathematical reasoning (MATH benchmark) and machine translation (WMT). CESR is used to generate consistent trajectories, and the ICP pipeline identifies a minimal set of causal semantic patterns.

Key findings

-

Behavioral hierarchy – Certain patterns exert influence only at specific rounds. “Clarity & Organization” is a direct causal factor at round 2, while “Specificity” becomes decisive only at round 4. This demonstrates that the same semantic behavior does not uniformly affect all stages of self‑reflection.

-

Causation vs. correlation – Out of roughly 30 candidate patterns, only about five survive the ICP verification as genuine causal parents. The rest are either downstream effects of the true parents or spurious correlations arising from shared ancestors in the causal graph. This explains why heuristic prompt‑engineering often fails to generalize.

-

More ≠ better – When multiple positive causal patterns are activated simultaneously, structural likelihood actually drops (p = .013, η²ₚ = .071). The self‑reflection dynamics are thus non‑additive; excessive positive signals can interfere with each other and degrade performance.

-

Out‑of‑distribution validation – The identified causal patterns were intervened upon on novel datasets (fact‑checking and code generation). The interventions yielded statistically significant improvements (Δ ≈ +3 % accuracy, p < .05), confirming that the discovered causal relationships hold under distribution shift.

Implications

ReBeCA provides a principled way to move from “what correlates with success” to “what truly causes success” in self‑reflection. By revealing a hierarchical, time‑dependent influence structure, it suggests that prompt designers should tailor feedback at specific rounds rather than applying a uniform set of cues. Moreover, the non‑additive nature of positive cues cautions against naïvely stacking multiple heuristics.

Limitations and future work

The semantic patterns are manually curated; automated pattern discovery could broaden applicability. ICP assumes linear relationships, so future extensions might incorporate nonlinear causal discovery methods. Experiments are limited to Qwen‑3 and two tasks; testing on other models (e.g., GPT‑4, LLaMA) and more complex interactive settings (multi‑turn dialogue, code debugging) will be essential to assess the generality of the framework.

Conclusion

ReBeCA introduces a robust, causally grounded pipeline for dissecting the self‑reflection dynamics of LLMs. By combining consistency‑enhanced trajectory generation with invariant causal prediction, it isolates a sparse, interpretable set of semantic behaviors that genuinely drive performance, demonstrates their hierarchical and non‑additive nature, and validates their causal impact across unseen domains. This work marks a significant step toward scientifically principled, generalizable improvements in LLM reliability.

Comments & Academic Discussion

Loading comments...

Leave a Comment