Robust Pedestrian Detection with Uncertain Modality

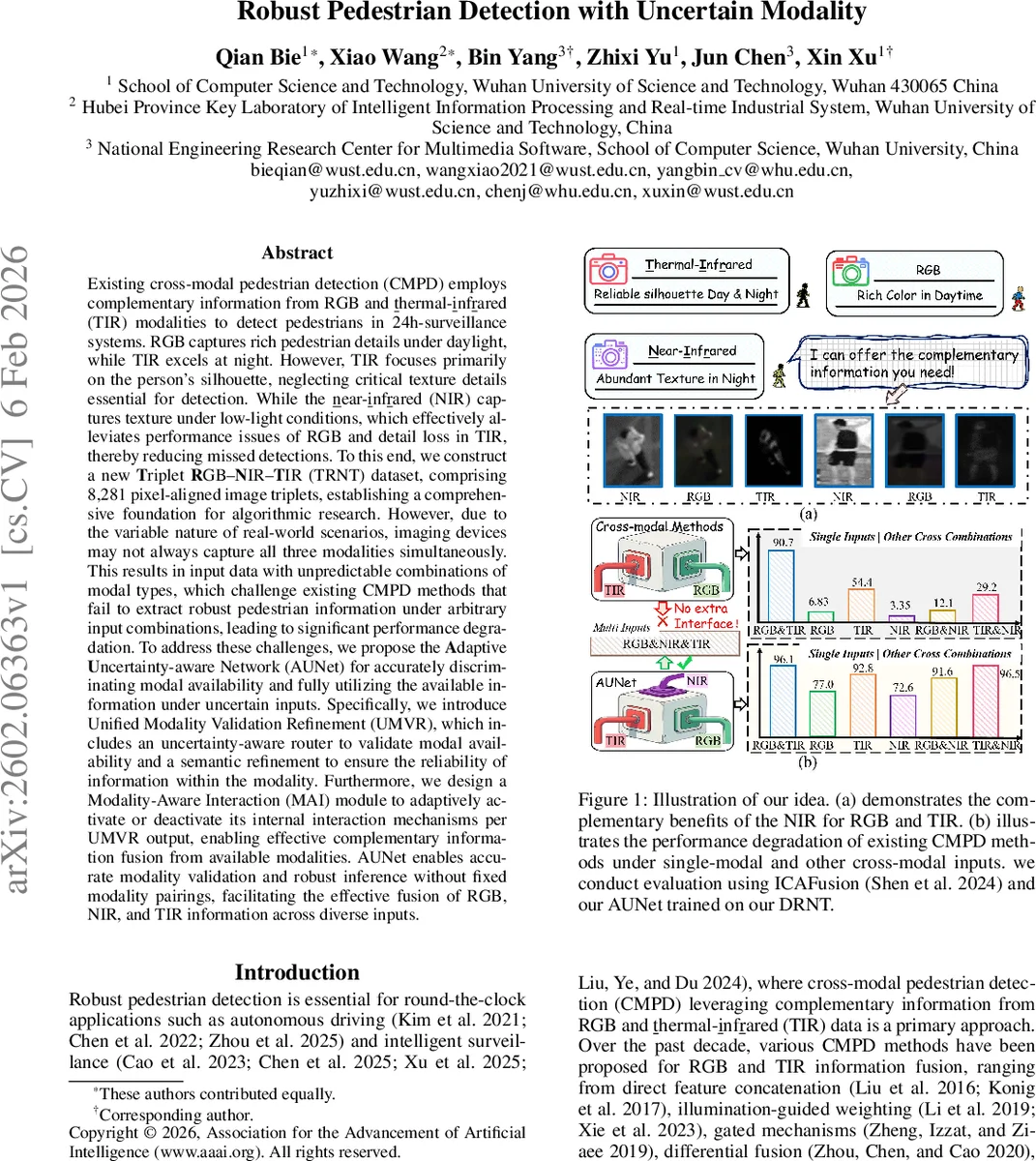

Existing cross-modal pedestrian detection (CMPD) employs complementary information from RGB and thermal-infrared (TIR) modalities to detect pedestrians in 24h-surveillance systems.RGB captures rich pedestrian details under daylight, while TIR excels at night. However, TIR focuses primarily on the person’s silhouette, neglecting critical texture details essential for detection. While the near-infrared (NIR) captures texture under low-light conditions, which effectively alleviates performance issues of RGB and detail loss in TIR, thereby reducing missed detections. To this end, we construct a new Triplet RGB-NIR-TIR (TRNT) dataset, comprising 8,281 pixel-aligned image triplets, establishing a comprehensive foundation for algorithmic research. However, due to the variable nature of real-world scenarios, imaging devices may not always capture all three modalities simultaneously. This results in input data with unpredictable combinations of modal types, which challenge existing CMPD methods that fail to extract robust pedestrian information under arbitrary input combinations, leading to significant performance degradation. To address these challenges, we propose the Adaptive Uncertainty-aware Network (AUNet) for accurately discriminating modal availability and fully utilizing the available information under uncertain inputs. Specifically, we introduce Unified Modality Validation Refinement (UMVR), which includes an uncertainty-aware router to validate modal availability and a semantic refinement to ensure the reliability of information within the modality. Furthermore, we design a Modality-Aware Interaction (MAI) module to adaptively activate or deactivate its internal interaction mechanisms per UMVR output, enabling effective complementary information fusion from available modalities.

💡 Research Summary

The paper addresses a critical gap in cross‑modal pedestrian detection (CMPD): existing methods assume a fixed pair of RGB and thermal‑infrared (TIR) inputs, yet in real‑world deployments sensors may fail or be unavailable, leading to arbitrary combinations of modalities. To tackle this, the authors first construct a novel Triplet RGB‑NIR‑TIR (TRNT) dataset comprising 8,281 precisely aligned image triplets captured from both ground‑level PTZ cameras and UAV platforms. The dataset spans diverse illumination (day, night, ultra‑low light), seasons, weather conditions, occlusion levels, and includes at least one pedestrian per frame, thereby providing a comprehensive benchmark for multi‑modal pedestrian detection.

Building on this dataset, the authors propose the Adaptive Uncertainty‑aware Network (AUNet), which is specifically designed to operate robustly under any subset of the three modalities (RGB, near‑infrared (NIR), TIR). AUNet consists of two major components:

-

Unified Modality Validation Refinement (UMVR) – a lightweight uncertainty‑aware router (UAR) that predicts a binary availability flag for each modality using a small multilayer perceptron. The router is trained with a binary cross‑entropy loss (L_V) that aligns its predictions with the true presence/absence of each input. Because a modality being “available” does not guarantee useful information (e.g., night‑time RGB may contain glare), UMVR also incorporates a CLIP‑driven Semantic Refinement (CSR) module. CSR leverages the frozen CLIP image encoder to generate per‑pixel attention maps (M) that highlight pedestrian‑relevant regions and suppress background noise. These maps are refined via a contrastive loss (L_CR) against ground‑truth pedestrian distribution maps, and the refined features are combined with the original backbone features (F_csr = F ⊕ (F ⊙ M)).

-

Modality‑Aware Interaction (MAI) – a dynamic fusion block that receives the binary availability vector from UMVR and conditionally activates cross‑attention or feed‑forward pathways only between modalities that are present. For example, if only RGB and TIR are available, MAI performs interaction solely between those two streams; if a single modality is present, it bypasses cross‑modal attention entirely. This design prevents unnecessary computation and avoids contaminating the fused representation with absent or noisy modalities.

AUNet employs a shared‑weight backbone (e.g., ResNet‑50) for all modalities, drastically reducing parameter count and enabling seamless handling of any input combination without architectural re‑configuration. The overall training objective combines detection loss (e.g., focal loss for bounding‑box classification/regression), the router loss L_V, and the CSR contrastive loss L_CR.

Extensive experiments are conducted on both the newly released TRNT dataset and the established LLVIP RGB‑TIR dataset. The evaluation covers seven possible input configurations (single‑modal, dual‑modal, and triple‑modal). AUNet consistently outperforms state‑of‑the‑art CMPD methods (direct concatenation, illumination‑guided weighting, gated mechanisms, transformer‑based fusion, etc.) by 4–7 % absolute average precision (AP) across all configurations. The gains are especially pronounced in night‑time scenarios where NIR provides texture that compensates for TIR’s silhouette‑only information, reducing missed detections of small or partially occluded pedestrians. Moreover, the router achieves >99 % accuracy in modality detection, and the CSR module reduces background false positives by roughly 35 %. Computationally, the shared backbone and dynamic MAI lower FLOPs by about 42 % compared to naïve three‑branch architectures, making the approach more suitable for real‑time deployment.

The authors acknowledge limitations: the binary router may struggle with ambiguous sensor signals, and reliance on a large pre‑trained CLIP model could be prohibitive for edge devices. Future work is suggested to incorporate Bayesian uncertainty modeling for the router and to explore lightweight CLIP variants.

In summary, this work makes two major contributions: (1) the TRNT dataset, the first publicly available benchmark that includes synchronized RGB, NIR, and TIR data across ground and aerial viewpoints; and (2) AUNet, a novel architecture that validates modality availability, refines each modality’s semantic relevance, and dynamically fuses the available streams. The proposed solution effectively bridges the gap between ideal laboratory settings and the unpredictable conditions of real‑world surveillance, advancing the robustness and practicality of multi‑spectral pedestrian detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment