SHINE: A Scalable In-Context Hypernetwork for Mapping Context to LoRA in a Single Pass

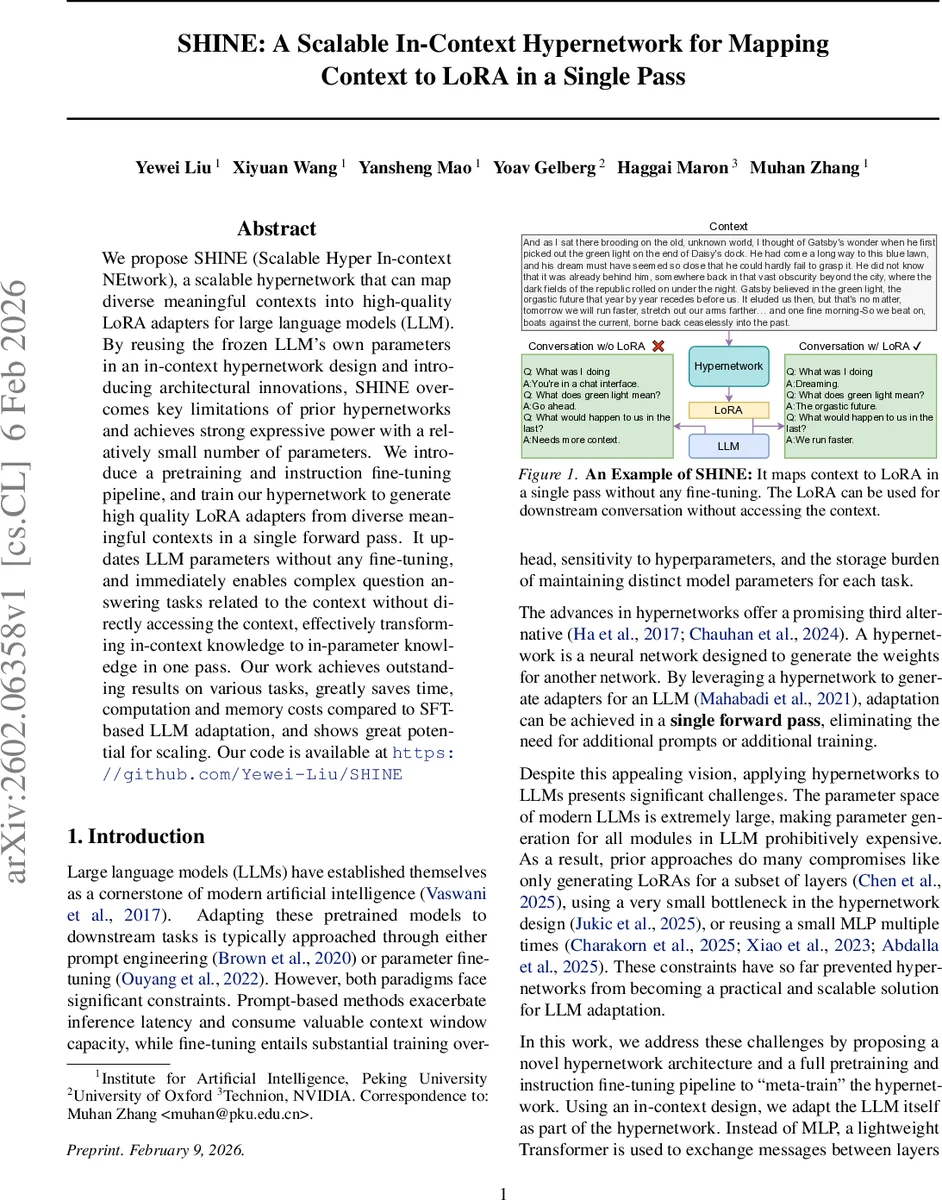

We propose SHINE (Scalable Hyper In-context NEtwork), a scalable hypernetwork that can map diverse meaningful contexts into high-quality LoRA adapters for large language models (LLM). By reusing the frozen LLM’s own parameters in an in-context hypernetwork design and introducing architectural innovations, SHINE overcomes key limitations of prior hypernetworks and achieves strong expressive power with a relatively small number of parameters. We introduce a pretraining and instruction fine-tuning pipeline, and train our hypernetwork to generate high quality LoRA adapters from diverse meaningful contexts in a single forward pass. It updates LLM parameters without any fine-tuning, and immediately enables complex question answering tasks related to the context without directly accessing the context, effectively transforming in-context knowledge to in-parameter knowledge in one pass. Our work achieves outstanding results on various tasks, greatly saves time, computation and memory costs compared to SFT-based LLM adaptation, and shows great potential for scaling. Our code is available at https://github.com/Yewei-Liu/SHINE

💡 Research Summary

The paper introduces SHINE (Scalable Hyper In‑context NEtwork), a novel hypernetwork that can convert arbitrary textual contexts into high‑quality LoRA adapters for large language models (LLMs) in a single forward pass, without any gradient‑based fine‑tuning at test time. The motivation stems from the two dominant adaptation paradigms—prompt engineering and parameter fine‑tuning—both of which suffer from significant drawbacks: prompts waste valuable context window and increase inference latency, while fine‑tuning requires expensive training, large storage for task‑specific weights, and is slow to switch between tasks. Hypernetworks promise a third way by generating task‑specific weights on the fly, but prior work has been limited by the massive parameter space of modern LLMs. Existing approaches either generate LoRA adapters for only a subset of layers, use extremely narrow bottlenecks (small MLPs), or reuse a single MLP repeatedly, which curtails expressivity and scalability.

SHINE addresses these limitations through three key innovations. First, it adopts an in‑context design that leverages the frozen LLM itself as the encoder of the input context. By augmenting the LLM with a set of learnable “Meta LoRA” memory embeddings, the model processes the concatenated sequence of context tokens and memory tokens in one pass, producing hidden states at every layer. The last M hidden states of each layer are extracted as “memory states,” yielding a tensor of shape L × M × H (layers × memory tokens × hidden dimension). The memory length M is chosen so that the total memory capacity (M × H) is at least as large as the total number of LoRA parameters (r · D, where r is the LoRA rank and D the sum of input and output dimensions per layer), thereby eliminating the classic bottleneck between context and parameters.

Second, SHINE replaces the conventional wide MLP hypernetwork with a lightweight “Memory‑to‑Parameter” (M2P) transformer. Directly flattening the memory tensor would incur O((LM)²) self‑attention cost, which is prohibitive. Instead, the M2P transformer alternates attention across the layer axis (column attention) and the token axis (row attention). This sparse attention pattern reduces computational complexity to O(LM² + ML²) and empirically saves up to 90 % of FLOPs compared with full attention. Positional embeddings are added for both layer index and token index to preserve structural information. After a few transformer layers, the output tensor ˆM retains the same shape as the input memory tensor.

Third, the final step projects ˆM into concrete LoRA weights. For each layer i, the corresponding slice ˆM

Comments & Academic Discussion

Loading comments...

Leave a Comment