HiWET: Hierarchical World-Frame End-Effector Tracking for Long-Horizon Humanoid Loco-Manipulation

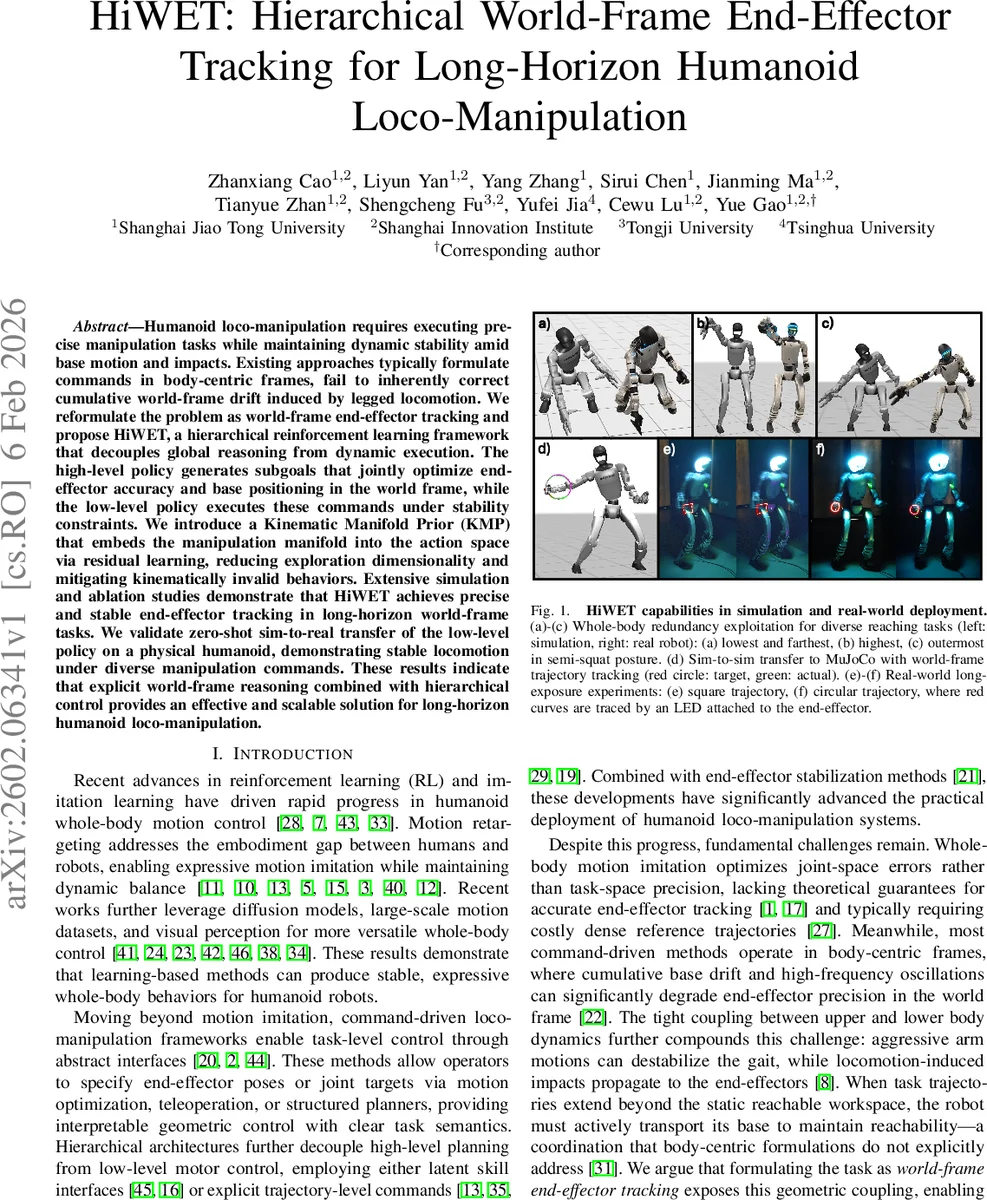

Humanoid loco-manipulation requires executing precise manipulation tasks while maintaining dynamic stability amid base motion and impacts. Existing approaches typically formulate commands in body-centric frames, fail to inherently correct cumulative world-frame drift induced by legged locomotion. We reformulate the problem as world-frame end-effector tracking and propose HiWET, a hierarchical reinforcement learning framework that decouples global reasoning from dynamic execution. The high-level policy generates subgoals that jointly optimize end-effector accuracy and base positioning in the world frame, while the low-level policy executes these commands under stability constraints. We introduce a Kinematic Manifold Prior (KMP) that embeds the manipulation manifold into the action space via residual learning, reducing exploration dimensionality and mitigating kinematically invalid behaviors. Extensive simulation and ablation studies demonstrate that HiWET achieves precise and stable end-effector tracking in long-horizon world-frame tasks. We validate zero-shot sim-to-real transfer of the low-level policy on a physical humanoid, demonstrating stable locomotion under diverse manipulation commands. These results indicate that explicit world-frame reasoning combined with hierarchical control provides an effective and scalable solution for long-horizon humanoid loco-manipulation.

💡 Research Summary

HiWET introduces a hierarchical reinforcement‑learning (HRL) architecture that explicitly tackles the world‑frame end‑effector tracking problem for long‑horizon humanoid loco‑manipulation. The core insight is that formulating tasks in the global (world) coordinate system, rather than the conventional body‑centric frame, exposes the geometric coupling between the robot’s base motion and its manipulators. This enables the controller to actively compensate for base drift and to reshape the reachable workspace by moving the torso or adjusting height.

The system consists of two policies operating at different time scales. The high‑level “command” policy (π_H) runs every K simulation steps (e.g., 20 ms) and receives the current world‑frame end‑effector target together with the robot’s pose. It outputs three structured sub‑goals: (i) desired base velocity v_des^b (including linear x‑y and yaw), (ii) desired torso height h_des, and (iii) desired base‑relative hand poses bT_des^L and bT_des^R. These sub‑goals constitute a global plan (where to go) while preserving the necessary information for the lower‑level controller to keep the robot balanced.

The low‑level “tracking” policy (π_L) runs at a high frequency (≈100 Hz) and translates the sub‑goals into joint‑space targets. Crucially, the upper‑body joints are not learned from scratch; instead a pretrained Kinematic Manifold Prior (KMP) provides a kinematically consistent reference configuration ˆq_up for the arms and waist given the desired hand poses and a scalar waist‑regularization weight α. The policy learns only a residual Δq_up that is added to ˆq_up, dramatically reducing the exploration dimensionality and guaranteeing that the upper body stays on the valid manipulation manifold. The lower‑body joints are commanded directly (absolute joint targets) to retain full authority over gait generation and base propulsion.

To cope with partial observability, HiWET incorporates a History Encoder that processes a short buffer of recent (state, command) tuples with a convolutional network, producing a latent temporal embedding e_t. A State Estimator network predicts privileged quantities (base linear velocity and exact hand poses) that are not directly measurable on the robot; these estimates are fed to the critic to lower variance in value estimation. The actor receives a concatenation of raw proprioceptive observations, the high‑level command, the privileged estimates, the history embedding, and the KMP reference.

Training efficiency is further boosted by an importance‑sampled command dataset. Random Cartesian hand targets often lie near workspace boundaries, yielding high inverse‑kinematics error or low manipulability. The authors curate a dataset by discarding commands with large IK reconstruction error and weighting the remaining samples by a manipulability index. During learning, commands are drawn from a mixture of uniform sampling and this curated prior (controlled by a mixing coefficient β), ensuring exposure to both realistic reachable poses and edge‑case scenarios.

The entire hierarchy is optimized with Proximal Policy Optimization (PPO). Rewards balance world‑frame end‑effector tracking error, base‑position error, and dynamic stability terms (e.g., Center‑of‑Mass height, foot contact forces). An auxiliary estimation loss trains the State Estimator, while the KMP remains frozen throughout policy learning.

Experimental evaluation is extensive. In simulation (MuJoCo humanoid), HiWET achieves an average world‑frame end‑effector tracking error of 12.4 mm over long trajectories, including square and circular paths that require the robot to walk several meters while continuously reaching. Ablation studies show that removing the KMP (learning absolute upper‑body joints) or the importance‑sampled command distribution degrades performance by a factor of two to three and leads to frequent balance failures. The hierarchical decomposition itself (high‑level vs. low‑level) is essential; a monolithic policy cannot simultaneously satisfy long‑horizon planning and high‑frequency stability constraints.

A zero‑shot sim‑to‑real transfer is demonstrated by deploying only the low‑level tracking policy on a physical humanoid platform (Shanghai Jiao‑Tong University robot). Without any fine‑tuning, the robot reproduces the simulated trajectories, maintains balance under diverse torso heights and arm configurations, and tracks a LED attached to the hand with comparable accuracy. This validates that the learned residual policy, grounded in the KMP’s kinematic prior, generalizes across the reality gap.

In summary, HiWET contributes four major advances: (1) explicit world‑frame reasoning that eliminates cumulative base drift, (2) a clean separation of global planning and dynamic execution via hierarchical RL, (3) a Kinematic Manifold Prior that embeds kinematic feasibility into the action space and enables residual learning, and (4) a training pipeline that combines importance‑sampled task commands, privileged state estimation, and PPO‑based optimization to achieve robust, transferable whole‑body control. The work opens avenues for more complex humanoid tasks such as multi‑robot collaboration, perception‑driven manipulation on uneven terrain, and integration of tactile/visual feedback for contact‑rich interactions.

Comments & Academic Discussion

Loading comments...

Leave a Comment