Taming SAM3 in the Wild: A Concept Bank for Open-Vocabulary Segmentation

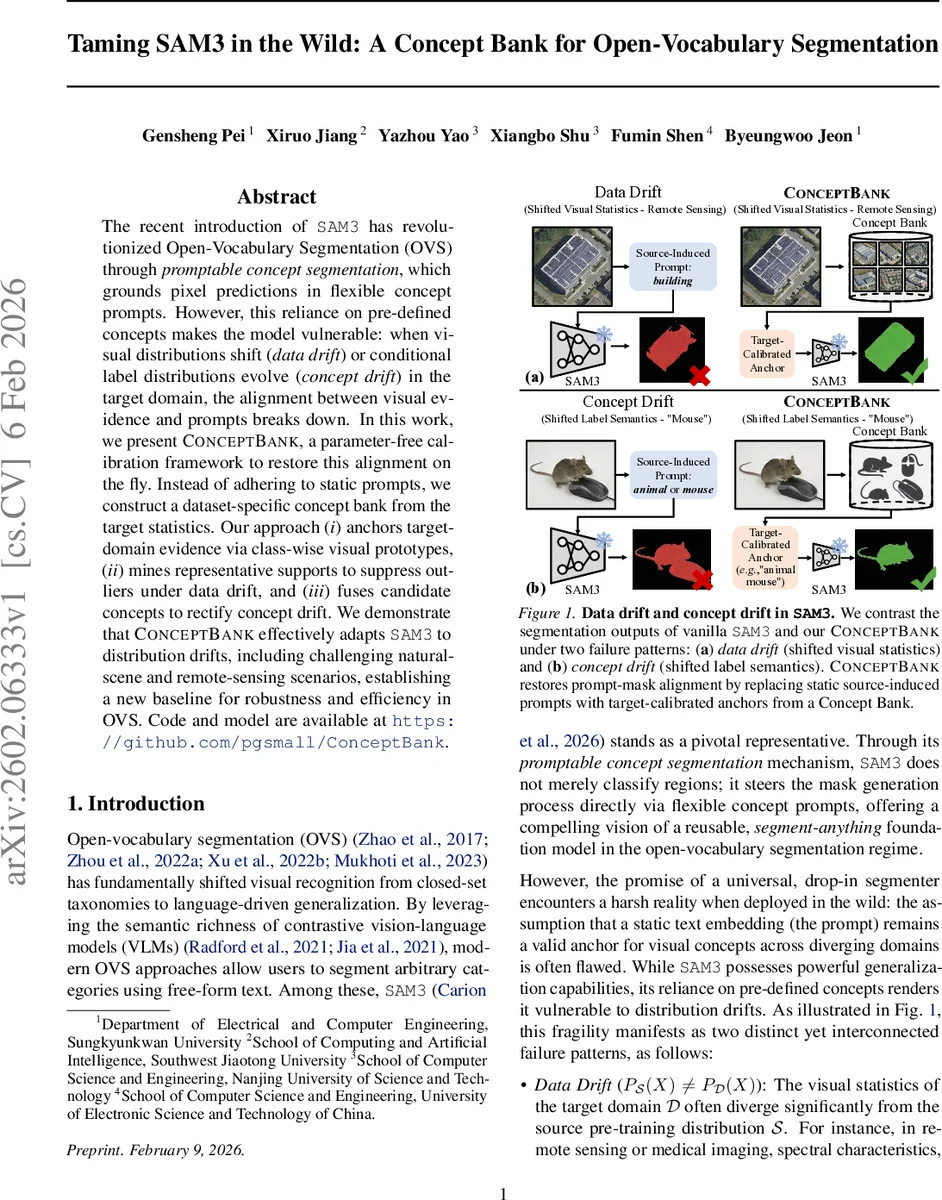

The recent introduction of \texttt{SAM3} has revolutionized Open-Vocabulary Segmentation (OVS) through \textit{promptable concept segmentation}, which grounds pixel predictions in flexible concept prompts. However, this reliance on pre-defined concepts makes the model vulnerable: when visual distributions shift (\textit{data drift}) or conditional label distributions evolve (\textit{concept drift}) in the target domain, the alignment between visual evidence and prompts breaks down. In this work, we present \textsc{ConceptBank}, a parameter-free calibration framework to restore this alignment on the fly. Instead of adhering to static prompts, we construct a dataset-specific concept bank from the target statistics. Our approach (\textit{i}) anchors target-domain evidence via class-wise visual prototypes, (\textit{ii}) mines representative supports to suppress outliers under data drift, and (\textit{iii}) fuses candidate concepts to rectify concept drift. We demonstrate that \textsc{ConceptBank} effectively adapts \texttt{SAM3} to distribution drifts, including challenging natural-scene and remote-sensing scenarios, establishing a new baseline for robustness and efficiency in OVS. Code and model are available at https://github.com/pgsmall/ConceptBank.

💡 Research Summary

The paper addresses a critical weakness of the recently introduced SAM 3 model for open‑vocabulary segmentation (OVS). While SAM 3 can generate masks conditioned on arbitrary textual prompts, its reliance on static prompt embeddings makes it fragile when deployed in new domains where either the visual appearance (data drift) or the semantic meaning of class names (concept drift) differs from the pre‑training distribution. Existing remedies—fine‑tuning, adapter layers, or manual prompt engineering—either require additional training, introduce domain bias, or involve costly trial‑and‑error.

To solve this, the authors propose ConceptBank, a parameter‑free calibration framework that builds a domain‑specific “concept bank” from the target dataset’s own statistics, without modifying SAM 3’s frozen visual or text encoders. The method consists of three stages.

-

Prototype Estimation – For each class, mask‑pooled embeddings are extracted from all annotated crops in a support set, normalized, and averaged to obtain a class‑wise visual prototype (p_c). This prototype captures the centroid of the target visual distribution for that class.

-

Representative Support Mining – Because the raw crop set may contain outliers (rare appearances, occlusions, annotation noise), the method selects the top‑K crops whose embeddings have the highest cosine similarity to the prototype. This “representative support set” (R_c) trims the long‑tail and yields a robust visual core.

-

Prototype‑Consistent Concept Fusion – The original prompt embedding (e_{S_c}) (produced by SAM 3’s text encoder) is combined with a language‑model‑expanded textual description and the visual prototype (p_c). A weighted fusion (e.g., softmax of (\alpha e_{S_c} + \beta p_c)) produces a calibrated query embedding (e^_c). Collecting all ((c, e^_c)) forms the ConceptBank (\mathcal{B}).

During inference, the pre‑computed calibrated embeddings are directly supplied to SAM 3’s mask predictor, bypassing the text encoder entirely. This yields a plug‑and‑play module that restores cross‑modal alignment under both data and concept drift with virtually no extra computation.

The authors evaluate ConceptBank on natural‑scene benchmarks (COCO‑Stuff, ADE20K) and remote‑sensing datasets (e.g., DFC15, SpaceNet) where distribution shifts are pronounced. Compared with vanilla SAM 3, fine‑tuned SAM 3, prompt‑learning baselines, and recent domain‑adaptation techniques, ConceptBank consistently improves mean IoU and panoptic quality by 4–7 percentage points, and up to 10 % in severe remote‑sensing drift scenarios. Importantly, it achieves these gains without any gradient updates, making it far more efficient than fine‑tuning approaches.

Key contributions include: (1) a clear drift‑centric formulation separating data and concept drift; (2) a fully data‑driven, parameter‑free calibration pipeline; (3) a three‑stage construction process that jointly mitigates visual outliers and semantic mismatch; and (4) extensive empirical evidence of robustness across diverse domains.

Limitations are acknowledged: the method depends on a sufficiently labeled support set; the LLM‑based textual expansion may introduce bias in low‑resource domains; and current handling of multi‑label or heavily overlapping objects is simplistic. Future work could explore domain‑specific LLMs, hierarchical prototypes, and extensions to multi‑object scenarios.

Overall, ConceptBank demonstrates that “fix the model, leverage the data” can be an effective strategy for adapting foundation vision‑language models like SAM 3 to real‑world, distribution‑shifted environments, opening a practical path toward truly universal, prompt‑driven segmentation.

Comments & Academic Discussion

Loading comments...

Leave a Comment