Halt the Hallucination: Decoupling Signal and Semantic OOD Detection Based on Cascaded Early Rejection

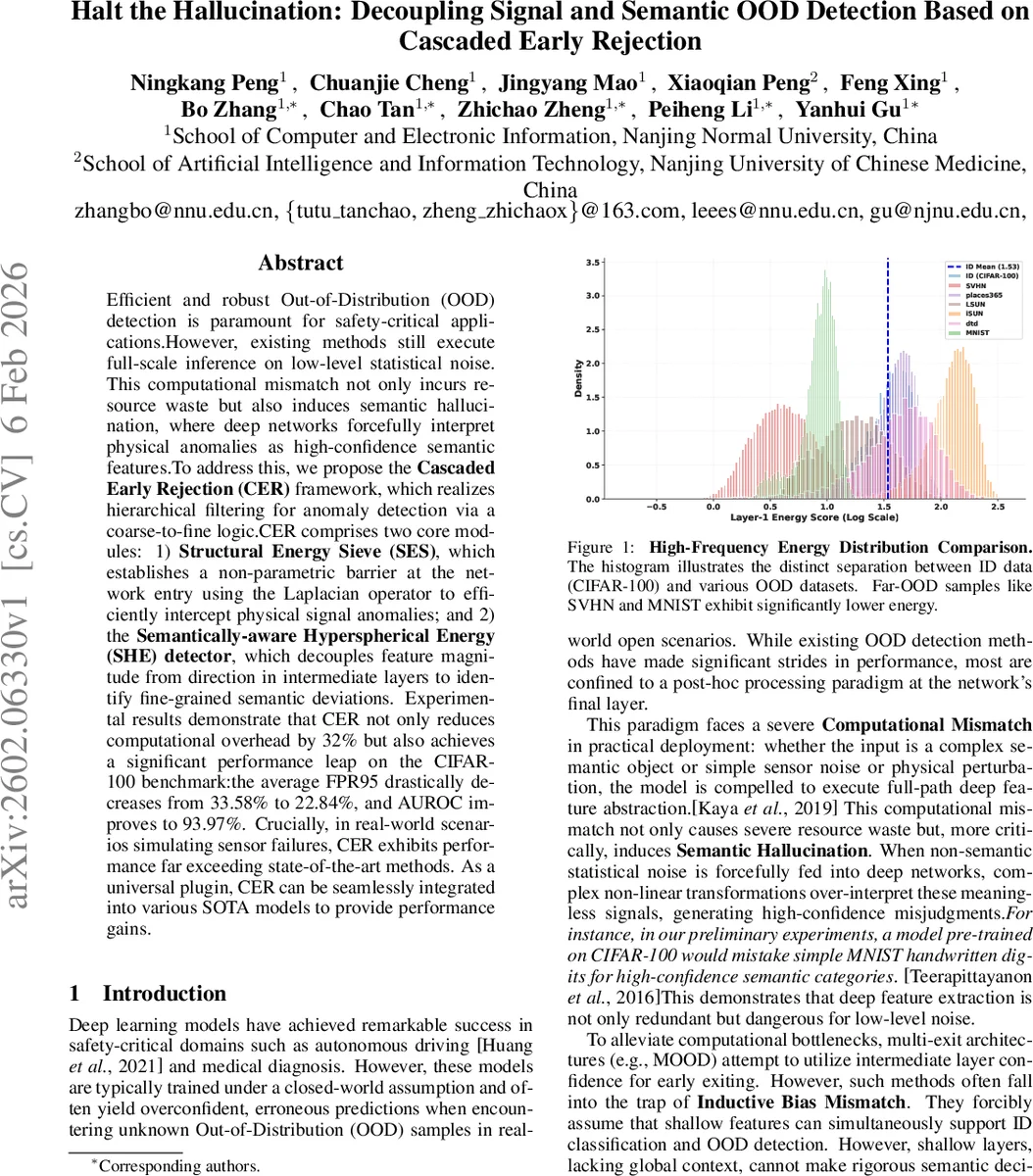

Efficient and robust Out-of-Distribution (OOD) detection is paramount for safety-critical applications. However, existing methods still execute full-scale inference on low-level statistical noise. This computational mismatch not only wastes resources but also induces semantic hallucination, where deep networks forcefully interpret physical anomalies as high-confidence semantic features. To address this, we propose the Cascaded Early Rejection (CER) framework, which realizes hierarchical filtering for anomaly detection via a coarse-to-fine logic. CER comprises two core modules: 1) the Structural Energy Sieve (SES), which establishes a non-parametric barrier at the network entry using the Laplacian operator to efficiently intercept physical signal anomalies; and 2) the Semantically-aware Hyperspherical Energy (SHE) detector, which decouples feature magnitude from direction in intermediate layers to identify fine-grained semantic deviations. Experimental results demonstrate that CER not only reduces computational overhead by 32% but also achieves a significant performance leap on the CIFAR-100 benchmark: the average FPR95 decreases from 33.58% to 22.84%, and AUROC improves to 93.97%. Crucially, in real-world scenarios simulating sensor failures, CER exhibits performance far exceeding state-of-the-art methods. As a universal plugin, CER can be seamlessly integrated into various SOTA models to provide performance gains.

💡 Research Summary

The paper tackles two critical shortcomings of current out‑of‑distribution (OOD) detection methods: (1) a computational mismatch where every input, even pure sensor noise, forces a full‑depth forward pass, wasting resources, and (2) semantic hallucination, where deep networks over‑interpret low‑level anomalies as high‑confidence semantic classes. To resolve these issues, the authors propose the Cascaded Early Rejection (CER) framework, which separates early physical‑signal filtering from later semantic‑level discrimination through a two‑stage cascade.

The first stage, Structural Energy Sieve (SES), sits at the network entry and uses a fixed Laplacian kernel to approximate high‑frequency spectral energy. By convolving the first‑layer feature map with the Laplacian, taking absolute values, and then applying an Adaptive Top‑K Spectral Pooling (averaging only the K most energetic channels), SES produces a scalar “structural energy” score. This score captures the sparsity of high‑frequency anomalies typical of OOD noise while suppressing background texture. A derived metric, Spectral Contrast Gain (G), quantifies how much the strongest channel exceeds the average, providing a robust threshold for early rejection.
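
The SES pipeline described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the 4-neighbour Laplacian kernel, the fixed `k`, the per-channel mean as the energy measure, and the ratio form of the gain are all assumptions made for illustration, since the summary does not specify them.

```python
import numpy as np

# 3x3 discrete Laplacian (4-neighbour form). Assumed kernel; the paper's exact
# choice is not stated in this summary.
LAPLACIAN = np.array([[0, 1, 0],
                      [1, -4, 1],
                      [0, 1, 0]], dtype=np.float64)


def laplacian_response(fmap):
    """Apply the Laplacian to each channel of a (C, H, W) map (valid region only)."""
    c, h, w = fmap.shape
    out = np.zeros((c, h - 2, w - 2))
    for dy in range(3):
        for dx in range(3):
            out += LAPLACIAN[dy, dx] * fmap[:, dy:dy + h - 2, dx:dx + w - 2]
    return out


def structural_energy(fmap, k=4):
    """Return (score, gain) for a (C, H, W) feature map.

    score: mean energy of the k most energetic channels (top-K pooling).
    gain:  strongest channel energy divided by the average channel energy
           (hypothetical form of the Spectral Contrast Gain).
    """
    resp = np.abs(laplacian_response(fmap))      # absolute high-frequency response
    channel_energy = resp.mean(axis=(1, 2))      # one energy value per channel
    top_k = np.sort(channel_energy)[-k:]         # k largest channel energies
    score = float(top_k.mean())
    gain = float(channel_energy.max() / (channel_energy.mean() + 1e-12))
    return score, gain
```

A perfectly flat feature map yields a score of zero because the kernel sums to zero, while noise-like inputs yield a positive score; an early-rejection gate would compare the score or gain against a threshold calibrated on in-distribution data.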

If an input passes SES, it proceeds to the second stage, Semantically‑aware Hyperspherical Energy (SHE). Conventional energy‑based OOD scores are dominated by the L2 norm of the feature vector, which can be inflated by high‑frequency noise, leading to false‑negative OOD detections. SHE addresses this by constructing class prototypes μ_k from weighted averages of intermediate‑layer features and projecting both the test feature z and the prototypes onto a unit hypersphere. The resulting score is E(z)=−log∑_j exp(κ_j·(zᵀμ_j)/‖z‖), which completely decouples magnitude from direction. Consequently, decisions depend only on semantic alignment (cosine similarity) with class prototypes, eliminating magnitude‑driven hallucination.
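
To make the magnitude-direction decoupling concrete, here is a small sketch of the score E(z) as written above. The function name `she_energy`, the explicit prototype normalization, and the numerically stable log-sum-exp are illustrative assumptions; how the prototypes μ_k and concentrations κ_j are estimated is not covered here.

```python
import numpy as np


def she_energy(z, prototypes, kappa):
    """Hyperspherical energy E(z) = -log sum_j exp(kappa_j * cos(z, mu_j)).

    z:          (D,) feature vector from an intermediate layer
    prototypes: (K, D) class prototypes (normalized here to unit length)
    kappa:      (K,) per-class concentration parameters
    """
    z_hat = z / np.linalg.norm(z)                                   # drop magnitude
    mu_hat = prototypes / np.linalg.norm(prototypes, axis=1, keepdims=True)
    logits = kappa * (mu_hat @ z_hat)                               # kappa_j * cosine
    m = logits.max()                                                # stable log-sum-exp
    return float(-(m + np.log(np.exp(logits - m).sum())))
```

Because `z` is normalized before the dot product, rescaling the feature by any positive factor leaves the score unchanged. Under this sign convention, a feature aligned with one prototype receives a lower energy than one spread evenly across all prototypes.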

CER formalizes the cascade as K sequential rejection modules M₁…M_K, each emitting a binary gate G_i(z_i) based on a stage‑specific scoring function S_i and an acceptance region A_i. The final classifier f_K is invoked only when all gates output 1, allowing the system to stop inference early for obvious OOD samples without any retraining.
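
The gating logic can be sketched in a few lines of Python. The names `cascaded_inference`, `transform`, `score`, and `accept` are hypothetical; each stage is modelled as a triple that computes the representation z_i, scores it with S_i, and tests membership in the acceptance region A_i.

```python
from typing import Any, Callable, List, Optional, Tuple

# (transform, score, accept): compute z_i, evaluate S_i(z_i), test S_i(z_i) in A_i.
Stage = Tuple[Callable[[Any], Any], Callable[[Any], float], Callable[[float], bool]]


def cascaded_inference(
    x: Any, stages: List[Stage], classifier: Callable[[Any], Any]
) -> Tuple[Optional[Any], Optional[int]]:
    """Run the stages in order and stop at the first gate that outputs 0.

    Returns (prediction, None) if every gate accepts, or (None, i) if the
    input is rejected as OOD at stage i (1-indexed).
    """
    z = x
    for i, (transform, score, accept) in enumerate(stages, start=1):
        z = transform(z)
        if not accept(score(z)):
            return None, i          # early rejection: later stages never run
    return classifier(z), None      # all gates passed: invoke the final classifier


# Toy usage with two scalar stages standing in for SES and SHE.
toy_stages: List[Stage] = [
    (lambda x: x, lambda z: abs(z), lambda s: s < 10),   # cheap entry filter
    (lambda z: z * 2, lambda z: z, lambda s: s >= 0),    # later, costlier check
]
toy_classifier = lambda z: "class_%d" % z
```

Since each gate only reads features and compares a score to a region, the wrapper leaves the backbone's weights untouched, which is consistent with the claim that no retraining is needed.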

Experiments use a ResNet‑34 backbone trained on CIFAR‑100 (ID) and evaluate on standard OOD benchmarks (SVHN, MNIST, Places365, LSUN, iSUN, Textures). CER achieves an FPR95 of 22.84% (vs. 33.58% for the previous best PALM) and an AUROC of 93.97%, while reducing overall FLOPs by ~32% thanks to the cheap Laplacian‑based early filter. In simulated sensor‑failure scenarios, CER’s performance gap widens, demonstrating robustness to pure physical anomalies.
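
For readers unfamiliar with the headline metric, FPR95 is the fraction of OOD samples wrongly accepted when the threshold is set so that 95% of in-distribution samples are accepted. The sketch below assumes higher scores mean "more in-distribution"; for an energy where lower means in-distribution, such as E(z) above, negate the scores first. The function name is illustrative.

```python
import numpy as np


def fpr_at_95_tpr(id_scores, ood_scores):
    """False positive rate on OOD data at 95% true positive rate on ID data."""
    id_scores = np.asarray(id_scores, dtype=np.float64)
    ood_scores = np.asarray(ood_scores, dtype=np.float64)
    threshold = np.percentile(id_scores, 5)        # 95% of ID scores lie at or above this
    return float(np.mean(ood_scores >= threshold)) # OOD samples mistaken for ID
```

On this scale, the reported drop from 33.58% to 22.84% is a 10.74-point absolute reduction, roughly 32% in relative terms.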

The authors acknowledge limitations: the Laplacian kernel is hand‑crafted and may not be optimal for all domains, and SHE requires storing class prototypes, incurring modest memory overhead. Future work is suggested on learning adaptive high‑frequency filters and updating prototypes online to improve cross‑domain adaptability. Overall, CER offers a principled, plug‑and‑play solution that aligns computational effort with the intrinsic difficulty of each input, mitigating both wasteful processing and semantic hallucination in OOD detection.
